Fangxuan Sun

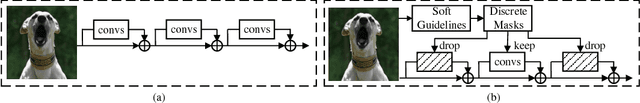

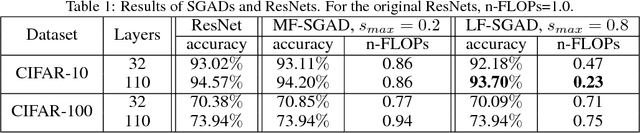

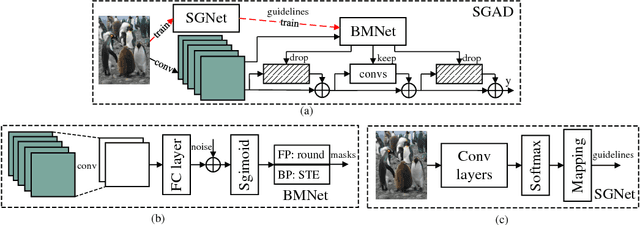

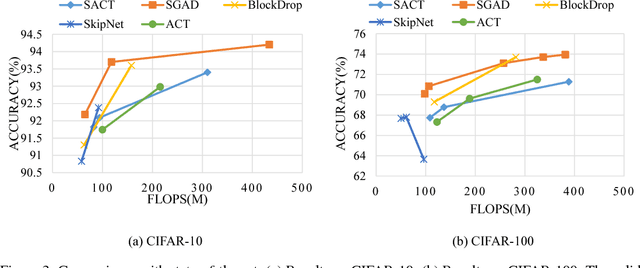

SGAD: Soft-Guided Adaptively-Dropped Neural Network

Jul 04, 2018

Abstract: Deep neural networks (DNNs) have been shown to contain substantial redundancy, and many efforts have been made to compress them. However, existing model compression methods treat all input samples equally, ignoring the fact that some samples are harder to classify correctly than others. To address this problem, this work explores DNNs with an adaptive dropping mechanism. To inform the network how difficult an input sample is to classify, a guideline that carries information about the input samples is introduced to improve performance. Based on this guideline and the adaptive dropping mechanism, a soft-guided adaptively-dropped (SGAD) neural network is proposed. Compared with a 32-layer residual neural network, SGAD reduces FLOPs by 77% with less than a 1% drop in accuracy on CIFAR-10.
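The abstract does not spell out the dropping rule, so the following is only a toy sketch of the general idea of per-sample conditional computation in a residual network: easy inputs run fewer residual blocks, hard inputs run more. The `difficulty` score, the `keep_mask` rule, and the scalar "blocks" are all hypothetical stand-ins, not the SGAD mechanism itself.

```python
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Block = Callable[[Vector], Vector]


def keep_mask(difficulty: float, num_blocks: int) -> List[bool]:
    """Hypothetical rule: a difficulty in [0, 1] decides how many blocks run.

    At least one block is always kept so the network never degenerates
    into a pure identity mapping.
    """
    n_keep = max(1, round(difficulty * num_blocks))
    return [i < n_keep for i in range(num_blocks)]


def residual_forward(
    x: Vector, blocks: Sequence[Block], keep: Sequence[bool]
) -> Tuple[Vector, int]:
    """Apply x <- x + F(x) for kept blocks; dropped blocks act as identity."""
    executed = 0
    for block, kept in zip(blocks, keep):
        if kept:
            x = [xi + fi for xi, fi in zip(x, block(x))]
            executed += 1
    return x, executed


if __name__ == "__main__":
    # Eight identical toy blocks; a real network would use conv layers.
    blocks = [lambda v: [0.1 * vi for vi in v]] * 8
    for name, difficulty in [("easy", 0.25), ("hard", 1.0)]:
        out, executed = residual_forward([1.0], blocks, keep_mask(difficulty, 8))
        print(name, "blocks executed:", executed, "of", len(blocks))
```

Because a dropped block costs nothing at inference time, the fraction of executed blocks is a rough proxy for the per-sample compute saved. How the difficulty signal is produced and trained is the part the paper contributes and is not modelled here.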

Intra-layer Nonuniform Quantization for Deep Convolutional Neural Network

Aug 06, 2016

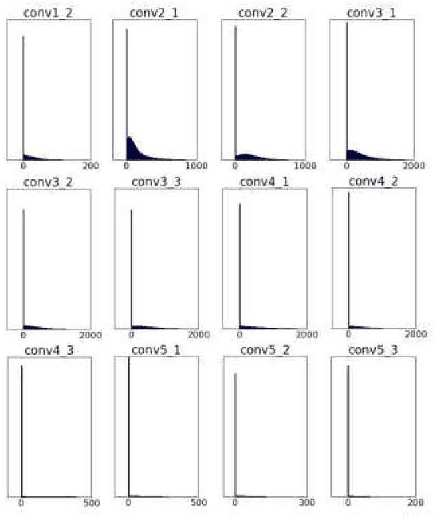

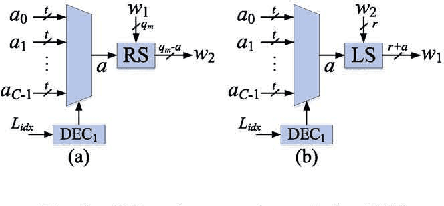

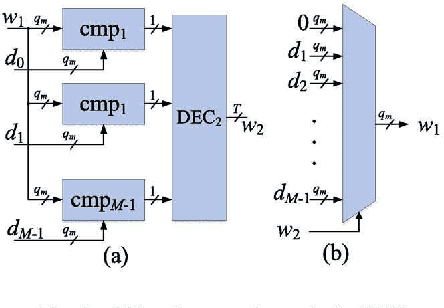

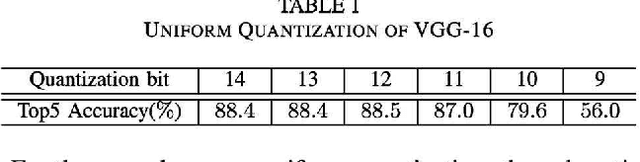

Abstract: Deep convolutional neural networks (DCNNs) have achieved remarkable performance on object detection and speech recognition in recent years. However, this performance comes at the cost of high computational complexity and large memory requirements. In this paper, an equal distance nonuniform quantization (ENQ) scheme and a K-means clustering nonuniform quantization (KNQ) scheme are proposed to reduce the required memory storage when low-complexity hardware or software implementations are considered. For VGG-16 and AlexNet, the proposed nonuniform quantization schemes reduce the required memory storage by approximately 50% while achieving almost the same or even better classification accuracy compared to the state-of-the-art quantization method. Compared to the ENQ scheme, the proposed KNQ scheme provides a better tradeoff when higher accuracy is required.
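To illustrate why a clustered codebook can beat evenly spaced levels at the same bit width, here is a minimal sketch that quantizes a list of weights two ways: with a plain uniform grid over the weight range, and with a 1-D k-means (Lloyd's algorithm) codebook. This is a generic illustration, not the paper's ENQ or KNQ procedure; the function names and the synthetic Gaussian weights are assumptions made for the example.

```python
import random
from typing import List, Tuple


def uniform_levels(weights: List[float], bits: int) -> List[float]:
    """Evenly spaced levels spanning [min(weights), max(weights)]."""
    k = 2 ** bits
    lo, hi = min(weights), max(weights)
    if k == 1 or hi == lo:
        return [lo]
    step = (hi - lo) / (k - 1)
    return [lo + i * step for i in range(k)]


def nearest(levels: List[float], w: float) -> int:
    """Index of the level closest to w."""
    return min(range(len(levels)), key=lambda i: abs(levels[i] - w))


def kmeans_levels(weights: List[float], bits: int, iters: int = 20) -> List[float]:
    """1-D Lloyd's k-means, initialised from the uniform grid."""
    levels = uniform_levels(weights, bits)
    for _ in range(iters):
        buckets: List[List[float]] = [[] for _ in levels]
        for w in weights:
            buckets[nearest(levels, w)].append(w)
        # An empty cluster keeps its previous centre.
        levels = [sum(b) / len(b) if b else c for b, c in zip(buckets, levels)]
    return levels


def quantize(weights: List[float], levels: List[float]) -> Tuple[List[int], List[float]]:
    """Return the stored codes and the reconstructed weights."""
    codes = [nearest(levels, w) for w in weights]
    return codes, [levels[c] for c in codes]


def mse(a: List[float], b: List[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


if __name__ == "__main__":
    random.seed(0)
    weights = [random.gauss(0.0, 0.05) for _ in range(2000)]  # synthetic layer
    for name, fn in [("uniform", uniform_levels), ("k-means", kmeans_levels)]:
        _, approx = quantize(weights, fn(weights, 3))
        print(name, "3-bit reconstruction MSE:", mse(weights, approx))
```

Each weight is stored as a `bits`-wide code plus a small shared codebook, which is where the memory saving comes from. Since Lloyd's algorithm never increases the squared error from its starting point, initialising from the uniform grid means the k-means codebook reconstructs the weights at least as well as the grid at the same bit width.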
