Fabrizio Pittorino

HERCULES: Hardware-Efficient, Robust, Continual Learning Neural Architecture Search

May 03, 2026

Abstract: Neural Architecture Search (NAS) has emerged as a powerful framework for automatically discovering neural architectures that balance accuracy and efficiency. However, as AI transitions from static benchmarks to real-world deployment, the traditional focus on hardware-aware efficiency is no longer sufficient. We observe that modern NAS methods, especially those that target edge AI, are evolving to address a triple objective: Efficiency, Robustness, and Continual Learning. Efficiency ensures feasibility in resource-constrained environments, robustness guarantees reliability under environmental variability, and continual learning enables adaptation to sequential tasks without catastrophic forgetting. We propose a taxonomy of NAS approaches through this triple lens, distinguishing between methods targeting resource optimization, environmental resilience, and architectural plasticity. This unified perspective reveals that these axes, though often studied in isolation, are mutually reinforcing. Building on this taxonomy, we map the current landscape of these NAS methods into a new framework called Hardware-Efficient, Robust, and ContinUal LEarning Search (HERCULES). We define the desiderata, the twelve labours of HERCULES, addressing the non-trivial challenge of balancing adequate search-space exploration against the immense computational cost of a multi-objective NAS that accounts for these crucial objectives of current AI systems. By identifying critical gaps in existing research, this survey outlines a roadmap toward integrated algorithmic, architectural, and hardware-software co-design for truly deployable, lifelong-learning AI systems.

Position Paper: From Edge AI to Adaptive Edge AI

Mar 31, 2026

Abstract: Edge AI is often framed as model compression and deployment under tight constraints. We argue a stronger operational thesis: Edge AI in realistic deployments is necessarily adaptive. In long-horizon operation, a fixed (non-adaptive) configuration faces a fundamental failure mode: as data and operating conditions evolve over time, it must either (i) violate time-varying budgets (latency/energy/thermal/connectivity/privacy) or (ii) lose predictive reliability (accuracy and, critically, calibration), with risk concentrating in transient regimes and rare time intervals rather than in average performance. If a deployed system cannot reconfigure its computation - and, when required, its model state - under evolving conditions and constraints, it reduces to static embedded inference and cannot provide sustained utility. This position paper introduces a minimal Agent-System-Environment (ASE) lens that makes adaptivity precise at the edge by specifying (i) what changes, (ii) what is observed, (iii) what can be reconfigured, and (iv) which constraints must remain satisfied over time. Building on this framing, we formulate ten research challenges for the next decade, spanning theoretical guarantees for evolving systems, dynamic architectures and hybrid transitions between data-driven and model-based components, fault/anomaly-driven targeted updates, System-1/System-2 decompositions (anytime intelligence), modularity, validation under scarce labels, and evaluation protocols that quantify lifecycle efficiency and recovery/stability under drift and interventions.

Active In-Context Learning for Tabular Foundation Models

Mar 28, 2026

Abstract: Active learning (AL) reduces labeling cost by querying informative samples, but in tabular settings its cold-start gains are often limited because uncertainty estimates are unreliable when models are trained on very few labels. Tabular foundation models such as TabPFN provide calibrated probabilistic predictions via in-context learning (ICL), i.e., without task-specific weight updates, enabling an AL regime in which the labeled context - rather than parameters - is iteratively optimized. We formalize Tabular Active In-Context Learning (Tab-AICL) and instantiate it with four acquisition rules: uncertainty (TabPFN-Margin), diversity (TabPFN-Coreset), an uncertainty-diversity hybrid (TabPFN-Hybrid), and a scalable two-stage method (TabPFN-Proxy-Hybrid) that shortlists candidates using a lightweight linear proxy before TabPFN-based selection. Across 20 classification benchmarks, Tab-AICL improves cold-start sample efficiency over retrained gradient-boosting baselines (CatBoost-Margin and XGBoost-Margin), measured by normalized AULC up to 100 labeled samples.
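As a rough illustration of the uncertainty-based rule named in the abstract above, margin sampling queries the unlabeled sample whose two most likely classes are closest in predicted probability. The sketch below is a generic version of that idea, not the paper's implementation: the function names and the probability values are hypothetical, and in practice the probabilities would come from the model's in-context predictions.

```python
from typing import Sequence


def margin(probs: Sequence[float]) -> float:
    """Gap between the two largest class probabilities (small gap = uncertain).

    Assumes at least two classes.
    """
    top_two = sorted(probs, reverse=True)[:2]
    return top_two[0] - top_two[1]


def select_query(pool_probs: Sequence[Sequence[float]]) -> int:
    """Return the index of the pool sample with the smallest margin."""
    return min(range(len(pool_probs)), key=lambda i: margin(pool_probs[i]))


# Hypothetical predicted class probabilities for three unlabeled samples.
pool_probs = [
    [0.90, 0.05, 0.05],  # confident prediction, large margin
    [0.45, 0.40, 0.15],  # two classes nearly tied, small margin
    [0.60, 0.30, 0.10],
]
query_index = select_query(pool_probs)
```

In an in-context setting, the selected sample would be labeled and appended to the context rather than used to update model weights.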

SQUAD: Scalable Quorum Adaptive Decisions via ensemble of early exit neural networks

Jan 30, 2026

Abstract: Early-exit neural networks have become popular for reducing inference latency by allowing intermediate predictions when sufficient confidence is achieved. However, standard approaches typically rely on single-model confidence thresholds, which are frequently unreliable due to inherent calibration issues. To address this, we introduce SQUAD (Scalable Quorum Adaptive Decisions), the first inference scheme that integrates early-exit mechanisms with distributed ensemble learning, improving uncertainty estimation while reducing the inference time. Unlike traditional methods that depend on individual confidence scores, SQUAD employs a quorum-based stopping criterion on early-exit learners: it collects intermediate predictions incrementally, in order of computational complexity, until a consensus is reached, and halts the computation at that exit if the consensus is statistically significant. To maximize the efficacy of this voting mechanism, we also introduce QUEST (Quorum Search Technique), a Neural Architecture Search method to select early-exit learners with optimized hierarchical diversity, ensuring learners are complementary at every intermediate layer. This consensus-driven approach yields statistically robust early exits, improving the test accuracy by up to 5.95% compared to state-of-the-art dynamic solutions with a comparable computational cost and reducing the inference latency by up to 70.60% compared to static ensembles while maintaining good accuracy.
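The quorum idea described above can be sketched in a few lines. This is a deliberately simplified stand-in, not SQUAD itself: it stops as soon as one label collects a fixed number of votes, whereas the abstract describes a statistical significance test on the consensus. The function name `quorum_exit` and the `quorum` threshold are assumptions made for illustration.

```python
from collections import Counter
from typing import Hashable, Optional, Sequence, Tuple


def quorum_exit(
    predictions: Sequence[Hashable], quorum: int
) -> Tuple[Optional[Hashable], int]:
    """Consume predictions in order of increasing cost and stop early.

    Returns (label, number_of_learners_evaluated). If no label reaches
    `quorum` votes, falls back to the most common label over all learners.
    """
    votes: Counter = Counter()
    for used, label in enumerate(predictions, start=1):
        votes[label] += 1
        if votes[label] >= quorum:
            return label, used  # consensus reached: skip remaining learners
    fallback = votes.most_common(1)[0][0] if votes else None
    return fallback, len(predictions)


# Hypothetical outputs of five learners, cheapest first.
label, evaluated = quorum_exit(["cat", "dog", "cat", "cat", "dog"], quorum=3)
```

Here the fifth learner is never evaluated, which is where the latency saving over a static ensemble would come from.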

Architecture-Aware Minimization (A$^2$M): How to Find Flat Minima in Neural Architecture Search

Mar 13, 2025

Abstract: Neural Architecture Search (NAS) has become an essential tool for designing effective and efficient neural networks. In this paper, we investigate the geometric properties of neural architecture spaces commonly used in differentiable NAS methods, specifically NAS-Bench-201 and DARTS. By defining flatness metrics such as neighborhoods and loss barriers along paths in architecture space, we reveal locality and flatness characteristics analogous to the well-known properties of neural network loss landscapes in weight space. In particular, we find that highly accurate architectures cluster together in flat regions, while suboptimal architectures remain isolated, unveiling the detailed geometrical structure of the architecture search landscape. Building on these insights, we propose Architecture-Aware Minimization (A$^2$M), a novel analytically derived algorithmic framework that explicitly biases, for the first time, the gradient of differentiable NAS methods towards flat minima in architecture space. A$^2$M consistently improves generalization over state-of-the-art DARTS-based algorithms on benchmark datasets including CIFAR-10, CIFAR-100, and ImageNet16-120, across both NAS-Bench-201 and DARTS search spaces. Notably, A$^2$M is able to increase the test accuracy, on average across different differentiable NAS methods, by +3.60% on CIFAR-10, +4.60% on CIFAR-100, and +3.64% on ImageNet16-120, demonstrating its superior effectiveness in practice. A$^2$M can be easily integrated into existing differentiable NAS frameworks, offering a versatile tool for future research and applications in automated machine learning. We open-source our code at https://github.com/AI-Tech-Research-Lab/AsquaredM.

Quantifying Cryptocurrency Unpredictability: A Comprehensive Study of Complexity and Forecasting

Feb 13, 2025

Abstract: This paper offers a thorough examination of the univariate predictability of cryptocurrency time-series. By combining complexity measures and model predictions, we explore the cryptocurrency time-series forecasting task, focusing on the exchange rate in USD of Litecoin, Binance Coin, Bitcoin, Ethereum, and XRP. On one hand, to assess the complexity and the randomness of these time-series, a comparative analysis has been performed using Brownian and colored noises as a benchmark. The results obtained from the Complexity-Entropy causality plane and power density spectrum analysis reveal that cryptocurrency time-series exhibit characteristics closely resembling those of Brownian noise when analyzed in a univariate context. On the other hand, the application of a wide range of statistical, machine and deep learning models for time-series forecasting demonstrates the low predictability of cryptocurrencies. Notably, our analysis reveals that simpler models such as Naive models consistently outperform the more complex machine and deep learning ones in terms of forecasting accuracy across different forecast horizons and time windows. The combined study of complexity and forecasting accuracies highlights the difficulty of predicting the cryptocurrency market. These findings provide valuable insights into the inherent characteristics of cryptocurrency data and highlight the need to reassess the challenges associated with predicting cryptocurrency price movements.

* This is the author's accepted manuscript, modified per ACM self-archiving policy. The definitive Version of Record is available at https://doi.org/10.1145/3703412.3703420
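For readers unfamiliar with naive baselines of the kind mentioned in the abstract above, one common variant is the persistence forecast, which predicts that the value `horizon` steps ahead equals the current value. The sketch below computes its mean absolute error; it is only an assumed example of a naive model, since the listing does not say which naive models the paper uses, and the price values are invented.

```python
from typing import Sequence


def naive_forecast_mae(series: Sequence[float], horizon: int = 1) -> float:
    """Mean absolute error of the persistence forecast x[t + horizon] = x[t]."""
    errors = [
        abs(series[t + horizon] - series[t])
        for t in range(len(series) - horizon)
    ]
    if not errors:
        raise ValueError("series is too short for the requested horizon")
    return sum(errors) / len(errors)


# Hypothetical closing prices, for illustration only.
prices = [100.0, 102.0, 101.0, 105.0]
mae_h1 = naive_forecast_mae(prices, horizon=1)
mae_h2 = naive_forecast_mae(prices, horizon=2)
```

For a series that behaves like Brownian noise, the last observed value is the best predictor of the next one, which is consistent with such a baseline being hard to beat.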

Training Multi-Layer Binary Neural Networks With Local Binary Error Signals

Nov 28, 2024

Abstract: Binary Neural Networks (BNNs) hold the potential for significantly reducing computational complexity and memory demand in machine and deep learning. However, most successful training algorithms for BNNs rely on quantization-aware floating-point Stochastic Gradient Descent (SGD), with full-precision hidden weights used during training. The binarized weights are only used at inference time, hindering the full exploitation of binary operations during the training process. In contrast to the existing literature, we introduce, for the first time, a multi-layer training algorithm for BNNs that does not require the computation of back-propagated full-precision gradients. Specifically, the proposed algorithm is based on local binary error signals and binary weight updates, employing integer-valued hidden weights that serve as a synaptic metaplasticity mechanism, thereby establishing it as a neurobiologically plausible algorithm. The binary-native and gradient-free algorithm proposed in this paper is capable of training binary multi-layer perceptrons (BMLPs) with binary inputs, weights, and activations, by using exclusively XNOR, Popcount, and increment/decrement operations, hence effectively paving the way for a new class of operation-optimized training algorithms. Experimental results on BMLPs fully trained in a binary-native and gradient-free manner on multi-class image classification benchmarks demonstrate an accuracy improvement of up to +13.36% compared to the fully binary state-of-the-art solution, showing minimal accuracy degradation compared to the same architecture trained with full-precision SGD and floating-point weights, activations, and inputs. The proposed algorithm is made available to the scientific community as a public repository.
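To show why XNOR and Popcount suffice for the forward pass of a binary layer, the sketch below computes the dot product of two vectors with entries in {-1, +1} using only those operations. It covers this standard identity only, not the training algorithm from the paper; the bit packing convention (bit 1 encodes +1, bit 0 encodes -1) and the function name are assumptions.

```python
def binary_dot(a_bits: int, b_bits: int, n: int) -> int:
    """Dot product of two length-n vectors in {-1, +1} packed into integers.

    Bit value 1 encodes +1 and bit value 0 encodes -1.
    """
    mask = (1 << n) - 1                 # keep only the n meaningful bits
    xnor = ~(a_bits ^ b_bits) & mask    # 1 wherever the two entries agree
    matches = bin(xnor).count("1")      # popcount
    # Each agreement contributes +1 and each disagreement -1.
    return 2 * matches - n


# (+1, -1, +1, +1) . (+1, +1, -1, +1) = 1 - 1 - 1 + 1 = 0
result = binary_dot(0b1011, 0b1101, n=4)
```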

FlatNAS: optimizing Flatness in Neural Architecture Search for Out-of-Distribution Robustness

Feb 29, 2024

Abstract: Neural Architecture Search (NAS) paves the way for the automatic definition of Neural Network (NN) architectures, attracting increasing research attention and offering solutions in various scenarios. This study introduces a novel NAS solution, called Flat Neural Architecture Search (FlatNAS), which explores the interplay between a novel figure of merit based on robustness to weight perturbations and single NN optimization with Sharpness-Aware Minimization (SAM). FlatNAS is the first work in the literature to systematically explore flat regions in the loss landscape of NNs in a NAS procedure, while jointly optimizing their performance on in-distribution data, their out-of-distribution (OOD) robustness, and constraining the number of parameters in their architecture. Unlike current studies, which primarily concentrate on OOD algorithms, FlatNAS successfully evaluates the impact of NN architectures on OOD robustness, a crucial aspect in real-world applications of machine and deep learning. FlatNAS achieves a good trade-off between performance, OOD generalization, and the number of parameters, by using only in-distribution data in the NAS exploration. The OOD robustness of the NAS-designed models is evaluated by focusing on robustness to input data corruptions, using popular benchmark datasets in the literature.
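The notion of robustness to weight perturbations can be made concrete with a generic flatness proxy: the average increase in loss when Gaussian noise is added to the weights. The abstract does not define the FlatNAS figure of merit, so the sketch below is an assumed illustration of the general idea; the function names, the toy quadratic losses, and the noise scale `sigma` are all hypothetical.

```python
import random
from typing import Callable, List


def perturbation_sensitivity(
    loss: Callable[[List[float]], float],
    weights: List[float],
    sigma: float = 0.1,
    trials: int = 200,
    seed: int = 0,
) -> float:
    """Average loss increase under Gaussian weight noise (lower = flatter)."""
    rng = random.Random(seed)
    base = loss(weights)
    total = 0.0
    for _ in range(trials):
        noisy = [w + rng.gauss(0.0, sigma) for w in weights]
        total += loss(noisy) - base
    return total / trials


def flat_loss(w: List[float]) -> float:
    """Toy loss with a wide (flat) minimum at the origin."""
    return 0.1 * sum(x * x for x in w)


def sharp_loss(w: List[float]) -> float:
    """Toy loss with a narrow (sharp) minimum at the origin."""
    return 10.0 * sum(x * x for x in w)


minimum = [0.0, 0.0]
flat_score = perturbation_sensitivity(flat_loss, minimum)
sharp_score = perturbation_sensitivity(sharp_loss, minimum)
```

With the same seed both minima see identical noise, so the sharp minimum scores strictly higher than the flat one.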

The star-shaped space of solutions of the spherical negative perceptron

May 18, 2023

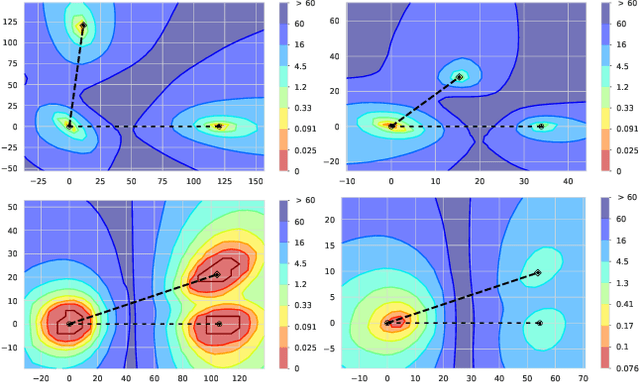

Abstract: Empirical studies on the landscape of neural networks have shown that low-energy configurations are often found in complex connected structures, where zero-energy paths between pairs of distant solutions can be constructed. Here we consider the spherical negative perceptron, a prototypical non-convex neural network model framed as a continuous constraint satisfaction problem. We introduce a general analytical method for computing energy barriers in the simplex with vertex configurations sampled from the equilibrium. We find that in the over-parameterized regime the solution manifold displays simple connectivity properties. There exists a large geodesically convex component that is attractive for a wide range of optimization dynamics. Inside this region we identify a subset of atypically robust solutions that are geodesically connected with most other solutions, giving rise to a star-shaped geometry. We analytically characterize the organization of the connected space of solutions and show numerical evidence of a transition, at larger constraint densities, where the aforementioned simple geodesic connectivity breaks down.

Deep Networks on Toroids: Removing Symmetries Reveals the Structure of Flat Regions in the Landscape Geometry

Feb 07, 2022

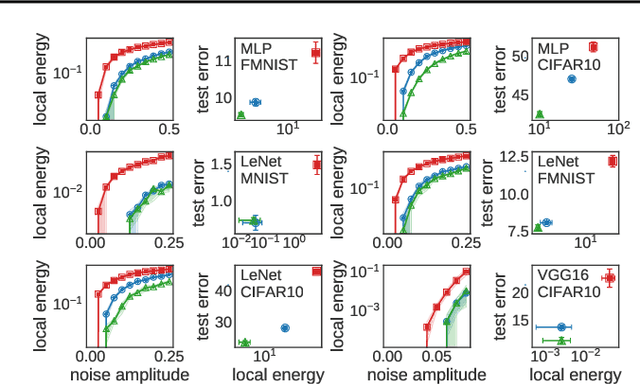

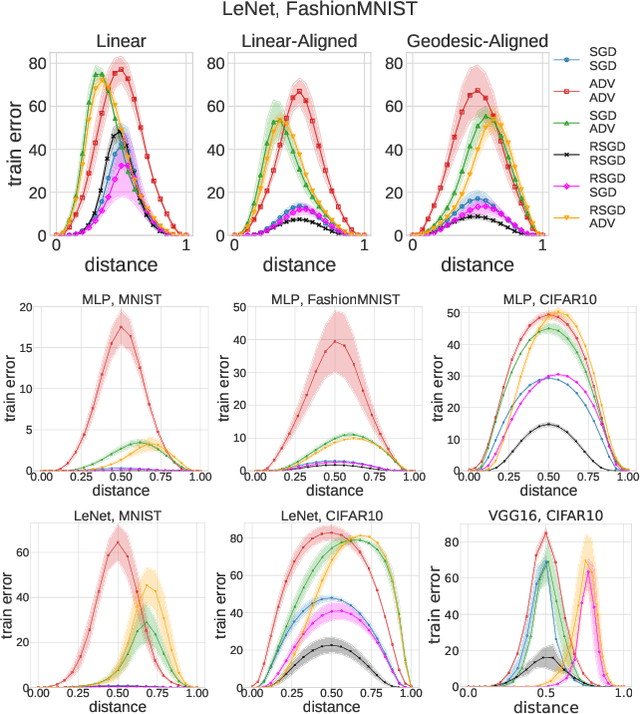

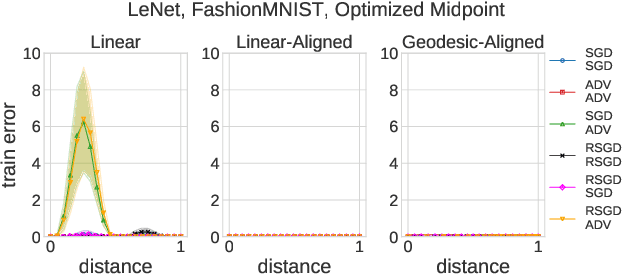

Abstract: We systematize the approach to the investigation of deep neural network landscapes by basing it on the geometry of the space of implemented functions rather than the space of parameters. Grouping classifiers into equivalence classes, we develop a standardized parameterization in which all symmetries are removed, resulting in a toroidal topology. On this space, we explore the error landscape rather than the loss. This lets us derive a meaningful notion of the flatness of minimizers and of the geodesic paths connecting them. Using different optimization algorithms that sample minimizers with different flatness, we study the mode connectivity and other characteristics. Testing a variety of state-of-the-art architectures and benchmark datasets, we confirm the correlation between flatness and generalization performance; we further show that in function space flatter minima are closer to each other and that the barriers along the geodesics connecting them are small. We also find that minimizers found by variants of gradient descent can be connected by zero-error paths with a single bend. We observe similar qualitative results in neural networks with binary weights and activations, providing one of the first results concerning the connectivity in this setting. Our results hinge on symmetry removal, and are in remarkable agreement with the rich phenomenology described by some recent analytical studies performed on simple shallow models.
