Evgenii Kanin

Development of Deep Transformer-Based Models for Long-Term Prediction of Transient Production of Oil Wells

Oct 12, 2021

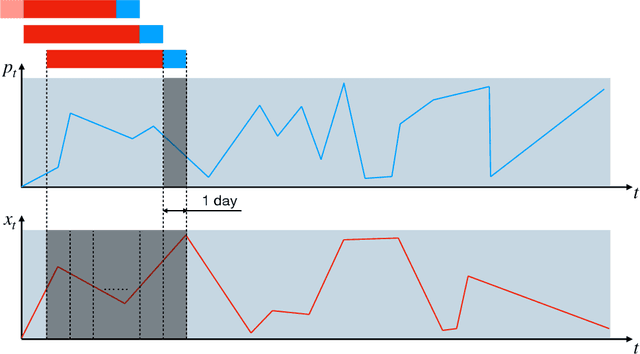

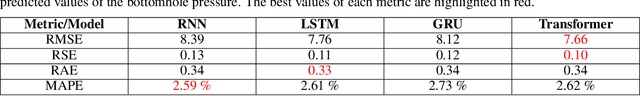

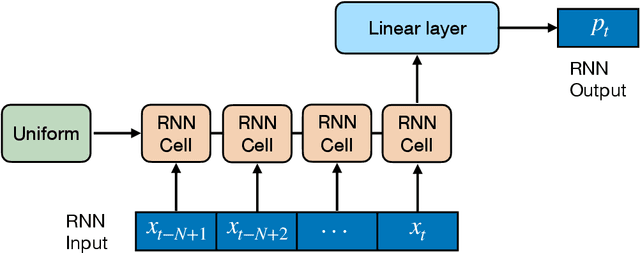

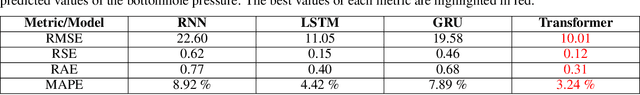

Abstract: We propose a novel approach to data-driven modeling of the transient production of oil wells. We apply transformer-based neural networks trained on multivariate time series composed of various well parameters measured during production. By tuning the machine learning models for a single well (ignoring the effect of neighboring wells) on open-source field datasets, we demonstrate that the transformer outperforms recurrent neural networks with LSTM/GRU cells in forecasting bottomhole pressure dynamics. We apply a transfer learning procedure to the transformer-based surrogate model: the model is first trained on the dataset from one well, and its weights are then fine-tuned on the dataset from a target well. The transfer learning approach improves the prediction capability of the model. Next, we generalize the single-well transformer model to multiple wells to simulate complex transient oilfield-level patterns. In other words, we create a global model that handles a dataset comprising the production history of multiple wells and captures well interference, resulting in more accurate prediction of the bottomhole pressure or flow rate evolution for each well under consideration. The developed tools for single-well and oilfield-scale modeling can be used to optimize production by selecting the operating regime and submersible equipment to increase hydrocarbon recovery. In addition, the models can help perform well testing while avoiding costly shut-in operations.
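The two-stage transfer learning procedure described in the abstract (train on one well, then fine-tune the weights on a target well) can be illustrated with a deliberately tiny sketch. Everything here is an assumption made for illustration: a one-feature linear model stands in for the transformer, the synthetic "wells" are made-up linear relations, and the names `fit`, `mse`, `source_well`, and `target_well` are hypothetical. It only shows why starting from pretrained weights can help when the target well has little data and a small training budget.

```python
from typing import List, Tuple


def mse(xs: List[float], ys: List[float], w: float, b: float) -> float:
    """Mean squared error of the linear model y = w * x + b."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def fit(xs: List[float], ys: List[float], w: float, b: float,
        lr: float, steps: int) -> Tuple[float, float]:
    """Plain gradient descent on the MSE, starting from the given (w, b)."""
    n = len(xs)
    for _ in range(steps):
        residuals = [w * x + b - y for x, y in zip(xs, ys)]
        grad_w = 2.0 * sum(r * x for r, x in zip(residuals, xs)) / n
        grad_b = 2.0 * sum(residuals) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Hypothetical source well: plenty of data, response y = 2.0 * x + 1.0.
source_x = [i / 10 for i in range(11)]
source_well = (source_x, [2.0 * x + 1.0 for x in source_x])

# Hypothetical target well: few samples, similar response y = 2.2 * x + 0.8.
target_x = [0.0, 0.25, 0.5, 0.75, 1.0]
target_well = (target_x, [2.2 * x + 0.8 for x in target_x])

# Stage 1: initial training on the source well, from zero weights.
w_src, b_src = fit(*source_well, w=0.0, b=0.0, lr=0.1, steps=2000)

# Stage 2: short fine-tuning on the target well, from the pretrained weights.
w_ft, b_ft = fit(*target_well, w=w_src, b=b_src, lr=0.1, steps=20)

# Baseline: the same short budget on the target well, from zero weights.
w_sc, b_sc = fit(*target_well, w=0.0, b=0.0, lr=0.1, steps=20)

mse_finetuned = mse(*target_well, w_ft, b_ft)
mse_scratch = mse(*target_well, w_sc, b_sc)
print(f"fine-tuned MSE: {mse_finetuned:.5f}, from-scratch MSE: {mse_scratch:.5f}")
```

In this toy setting the fine-tuned model ends with a lower error on the target well than the model trained from scratch under the same step budget. This says nothing about the size of the gain for the transformer models in the paper.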

A Predictive Model for Steady-State Multiphase Pipe Flow: Machine Learning on Lab Data

May 23, 2019

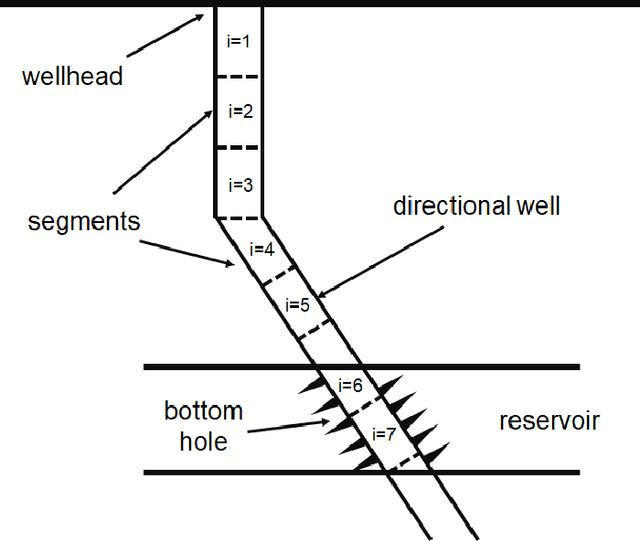

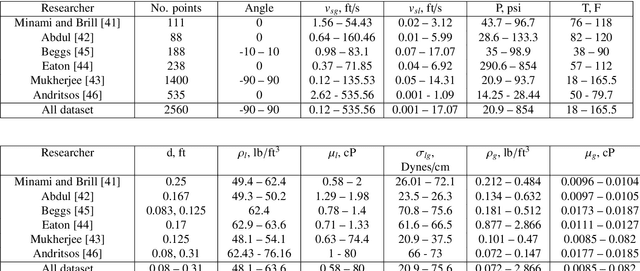

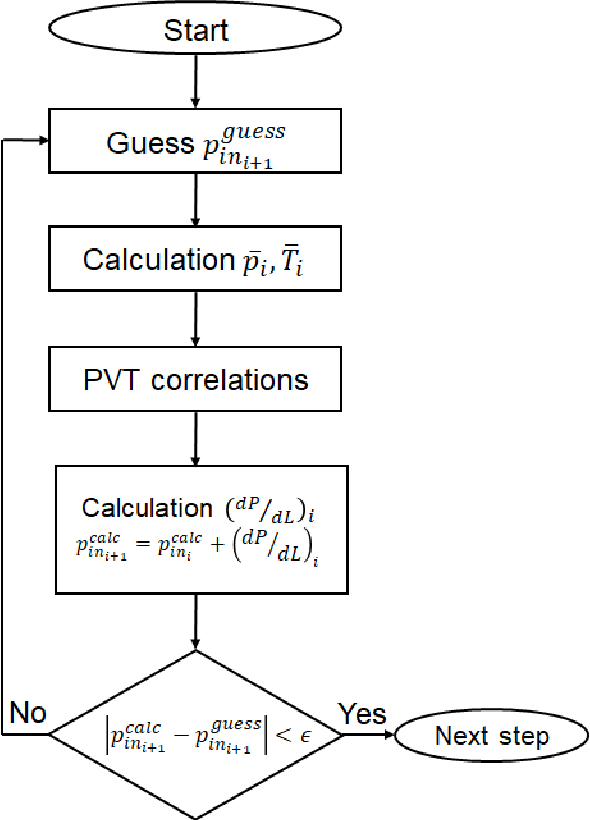

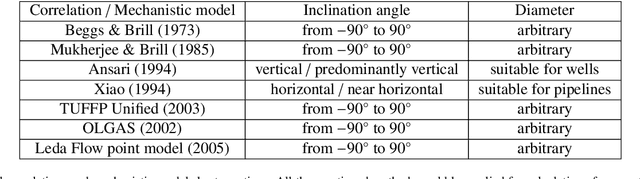

Abstract: Engineering simulators for steady-state multiphase pipe flow are commonly used to predict pressure drop. Such simulators are typically based on either empirical correlations or first-principles mechanistic models, and they evaluate the pressure drop in multiphase pipe flow with acceptable accuracy. However, the main shortcoming of these correlations and mechanistic models is their limited applicability. To extend the applicability and accuracy of the existing methods, a method for calculating the pressure drop in a pipeline is proposed. The method is based on well segmentation and calculation of the pressure gradient in each segment using three surrogate models based on Machine Learning algorithms trained on a representative lab data set from the open literature. The first model predicts the liquid holdup in the segment, the second determines the flow pattern, and the third estimates the pressure gradient. To build these models, several ML algorithms are trained, namely Random Forest, Gradient Boosting Decision Trees, Support Vector Machine, and Artificial Neural Network, and their predictive abilities are cross-compared. The proposed method for pressure gradient calculation yields $R^2 = 0.95$ with the Gradient Boosting algorithm, compared with $R^2 = 0.92$ for the Mukherjee and Brill correlation and $R^2 = 0.91$ for a combination of the Ansari and Xiao mechanistic models. The method for pressure drop prediction is also validated on three real field cases. Validation indicates that the proposed model yields the following coefficients of determination: $R^2 = 0.806$, $R^2 = 0.815$, and $R^2 = 0.99$, compared with the highest values obtained by commonly used techniques: $R^2 = 0.82$ (Beggs and Brill correlation), $R^2 = 0.823$ (Mukherjee and Brill correlation), and $R^2 = 0.98$ (Beggs and Brill correlation).
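The segment-by-segment calculation described in the abstract can be sketched as a simple marching loop. This is a minimal illustration under stated assumptions, not the authors' implementation: the function `pressure_profile`, the feature dictionary, and the three stand-in models are hypothetical, and chaining the models (holdup feeding the flow-pattern model, both feeding the pressure-gradient model) is an assumption, since the abstract only names the three outputs. In practice each callable would wrap a trained regressor or classifier.

```python
from typing import Callable, Dict, List

Features = Dict[str, float]


def pressure_profile(
    length_m: float,
    n_segments: int,
    inlet_pressure_pa: float,
    base_features: Features,
    holdup_model: Callable[[Features], float],
    pattern_model: Callable[[Features, float], str],
    gradient_model: Callable[[Features, float, str], float],
) -> List[float]:
    """March along the pipe and return the pressure at each segment boundary."""
    dz = length_m / n_segments
    pressure = inlet_pressure_pa
    profile = [pressure]
    for _ in range(n_segments):
        # Local inputs for this segment; pressure is updated as we march.
        features = dict(base_features, pressure_pa=pressure)
        holdup = holdup_model(features)                     # model 1
        pattern = pattern_model(features, holdup)           # model 2
        dp_dz = gradient_model(features, holdup, pattern)   # model 3, Pa/m
        pressure -= dp_dz * dz
        profile.append(pressure)
    return profile


# Hypothetical stand-in models with made-up constants, for illustration only.
def toy_holdup(features: Features) -> float:
    return 0.3


def toy_pattern(features: Features, holdup: float) -> str:
    return "slug" if holdup > 0.2 else "annular"


def toy_gradient(features: Features, holdup: float, pattern: str) -> float:
    return 100.0  # constant pressure gradient in Pa/m


profile = pressure_profile(
    length_m=1000.0,
    n_segments=10,
    inlet_pressure_pa=5.0e6,
    base_features={"inclination_deg": 90.0},
    holdup_model=toy_holdup,
    pattern_model=toy_pattern,
    gradient_model=toy_gradient,
)
total_drop_pa = profile[0] - profile[-1]
print(f"total pressure drop: {total_drop_pa:.0f} Pa")
```

With the constant toy gradient the total drop is simply gradient times length. With real surrogate models, the gradient would vary from segment to segment as the local pressure and flow conditions change.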
