Enzo Baccarelli

AFAFed -- Protocol analysis

Jun 29, 2022

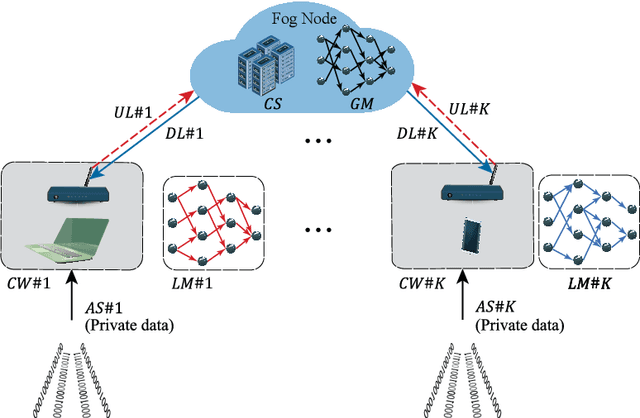

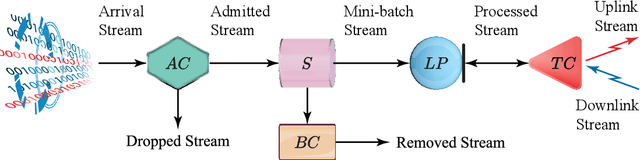

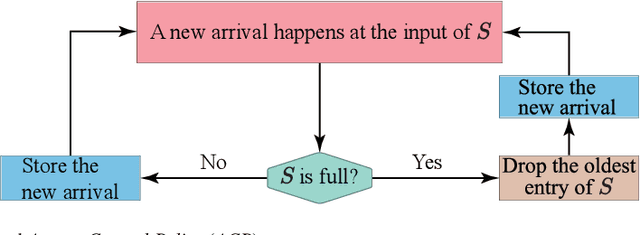

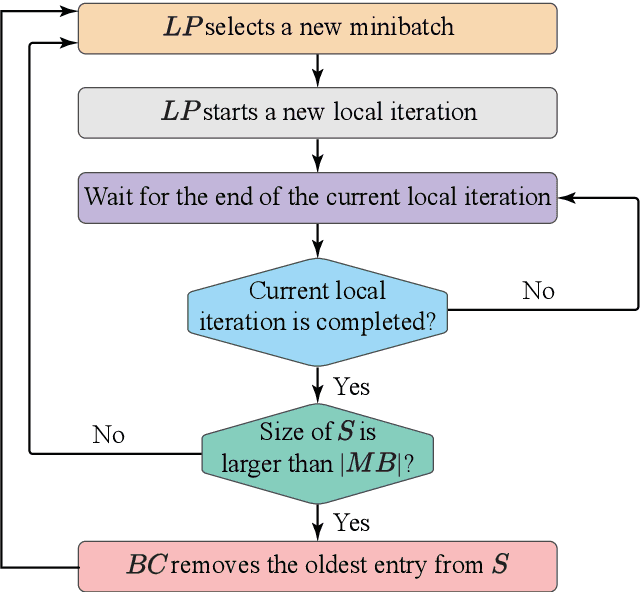

Abstract: In this paper, we design AFAFed, analyze its convergence properties, and address its implementation aspects. AFAFed is a novel Asynchronous Fair Adaptive Federated learning framework for stream-oriented IoT application environments, which are characterized by time-varying operating conditions, heterogeneous resource-limited devices (i.e., coworkers), non-i.i.d. local training data, and unreliable communication links. The key novelty of AFAFed is the synergic co-design of: (i) two sets of adaptively tuned tolerance thresholds and fairness coefficients at the coworkers and the central server, respectively; and (ii) a distributed adaptive mechanism that allows each coworker to adaptively tune its own communication rate. The convergence of AFAFed under (possibly) non-convex loss functions is guaranteed by a set of new analytical bounds. These bounds formally unveil the impact on the resulting AFAFed convergence rate of a number of Federated Learning (FL) parameters, such as the first and second moments of the per-coworker number of consecutive model updates, data skewness, communication packet-loss probability, and the maximum/minimum values of the (adaptively tuned) mixing coefficient used for model aggregation.
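The abstract mentions a mixing coefficient, bounded by minimum and maximum values, that the server uses to aggregate models arriving asynchronously over lossy links. The sketch below illustrates that general idea only. It is not the AFAFed protocol, which additionally tunes tolerance thresholds, fairness coefficients and per-coworker communication rates. The sketch rests on these assumptions:

- Models are flat lists of floats.
- The server blends each arriving coworker model by a convex combination.
- The coefficient is discounted by staleness with a hypothetical rule `alpha0 / (1 + staleness)`, a generic asynchronous-FL heuristic, not AFAFed's rule.
- The coefficient is clipped to hypothetical bounds `alpha_min` and `alpha_max`.

```python
import random
from typing import List, Sequence, Tuple


def staleness_alpha(staleness: int, alpha0: float = 0.6) -> float:
    """Hypothetical polynomial staleness discount: older updates weigh less."""
    return alpha0 / (1.0 + staleness)


def mix_update(server: Sequence[float], client: Sequence[float], alpha: float,
               alpha_min: float = 0.1, alpha_max: float = 0.9) -> List[float]:
    """Return (1 - a) * server + a * client, with a clipped to
    [alpha_min, alpha_max] so no single update can dominate or vanish."""
    a = min(max(alpha, alpha_min), alpha_max)
    return [(1.0 - a) * s + a * c for s, c in zip(server, client)]


def async_round(server: List[float],
                updates: Sequence[Tuple[Sequence[float], int]],
                loss_prob: float, rng: random.Random) -> List[float]:
    """Apply (model, staleness) updates one at a time, in arrival order.
    Each upload is lost independently with probability loss_prob."""
    for client_model, staleness in updates:
        if rng.random() < loss_prob:
            continue  # packet lost: the server model is left unchanged
        server = mix_update(server, client_model, staleness_alpha(staleness))
    return server
```

For instance, `mix_update([0.0, 0.0], [1.0, 2.0], 0.5)` gives `[0.5, 1.0]`. Clipping the coefficient is what makes its maximum and minimum values meaningful quantities for a convergence analysis.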

Gomoku: analysis of the game and of the player Wine

Nov 01, 2021

Abstract: Gomoku, also known as five in a row, is a classical board game that is ideally suited for quickly testing novel Artificial Intelligence (AI) techniques. To assist developers who wish to write a new Gomoku player, in this report we present an analysis of the main game concepts and strategies that is wider and deeper than existing ones. Moreover, after discussing the general structure of an artificial player, we present and analyse a strong Gomoku player named Wine. Its code is freely available on the Internet, and it is an excellent example of how a modern player is organised.
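Every Gomoku player needs a basic routine that detects whether a move completes a line of five. The minimal sketch below is not taken from Wine, and it rests on these assumptions:

- The board is square and stored as a list of lists holding `"X"`, `"O"` or `None`.
- The freestyle rule applies, so five *or more* stones in a row win. Variants that require exactly five would need an extra overline check.

The routine scans the four line directions through the last move and counts contiguous same-coloured stones on both sides.

```python
from typing import List, Optional

Board = List[List[Optional[str]]]

# Horizontal, vertical, main diagonal, anti-diagonal.
DIRECTIONS = [(0, 1), (1, 0), (1, 1), (1, -1)]


def makes_five(board: Board, row: int, col: int) -> bool:
    """Return True if the stone at (row, col) lies on a line of >= 5 stones."""
    stone = board[row][col]
    if stone is None:
        return False
    n = len(board)  # assumes a square n x n board, e.g. 15 x 15
    for dr, dc in DIRECTIONS:
        count = 1  # the stone at (row, col) itself
        for sign in (1, -1):  # walk forwards, then backwards
            r, c = row + sign * dr, col + sign * dc
            while 0 <= r < n and 0 <= c < n and board[r][c] == stone:
                count += 1
                r += sign * dr
                c += sign * dc
        if count >= 5:
            return True
    return False
```

Checking only the lines through the last placed stone avoids a full-board scan after every move.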

Why should we add early exits to neural networks?

Apr 27, 2020

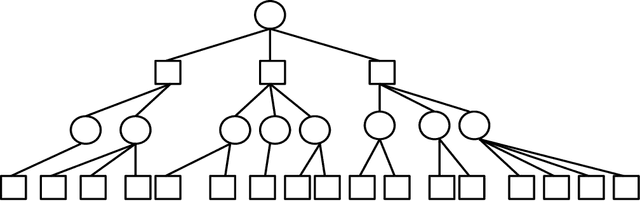

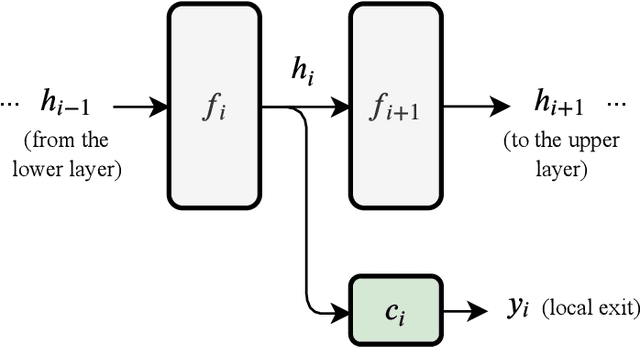

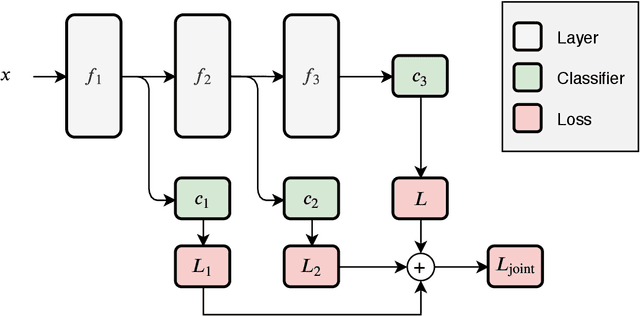

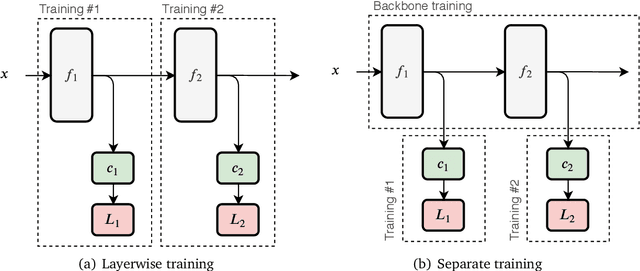

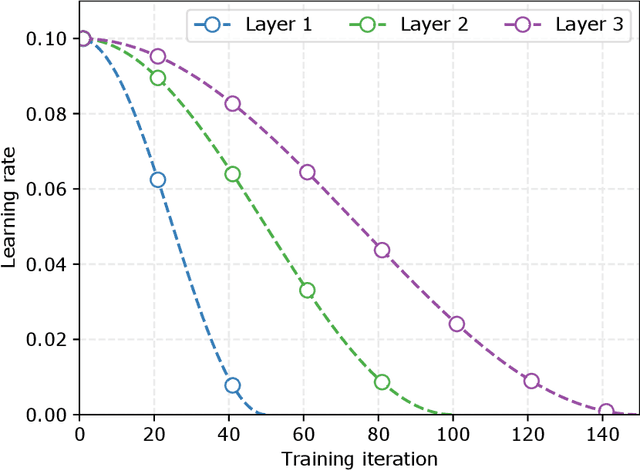

Abstract: Deep neural networks are generally designed as a stack of differentiable layers, in which a prediction is obtained only after running the full stack. Recently, some contributions have proposed techniques to endow the networks with early exits, allowing predictions to be obtained at intermediate points of the stack. These multi-output networks have a number of advantages, including: (i) significant reductions of the inference time, (ii) a reduced tendency toward overfitting and vanishing gradients, and (iii) the capability of being distributed over multi-tier computation platforms. In addition, they connect to the wider themes of biological plausibility and layered cognitive reasoning. In this paper, we provide a comprehensive introduction to this family of neural networks, describing in a unified fashion how these architectures can be designed, trained, and actually deployed in time-constrained scenarios. We also describe in depth their application scenarios in 5G and Fog computing environments, as well as some of the open research questions connected to them.
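The central mechanism here is obtaining a prediction at an intermediate point of the stack. The minimal illustration below is not the paper's method, and it assumes the following:

- Each block is a callable.
- Each exit head maps the current hidden state to class logits.
- The exit rule stops at the first exit whose maximum softmax probability reaches a hypothetical `threshold`. This is one common choice; entropy-based rules are equally possible.

The final exit always answers, so every input receives a prediction.

```python
import math
from typing import Any, Callable, List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    """Numerically stable softmax (shift by the max before exponentiating)."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def early_exit_predict(x: Any,
                       blocks: Sequence[Callable[[Any], Any]],
                       exits: Sequence[Callable[[Any], Sequence[float]]],
                       threshold: float) -> Tuple[int, int]:
    """Run the blocks in order and return (predicted class, exit index) at
    the first exit whose top softmax probability is >= threshold."""
    if not blocks or len(blocks) != len(exits):
        raise ValueError("need at least one block and one exit head per block")
    h = x
    for i, (block, head) in enumerate(zip(blocks, exits)):
        h = block(h)                # compute only up to this depth
        probs = softmax(head(h))    # exit head: hidden state -> class logits
        conf = max(probs)
        is_last = i == len(blocks) - 1
        if conf >= threshold or is_last:
            return probs.index(conf), i
    raise AssertionError("unreachable: the last exit always returns")
```

Lowering `threshold` lets more inputs leave at shallow exits. Fewer layers are then run per input, which trades some accuracy for lower latency.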