Enayetur Raheem

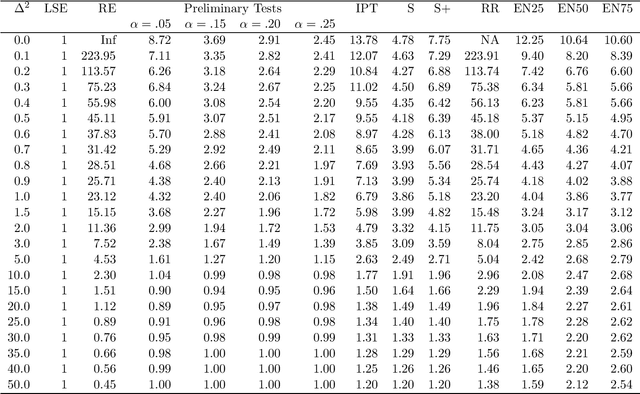

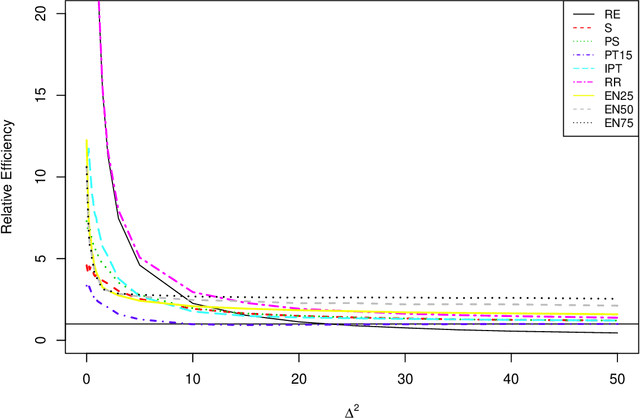

Penalty, Shrinkage, and Preliminary Test Estimators under Full Model Hypothesis

Mar 24, 2015

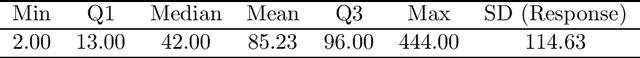

Abstract: This paper considers a multiple regression model and compares, under the full model hypothesis, both analytically and by simulation, the performance characteristics of some popular penalty estimators, namely ridge regression (RR), LASSO, adaptive LASSO (aLASSO), SCAD, and elastic net (EN), against the least squares estimator (LSE), restricted estimator (RE), preliminary test estimator (PTE), and Stein-type estimators (SE and PRSE) when the dimension of the parameter space is smaller than the dimension of the sample space. We find that RR uniformly dominates LSE, RE, PTE, SE, and PRSE, while LASSO, aLASSO, SCAD, and EN uniformly dominate LSE only. Further, we observe that neither the penalty estimators nor the Stein-type estimators dominate one another.
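To make the estimator families named in the abstract concrete, here is a minimal Python sketch of three of them in their textbook closed forms. It rests on an assumption the abstract does not state: an orthonormal design ($X^\top X = I$), so that each estimator is a simple function of the least squares estimate `z`. The function names, the penalty scaling ($\tfrac12\lVert y - X\beta\rVert^2$ plus $\tfrac{\lambda}{2}\lVert\beta\rVert^2$ for ridge or $\lambda\lVert\beta\rVert_1$ for LASSO), and the choice of shrinking toward zero are all illustrative and are not taken from the paper.

```python
import math


def ridge(z, lam):
    """Ridge under an orthonormal design: scale every coefficient by 1/(1+lam)."""
    return [zi / (1.0 + lam) for zi in z]


def soft_threshold(z, lam):
    """LASSO under an orthonormal design: shrink each |z_i| by lam, floor at 0."""
    return [math.copysign(max(abs(zi) - lam, 0.0), zi) for zi in z]


def positive_stein(z, sigma2=1.0):
    """Positive-rule Stein-type shrinkage of z toward the zero vector.

    Uses the classical factor (1 - (p - 2) * sigma2 / ||z||^2), truncated at 0
    so the estimate never changes sign (illustrative form, assumed p > 2).
    """
    p = len(z)
    norm2 = sum(zi * zi for zi in z)
    if norm2 == 0.0:
        return list(z)
    factor = max(0.0, 1.0 - (p - 2) * sigma2 / norm2)
    return [factor * zi for zi in z]


# Hypothetical least squares estimate with p = 4 coefficients.
z = [3.0, -0.5, 0.2, 1.5]
print(ridge(z, 1.0))
print(soft_threshold(z, 1.0))
print(positive_stein(z))
```

In this simplified setting, ridge rescales all coefficients by the same factor, LASSO sets small coefficients exactly to zero, and the positive-rule Stein estimator applies a data-dependent common factor that is truncated at zero. The risk comparisons reported in the abstract concern the general regression model, not this special case.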

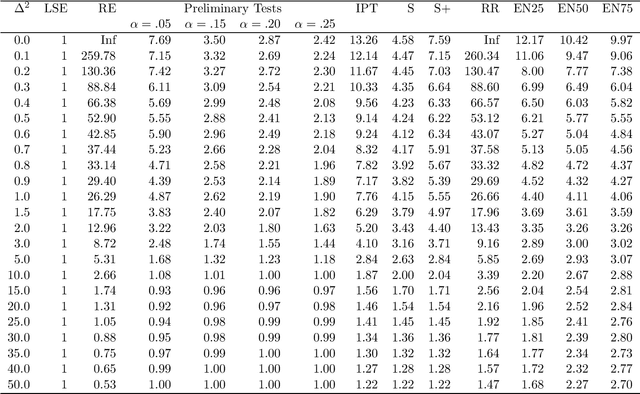

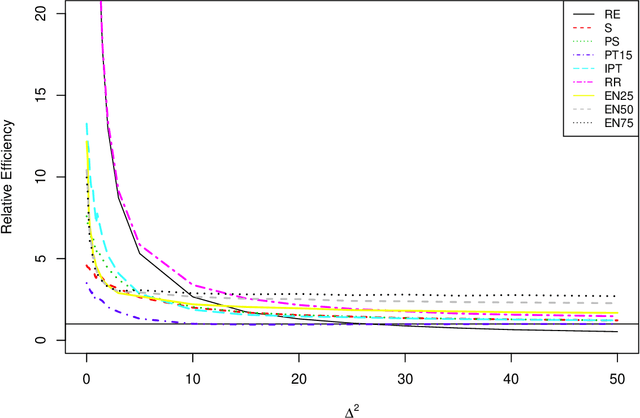

Improved LASSO

Mar 17, 2015

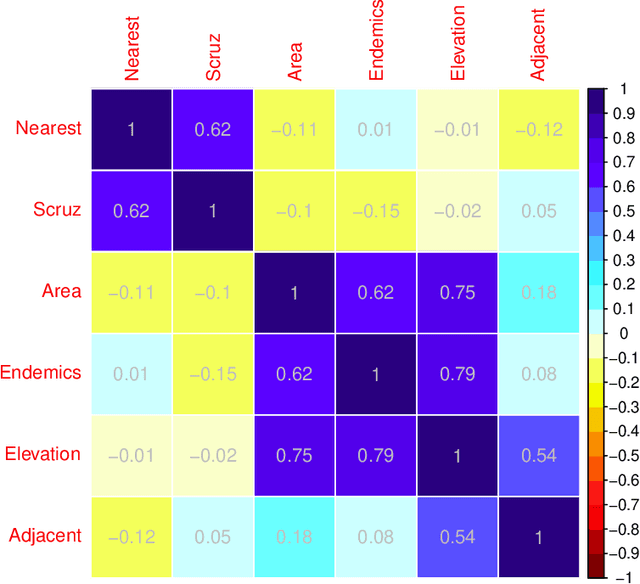

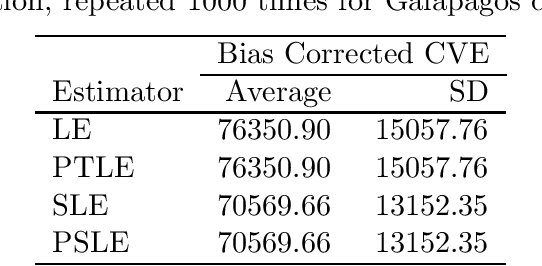

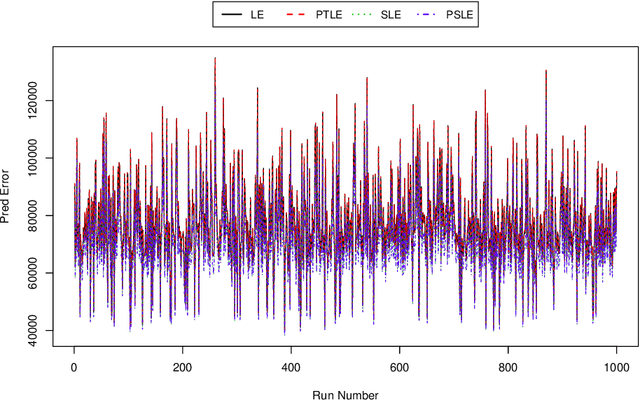

Abstract: We propose an improved LASSO estimation technique based on the Stein rule. We shrink the classical LASSO estimator using the preliminary test, shrinkage, and positive-rule shrinkage principles. Simulations have been carried out for various configurations of the correlation coefficient ($r$), the size of the parameter vector ($\beta$), the error variance ($\sigma^2$), and the number of non-zero coefficients ($k$) in the model parameter vector. Several real data examples are used to demonstrate the practical usefulness of the proposed estimators. Our study shows that the risk ordering LSE $>$ LASSO $>$ Stein-type LASSO $>$ Stein-type positive rule LASSO remains the same uniformly in the divergence parameter $\Delta^2$, as in the traditional case.
