Ehsan Arbabi

Effects of Images with Different Levels of Familiarity on EEG

Oct 12, 2017

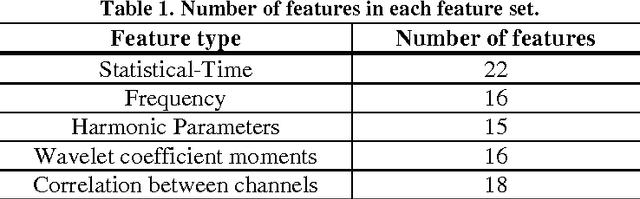

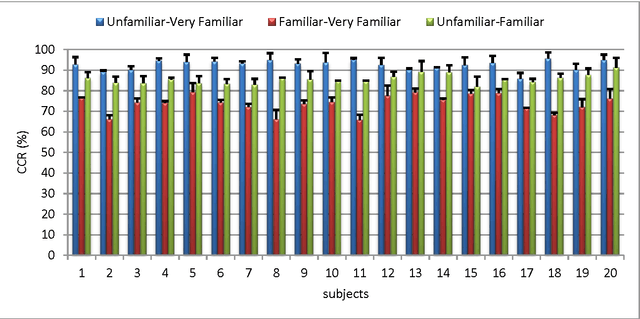

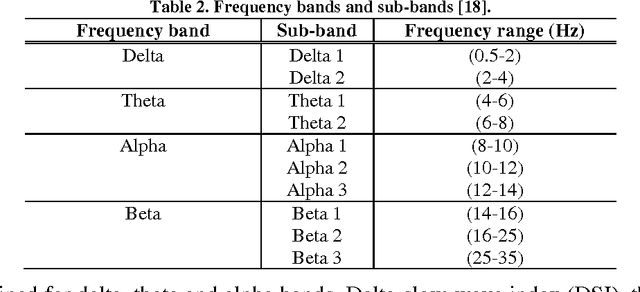

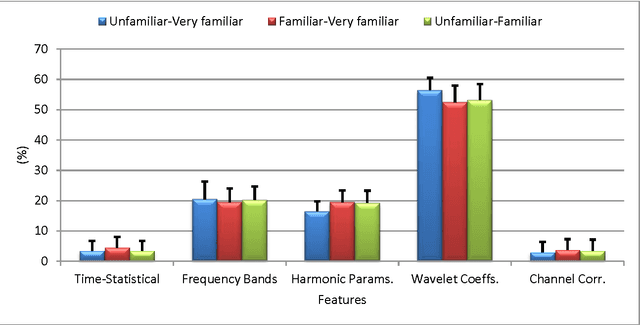

Abstract: Evaluating human brain potentials while subjects view different images can be used for memory evaluation, information retrieval, guilty-innocent identification, and examining the brain's response. In this study, we examine how viewing images with different levels of familiarity affects subjects' electroencephalogram (EEG). Three groups of images, with familiarity levels "unfamiliar", "familiar" and "very familiar", were considered. EEG signals of 21 subjects (14 men) were recorded. After signal acquisition, pre-processing, including noise and artifact removal, was performed on epochs of data. Features, including spatial-statistical, wavelet, frequency and harmonic parameters, as well as correlations between recording channels, were extracted from the data. We then evaluated the efficiency of the extracted features using p-values and an orthogonal feature selection method (a combination of the Gram-Schmidt method and the Fisher discriminant ratio) for feature dimensionality reduction. As the final step of feature selection, we used the 'add-r take-away l' method to choose the most discriminative features. For classification, covering all two-class and three-class cases, we applied a Support Vector Machine (SVM) to the extracted features. The correct classification rates (CCR) for the "unfamiliar-familiar", "unfamiliar-very familiar" and "familiar-very familiar" cases were 85.6%, 92.6%, and 70.6%, respectively. The best classification results were obtained in the pre-frontal and frontal regions of the brain. Wavelet, frequency and harmonic features were among the most discriminative. Finally, in the three-class case, the best CCR was 86.8%.
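The orthogonal feature selection step named in this abstract (Gram-Schmidt combined with the Fisher discriminant ratio) can be illustrated with a minimal sketch. The abstract does not give the exact procedure, so the greedy loop below, the two-class form of the ratio, and the function names `fisher_ratio` and `orthogonal_fisher_ranking` are assumptions made for illustration, not the authors' implementation.

```python
import numpy as np

def fisher_ratio(x, y):
    """Two-class Fisher discriminant ratio of one feature column (labels 0 and 1)."""
    a, b = x[y == 0], x[y == 1]
    # Squared distance between class means over the summed class variances.
    return (a.mean() - b.mean()) ** 2 / (a.var() + b.var() + 1e-12)

def orthogonal_fisher_ranking(X, y, k):
    """Greedily pick k features: take the best-scoring column, then
    project it out of the remaining columns (one Gram-Schmidt step)."""
    R = X - X.mean(axis=0)              # centered working copy of the features
    remaining = list(range(X.shape[1]))
    selected = []
    for _ in range(k):
        scores = [fisher_ratio(R[:, j], y) for j in remaining]
        best = remaining[int(np.argmax(scores))]
        selected.append(best)
        remaining.remove(best)
        q = R[:, best]
        norm = q @ q
        if norm > 1e-12:
            for j in remaining:
                # Remove the part of column j already explained by the chosen feature.
                R[:, j] -= (R[:, j] @ q) / norm * q
    return selected
```

Projecting the chosen feature out of the others means a redundant copy of an already selected feature scores close to zero in later rounds, which is the point of making the selection orthogonal.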

A Categorical Approach for Recognizing Emotional Effects of Music

Sep 17, 2017

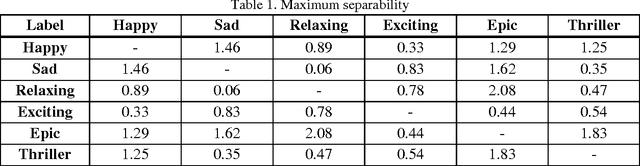

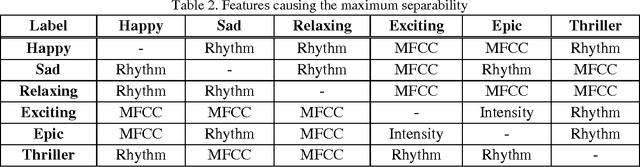

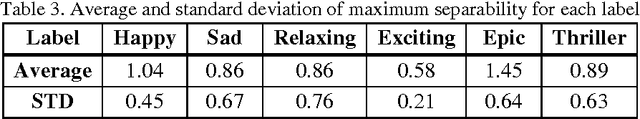

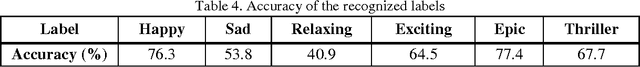

Abstract: Digital music libraries have recently been developed and can be easily accessed. Recent research has shown that organizing and retrieving music tracks based on album information is inefficient, and that people use emotion tags to search for and retrieve music tracks. In this paper, we discuss the separability of a set of emotional labels, proposed in the categorical approach to emotion expression, using Fisher's separation theorem. We determine a set of adjectives for tagging music parts: happy, sad, relaxing, exciting, epic and thriller. Temporal, frequency and energy features were extracted from the music parts. The maximum separability within the extracted features occurs between relaxing and epic music parts. Finally, we trained a classifier using Support Vector Machines to automatically recognize and generate emotional labels for a music part. The accuracy of recognizing each label was calculated; the results show that epic music is recognized more accurately (77.4%) than the other types of music.
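The separability comparison between emotion labels can be sketched with the standard two-class Fisher criterion, J = dᵀ S_w⁻¹ d, where d is the difference of class means and S_w the summed within-class covariance. The abstract does not state the exact formulation used, so this form, the small regularization term, and the names `fisher_separability` and `most_separable_pair` are assumptions.

```python
import numpy as np
from itertools import combinations

def fisher_separability(A, B):
    """Fisher criterion between two sample sets (rows are samples, columns are features)."""
    d = A.mean(axis=0) - B.mean(axis=0)
    Sw = np.cov(A, rowvar=False) + np.cov(B, rowvar=False)
    # Small ridge term keeps the solve stable if Sw is near-singular.
    Sw = np.atleast_2d(Sw) + 1e-9 * np.eye(d.size)
    return float(d @ np.linalg.solve(Sw, d))

def most_separable_pair(groups):
    """Score every pair of labels in a {label: feature_matrix} dict and return the best pair."""
    scores = {
        (a, b): fisher_separability(groups[a], groups[b])
        for a, b in combinations(groups, 2)
    }
    return max(scores, key=scores.get), scores
```

Given one feature matrix per label, `most_separable_pair` returns the pair with the largest criterion value together with all pairwise scores, which is the kind of comparison behind a statement such as "relaxing and epic are the most separable".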

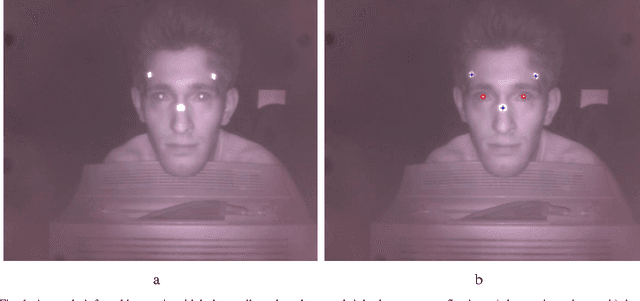

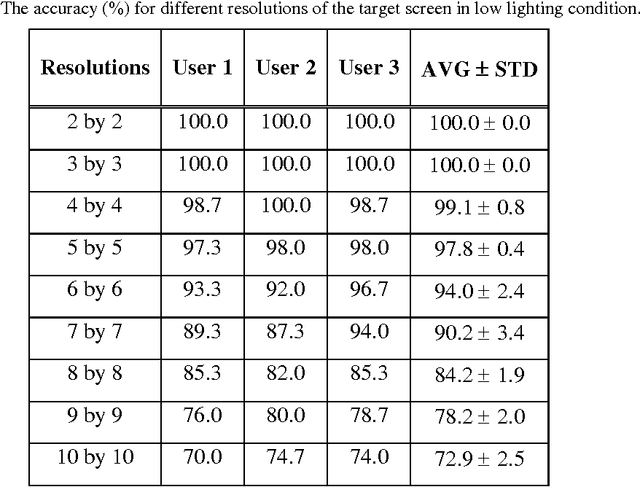

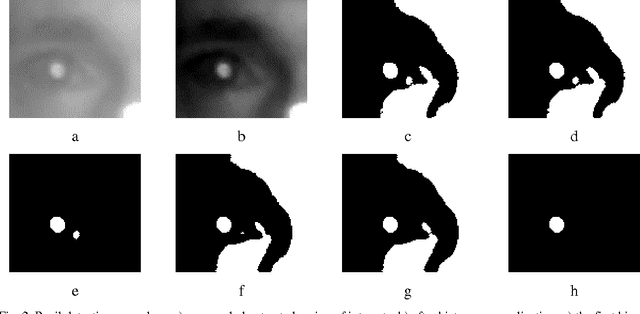

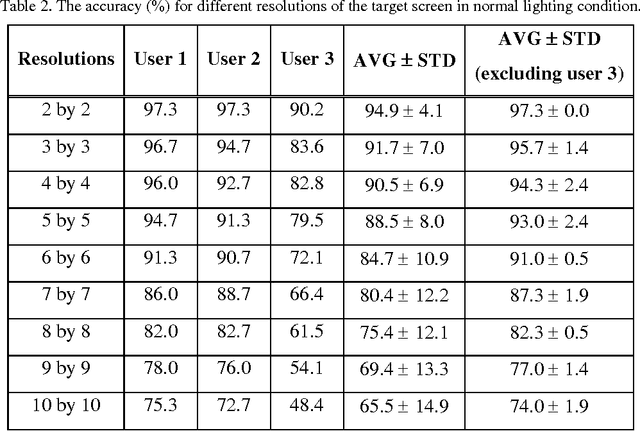

A low cost non-wearable gaze detection system based on infrared image processing

Sep 12, 2017

Abstract: Human eye gaze detection plays an important role in various fields, including human-computer interaction, virtual reality and cognitive science. Although various relatively accurate eye tracking and gaze detection systems exist, they are usually either too expensive for low cost applications or too complex to implement easily. In this article, we propose a non-wearable system for eye tracking and gaze detection with low complexity and cost. The proposed system provides medium accuracy, which makes it suitable for general applications in which low cost and easy implementation are more important than very precise gaze detection. The proposed method includes pupil and marker detection using infrared image processing, and gaze evaluation using an interpolation-based strategy. The interpolation-based strategy uses the positions of the detected pupils and markers in a target captured image, and in some previously captured training images, to estimate the position of the point the user is gazing at. The proposed system was evaluated by three users in two different lighting conditions. The experimental results show that the accuracy of this low cost system can be between 90% and 100% for finding major gazing directions.
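The interpolation-based strategy can be pictured with a small sketch. The abstract does not specify the interpolation scheme, so inverse-distance weighting is used here purely as a stand-in; the feature (a pupil-to-marker offset per image), the `power` parameter, and the name `estimate_gaze` are likewise assumptions.

```python
import numpy as np

def estimate_gaze(train_features, train_targets, feature, power=2.0):
    """Interpolate a gaze point from training images.

    train_features: (n, d) pupil-to-marker offsets measured in training images
    train_targets:  (n, 2) known gaze points for those images
    feature:        (d,)   offset measured in the target image
    """
    F = np.asarray(train_features, dtype=float)
    T = np.asarray(train_targets, dtype=float)
    dist = np.linalg.norm(F - np.asarray(feature, dtype=float), axis=1)
    if np.any(dist < 1e-9):
        # The target coincides with a training sample: return its gaze point directly.
        return T[np.argmin(dist)]
    w = 1.0 / dist ** power             # closer training samples weigh more
    return (w[:, None] * T).sum(axis=0) / w.sum()
```

With four training images taken while the user looks at the corners of a screen, an offset midway between the four training offsets maps to the screen center, and an offset identical to a training one maps to that corner.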

Evaluation of Classical Features and Classifiers in Brain-Computer Interface Tasks

Sep 12, 2017

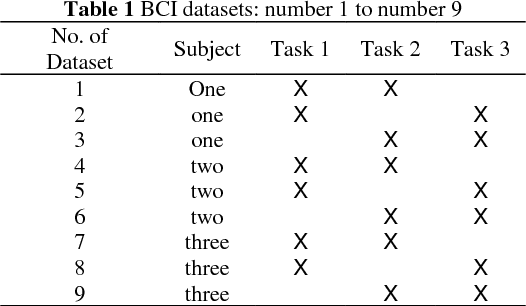

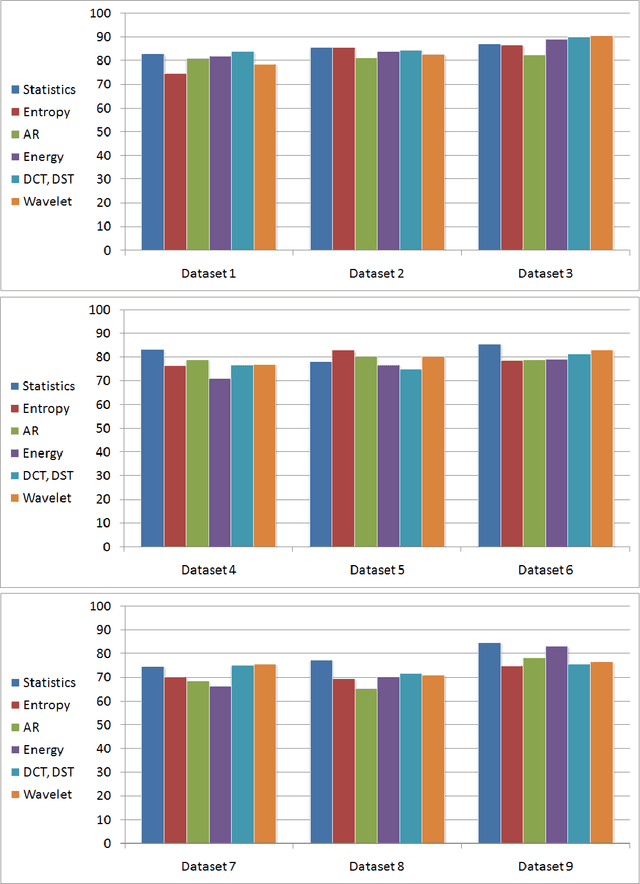

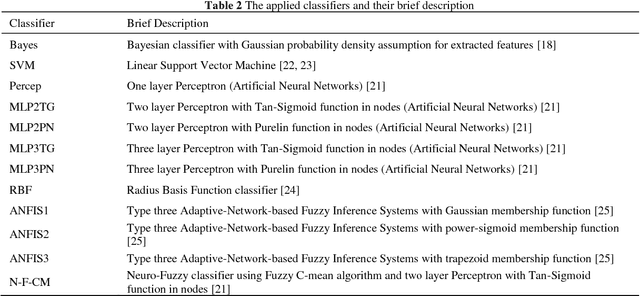

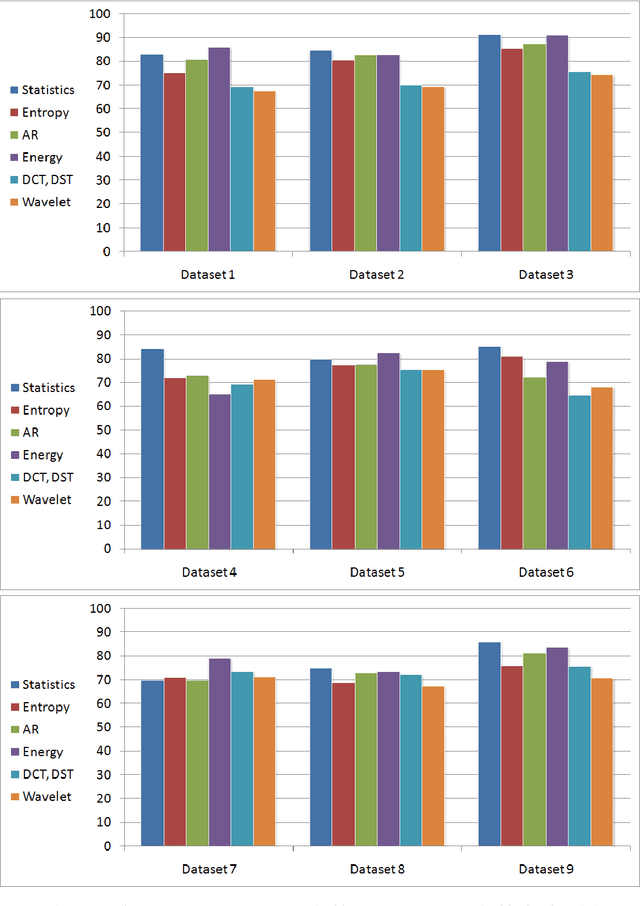

Abstract: A Brain-Computer Interface (BCI) uses brain signals to provide a new method of communication between a human and the outside world. Feature extraction, selection and classification are among the main concerns in the signal processing stage of a BCI. In this article, we present our findings on the most effective features and classifiers for some brain tasks. Six different groups of classical features and twelve classifiers were examined on nine datasets of brain signals. The results indicate that the energy of brain signals in the α and β frequency bands, together with some statistical parameters, is more effective than the other types of extracted features. In addition, a Bayesian classifier with a Gaussian distribution assumption and a Support Vector Machine (SVM) classify the different BCI datasets more accurately than the other classifiers. We believe that the results can give insight into a strategy for blind classification of brain signals in brain-computer interfaces.
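Band energy, the feature type the abstract singles out, can be computed from the discrete Fourier transform of a signal segment. The sketch below is a minimal illustration: the band edges (α as 8 to 13 Hz, β as 13 to 30 Hz) are conventional values assumed here, not taken from the abstract, and `band_energy` is a hypothetical helper name.

```python
import numpy as np

def band_energy(signal, fs, low, high):
    """Energy of `signal` (sampled at `fs` Hz) in the band low <= f < high, in Hz."""
    x = np.asarray(signal, dtype=float)
    power = np.abs(np.fft.rfft(x)) ** 2            # squared magnitude per frequency bin
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)    # bin center frequencies in Hz
    mask = (freqs >= low) & (freqs < high)
    return float(power[mask].sum() / x.size)

# Assumed conventional band edges in Hz.
ALPHA = (8.0, 13.0)
BETA = (13.0, 30.0)
```

For a single EEG channel `x` sampled at `fs`, `band_energy(x, fs, *ALPHA)` and `band_energy(x, fs, *BETA)` give two numbers that can be stacked with statistical parameters into a feature vector for a classifier.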
