Dmitry Kazhdan

Now You See Me : Concept-based Model Extraction

Oct 25, 2020

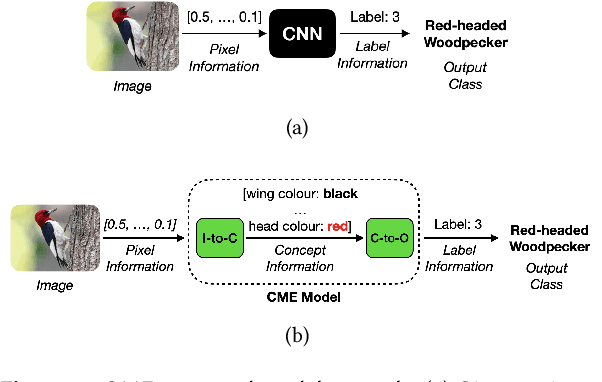

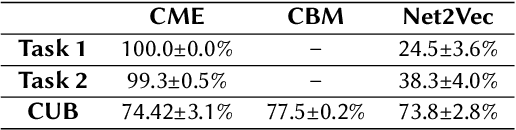

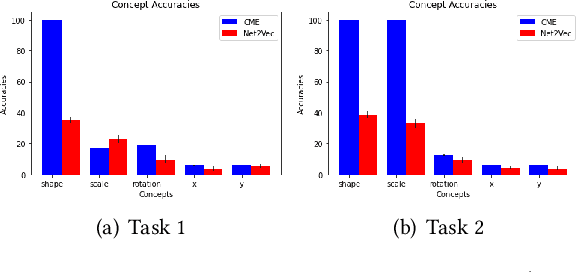

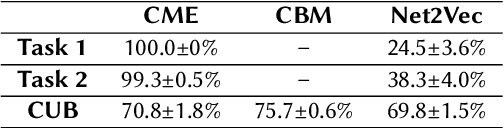

Abstract:Deep Neural Networks (DNNs) have achieved remarkable performance on a range of tasks. A key step to further empowering DNN-based approaches is improving their explainability. In this work we present CME: a concept-based model extraction framework, used for analysing DNN models via concept-based extracted models. Using two case studies (dSprites, and Caltech UCSD Birds), we demonstrate how CME can be used to (i) analyse the concept information learned by a DNN model (ii) analyse how a DNN uses this concept information when predicting output labels (iii) identify key concept information that can further improve DNN predictive performance (for one of the case studies, we showed how model accuracy can be improved by over 14%, using only 30% of the available concepts).

MARLeME: A Multi-Agent Reinforcement Learning Model Extraction Library

Apr 16, 2020

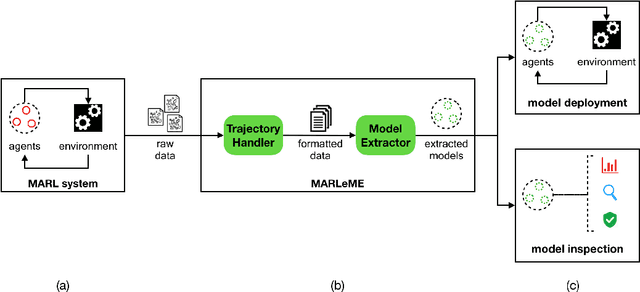

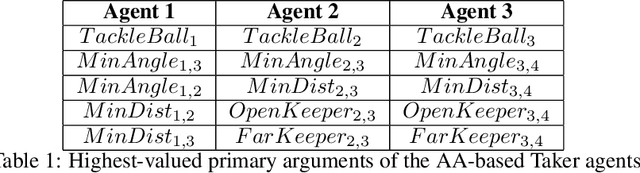

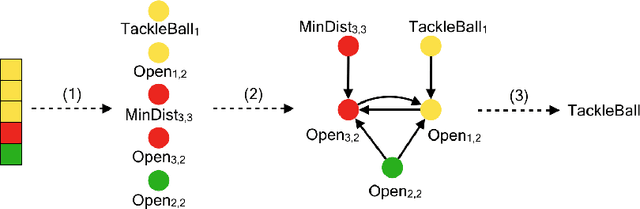

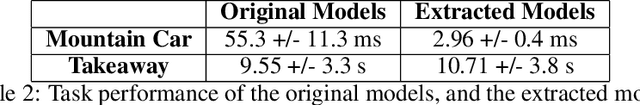

Abstract:Multi-Agent Reinforcement Learning (MARL) encompasses a powerful class of methodologies that have been applied in a wide range of fields. An effective way to further empower these methodologies is to develop libraries and tools that could expand their interpretability and explainability. In this work, we introduce MARLeME: a MARL model extraction library, designed to improve explainability of MARL systems by approximating them with symbolic models. Symbolic models offer a high degree of interpretability, well-defined properties, and verifiable behaviour. Consequently, they can be used to inspect and better understand the underlying MARL system and corresponding MARL agents, as well as to replace all/some of the agents that are particularly safety and security critical.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge