David Noever

Michael Pokorny

Numeracy from Literacy: Data Science as an Emergent Skill from Large Language Models

Jan 31, 2023

Abstract: Large language models (LLMs) such as OpenAI's ChatGPT and GPT-3 offer unique testbeds for exploring the translation challenges of turning literacy into numeracy. Publicly available transformer models from eighteen months earlier, and 1,000 times smaller, failed at basic arithmetic. The statistical analysis of four complex datasets described here combines arithmetic manipulations that cannot be memorized or encoded by simple rules. The work examines whether next-token prediction extends from sentence completion into actual numerical understanding. For example, the work highlights descriptive statistics on in-memory datasets that the LLM initially loads from memory or generates randomly using Python libraries. The resulting exploratory data analysis showcases the model's ability to group by or pivot categorical sums, infer feature importance, derive correlations, and predict unseen test cases using linear regression. To extend the model's testable range, the research deletes and appends random rows so that recall alone cannot explain the emergent numeracy.
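To make the row-perturbation idea concrete, here is a minimal Python sketch, not the authors' procedure, of how one might compute ground-truth answers against which an LLM's numbers can be scored: descriptive statistics, a categorical group-by sum, a Pearson correlation, and an ordinary least-squares fit, recomputed after random rows are deleted and appended. The dataset schema (`region`, `x`, `y`), the generating line y = 3x + 2 plus noise, and every function name are hypothetical.

```python
import random
import statistics
from collections import defaultdict
from typing import Dict, List, Tuple

Row = Dict[str, object]

def make_rows(n: int, rng: random.Random) -> List[Row]:
    """Hypothetical synthetic rows: a categorical 'region' plus y = 3x + 2 + noise."""
    rows = []
    for _ in range(n):
        x = rng.uniform(0, 10)
        rows.append({
            "region": rng.choice(["north", "south", "east"]),
            "x": x,
            "y": 3.0 * x + 2.0 + rng.gauss(0, 1),
        })
    return rows

def group_sum(rows: List[Row], key: str, value: str) -> Dict[str, float]:
    """Equivalent of a group-by / pivot sum over one categorical column."""
    totals: Dict[str, float] = defaultdict(float)
    for r in rows:
        totals[r[key]] += r[value]
    return dict(totals)

def pearson(xs: List[float], ys: List[float]) -> float:
    mx, my = statistics.mean(xs), statistics.mean(ys)
    sxy = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    sxx = sum((a - mx) ** 2 for a in xs)
    syy = sum((b - my) ** 2 for b in ys)
    return sxy / (sxx * syy) ** 0.5

def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    mx, my = statistics.mean(xs), statistics.mean(ys)
    slope = (sum((a - mx) * (b - my) for a, b in zip(xs, ys))
             / sum((a - mx) ** 2 for a in xs))
    return slope, my - slope * mx

def perturb(rows: List[Row], rng: random.Random, n_delete: int, n_append: int) -> List[Row]:
    """Delete random rows and append fresh ones so memorized answers go stale."""
    kept = rows[:]
    for _ in range(n_delete):
        kept.pop(rng.randrange(len(kept)))
    return kept + make_rows(n_append, rng)

def ground_truth(rows: List[Row]) -> Dict[str, object]:
    xs = [r["x"] for r in rows]
    ys = [r["y"] for r in rows]
    slope, intercept = fit_line(xs, ys)
    return {
        "n": len(rows),
        "mean_y": statistics.mean(ys),
        "stdev_y": statistics.stdev(ys),
        "sum_y_by_region": group_sum(rows, "region", "y"),
        "corr_xy": pearson(xs, ys),
        "slope": slope,
        "intercept": intercept,
        "pred_y_at_5": slope * 5 + intercept,  # an "unseen test case"
    }

if __name__ == "__main__":
    rng = random.Random(0)
    base = make_rows(200, rng)
    edited = perturb(base, rng, n_delete=20, n_append=35)
    for name, data in [("base", base), ("edited", edited)]:
        print(name, ground_truth(data))
```

Because `perturb` changes the row set, an answer recalled from an earlier version of the data stops matching `ground_truth`, which is the property the abstract relies on.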

Chatbots in a Honeypot World

Jan 10, 2023

Abstract: Question-and-answer agents like ChatGPT offer a novel tool for use as a potential honeypot interface in cyber security. By imitating Linux, Mac, and Windows terminal commands and providing an interface for TeamViewer, nmap, and ping, it is possible to create a dynamic environment that can adapt to the actions of attackers and provide insight into their tactics, techniques, and procedures (TTPs). The paper illustrates ten diverse tasks that a conversational agent or large language model might answer appropriately in response to a command-line attacker. The original result features feasibility studies for ten model tasks meant for defensive teams to mimic expected honeypot interfaces with minimal risk. Ultimately, its usefulness outside of forensic activities depends on whether the dynamic honeypot can extend the time-to-conquer or otherwise delay attacker timelines short of reaching key network assets like databases or confidential information. While ongoing maintenance and monitoring may be required, ChatGPT's ability to detect and deflect malicious activity makes it a valuable option for organizations seeking to enhance their cyber security posture. Future work will focus on cybersecurity layers, including perimeter security, host virus detection, and data security.
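The dynamic-honeypot idea can be sketched as a fake shell that answers known commands from a table, hands unknown ones to a generator, and logs every command for later TTP analysis. This is a minimal sketch under stated assumptions: the canned outputs, the `FakeShell` class, and the `fallback` hook (where a call to a language model such as ChatGPT would go) are all hypothetical, and no real model API is invoked.

```python
from datetime import datetime, timezone
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical canned responses for a few common reconnaissance commands.
CANNED: Dict[str, str] = {
    "whoami": "admin",
    "pwd": "/home/admin",
    "ls": "backup.tar.gz  db_dump.sql  notes.txt",
    "uname -a": "Linux web01 5.15.0-91-generic x86_64 GNU/Linux",
}

class FakeShell:
    """Answers attacker commands and records every one for later TTP analysis."""

    def __init__(self, fallback: Optional[Callable[[str], str]] = None) -> None:
        # 'fallback' stands in for a language-model call (hypothetical hook).
        self.fallback = fallback
        self.log: List[Tuple[str, str]] = []

    def handle(self, command: str) -> str:
        cmd = command.strip()
        # Timestamped log entry: (UTC ISO time, command as typed).
        self.log.append((datetime.now(timezone.utc).isoformat(), cmd))
        if not cmd:
            return ""
        if cmd in CANNED:
            return CANNED[cmd]
        if self.fallback is not None:
            return self.fallback(cmd)
        # Without a generator, mimic bash's response to an unknown program.
        return f"bash: {cmd.split()[0]}: command not found"

if __name__ == "__main__":
    shell = FakeShell()
    for c in ["whoami", "ls", "nmap -sV 10.0.0.5"]:
        print(f"$ {c}\n{shell.handle(c)}")
    print(shell.log)
```

A deterministic table keeps answers to common reconnaissance commands consistent within a session, while the fallback supplies the adaptive behavior the abstract attributes to the chatbot.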

Chatbots As Fluent Polyglots: Revisiting Breakthrough Code Snippets

Jan 05, 2023

Abstract: The research applies AI-driven code assistants to analyze a selection of influential computer code that has shaped modern technology, including email, internet browsing, robotics, and malicious software. The original contribution of this study was to examine half of the most significant code advances of the last 50 years and, in some cases, to provide notable improvements in clarity or performance. In all cases examined, the AI-driven code assistant could provide insights into obfuscated code or software lacking explanatory commentary. We generated additional sample problems based on bug corrections and code optimizations that require much deeper reasoning than a traditional Google search might provide. Future work focuses on adding automated documentation and code commentary and on translating select large code bases into more modern versions with multiple new application programming interfaces (APIs) and chained multi-tasks. The AI-driven code assistant offers a valuable tool for software engineering, particularly in its ability to provide human-level expertise and assist in refactoring legacy code or simplifying the explanation or functionality of high-value repositories.

Chatbots as Problem Solvers: Playing Twenty Questions with Role Reversals

Jan 01, 2023

Abstract: New chat AI applications like ChatGPT offer an advanced understanding of question context and memory across multi-step tasks, so experiments can test their deductive reasoning. This paper proposes a multi-role, multi-step challenge in which ChatGPT plays the classic twenty-questions game but switches roles from questioner to answerer. The main empirical result establishes that this generation of chat applications can guess random object names in fewer than twenty questions (12 on average) and guesses correctly 94% of the time across sixteen different experimental setups. The research introduces four novel cases in which the chatbot fields the questions, asks the questions, plays both question-answer roles, and finally tries to guess appropriate contextual emotions. One task that humans typically fail but trained chat applications complete involves playing bilingual games of twenty questions (English answers to Spanish questions). Future variations address direct problem-solving using a similar inquisitive format to arrive deductively at novel outcomes, such as patentable inventions or combination thinking. Featured applications of this dialogue format include complex protein designs, neuroscience metadata, and child development educational materials.
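The "fewer than twenty questions" result has a simple information-theoretic backdrop: each yes/no answer can at best halve the candidate set, so N objects need about log2(N) questions (rounded up), and twenty questions can separate up to 2^20 = 1,048,576 objects. The sketch below is a greedy questioner over a hypothetical attribute table, not a model of how ChatGPT reasons; `play_twenty_questions` and `OBJECTS` are illustrative names.

```python
from typing import Callable, Dict, FrozenSet, List, Optional, Tuple

def play_twenty_questions(
    candidates: Dict[str, FrozenSet[str]],
    answer: Callable[[str], bool],
    max_questions: int = 20,
) -> Tuple[Optional[str], List[str]]:
    """Greedy questioner: each yes/no question asks about the attribute that
    splits the remaining candidates most evenly, approximating binary search."""
    remaining = dict(candidates)
    asked: List[str] = []
    while len(remaining) > 1 and len(asked) < max_questions:
        attrs = sorted({a for feats in remaining.values() for a in feats})
        # Only attributes held by some, but not all, candidates carry information.
        useful = [a for a in attrs
                  if 0 < sum(a in f for f in remaining.values()) < len(remaining)]
        if not useful:
            break
        half = len(remaining) / 2
        question = min(useful,
                       key=lambda a: abs(sum(a in f for f in remaining.values()) - half))
        asked.append(question)
        reply = answer(question)
        # Keep only candidates consistent with the answer.
        remaining = {name: f for name, f in remaining.items() if (question in f) == reply}
    guess = next(iter(remaining)) if len(remaining) == 1 else None
    return guess, asked

# Hypothetical object table: each object is described by a set of yes/no attributes.
OBJECTS: Dict[str, FrozenSet[str]] = {
    "apple": frozenset({"natural", "edible", "round"}),
    "banana": frozenset({"natural", "edible"}),
    "dog": frozenset({"natural", "animal"}),
    "cat": frozenset({"natural", "animal", "meows"}),
    "car": frozenset({"metal", "vehicle"}),
    "bicycle": frozenset({"metal", "vehicle", "pedals"}),
    "spoon": frozenset({"metal", "kitchen"}),
    "rock": frozenset(),
}

if __name__ == "__main__":
    secret = "cat"
    print(play_twenty_questions(OBJECTS, lambda q: q in OBJECTS[secret]))
```

On this eight-object table every secret is found in exactly three questions, matching log2(8) = 3; real objects rarely split so cleanly, which is one reason averages like 12 questions are plausible in practice.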

Chatbots in a Botnet World

Dec 22, 2022

Abstract: Question-and-answer formats provide a novel experimental platform for investigating cybersecurity questions. Unlike previous chatbots, the latest ChatGPT model from OpenAI supports an advanced understanding of complex coding questions. The research demonstrates thirteen coding tasks that generally qualify as stages in the MITRE ATT&CK framework, ranging from credential access to defense evasion. With varying success, the experimental prompts generate examples of keyloggers, logic bombs, obfuscated worms, and payment-fulfilled ransomware. The empirical results illustrate cases that support a broad gain in functionality, including self-replication and self-modification, evasion, and strategic understanding of complex cybersecurity goals. One surprising feature of ChatGPT as a language-only model is its ability to spawn coding approaches that yield images that obfuscate or embed executable programming steps or links.

The Turing Deception

Dec 09, 2022

Abstract: This research revisits the classic Turing test and compares recent large language models such as ChatGPT for their abilities to reproduce human-level comprehension and compelling text generation. Two task challenges, summarization and question answering, prompt ChatGPT to produce original content (98-99%) from a single text entry and from sequential questions originally posed by Turing in 1950. The question of a machine fooling a human judge recedes in this work relative to the question of "how would one prove it?" The original contribution of the work is a metric and simple grammatical set for understanding the writing mechanics of chatbots, evaluating their readability and statistical clarity, engagement, delivery, and overall quality. While Turing's original prose scores at least 14% below the machine-generated output, the question of whether an algorithm displays hints of Turing's truly original thoughts (the "Lovelace 2.0" test) remains unanswered and potentially unanswerable for now.
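For a sense of what a readability metric computes, here is the standard Flesch Reading Ease score, 206.835 - 1.015 x (words / sentences) - 84.6 x (syllables / words), in Python. This is an assumption for illustration: the abstract does not say which readability formula the paper uses, and the vowel-group syllable counter below is a rough heuristic rather than a dictionary lookup.

```python
import re

def count_syllables(word: str) -> int:
    """Heuristic: count vowel groups, discounting a silent final 'e'."""
    w = word.lower()
    n = len(re.findall(r"[aeiouy]+", w))
    if n > 1 and w.endswith("e") and not w.endswith("le"):
        n -= 1
    return max(1, n)

def flesch_reading_ease(text: str) -> float:
    """Standard Flesch Reading Ease; higher scores mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text needs at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))

if __name__ == "__main__":
    print(round(flesch_reading_ease("The cat sat on the mat."), 3))
```

Under this implementation, "The cat sat on the mat." (six one-syllable words, one sentence) scores 116.145, while long polysyllabic sentences fall far lower, even below zero.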

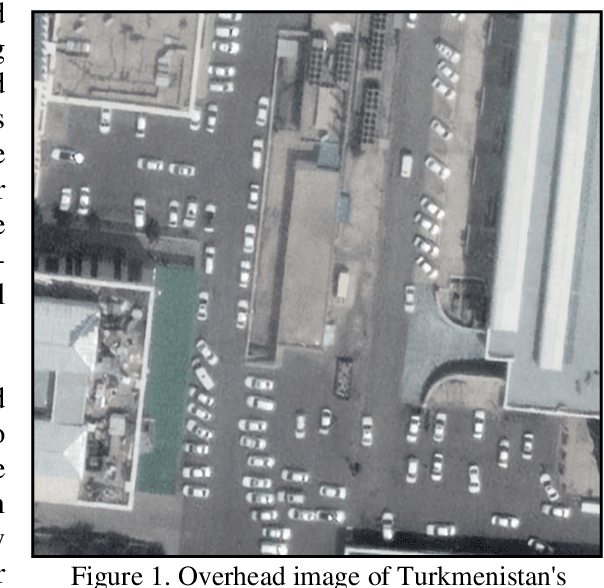

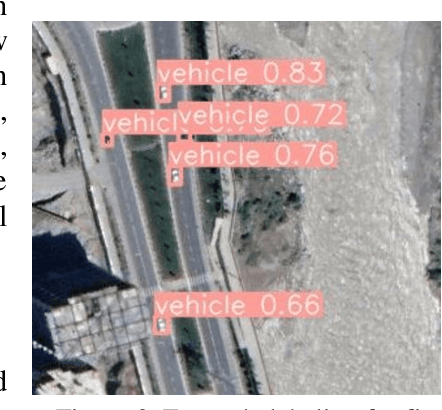

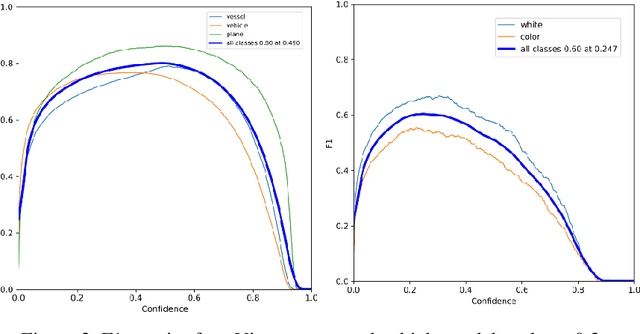

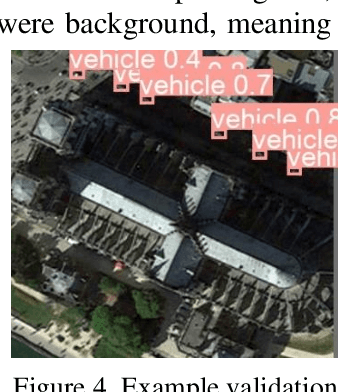

Soft Labels for Rapid Satellite Object Detection

Dec 01, 2022

Abstract: Soft labels in image classification are vector representations of an image's true classification. In this paper, we investigate soft labels in the context of satellite object detection. We propose using detections as the basis for a new dataset of soft labels. Much of the effort in creating a high-quality model lies in gathering and annotating the training data. If a model could generate a dataset for us, we could not only create datasets rapidly but also supplement existing open-source datasets. Using a subset of the xView dataset, we train a YOLOv5 model to detect cars, planes, and ships. We then use that model to generate soft labels for a second training set, train a new model on those labels, and compare it to the original model. We show that soft labels can train a model that is almost as accurate as a model trained on the original data.
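One common way to turn a trained detector's output into training labels for a second dataset is to keep confident detections and write them in the YOLO text format that YOLOv5 reads: one line per object giving the class index, then box center x, center y, width, and height, each normalized by the image size. A minimal sketch, assuming a hypothetical `Detection` record, a hypothetical class mapping, and a 0.5 confidence cutoff; the abstract does not specify the paper's exact soft-label procedure.

```python
from dataclasses import dataclass
from typing import Iterable, List

@dataclass
class Detection:
    class_id: int      # hypothetical mapping, e.g. 0 = car, 1 = plane, 2 = ship
    x_min: float       # pixel coordinates of the predicted box
    y_min: float
    x_max: float
    y_max: float
    confidence: float  # detector score in [0, 1]

def detections_to_yolo_labels(dets: Iterable[Detection], img_w: int, img_h: int,
                              min_conf: float = 0.5) -> List[str]:
    """Convert one image's detections into YOLO-format label lines:
    'class x_center y_center width height', all normalized to [0, 1]."""
    lines = []
    for d in dets:
        if d.confidence < min_conf:
            continue  # drop low-confidence boxes rather than train on them
        xc = (d.x_min + d.x_max) / 2 / img_w
        yc = (d.y_min + d.y_max) / 2 / img_h
        w = (d.x_max - d.x_min) / img_w
        h = (d.y_max - d.y_min) / img_h
        lines.append(f"{d.class_id} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}")
    return lines

if __name__ == "__main__":
    dets = [Detection(0, 100, 200, 300, 400, 0.9), Detection(2, 0, 0, 50, 50, 0.3)]
    print("\n".join(detections_to_yolo_labels(dets, 1000, 1000)))
```

The cutoff trades label volume against label noise; lowering it admits more of the noisy labels that soft labeling deliberately tolerates.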

Soft-labeling Strategies for Rapid Sub-Typing

Sep 23, 2022

Abstract: The challenge of labeling large example datasets for computer vision continues to limit the availability and scope of image repositories. This research provides a new method for automated data collection, curation, labeling, and iterative training with minimal human intervention for the case of overhead satellite imagery and object detection. At this new operational scale, the method scanned an entire city (68 square miles) in a grid search and predicted car color from space observations. A partially trained YOLOv5 model served as an initial inference seed to output further, more refined model predictions in iterative cycles. Soft labeling here refers to accepting label noise as a potentially valuable augmentation that reduces overfitting and improves generalization to previously unseen test data. The approach takes advantage of a real-world instance where a cropped image of a car can automatically receive sub-type information (white or colorful) from pixel values alone, thus completing an end-to-end pipeline without overdependence on human labor.
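The white-versus-colorful sub-typing can be approximated from pixel values alone by converting each pixel to HSV: white paint has low saturation and high brightness, while colored paint has high saturation. A sketch using Python's standard `colorsys` module; the thresholds, the choice to return `None` for dark or gray crops, and the name `car_subtype` are assumptions, not values from the paper.

```python
import colorsys
from typing import Iterable, Optional, Tuple

RGB = Tuple[int, int, int]  # 8-bit channel values

def car_subtype(pixels: Iterable[RGB], max_white_sat: float = 0.2,
                min_white_val: float = 0.7, min_color_sat: float = 0.35) -> Optional[str]:
    """Label a cropped car as 'white' or 'colorful' from mean HSV saturation and
    value; return None when neither rule fires (e.g. dark or gray crops)."""
    sats, vals = [], []
    for r, g, b in pixels:
        _, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        sats.append(s)
        vals.append(v)
    if not sats:
        raise ValueError("empty crop")
    mean_s = sum(sats) / len(sats)
    mean_v = sum(vals) / len(vals)
    if mean_s <= max_white_sat and mean_v >= min_white_val:
        return "white"
    if mean_s >= min_color_sat:
        return "colorful"
    return None

if __name__ == "__main__":
    print(car_subtype([(245, 245, 245)] * 16), car_subtype([(200, 30, 30)] * 16))
```

Averaging over the whole crop also mixes in road and shadow pixels, so labels from a rule like this are noisy, which is the kind of label noise the soft-labeling approach accepts.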

Physical Systems Modeled Without Physical Laws

Jul 26, 2022

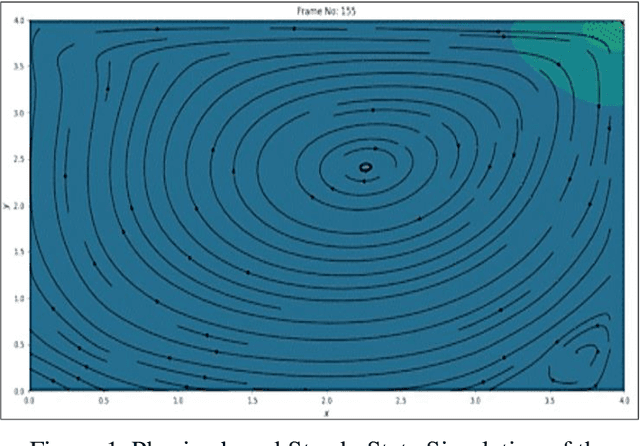

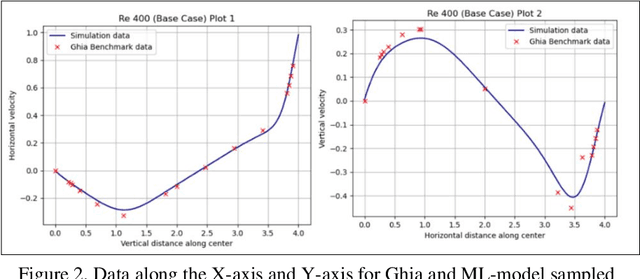

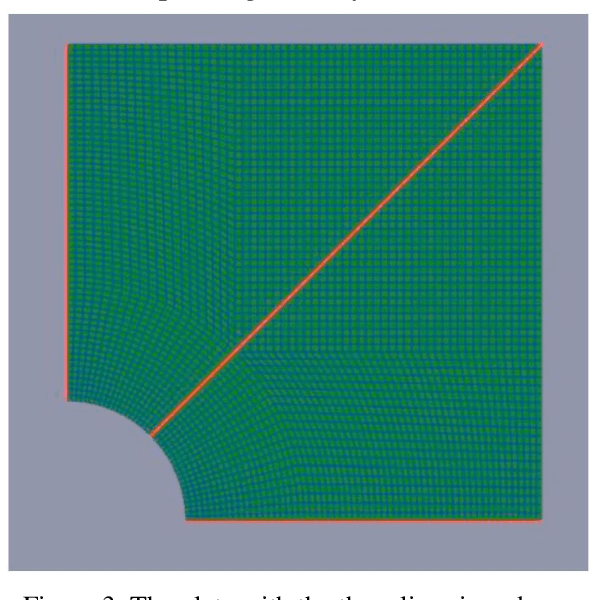

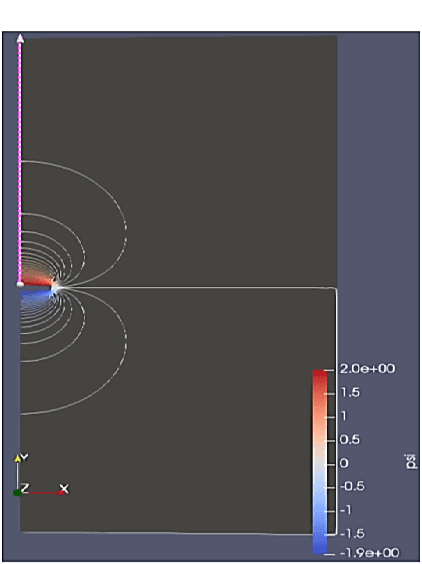

Abstract: Physics-based simulations typically combine complex differential equations with many scientific and geometric inputs. Our work gathers data from those simulations and tests how well tree-based machine learning methods can emulate the desired outputs without "knowing" the complex physics behind the simulations. The selected physics-based simulations included Navier-Stokes, stress analysis, and electromagnetic field lines to benchmark performance as numerical and statistical algorithms. We focus specifically on predicting spatial-temporal data between two simulation outputs and on increasing spatial resolution to generalize the physics predictions to finer test grids without the computational cost of repeating the numerical calculation.
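As a toy illustration of a tree-based emulator that never sees the governing equations, the sketch below fits a small regression tree (greedy CART with squared-error splits) to values sampled on a coarse 11 x 11 grid and evaluates it on a finer 21 x 21 grid. The field x^2 + y is a hypothetical stand-in for a simulation output, not Navier-Stokes or stress data, and the depth and leaf-size limits are arbitrary choices.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]

@dataclass
class Node:
    value: float                      # mean target of training points in this region
    feature: int = -1                 # 0 -> x, 1 -> y; -1 marks a leaf
    threshold: float = 0.0
    left: Optional["Node"] = None
    right: Optional["Node"] = None

def _sse(ys: Sequence[float]) -> float:
    if not ys:
        return 0.0
    m = sum(ys) / len(ys)
    return sum((v - m) ** 2 for v in ys)

def build_tree(X: List[Point], y: List[float], depth: int = 0,
               max_depth: int = 6, min_leaf: int = 2) -> Node:
    """Greedy CART regression: split on the axis/threshold that minimizes squared error."""
    node = Node(value=sum(y) / len(y))
    if depth >= max_depth or len(y) < 2 * min_leaf:
        return node
    best = None
    for f in range(2):
        for t in sorted({p[f] for p in X}):
            li = [i for i, p in enumerate(X) if p[f] <= t]
            ri = [i for i, p in enumerate(X) if p[f] > t]
            if len(li) < min_leaf or len(ri) < min_leaf:
                continue
            cost = _sse([y[i] for i in li]) + _sse([y[i] for i in ri])
            if best is None or cost < best[0]:
                best = (cost, f, t, li, ri)
    if best is None or best[0] >= _sse(y):
        return node  # no split reduces the error
    _, f, t, li, ri = best
    node.feature, node.threshold = f, t
    node.left = build_tree([X[i] for i in li], [y[i] for i in li], depth + 1, max_depth, min_leaf)
    node.right = build_tree([X[i] for i in ri], [y[i] for i in ri], depth + 1, max_depth, min_leaf)
    return node

def predict(node: Node, p: Point) -> float:
    while node.left is not None and node.right is not None:
        node = node.left if p[node.feature] <= node.threshold else node.right
    return node.value

def field(x: float, y: float) -> float:
    """Hypothetical stand-in for a simulation output (not an actual solver)."""
    return x * x + y

if __name__ == "__main__":
    coarse = [(i / 10, j / 10) for i in range(11) for j in range(11)]
    tree = build_tree(coarse, [field(*p) for p in coarse])
    fine = [(i / 20, j / 20) for i in range(21) for j in range(21)]
    mae = sum(abs(predict(tree, p) - field(*p)) for p in fine) / len(fine)
    print(f"mean absolute error on the finer grid: {mae:.4f}")
```

A piecewise-constant tree cannot extrapolate or produce smooth gradients, so finer-grid predictions inherit the coarse grid's step structure; that is the trade-off for skipping a new numerical solve.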

Word Play for Playing Othello (Reverses)

Jul 18, 2022

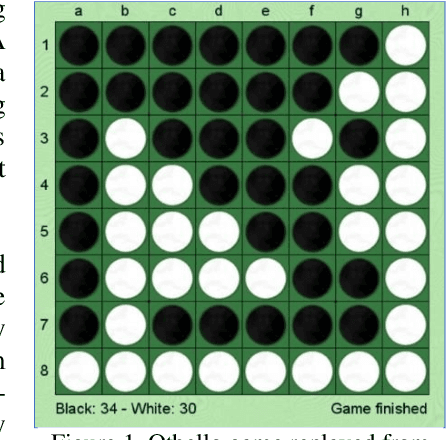

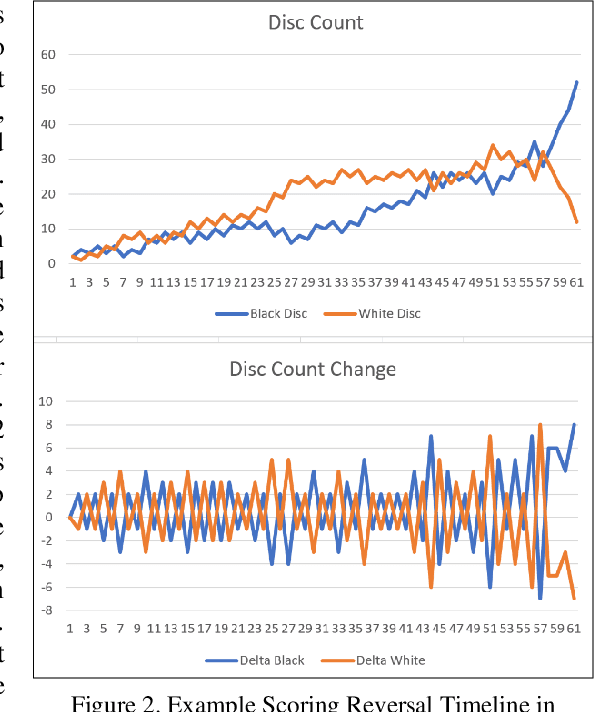

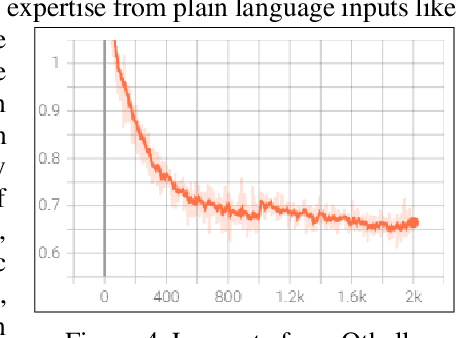

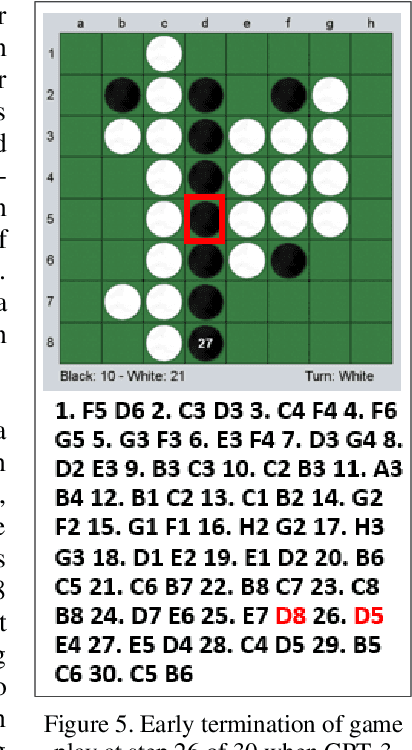

Abstract: Language models like OpenAI's Generative Pre-Trained Transformers (GPT-2/3) capture the long-term correlations needed to generate text in a variety of domains (such as language translation) and, more recently, in gameplay (chess, Go, and checkers). The present research applies both the larger (GPT-3) and smaller (GPT-2) language models to explore complex strategies for the game of Othello (or Reversi). Given the game rules for rapid reversals of fortune, the language model not only serves as a candidate predictor of the next move based on previous moves but also avoids sparse rewards in gameplay. The language model automatically captures or emulates championship-level strategies. The fine-tuned GPT-2 model generates Othello games ranging from 13% to 71% completion, while the larger GPT-3 model reaches 41% of a complete game. As with previous work on chess and Go, these language models offer a novel way to generate plausible game archives, particularly for comparing opening moves across a larger sample than humans could explore. A primary contribution of these models is doubling the previous record for player archives (120,000 human games over 45 years, 1977-2022), thus supplying the research community with more diverse and original strategies for sampling with other reinforcement learning techniques.
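A completion percentage like the ones quoted above needs a rules check on each generated transcript. The sketch below replays a space-separated move string (for example `f5 d6 c3`, black moving first from the standard starting position), stops at the first illegal move, and reports the legal prefix as a share of the 60 moves a full game can hold. The transcript format, the function names, and this definition of completion are assumptions; the paper may measure completion differently.

```python
from typing import List, Optional, Tuple

Board = List[List[str]]
DIRECTIONS = [(-1, -1), (-1, 0), (-1, 1), (0, -1), (0, 1), (1, -1), (1, 0), (1, 1)]
MAX_MOVES = 60  # 64 squares minus the 4 starting discs

def initial_board() -> Board:
    # board[row][col]; row 0 is rank 1, col 0 is file 'a'.
    board = [["." for _ in range(8)] for _ in range(8)]
    board[3][3] = board[4][4] = "W"  # d4, e5
    board[3][4] = board[4][3] = "B"  # e4, d5
    return board

def parse(token: str) -> Optional[Tuple[int, int]]:
    """Map a square like 'f5' to (row, col); None if malformed."""
    if len(token) != 2 or token[0] not in "abcdefgh" or token[1] not in "12345678":
        return None
    return int(token[1]) - 1, ord(token[0]) - ord("a")

def flips(board: Board, row: int, col: int, player: str) -> List[Tuple[int, int]]:
    """Opponent discs captured by playing at (row, col); empty list means illegal."""
    if board[row][col] != ".":
        return []
    opponent = "W" if player == "B" else "B"
    captured: List[Tuple[int, int]] = []
    for dr, dc in DIRECTIONS:
        r, c, line = row + dr, col + dc, []
        while 0 <= r < 8 and 0 <= c < 8 and board[r][c] == opponent:
            line.append((r, c))
            r, c = r + dr, c + dc
        if line and 0 <= r < 8 and 0 <= c < 8 and board[r][c] == player:
            captured.extend(line)
    return captured

def has_move(board: Board, player: str) -> bool:
    return any(flips(board, r, c, player) for r in range(8) for c in range(8))

def legal_prefix_length(transcript: str) -> int:
    """Count moves replayed legally (black first) before the first illegal token."""
    board, player, count = initial_board(), "B", 0
    for token in transcript.split():
        if not has_move(board, player):  # forced pass
            player = "W" if player == "B" else "B"
            if not has_move(board, player):  # neither side can move: game over
                break
        square = parse(token.lower())
        if square is None:
            break
        row, col = square
        captured = flips(board, row, col, player)
        if not captured:
            break
        board[row][col] = player
        for r, c in captured:
            board[r][c] = player
        count += 1
        player = "W" if player == "B" else "B"
    return count

def completion_percent(transcript: str) -> float:
    return 100.0 * legal_prefix_length(transcript) / MAX_MOVES

if __name__ == "__main__":
    print(completion_percent("f5 d6 c3"))
```

Here `legal_prefix_length("f5 d6 c3")` returns 3, so `completion_percent` reports 5.0; a transcript that repeats an occupied square stops counting at that point.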
