David Camarillo

Differences between Two Maximal Principal Strain Rate Calculation Schemes in Traumatic Brain Analysis with in-vivo and in-silico Datasets

Sep 12, 2024

Abstract: Brain deformation caused by a head impact leads to traumatic brain injury (TBI). The maximum principal strain (MPS) has been used to measure the extent of brain deformation and predict injury, and recent evidence indicates that incorporating the maximum principal strain rate (MPSR) and the product of MPS and MPSR, denoted MPSxSR, improves the accuracy of TBI prediction. However, the calculation of MPSR is ambiguous. Two schemes have been used: one (MPSR1) takes the time derivative of MPS, and the other (MPSR2) takes the first eigenvalue of the strain rate tensor. Both MPSR1 and MPSR2 have been applied in previous studies to predict TBI. To quantify the discrepancies between the two schemes, we compared them across nine in-vivo and in-silico head impact datasets and found that the 95th-percentile MPSR1 (95MPSR1) was 5.87% larger than 95MPSR2, and 95MPSxSR1 was 2.55% larger than 95MPSxSR2. Across every element in all head impacts, MPSR1 was 8.28% smaller than MPSR2, and MPSxSR1 was 8.11% smaller than MPSxSR2. Furthermore, logistic regression models were trained to predict TBI from MPSR (or MPSxSR), and no significant difference in predictability was observed across the different variables. The consequence of misusing MPSR and MPSxSR thresholds (i.e., comparing a 95MPSR1 threshold with a 95MPSR2 value to determine whether an impact is injurious) was also investigated, and the resulting false rates were around 1%. The evidence suggests that the two schemes do not differ significantly in detecting TBI.
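The two MPSR schemes can be contrasted on a single finite-element strain history. The NumPy code below is a minimal sketch, not the authors' implementation. It assumes a uniformly sampled symmetric strain tensor per element, approximates the strain rate tensor by finite-differencing that tensor in time, and takes MPSxSR as the peak of the pointwise product MPS × MPSR. The papers' exact strain measure, rate definition and post-processing may differ. The function names and the two test histories are hypothetical.

```python
import numpy as np


def mpsr_schemes(strain: np.ndarray, dt: float):
    """Return per-step MPS, MPSR1 and MPSR2 for one element.

    strain: shape (T, 3, 3), a symmetric strain tensor at each time step
            (assumed uniformly sampled).
    dt:     sampling interval.
    """
    # MPS(t): largest eigenvalue of the strain tensor at each step.
    # eigvalsh sorts eigenvalues in ascending order, so [:, -1] is the max.
    mps = np.linalg.eigvalsh(strain)[:, -1]

    # Scheme 1 (MPSR1): time derivative of the scalar MPS history.
    mpsr1 = np.gradient(mps, dt)

    # Scheme 2 (MPSR2): first (largest) eigenvalue of the strain rate tensor.
    # Assumption: the rate tensor is approximated by finite-differencing the
    # strain tensor in time.
    strain_rate = np.gradient(strain, dt, axis=0)
    mpsr2 = np.linalg.eigvalsh(strain_rate)[:, -1]
    return mps, mpsr1, mpsr2


def peak_metrics(strain: np.ndarray, dt: float) -> dict:
    """Peak-over-time MPSR and MPSxSR for both schemes (one element)."""
    mps, mpsr1, mpsr2 = mpsr_schemes(strain, dt)
    return {
        "MPSR1": mpsr1.max(),
        "MPSR2": mpsr2.max(),
        # Assumption: MPSxSR is the peak of the pointwise product MPS * MPSR.
        "MPSxSR1": (mps * mpsr1).max(),
        "MPSxSR2": (mps * mpsr2).max(),
    }


# Hypothetical test histories (not data from the study).
dt = 1e-3
t = np.arange(1000) * dt

# Element A: constant principal strain s whose principal direction rotates
# in the x-y plane at angular rate omega.
s, omega = 0.1, 1.0
strain_rot = np.zeros((t.size, 3, 3))
strain_rot[:, 0, 0] = s * np.cos(2 * omega * t)
strain_rot[:, 1, 1] = -s * np.cos(2 * omega * t)
strain_rot[:, 0, 1] = strain_rot[:, 1, 0] = s * np.sin(2 * omega * t)

# Element B: uniaxial stretch along x with a fixed principal direction.
strain_uni = np.zeros((t.size, 3, 3))
strain_uni[:, 0, 0] = 0.5 * t

rotating = peak_metrics(strain_rot, dt)
uniaxial = peak_metrics(strain_uni, dt)

# 95th percentile across elements (only two here, for illustration).
p95_mpsr1 = np.percentile([rotating["MPSR1"], uniaxial["MPSR1"]], 95)
p95_mpsr2 = np.percentile([rotating["MPSR2"], uniaxial["MPSR2"]], 95)
```

In the rotating element MPS stays at s, so MPSR1 is essentially zero. However, the strain rate tensor still has a first eigenvalue of about 2sω = 0.2, so MPSR2 is nonzero. In the uniaxial element the principal direction is fixed, and both schemes give 0.5. These toy cases only show how the two definitions can diverge. They are not meant to reproduce the percentages reported above.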

Predictive Factors of Kinematics in Traumatic Brain Injury from Head Impacts Based on Statistical Interpretation

Feb 13, 2021

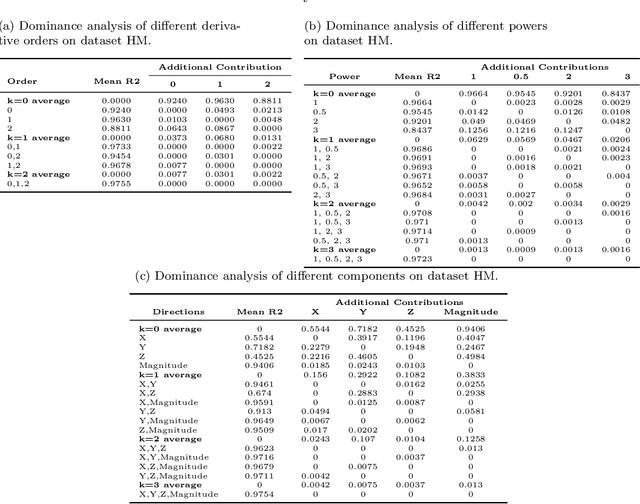

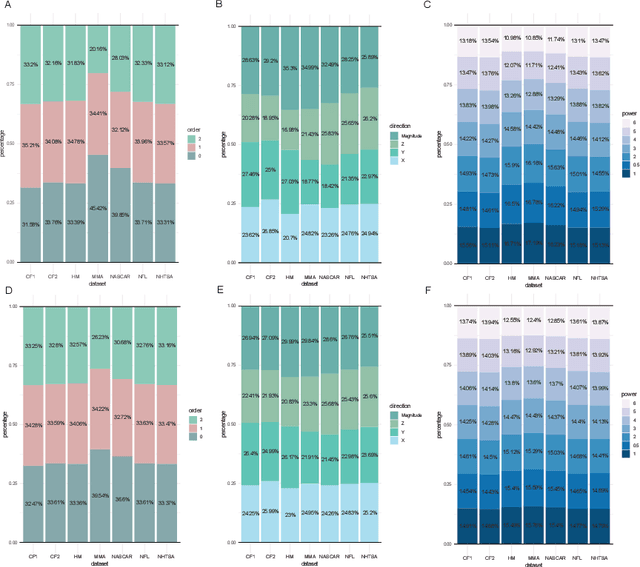

Abstract: Brain tissue deformation resulting from head impacts is primarily caused by rotation and can lead to traumatic brain injury. To quantify brain injury risk from measurements of head accelerations, various brain injury criteria based on different factors of these kinematics have been developed. To better design brain injury criteria, the predictive power of rotational kinematic factors, which differ in 1) the derivative order, 2) the direction, and 3) the power of the angular velocity, was analyzed on datasets including laboratory impacts, American football, mixed martial arts (MMA), NHTSA automobile crashworthiness tests, and NASCAR crash events. Ordinary least squares (OLS) regressions were fitted from the kinematic factors to the 95th-percentile maximum principal strain (MPS95), and we compared zero-order correlation coefficients, structure coefficients, commonality analysis, and dominance analysis. Angular acceleration, the magnitude, and the first-power factors showed the highest predictive power for the laboratory and American football impacts, with few exceptions (angular velocity for the MMA and NASCAR impacts). The predictive power of the kinematics in the three directions (x: posterior-to-anterior, y: left-to-right, z: superior-to-inferior) varied across sports and types of head impact.
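The regression-based comparisons named above can be sketched for one dataset. The NumPy example below is a minimal sketch under stated assumptions. It fits OLS models with an intercept, then computes zero-order correlations r(x_j, y) and structure coefficients r(x_j, y_hat), which equal r(x_j, y)/R for OLS. It also computes general dominance weights by averaging each factor's incremental R^2 over subsets of the other factors. The data are random stand-ins, not kinematics from the study. The function names are hypothetical, and commonality analysis is not shown.

```python
from itertools import combinations

import numpy as np


def ols_fit(X: np.ndarray, y: np.ndarray, cols) -> np.ndarray:
    """Fitted values of an OLS regression (with intercept) of y on X[:, cols]."""
    A = np.column_stack([np.ones(len(y))] + [X[:, c] for c in cols])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return A @ coef


def r_squared(X, y, cols) -> float:
    """Coefficient of determination for the OLS model using the given columns."""
    if len(cols) == 0:
        return 0.0  # intercept-only model explains no variance
    resid = y - ols_fit(X, y, cols)
    return 1.0 - (resid @ resid) / np.sum((y - y.mean()) ** 2)


def zero_order_and_structure(X, y):
    """Zero-order r(x_j, y) and structure coefficients r(x_j, y_hat)."""
    p = X.shape[1]
    y_hat = ols_fit(X, y, range(p))
    r = np.array([np.corrcoef(X[:, j], y)[0, 1] for j in range(p)])
    rs = np.array([np.corrcoef(X[:, j], y_hat)[0, 1] for j in range(p)])
    return r, rs


def general_dominance(X, y):
    """General dominance weights: each predictor's incremental R^2, averaged
    over all subsets of the other predictors of each size, then over sizes."""
    p = X.shape[1]
    weights = np.zeros(p)
    for j in range(p):
        others = [k for k in range(p) if k != j]
        mean_gain_by_size = []
        for size in range(p):
            gains = [
                r_squared(X, y, list(S) + [j]) - r_squared(X, y, list(S))
                for S in combinations(others, size)
            ]
            mean_gain_by_size.append(np.mean(gains))
        weights[j] = np.mean(mean_gain_by_size)
    return weights


# Hypothetical stand-in data: three kinematic factors and an MPS95-like response.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = 0.5 * X[:, 0] + 0.2 * X[:, 1] + rng.normal(scale=0.3, size=200)

r, rs = zero_order_and_structure(X, y)
dom = general_dominance(X, y)
r2_full = r_squared(X, y, [0, 1, 2])
```

Because the general dominance weights average incremental R^2 in this way, they sum to the full-model R^2. This makes them a convenient way to apportion explained variance when the factors are correlated. The number of subset fits grows as 2^p, which stays manageable for a handful of kinematic factors.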

OffsetNet: Deep Learning for Localization in the Lung using Rendered Images

Sep 15, 2018

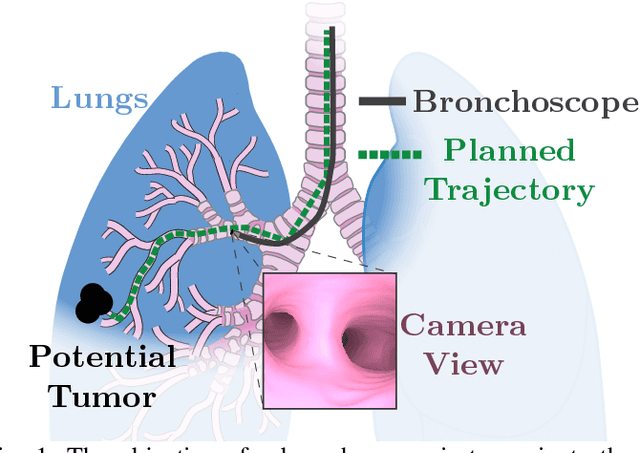

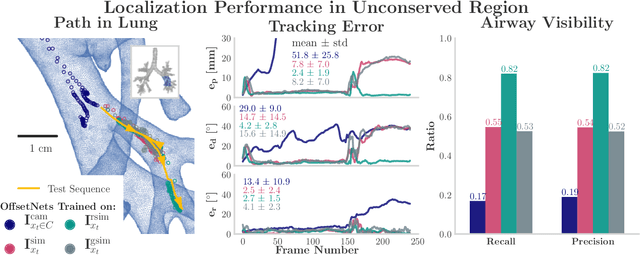

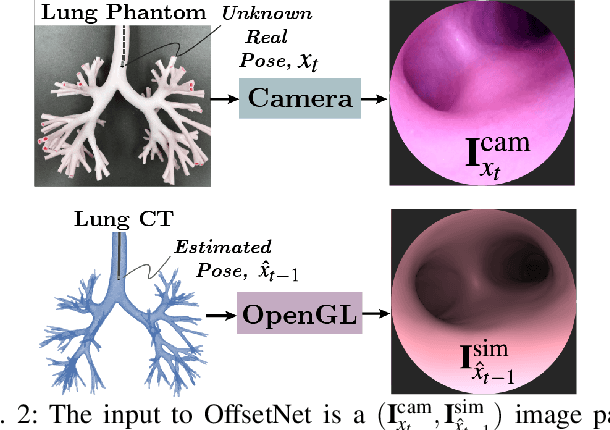

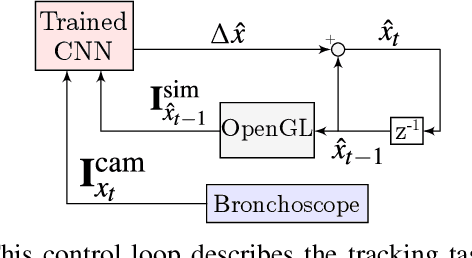

Abstract: Navigating surgical tools in the dynamic and tortuous anatomy of the lung's airways requires accurate, real-time localization of the tools with respect to the preoperative scan of the anatomy. Such localization can inform human operators or enable closed-loop control by autonomous agents, which would require accuracy not yet reported in the literature. In this paper, we introduce a deep learning architecture, called OffsetNet, to accurately localize a bronchoscope in the lung in real time. After training on only 30 minutes of recorded camera images in conserved regions of a lung phantom, OffsetNet tracks the bronchoscope's motion on a held-out recording through these same regions at an update rate of 47 Hz and an average position error of 1.4 mm. Because this model performs poorly in less conserved regions, we augment the training dataset with simulated images from these regions. To bridge the gap between the camera and simulated domains, we implement domain randomization and a generative adversarial network (GAN). After training on simulated images, OffsetNet tracks the bronchoscope's motion in less conserved regions at an average position error of 2.4 mm, which meets conservative thresholds required for successful tracking.
