Danijela Cabric

Distributed Transmit Beamforming: Analyzing the Maximum Communication Range

May 10, 2022

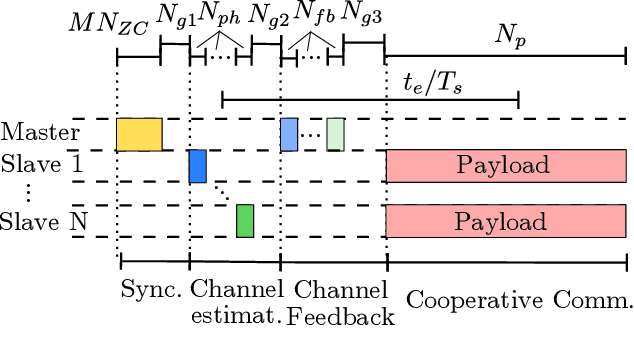

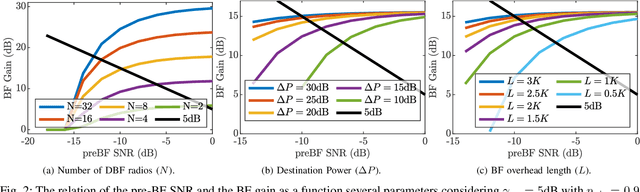

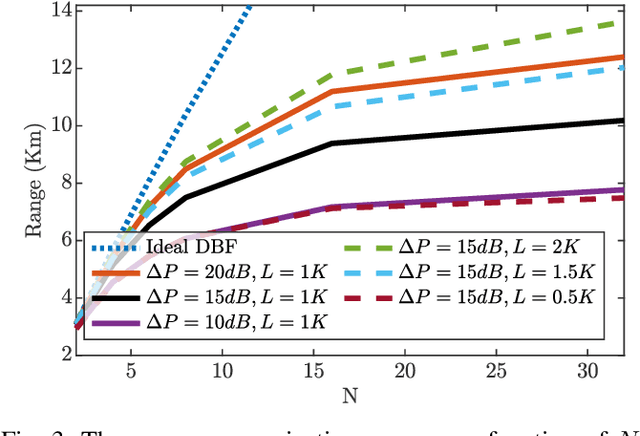

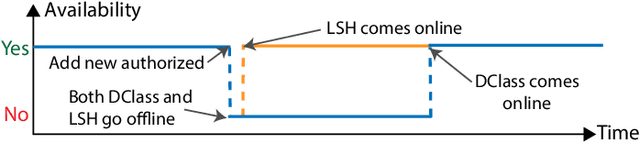

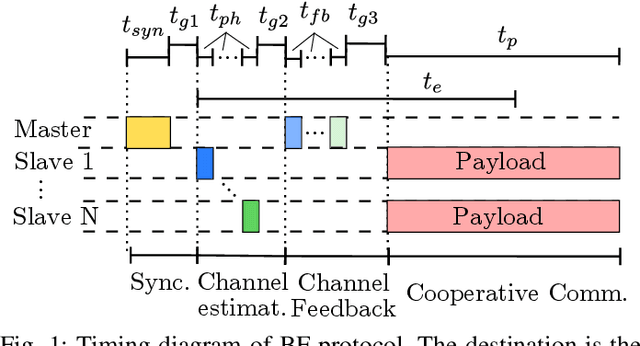

Abstract: Distributed transmit beamforming is a technique that adjusts the signals from cooperating radios so that they combine coherently at a destination radio. To achieve coherent combining, the radios can exchange preambles with the destination for frequency synchronization and signal phase adjustment. At the destination, coherent combining leads to a beamforming (BF) gain. The BF gain can extend the communication range by countering the path loss, which increases with the distance from the destination. While ideally the maximum range can be trivially calculated from the BF gain, in reality the BF gain depends on the distance: at a larger distance, the lower SNR of the exchanged preambles causes higher synchronization and phase estimation errors, which in turn degrade the BF gain. In this paper, considering the BF gain degradation for a destination-led BF protocol, we calculate the maximum communication range that realizes a desired post-BF SNR by analyzing the relation between the pre-BF SNR and the BF gain. We show that increasing the preamble lengths or the destination power can significantly increase the maximum range, whereas increasing only the number of radios yields diminishing range extension.
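The coupling between distance and BF gain described in this abstract can be illustrated with a toy model. The sketch below is not the paper's analysis: it assumes equal-amplitude radios, i.i.d. zero-mean Gaussian phase errors whose variance follows the standard single-tone phase-estimation bound 1/(2·L·SNR), and a simple power-law path loss. All function and parameter names are hypothetical.

```python
import math

def expected_bf_gain(num_radios, phase_error_std_rad):
    """Expected power gain over one radio for equal-amplitude signals with
    i.i.d. zero-mean Gaussian phase errors (a modeling assumption)."""
    n = num_radios
    # E|sum exp(j*phi)|^2 = n + n*(n-1)*exp(-sigma^2) for Gaussian phi.
    return n + n * (n - 1) * math.exp(-phase_error_std_rad ** 2)

def post_bf_snr(distance, num_radios, preamble_len, snr_at_1m, exponent=2.0):
    """Post-BF SNR (linear) at a given distance under the toy model."""
    pre_bf_snr = snr_at_1m * distance ** (-exponent)    # power-law path loss
    phase_var = 1.0 / (2.0 * preamble_len * pre_bf_snr)  # assumed error model
    return pre_bf_snr * expected_bf_gain(num_radios, math.sqrt(phase_var))

def max_range(target_snr, num_radios, preamble_len, snr_at_1m, exponent=2.0):
    """Largest distance whose post-BF SNR still meets target_snr (bisection)."""
    args = (num_radios, preamble_len, snr_at_1m, exponent)
    lo, hi = 1e-3, 1.0
    if post_bf_snr(lo, *args) < target_snr:
        return 0.0  # target not reachable even at very short range
    while post_bf_snr(hi, *args) >= target_snr:
        hi *= 2.0   # post-BF SNR decreases with distance, so this terminates
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if post_bf_snr(mid, *args) >= target_snr:
            lo = mid
        else:
            hi = mid
    return lo
```

In this toy model, calling `max_range` with a longer `preamble_len` returns a larger distance, because the phase error variance shrinks and the gain stays closer to its ideal value of N².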

Energy Efficiency Tradeoffs for Sub-THz Multi-User MIMO Base Station Receivers

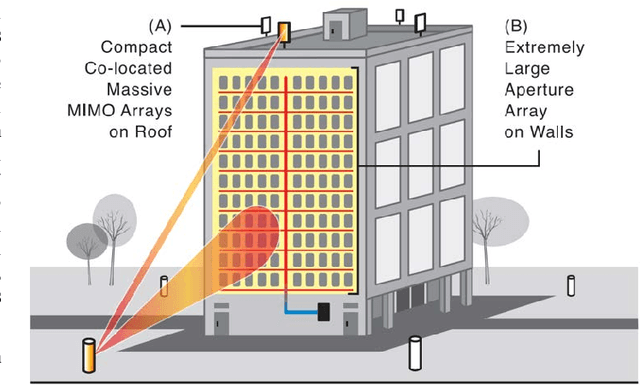

Feb 16, 2022

Abstract: Sub-terahertz (sub-THz) antenna array architectures significantly impact power usage and communications capacity in multi-user multiple-input multiple-output (MU-MIMO) systems. In this work, we compare the energy efficiency and spectral efficiency of three MU-MIMO capable array architectures for base station receivers. We provide a sub-THz circuits power analysis, based on our review of state-of-the-art D-band and G-band components, and compare communications capabilities through wideband simulations. Our analysis reveals that digital arrays can provide the highest spectral efficiency and energy efficiency, due to the high power consumption of sub-THz active phase shifters or when SNR and system spectral efficiency requirements are high.

WiSig: A Large-Scale WiFi Signal Dataset for Receiver and Channel Agnostic RF Fingerprinting

Jan 12, 2022

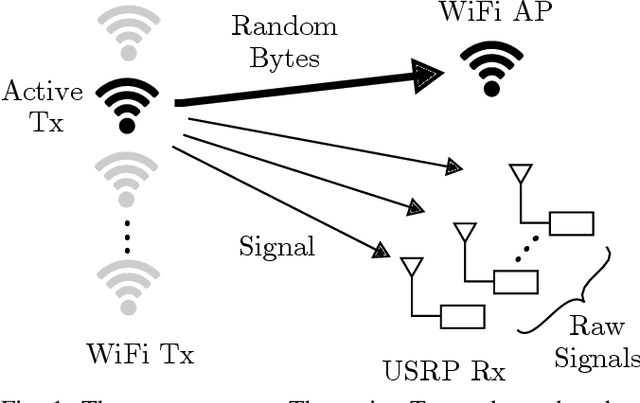

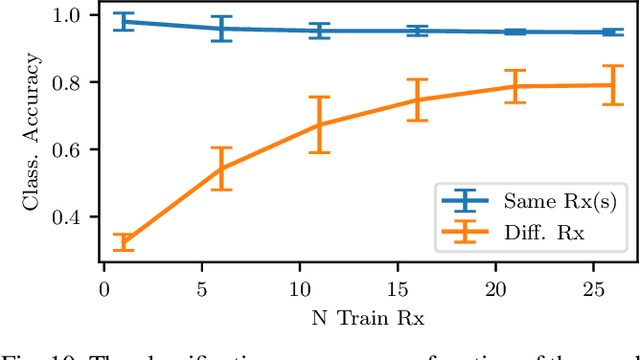

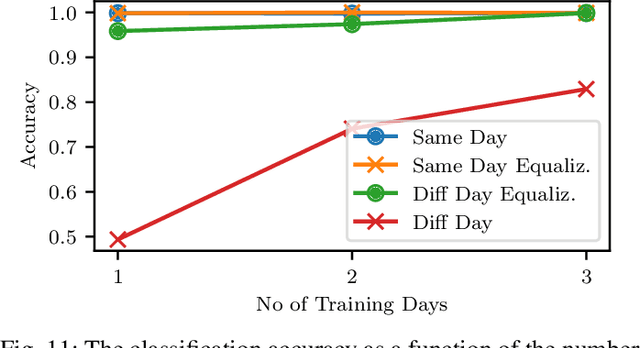

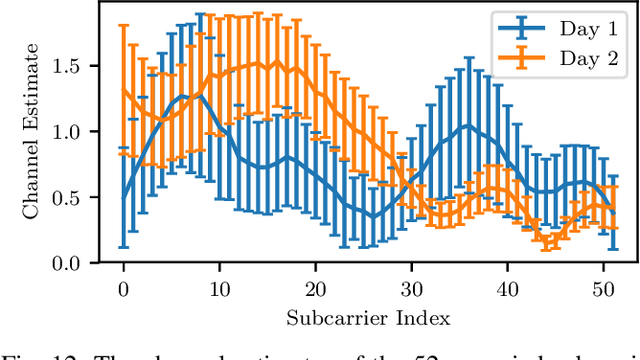

Abstract: RF fingerprinting leverages circuit-level variability of transmitters to identify them using the signals they send. Signals used for identification are impacted by the wireless channel and receiver circuitry, creating additional impairments that can confuse transmitter identification. Eliminating these impairments, or even just evaluating them, requires data captured over a prolonged period of time, using many spatially separated transmitters and receivers. In this paper, we present WiSig, a large-scale WiFi dataset containing 10 million packets captured from 174 off-the-shelf WiFi transmitters and 41 USRP receivers over 4 captures spanning a month. WiSig is publicly available, not just as raw captures, but as conveniently pre-processed subsets of limited size, along with scripts and examples. A preliminary evaluation performed using WiSig shows that changing receivers, or using signals captured on a different day, can significantly degrade a trained classifier's performance. While capturing data over more days or more receivers limits the degradation, it is not always feasible, and novel data-driven approaches are needed. WiSig provides the data to develop and evaluate these approaches towards channel- and receiver-agnostic transmitter fingerprinting.

Multi-Mode Spatial Signal Processor with Rainbow-like Fast Beam Training and Wideband Communications using True-Time-Delay Arrays

Jan 08, 2022

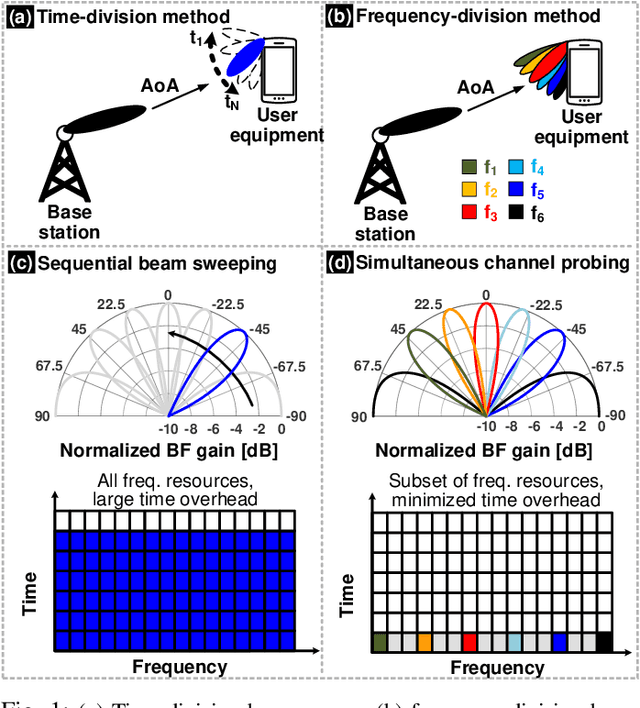

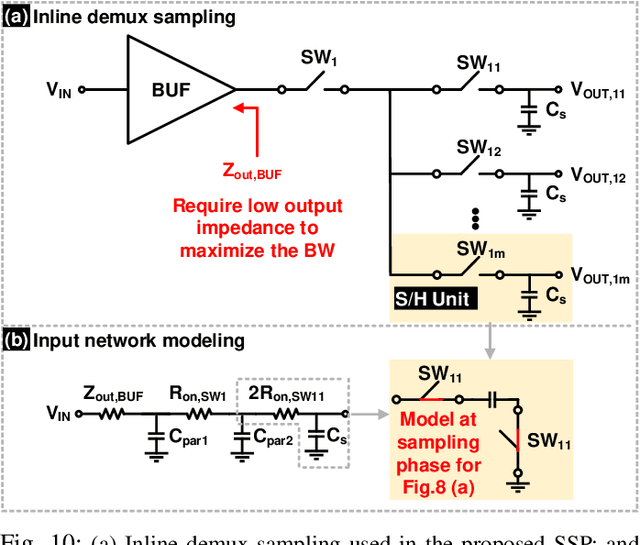

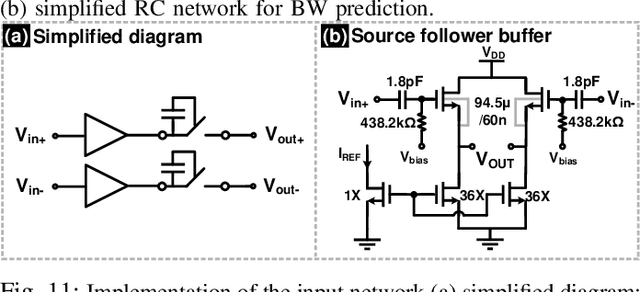

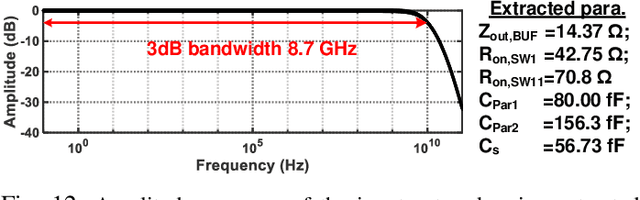

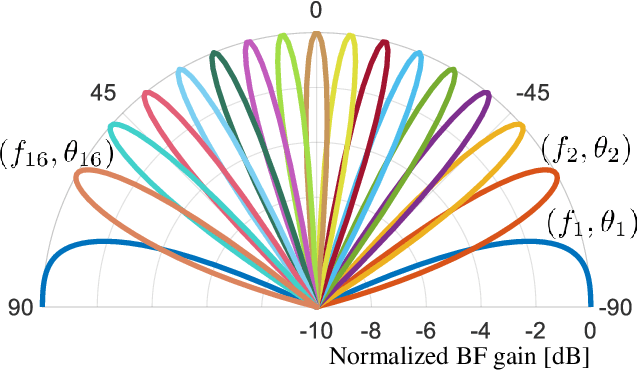

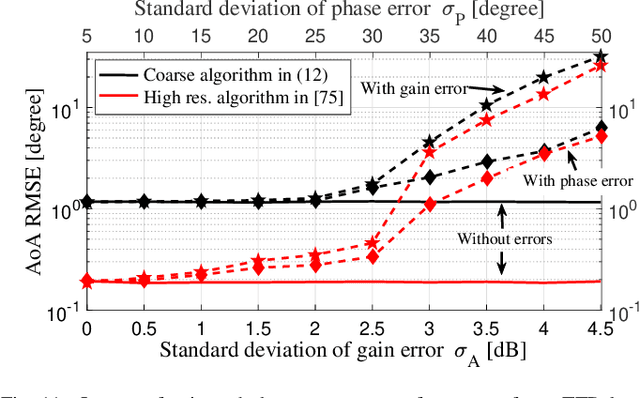

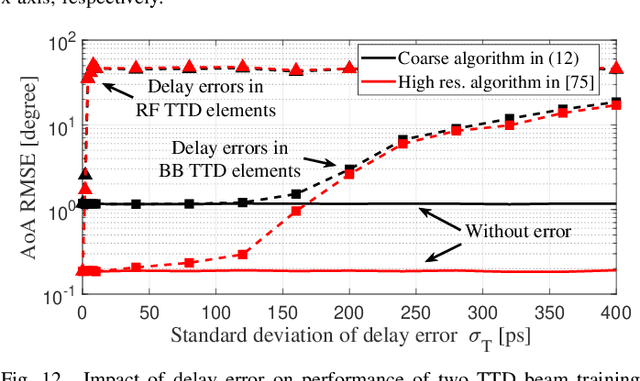

Abstract: Initial access in millimeter-wave (mmW) wireless is critical to the successful realization of fifth-generation (5G) wireless networks and beyond. Limited bandwidth in existing standards and the use of phase shifters in analog/hybrid phased-antenna arrays (PAA) are not suited for these emerging standards, which demand low-latency direction finding. This work proposes a reconfigurable true-time-delay (TTD) based spatial signal processor (SSP) with a frequency-division beam training methodology and wideband beam-squint-less data communications. A discrete-time delay-compensated clocking technique is used to support 800 MHz bandwidth with a large unity-gain bandwidth ring-amplifier (RAMP)-based signal combiner. To extensively characterize the proposed SSP across different SSP modes and frequency-angle pairs, an automated testbed is developed using computer-vision techniques that significantly speeds up testing and minimizes possible human errors. Using seven levels of time-interleaving for each of the 4 antenna elements, the TTD SSP has a delay range of 3.8 ns over 800 MHz and achieves unique frequency-to-angle mapping in the beam-training mode with nearly 12 dB frequency-independent gain in the beamforming mode. The SSP is prototyped in 65 nm CMOS with an area of 1.98 mm² and consumes only 29 mW excluding buffers. Further, an error vector magnitude (EVM) of 9.8% is realized for 16-QAM modulation at a speed of 122.8 Mb/s.

Wideband Beamforming with Rainbow Beam Training using Reconfigurable True-Time-Delay Arrays for Millimeter-Wave Wireless

Nov 30, 2021

Abstract: A decade of research in integrated true-time-delay arrays has seen organic growth, enabling the realization of wideband beamformers for large arrays with wide aperture widths. This article introduces highly reconfigurable delay elements, implementable at analog or digital baseband, that enable multiple SSP functions including wideband beamforming, wideband interference cancellation, and fast beam training. Details of the beam-training algorithm, system design considerations, system architecture, and circuits with large delay range-to-resolution ratios are presented, leveraging integrated delay compensation techniques. The article lays out the framework for true-time-delay based arrays in next-generation network infrastructure supporting 3D beam training in planar arrays, low-latency massive multiple access, and emerging wireless communications standards.

Real-time Wireless Transmitter Authorization: Adapting to Dynamic Authorized Sets with Information Retrieval

Nov 04, 2021

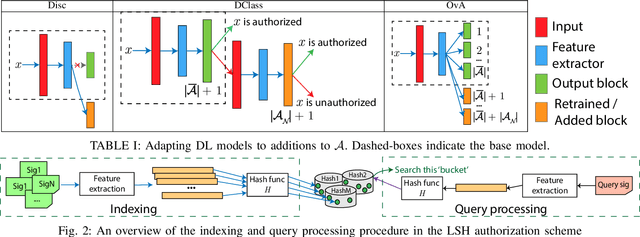

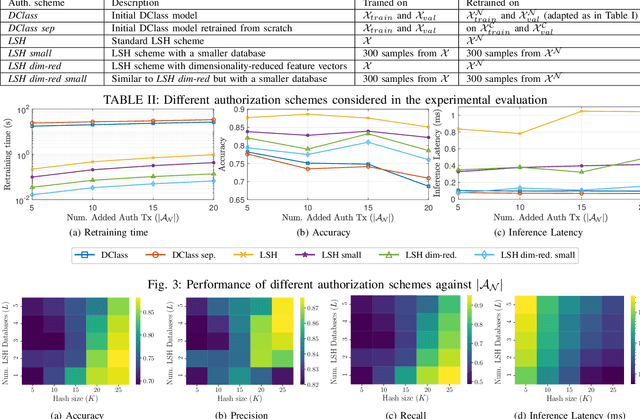

Abstract: As the Internet of Things (IoT) continues to grow, ensuring the security of systems that rely on wireless IoT devices has become critically important. Deep learning-based passive physical layer transmitter authorization systems have been introduced recently for this purpose, as they accommodate the limited computational and power budget of such devices. These systems have been shown to offer excellent outlier detection accuracies when trained and tested on a fixed authorized transmitter set. However, in a real-life deployment, a need may arise for transmitters to be added and removed as the authorized set of transmitters changes. In such cases, the system could experience long down-times, as retraining the underlying deep learning model is often a time-consuming process. In this paper, we draw inspiration from information retrieval to address this problem: by utilizing feature vectors as RF fingerprints, we first demonstrate that training could be simplified to indexing those feature vectors into a database using locality-sensitive hashing (LSH). Then we show that approximate nearest neighbor search could be performed on the database to perform transmitter authorization that matches the accuracy of deep learning models, while allowing for more than 100x faster retraining. Furthermore, dimensionality reduction techniques are used on the feature vectors to show that the authorization latency of our technique could be reduced to approach that of traditional deep learning-based systems.
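The idea of replacing retraining with index updates can be sketched as follows. This is a minimal illustration, not the authors' system: it assumes random-hyperplane LSH with a single hash table, one stored feature vector per transmitter, and a Euclidean distance threshold for rejecting outliers. The class name, parameters, and threshold value are hypothetical.

```python
import math
import random

class LshAuthorizer:
    """Toy transmitter authorizer: feature vectors are indexed into
    LSH buckets, and a query is authorized if a close neighbor is found."""

    def __init__(self, dim, num_planes=8, threshold=0.5, seed=0):
        rng = random.Random(seed)
        # Random hyperplanes define the hash; the sign pattern is the bucket key.
        self.planes = [[rng.gauss(0.0, 1.0) for _ in range(dim)]
                       for _ in range(num_planes)]
        self.threshold = threshold
        self.buckets = {}  # signature -> {tx_id: feature vector}

    def _signature(self, vec):
        return tuple(sum(p * v for p, v in zip(plane, vec)) >= 0.0
                     for plane in self.planes)

    def add(self, tx_id, vec):
        """'Training' is just inserting the fingerprint into its bucket."""
        self.buckets.setdefault(self._signature(vec), {})[tx_id] = list(vec)

    def remove(self, tx_id):
        """De-authorizing a transmitter deletes its entry; no retraining."""
        for bucket in self.buckets.values():
            bucket.pop(tx_id, None)

    def authorize(self, vec):
        """Return the matching transmitter id, or None for an outlier.
        Only the query's own bucket is searched (approximate search)."""
        bucket = self.buckets.get(self._signature(vec), {})
        best = min(bucket.items(),
                   key=lambda item: math.dist(item[1], vec),
                   default=None)
        if best is not None and math.dist(best[1], vec) <= self.threshold:
            return best[0]
        return None
```

Because only one bucket is searched, a query that is close to an indexed vector can still be missed if it falls on the other side of a hyperplane; practical LSH schemes typically use several hash tables to reduce that risk.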

Distributed Transmit Beamforming: Design and Demonstration from the Lab to UAVs

Oct 26, 2021

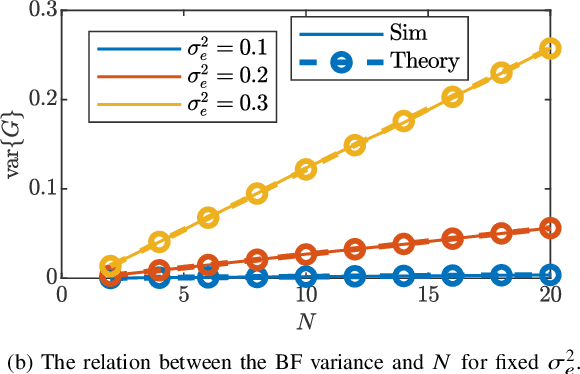

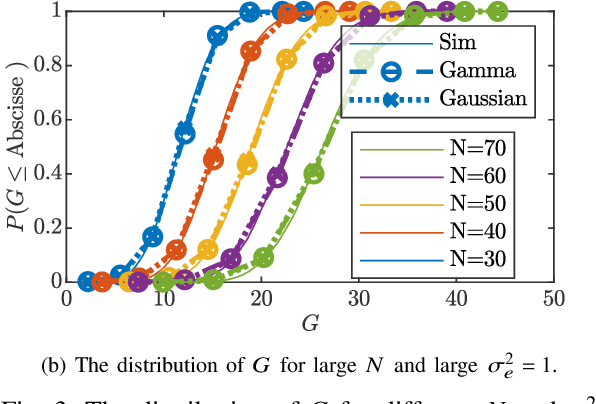

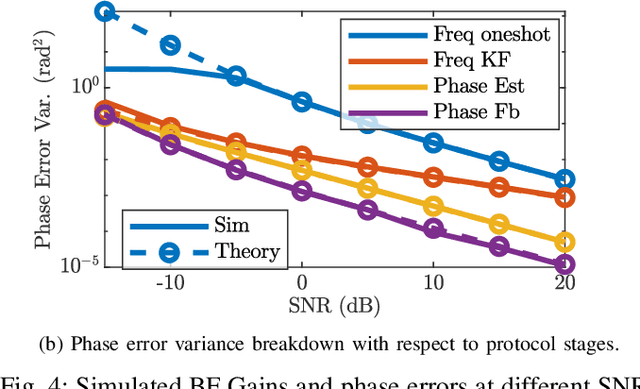

Abstract: Cooperating radios can extend their communication range by adjusting their signals to ensure coherent combining at a destination radio. This technique is called distributed transmit beamforming. Beamforming (BF) relies on the BF radios having frequency-synchronized carriers and phases adjusted for coherent combining. Both requirements are typically met by exchanging preambles with the destination. However, since BF aims to increase the communication range, the individually transmitted preambles are typically at low SNR and their lengths are constrained by the channel coherence time. These noisy preambles lead to errors in frequency and phase estimation, which result in randomly changing BF gains. To build reliable distributed BF systems, the impact of estimation errors on the BF gains needs to be considered in the design. In this work, assuming a destination-led BF protocol and a Kalman filter for frequency tracking, we optimize the number of BF radios and the preamble lengths to achieve reliable BF gain. To do that, we characterize the relations between the BF gain distribution, the channel coherence time, and design parameters like the SNR, preamble lengths, and the number of radios. The proposed relations are verified using simulations and via experiments using software-defined radios in a lab and on UAVs.
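The abstract mentions a Kalman filter for frequency tracking without giving its state model. As a rough illustration only, the sketch below tracks a carrier frequency offset with a scalar Kalman filter under an assumed random-walk model; the function name, the noise variances, and the initial conditions are hypothetical placeholders, not values from the paper.

```python
def track_frequency_offset(measurements_hz, process_var=1e-2,
                           meas_var=2500.0, init_var=1e4):
    """Scalar Kalman filter over noisy frequency-offset estimates (in Hz).

    Assumes the true offset follows a random walk with variance process_var
    per step and each preamble-based estimate has noise variance meas_var.
    Returns the filtered estimate after every measurement.
    """
    estimate, var = 0.0, init_var
    history = []
    for z in measurements_hz:
        var += process_var                # predict: offset may have drifted
        gain = var / (var + meas_var)     # Kalman gain
        estimate += gain * (z - estimate) # update with the new measurement
        var *= (1.0 - gain)               # posterior variance
        history.append(estimate)
    return history
```

A larger `meas_var`, as would be expected for short or low-SNR preambles, lowers the gain, so the filter leans more on its running estimate and reacts more slowly to real frequency drift.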

Machine Learning Assisted Phase-less Millimeter-Wave Beam Alignment in Multipath Channels

Sep 29, 2021

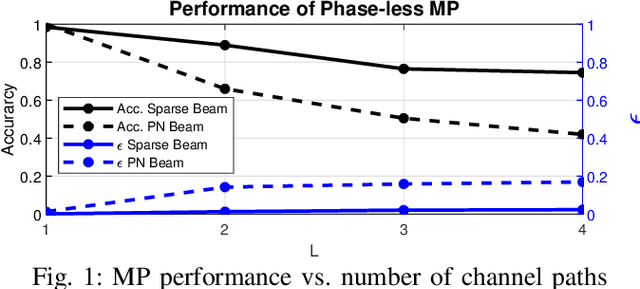

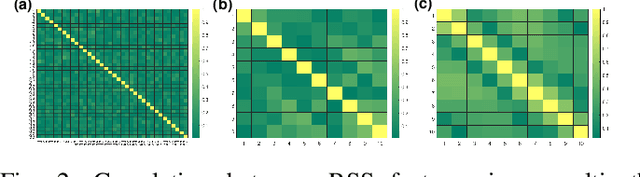

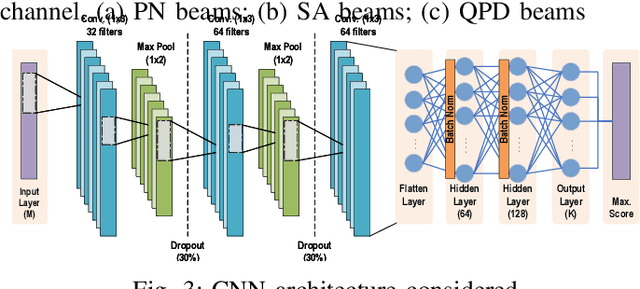

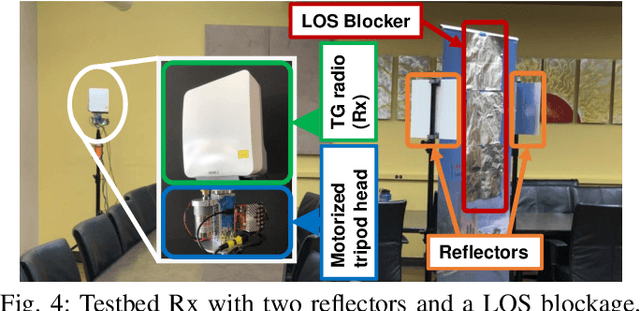

Abstract: Communication systems at millimeter-wave (mmW) and sub-terahertz frequencies are of increasing interest for future high-data-rate networks. One critical challenge faced by phased array systems at these high frequencies is the efficiency of the initial beam alignment, which typically uses only phase-less power measurements due to high-frequency oscillator phase noise. Traditional methods for beam alignment require exhaustive sweeps of all possible beam directions, so their communication overhead scales linearly with antenna array size. For better scaling with the large arrays required at high mmW bands, compressive sensing methods have been proposed, as their overhead scales logarithmically with the array size. However, algorithms utilizing machine learning have shown more efficient and more accurate alignment when using real hardware due to array impairments. Additionally, few existing phase-less beam alignment algorithms have been tested over varied secondary path strength in multipath channels. In this work, we introduce a novel, machine-learning-based algorithm for beam alignment in multipath environments using only phase-less received power measurements. We consider the impacts of phased array sounding beam design and machine learning architectures on beam alignment performance and validate our findings experimentally using 60 GHz radios with 36-element phased arrays. Using experimental data in multipath channels, our proposed algorithm demonstrates an 88% reduction in beam alignment overhead compared to an exhaustive search and at least a 62% reduction in overhead compared to existing compressive methods.

Open Set RF Fingerprinting using Generative Outlier Augmentation

Aug 30, 2021

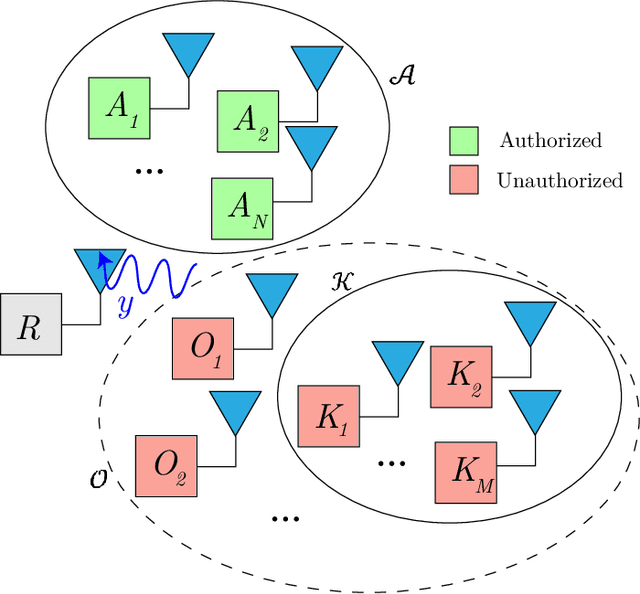

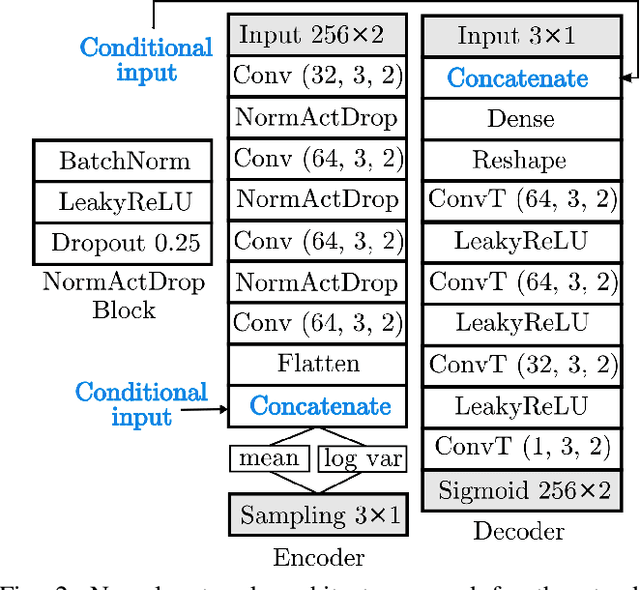

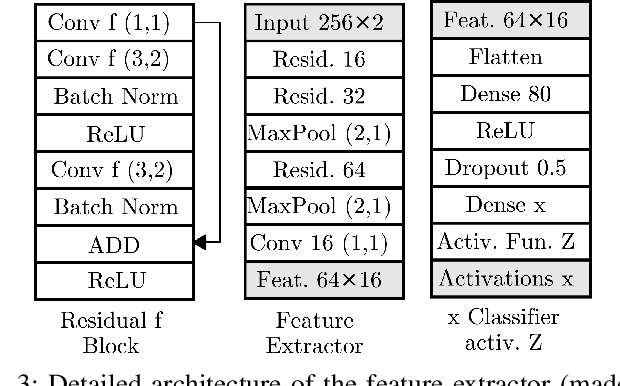

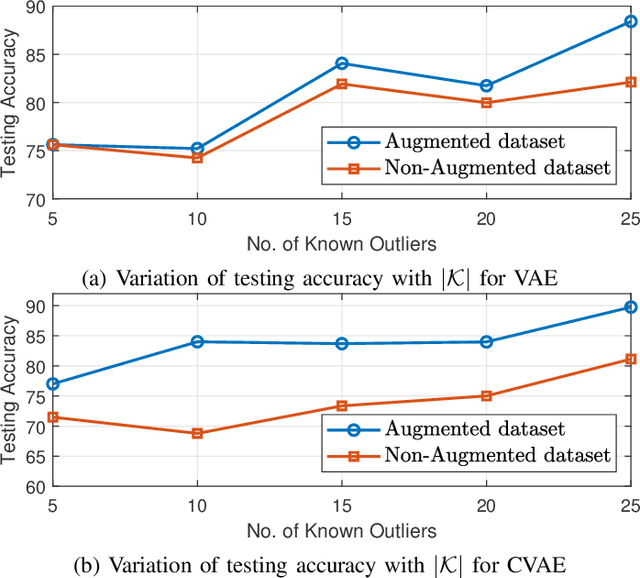

Abstract: RF devices can be identified by unique imperfections, called RF fingerprints, embedded in the signals they transmit. The closed set classification of such devices, where the identification must be made among an authorized set of transmitters, has been well explored. However, the much more difficult problem of open set classification, where the classifier needs to reject unauthorized transmitters while recognizing authorized transmitters, has only recently been addressed. So far, efforts at open set classification have largely relied on signal samples captured from a known set of unauthorized transmitters to help the classifier learn unauthorized transmitter fingerprints. Since acquiring new transmitters to use as known transmitters is highly expensive, we propose to use generative deep learning methods to emulate unauthorized signal samples for the augmentation of training datasets. We develop two different data augmentation techniques, one that exploits a limited number of known unauthorized transmitters and the other that does not require any unauthorized transmitters. Experiments conducted on a dataset captured from a WiFi testbed indicate that data augmentation allows for significant increases in open set classification accuracy, especially when the authorized set is small.

Rainbow-link: Beam-Alignment-Free and Grant-Free mmW Multiple Access using True-Time-Delay Array

Aug 21, 2021

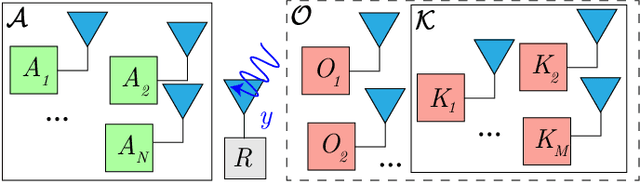

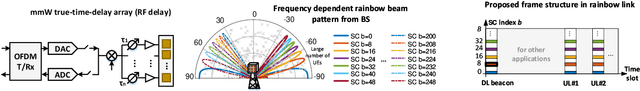

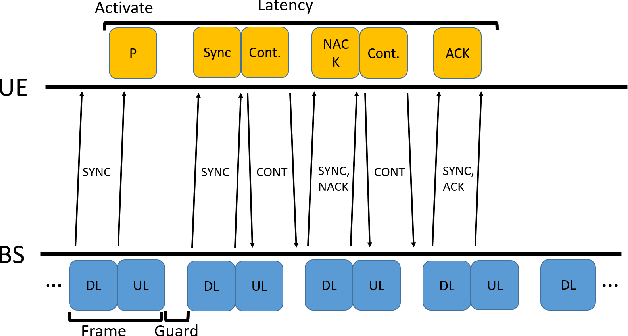

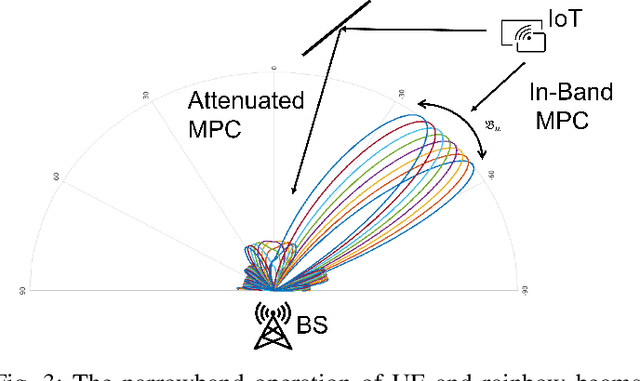

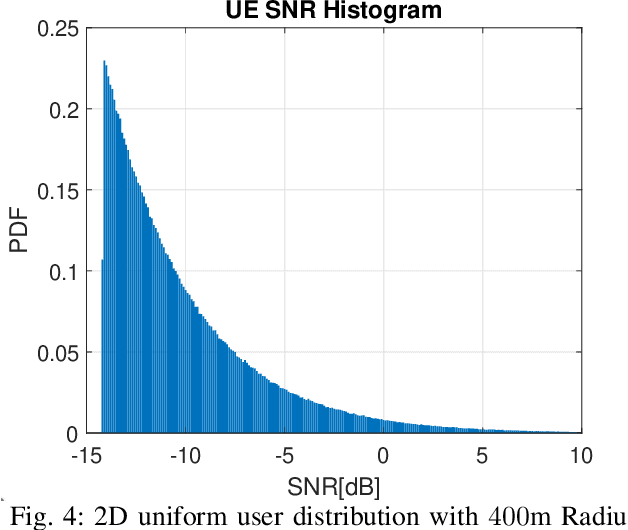

Abstract: In this paper, we propose a novel millimeter wave (mmW) multiple access method that exploits the unique frequency-dependent beamforming capabilities of the True Time Delay (TTD) array architecture. The proposed protocol combines contention-based grant-free access and an orthogonal frequency-division multiple access (OFDMA) scheme for uplink machine-type communications. By exploiting abundant time-frequency resource blocks in the mmW spectrum, we design a simple protocol that can achieve a low collision rate and high network reliability for short packets and sporadic transmissions. We analyze the impact of various system parameters on system performance during the synchronization and contention periods. We exploit the unique advantages of frequency-dependent beamforming, referred to as a rainbow beam, to eliminate beam training overhead and analyze its impact on rates, latency, and coverage. The proposed system and protocol can flexibly accommodate different low-latency applications with moderate rate requirements for a very large number of narrowband single-antenna devices. By harnessing abundant resources in the mmW spectrum and the beamforming gain of TTD arrays, a rainbow-link-based system can simultaneously satisfy ultra-reliability and massive multiple access requirements.
