D. Fouchez

A Probabilistic Autoencoder for Type Ia Supernovae Spectral Time Series

Jul 15, 2022

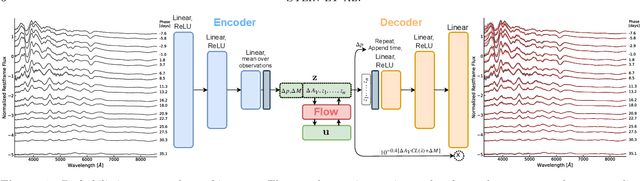

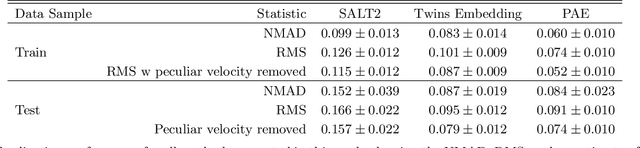

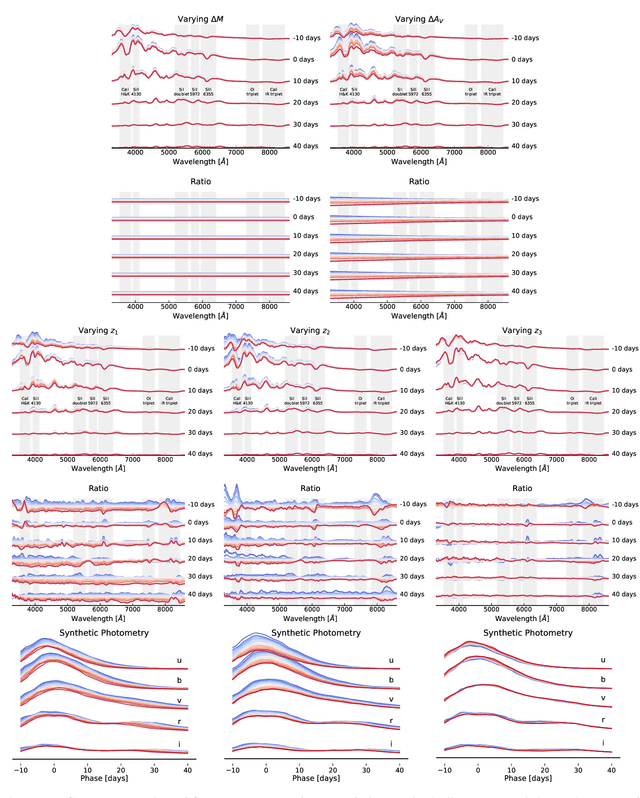

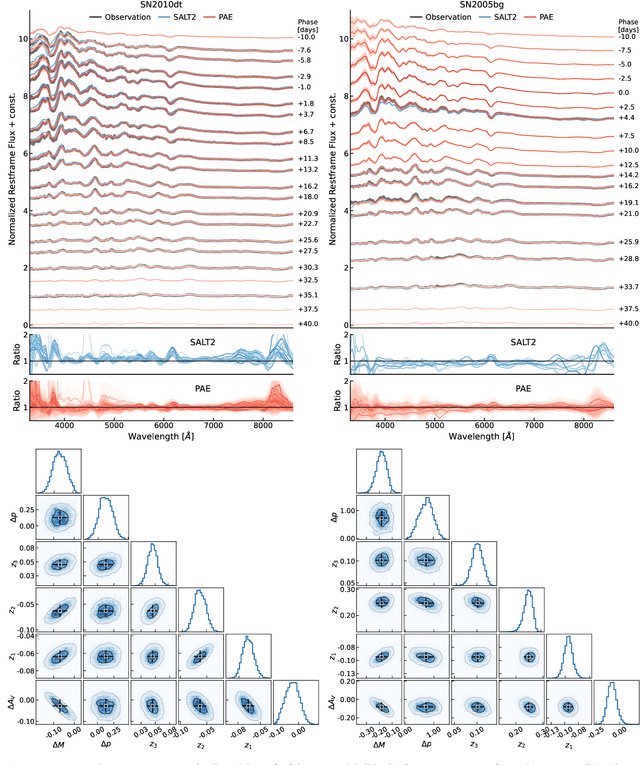

Abstract: We construct a physically-parameterized probabilistic autoencoder (PAE) to learn the intrinsic diversity of type Ia supernovae (SNe Ia) from a sparse set of spectral time series. The PAE is a two-stage generative model, composed of an autoencoder (AE) which is interpreted probabilistically after training using a normalizing flow (NF). We demonstrate that the PAE learns a low-dimensional latent space that captures the nonlinear range of features that exists within the population, and can accurately model the spectral evolution of SNe Ia across the full range of wavelengths and observation times directly from the data. By introducing a correlation penalty term and a multi-stage training setup alongside our physically-parameterized network, we show that intrinsic and extrinsic modes of variability can be separated during training, removing the need for additional models to perform magnitude standardization. We then use our PAE in a number of downstream tasks on SNe Ia for increasingly precise cosmological analyses, including automatic detection of SN outliers, the generation of samples consistent with the data distribution, and solving the inverse problem in the presence of noisy and incomplete data to constrain cosmological distance measurements. We find that the optimal number of intrinsic model parameters appears to be three, in line with previous studies, and show that we can standardize our test sample of SNe Ia with an RMS of $0.091 \pm 0.010$ mag, which corresponds to $0.074 \pm 0.010$ mag if peculiar velocity contributions are removed. Trained models and code are released at https://github.com/georgestein/suPAErnova.
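The abstract does not say what form the correlation penalty takes, so the following is only a minimal sketch of the general idea, assuming the penalty is the sum of squared Pearson correlations between each intrinsic latent dimension and one extrinsic parameter. The names `pearson` and `correlation_penalty`, the `weight` argument, and the toy numbers are all hypothetical and are not taken from the released suPAErnova code.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a constant sequence carries no correlation
    return cov / math.sqrt(var_x * var_y)

def correlation_penalty(latents, extrinsic, weight=1.0):
    """Hypothetical penalty: weighted sum of squared correlations between
    each latent dimension (one list per dimension) and an extrinsic
    parameter. It is zero only when every dimension is uncorrelated."""
    return weight * sum(pearson(dim, extrinsic) ** 2 for dim in latents)

# Toy batch of four objects (illustrative values only).
extrinsic = [1.0, 2.0, 3.0, 4.0]      # e.g. a per-object magnitude offset
latent_a = [2.0, 4.0, 6.0, 8.0]       # perfectly correlated with extrinsic
latent_b = [1.0, -1.0, -1.0, 1.0]     # uncorrelated with extrinsic

penalty = correlation_penalty([latent_a, latent_b], extrinsic)
```

Added to a reconstruction loss, a term like this pushes the encoder toward latent dimensions that carry no linear information about the extrinsic parameter. That is one plausible reading of how intrinsic and extrinsic variability could be separated during training.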

Photometric Redshift Estimation with Convolutional Neural Networks and Galaxy Images: A Case Study of Resolving Biases in Data-Driven Methods

Feb 21, 2022

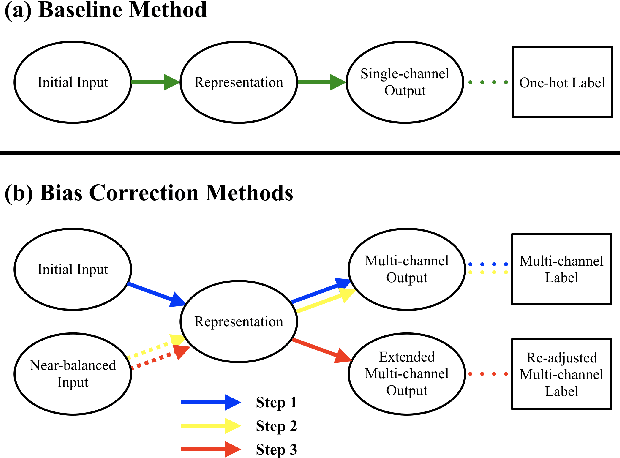

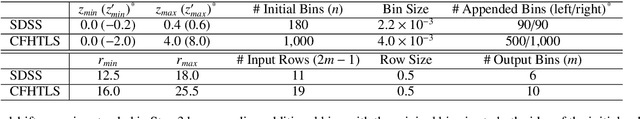

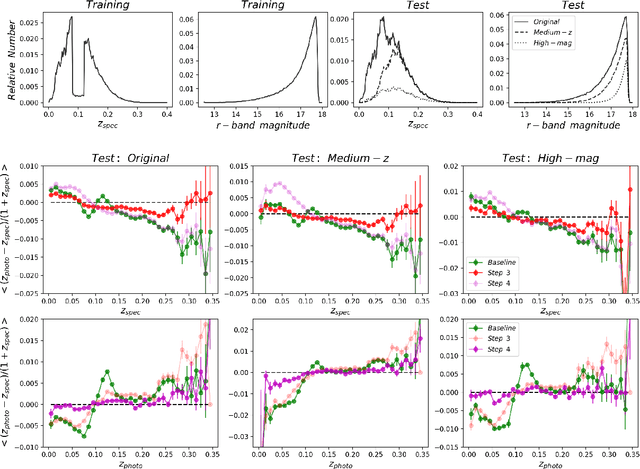

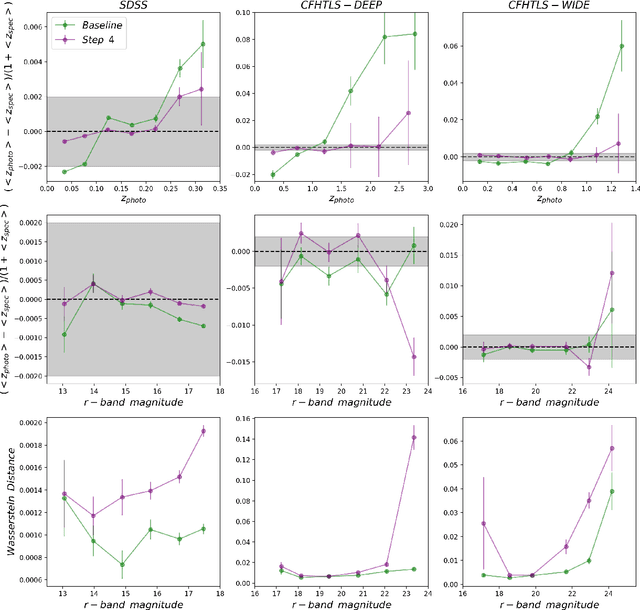

Abstract: Deep learning models have been increasingly exploited in astrophysical studies, yet such data-driven algorithms are prone to producing biased outputs that are detrimental to subsequent analyses. In this work, we investigate two major forms of bias, i.e., class-dependent residuals and mode collapse, in a case study of estimating photometric redshifts as a classification problem using Convolutional Neural Networks (CNNs) and galaxy images with spectroscopic redshifts. We focus on point estimates and propose a set of consecutive steps for resolving the two biases based on CNN models, involving representation learning with multi-channel outputs, balancing the training data, and leveraging soft labels. The residuals can be viewed as a function of spectroscopic redshifts or photometric redshifts, and the biases with respect to these two definitions are incompatible and should be treated separately. We suggest that resolving biases in the spectroscopic space is a prerequisite for resolving biases in the photometric space. Experiments show that our methods control biases better than benchmark methods and remain robust under varying implementation and training conditions, provided that high-quality data are available. Our methods hold promise for future cosmological surveys that require a good constraint on biases, and may be applied to regression problems and other studies that make use of data-driven models. Nonetheless, the bias-variance trade-off and the demand for sufficient statistics suggest the need for developing better methodologies and optimizing data usage strategies.
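To make the classification framing concrete, here is a minimal sketch of redshift binning, a soft label, and a point estimate. It rests on assumptions the abstract does not state: equal-width bins, a Gaussian soft label centred on the spectroscopic redshift, and a probability-weighted mean as the point estimate. The function names, bin range, and `sigma` value are hypothetical.

```python
import math

def bin_centers(z_min, z_max, n_bins):
    """Centers of n_bins equal-width redshift bins spanning [z_min, z_max]."""
    width = (z_max - z_min) / n_bins
    return [z_min + (i + 0.5) * width for i in range(n_bins)]

def soft_label(z_spec, centers, sigma):
    """Assumed soft label: a Gaussian in redshift centred on z_spec,
    evaluated at the bin centers and normalized to sum to one."""
    weights = [math.exp(-0.5 * ((c - z_spec) / sigma) ** 2) for c in centers]
    total = sum(weights)
    return [w / total for w in weights]

def point_estimate(probs, centers):
    """Probability-weighted mean redshift over the bins."""
    return sum(p * c for p, c in zip(probs, centers))

# Illustrative values only: 20 bins over 0 <= z <= 0.4, one galaxy at z = 0.13.
centers = bin_centers(0.0, 0.4, 20)
label = soft_label(0.13, centers, sigma=0.02)
z_phot = point_estimate(label, centers)
```

Unlike a one-hot target, the soft label spreads probability over neighbouring bins, so a classifier trained on it is penalized less for near misses than for distant ones. In practice `point_estimate` would be applied to the network's softmax output rather than to the label itself.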
