D. A. Hudson

Automation for Interpretable Machine Learning Through a Comparison of Loss Functions to Regularisers

Jun 07, 2021

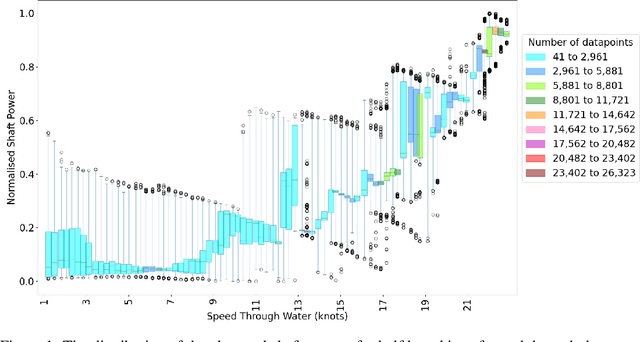

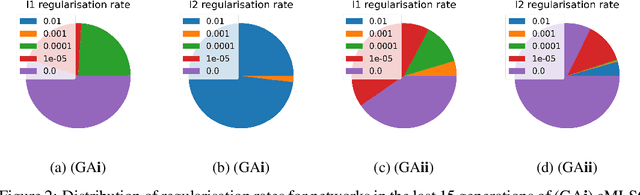

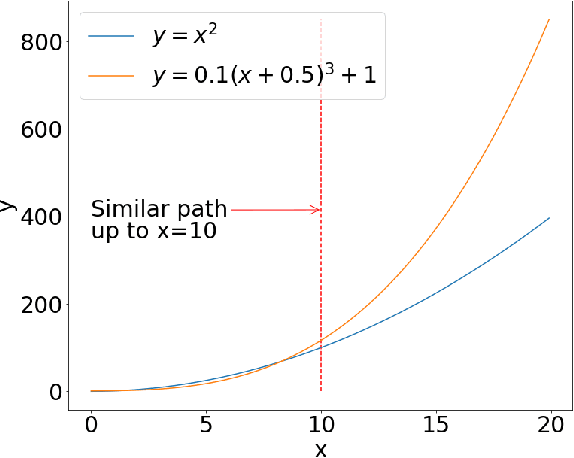

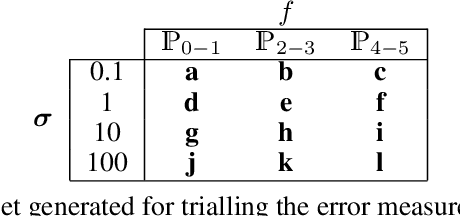

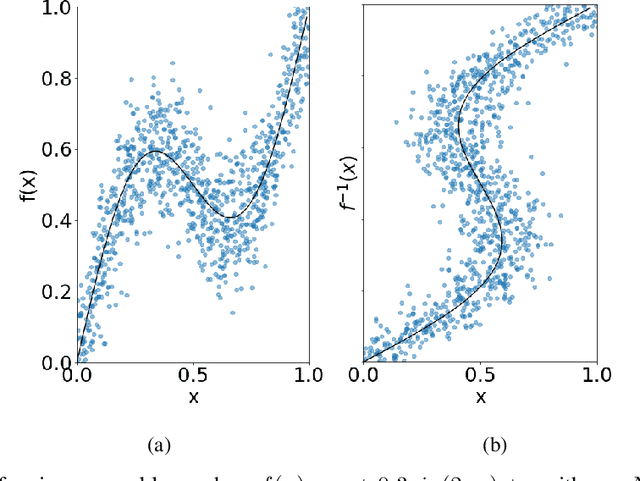

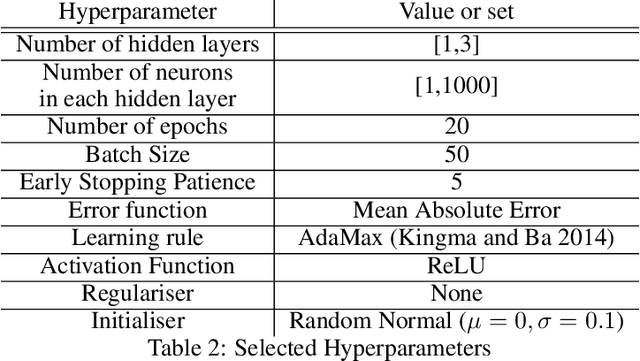

Abstract: To increase the ubiquity of machine learning, it needs to be automated. Automation is cost-effective as it allows experts to spend less time tuning the approach, which leads to shorter development times. However, while this automation produces highly accurate architectures, they can be uninterpretable, acting as 'black boxes' which produce low conventional errors but fail to model the underlying input-output relationships, that is, the ground truth. This paper explores the use of the Fit to Median Error measure in machine learning regression automation, using evolutionary computation in order to improve the approximation of the ground truth. When used alongside conventional error measures it improves interpretability by regularising learnt input-output relationships to the conditional median. It is compared to traditional regularisers to illustrate that the use of the Fit to Median Error produces regression neural networks which model more consistent input-output relationships. The problem considered is ship power prediction using a fuel-saving air lubrication system, which is highly stochastic in nature. The networks optimised for their Fit to Median Error are shown to approximate the ground truth more consistently, without sacrificing conventional Minkowski-r error values.

Towards Error Measures which Influence a Learners Inductive Bias to the Ground Truth

May 04, 2021

Abstract: Artificial intelligence is applied in a range of sectors, and is relied upon for decisions requiring a high level of trust. For regression methods, trust is increased if they approximate the true input-output relationships and perform accurately outside the bounds of the training data. But often performance off-test-set is poor, especially when data is sparse. This is because the conditional average, which in many scenarios is a good approximation of the 'ground truth', is only modelled with conventional Minkowski-r error measures when the data set adheres to restrictive assumptions, with many real data sets violating these. To combat this there are several methods that use prior knowledge to approximate the 'ground truth'. However, prior knowledge is not always available, and this paper investigates how error measures affect the ability of a regression method to model the 'ground truth' in these scenarios. Current error measures are shown to create an unhelpful bias and a new error measure is derived which does not exhibit this behaviour. This is tested on 36 representative data sets with different characteristics, showing that it is more consistent in determining the 'ground truth' and in giving improved predictions in regions beyond the range of the training data.
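As a rough illustration of why the choice of Minkowski-r error matters, the sketch below assumes the standard textbook form of the error, the mean of |prediction - target|^r, and searches for the constant prediction that minimises it on a small skewed sample. The function names, the sample values and the grid search are all hypothetical choices made for this example. They are not taken from either paper, and the sketch does not implement the new error measure the abstracts describe.

```python
import numpy as np


def minkowski_r_error(predictions, targets, r=2.0):
    """Mean Minkowski-r error: mean(|prediction - target| ** r)."""
    predictions = np.asarray(predictions, dtype=float)
    targets = np.asarray(targets, dtype=float)
    return float(np.mean(np.abs(predictions - targets) ** r))


def best_constant(targets, r, candidates):
    """Return the candidate constant prediction with the lowest Minkowski-r error."""
    errors = [
        minkowski_r_error(np.full_like(targets, c), targets, r)
        for c in candidates
    ]
    return float(candidates[int(np.argmin(errors))])


# Hypothetical skewed sample: one large outlier pulls the mean far from the median.
targets = np.array([1.0, 2.0, 3.0, 4.0, 100.0])

# Coarse grid of candidate constant predictions (step 0.01), an assumption
# made to keep the example free of any optimiser dependency.
candidates = np.linspace(0.0, 100.0, 10001)

c_r1 = best_constant(targets, 1.0, candidates)  # minimiser for r = 1
c_r2 = best_constant(targets, 2.0, candidates)  # minimiser for r = 2

print("r=1 minimiser:", c_r1, "sample median:", np.median(targets))
print("r=2 minimiser:", c_r2, "sample mean:", np.mean(targets))
```

On this sample the r = 1 minimiser sits at the sample median and the r = 2 minimiser at the sample mean, so the same data yields very different fitted values depending on r once the noise is skewed.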
