Cristian Axenie

Antifragile Control Systems: The case of mobile robot trajectory tracking in the presence of uncertainty

Feb 10, 2023

Abstract: Mobile robots are ubiquitous. Such vehicles benefit from well-designed and calibrated control algorithms ensuring their task execution under precise uncertainty bounds. Yet, in tasks involving humans in the loop, such as elderly or mobility-impaired users, the problem takes on a new dimension. In such cases, the system needs not only to compensate for uncertainty and volatility in its operation but also to anticipate and offer responses that go beyond robust. Such robots operate in cluttered, complex environments, akin to human residences, and must keep operating despite sensor and even actuator faults. This is where our thesis comes into the foreground. We propose a new control design framework based on the principles of antifragility. Such a design is meant to offer a high capacity to anticipate uncertainty, given previous exposure to failures and faults, and to exploit this capacity to provide performance beyond robust. In the current instantiation of antifragile control applied to mobile robot trajectory tracking, we provide controller design steps, an analysis of performance under parametrizable uncertainty and faults, and an extended comparative evaluation against state-of-the-art controllers. We believe in the potential of antifragile control to achieve closed-loop performance in the face of uncertainty and volatility by using its exposure to uncertainty to increase its capacity to anticipate and compensate for such events.

A Framework for Learning Invariant Physical Relations in Multimodal Sensory Processing

Jun 30, 2020

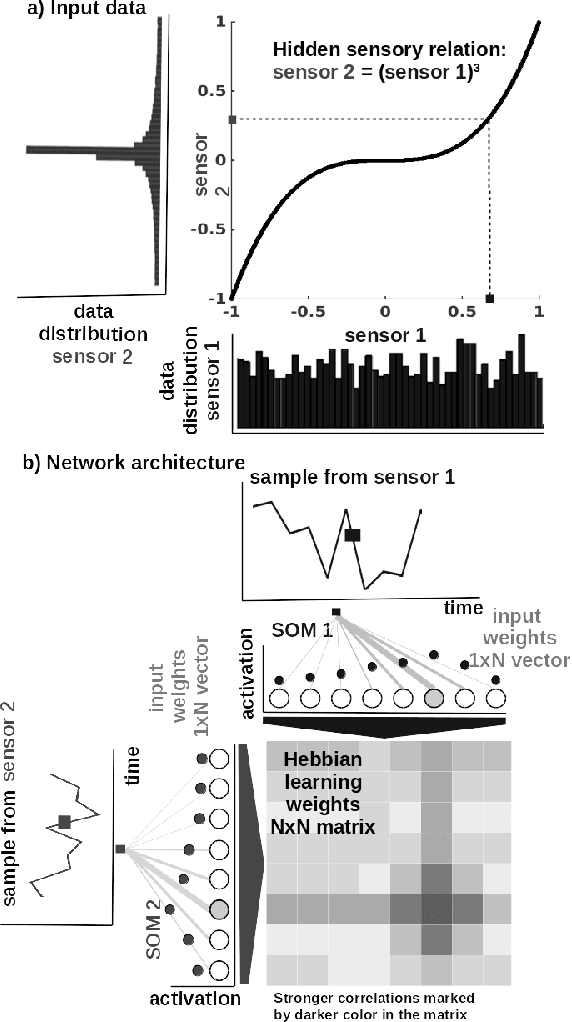

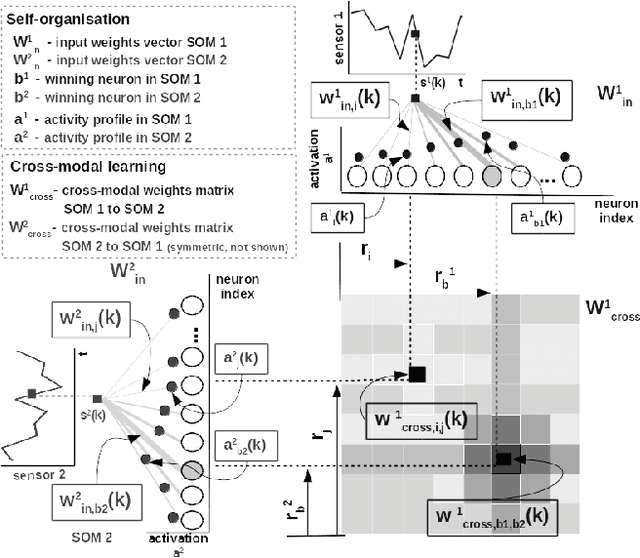

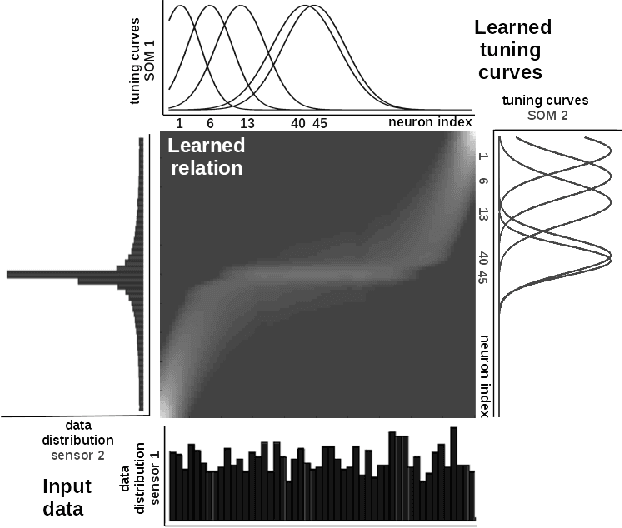

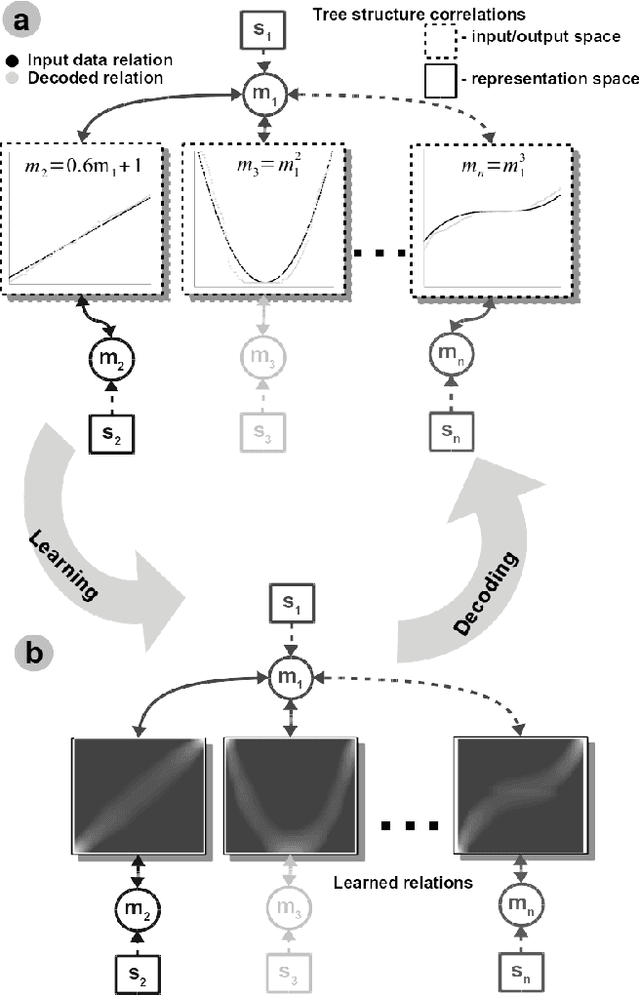

Abstract: Perceptual learning enables humans to recognize and represent stimuli invariant to various transformations and build a consistent representation of the self and physical world. Such representations preserve the invariant physical relations among the multiple perceived sensory cues. This work is an attempt to exploit these principles in an engineered system. We design a novel neural network architecture capable of learning, in an unsupervised manner, relations among multiple sensory cues. The system combines computational principles, such as competition, cooperation, and correlation, in a neurally plausible computational substrate. It does so through a parallel and distributed processing architecture in which the relations among the multiple sensory quantities are extracted from time-sequenced data. We describe the core system functionality when learning arbitrary non-linear relations in low-dimensional sensory data. Here, an initial benefit arises from the fact that such a network can be engineered in a relatively straightforward way without prior information about the sensors and their interactions. Moreover, by alleviating the need for tedious modelling and parametrization, the network converges to a consistent description of any arbitrary high-dimensional multisensory setup. We demonstrate this through a real-world learning problem, where, from standard RGB camera frames, the network learns the relations between physical quantities such as light intensity, spatial gradient, and optical flow, describing a visual scene. Overall, the benefits of such a framework lie in the capability to learn non-linear pairwise relations among sensory streams in an architecture that is stable under noise and missing sensor input.
