Claudia Landi

A Kernel for Multi-Parameter Persistent Homology

Sep 26, 2018

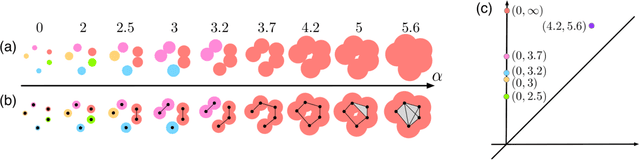

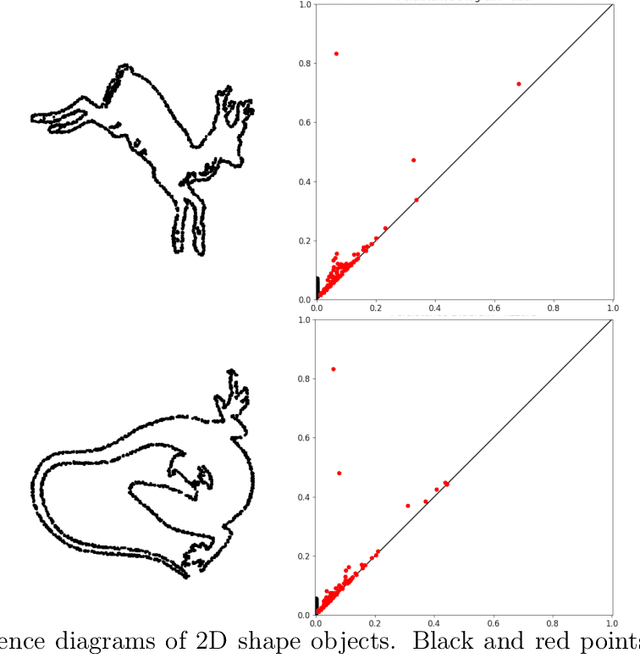

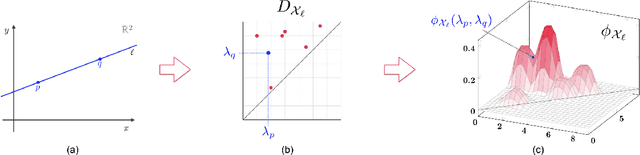

Abstract: Topological data analysis and its main method, persistent homology, provide a toolkit for computing topological information of high-dimensional and noisy data sets. Kernels for one-parameter persistent homology have been established to connect persistent homology with machine learning techniques. We contribute a kernel construction for multi-parameter persistence by integrating a one-parameter kernel weighted along straight lines. We prove that our kernel is stable and efficiently computable, which establishes a theoretical connection between topological data analysis and machine learning for multivariate data analysis.
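
Schematically, the construction described in this abstract might be written as below. This is a sketch under assumed notation, not the paper's exact statement: the symbols $\mathcal{L}$ (a family of straight lines in parameter space), $w$ (a weight on lines), $\mu$ (a measure on $\mathcal{L}$), and $k$ (the chosen one-parameter kernel) are introduced here for illustration only.

```latex
K(M, N) \;=\; \int_{\mathcal{L}} w(\ell)\, k\bigl(M|_{\ell},\, N|_{\ell}\bigr)\, d\mu(\ell)
```

Here $M|_{\ell}$ and $N|_{\ell}$ stand for the one-parameter persistence modules obtained by restricting the multi-parameter modules $M$ and $N$ to the line $\ell$.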

Stability in multidimensional Size Theory

Aug 02, 2006

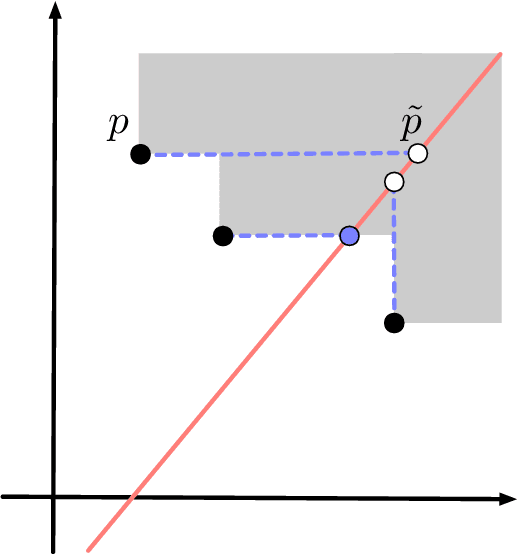

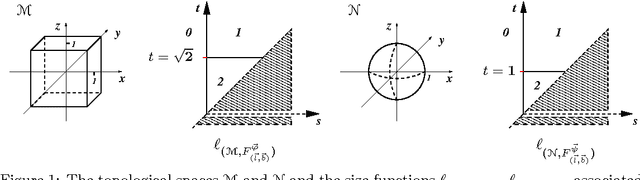

Abstract: This paper proves that in Size Theory the comparison of multidimensional size functions can be reduced to the 1-dimensional case by a suitable change of variables. Indeed, we show that a foliation in half-planes can be given, such that the restriction of a multidimensional size function to each of these half-planes turns out to be a classical size function in two scalar variables. This leads to the definition of a new distance between multidimensional size functions, and to the proof of their stability with respect to that distance.
