Christopher Hansen

Useful nonrobust features are ubiquitous in biomedical images

Apr 24, 2026

Abstract: We study whether deep networks for medical imaging learn useful nonrobust features - predictive input patterns that are not human interpretable and highly susceptible to small adversarial perturbations - and how these features impact test performance. We show that models trained only on nonrobust features achieve well above chance accuracy across five MedMNIST classification tasks, confirming their predictive value in-distribution. Conversely, adversarially trained models that primarily rely on robust features sacrifice in-distribution accuracy but yield markedly better performance under controlled distribution shifts (MedMNIST-C). Overall, nonrobust features boost standard accuracy yet degrade out-of-distribution performance, revealing a practical robustness-accuracy trade-off in medical imaging classification tasks that should be tailored to the requirements of the deployment setting.

Automated Essay Scoring Using Transformer Models

Oct 13, 2021

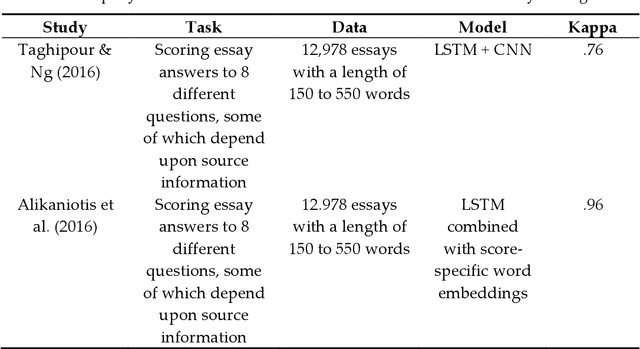

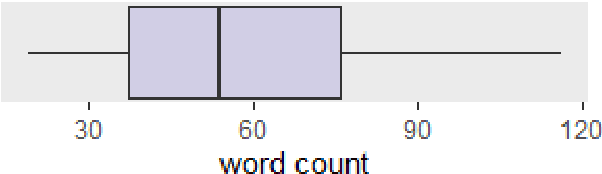

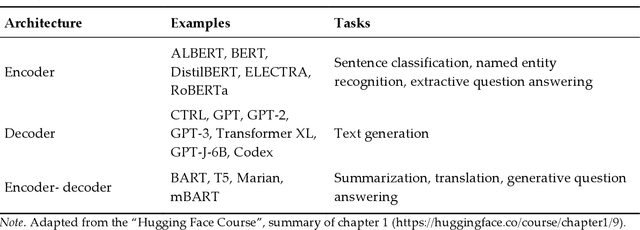

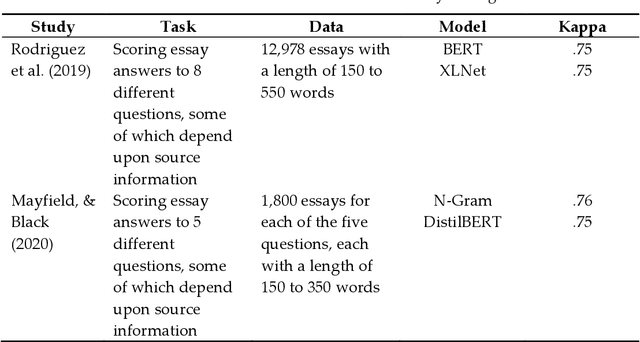

Abstract: Automated essay scoring (AES) is gaining increasing attention in the education sector as it significantly reduces the burden of manual scoring and allows ad hoc feedback for learners. Natural language processing based on machine learning has been shown to be particularly suitable for text classification and AES. While many machine-learning approaches for AES still rely on a bag-of-words (BOW) approach, we consider a transformer-based approach in this paper, compare its performance to a logistic regression model based on the BOW approach, and discuss their differences. The analysis is based on 2,088 email responses to a problem-solving task that were manually labeled in terms of politeness. Both transformer models considered in that analysis outperformed the regression-based model without any hyper-parameter tuning. We argue that for AES tasks such as politeness classification, the transformer-based approach has significant advantages, while a BOW approach suffers from not taking word order into account and from reducing words to their stems. Further, we show how such models can help increase the accuracy of human raters, and we provide detailed instructions on how to implement transformer-based models for one's own purposes.
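
To illustrate the word-order limitation of a BOW representation mentioned in the abstract, here is a minimal sketch using only the Python standard library. It is not the paper's pipeline: the two sentences and the helper names `bag_of_words` and `bigrams` are hypothetical, chosen only to show that two differently toned sentences can map to the same bag of words, while an order-aware view (here, simple bigrams) tells them apart.

```python
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Count lowercase whitespace-separated tokens; word order is discarded."""
    return Counter(text.lower().split())


def bigrams(text: str) -> list:
    """Return adjacent token pairs, which preserve local word order."""
    tokens = text.lower().split()
    return list(zip(tokens, tokens[1:]))


# Hypothetical example sentences (not taken from the paper's data set).
hedged = "i am not sure you are right"
blunt = "i am sure you are not right"

# Both sentences contain exactly the same tokens, so a BOW model
# receives identical input features for them.
same_bag = bag_of_words(hedged) == bag_of_words(blunt)

# An order-aware representation distinguishes the two sentences.
same_bigrams = bigrams(hedged) == bigrams(blunt)

print("identical bag of words:", same_bag)
print("identical bigrams:", same_bigrams)
```

A transformer sees the full token sequence, so it is not limited in this way; the bigram comparison above is only a stand-in to make the contrast concrete.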
