Chongyang Wang

Automatic Detection of Protective Behavior in Chronic Pain Physical Rehabilitation: A Recurrent Neural Network Approach

Feb 24, 2019

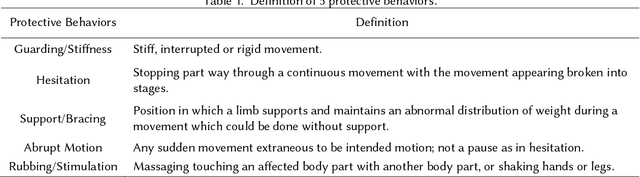

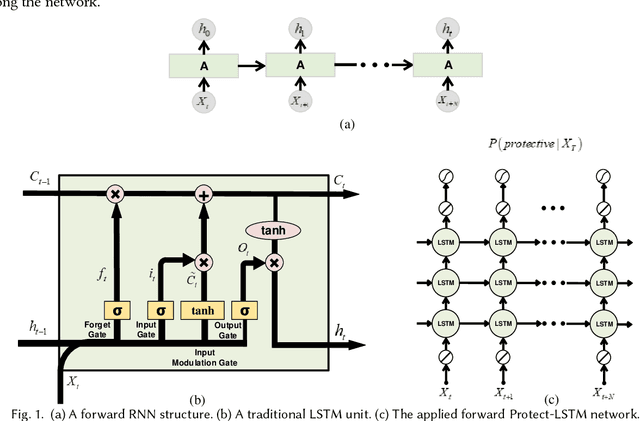

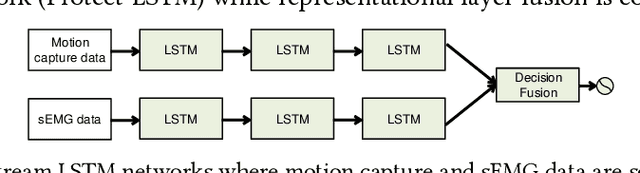

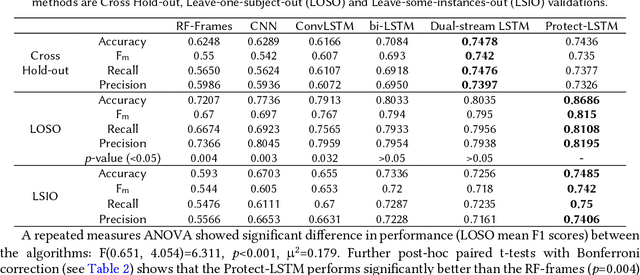

Abstract: In chronic pain physical rehabilitation, physiotherapists adapt movement to the current performance of patients, especially based on their expression of protective behavior, gradually exposing them to feared but harmless and essential everyday movements. As physical rehabilitation moves outside the clinic, rehabilitation technology needs to detect such behaviors automatically in order to provide similar personalized support. In this paper, we investigate the use of a Long Short-Term Memory (LSTM) network, which we call Protect-LSTM, to detect events of protective behavior from motion capture and electromyography data of healthy people and people with chronic low back pain engaged in five everyday movements. Unlike previous work on the same dataset, we aim to detect protective behavior continuously within a movement rather than estimate its overall presence. Using low-level features, the Protect-LSTM reaches a best average F1 score of 0.815 with leave-one-subject-out (LOSO) validation, better than the other algorithms compared. Performance increases for some movements when they are modelled separately (mean F1 scores: bending=0.77, standing on one leg=0.81, sit-to-stand=0.72, stand-to-sit=0.83, reaching forward=0.67). These results reach an excellent level of agreement with the average ratings of physiotherapists. As such, the results show clear potential for in-home, technology-supported, affect-based personalized physical rehabilitation.
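The leave-one-subject-out (LOSO) protocol mentioned in the abstract can be illustrated with a minimal sketch. It assumes binary frame-level labels (1 = protective behavior) and a plain per-fold binary F1 averaged over subjects; the abstract does not specify the exact averaging scheme, and the names `loso_splits`, `loso_mean_f1`, and `fit_predict` are hypothetical placeholders, not the authors' code.

```python
from collections import defaultdict


def f1_score(y_true, y_pred):
    """Binary F1 for the positive class (assumed here: 1 = protective behavior)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def loso_splits(subject_ids):
    """Yield (held_out_subject, train_indices, test_indices), one fold per subject."""
    by_subject = defaultdict(list)
    for idx, sid in enumerate(subject_ids):
        by_subject[sid].append(idx)
    for sid in sorted(by_subject):
        test_idx = by_subject[sid]
        train_idx = [i for i, s in enumerate(subject_ids) if s != sid]
        yield sid, train_idx, test_idx


def loso_mean_f1(subject_ids, labels, fit_predict):
    """Average the per-subject F1 over all LOSO folds.

    fit_predict(train_idx, test_idx) is a hypothetical callback that trains
    a model on the training indices and returns predictions for the test indices.
    """
    scores = []
    for _, train_idx, test_idx in loso_splits(subject_ids):
        y_pred = fit_predict(train_idx, test_idx)
        y_true = [labels[i] for i in test_idx]
        scores.append(f1_score(y_true, y_pred))
    return sum(scores) / len(scores)
```

Because every sample from the held-out subject is excluded from training, each fold measures how well a model generalizes to a person it has never seen, which is the setting that matters for in-home use.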

Micro-Attention for Micro-Expression recognition

Nov 09, 2018

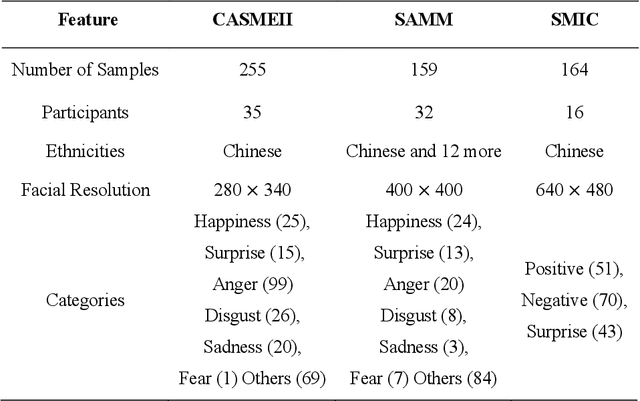

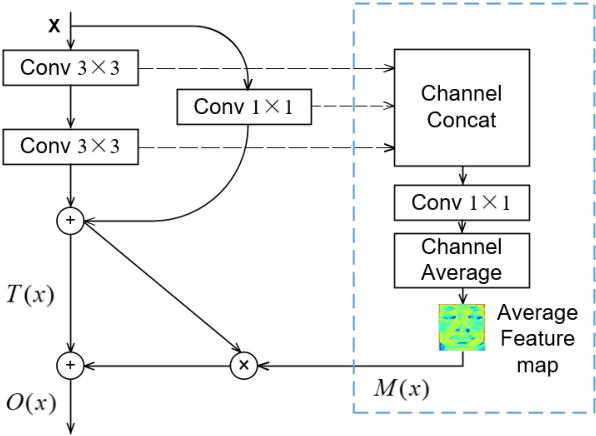

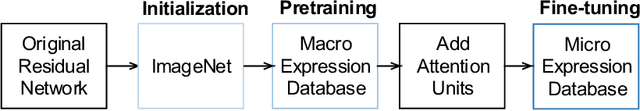

Abstract: Micro-expression, owing to its high objectivity in emotion detection, has emerged as a promising modality in affective computing. Recently, deep learning methods have been successfully introduced into micro-expression recognition. Although deep learning methods achieve higher recognition accuracy, substantial challenges remain. Micro-expressions occur in small, local areas of the face, and the available databases are limited in size; both issues still constrain the recognition accuracy for such facial behavior. In this work, to tackle these challenges, we propose a novel attention mechanism called micro-attention that cooperates with a residual network. Micro-attention enables the network to learn to focus on the facial areas of interest (action units). Moreover, to cope with small datasets, a simple yet efficient transfer learning approach is used to alleviate the risk of overfitting. With an extensive experimental evaluation on two benchmarks (CASMEII, SAMM) and post-hoc feature visualizations, we demonstrate the effectiveness of the proposed micro-attention and push the boundary of automatic micro-expression recognition.

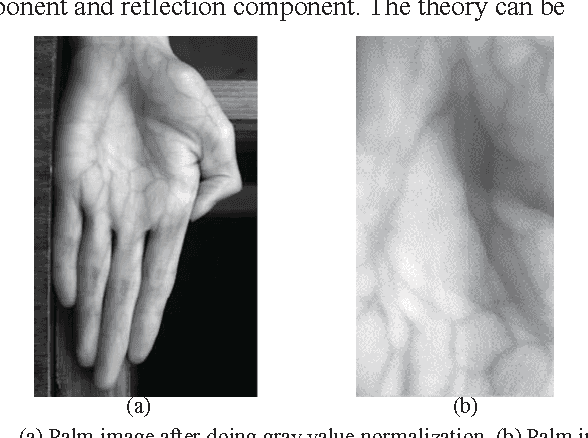

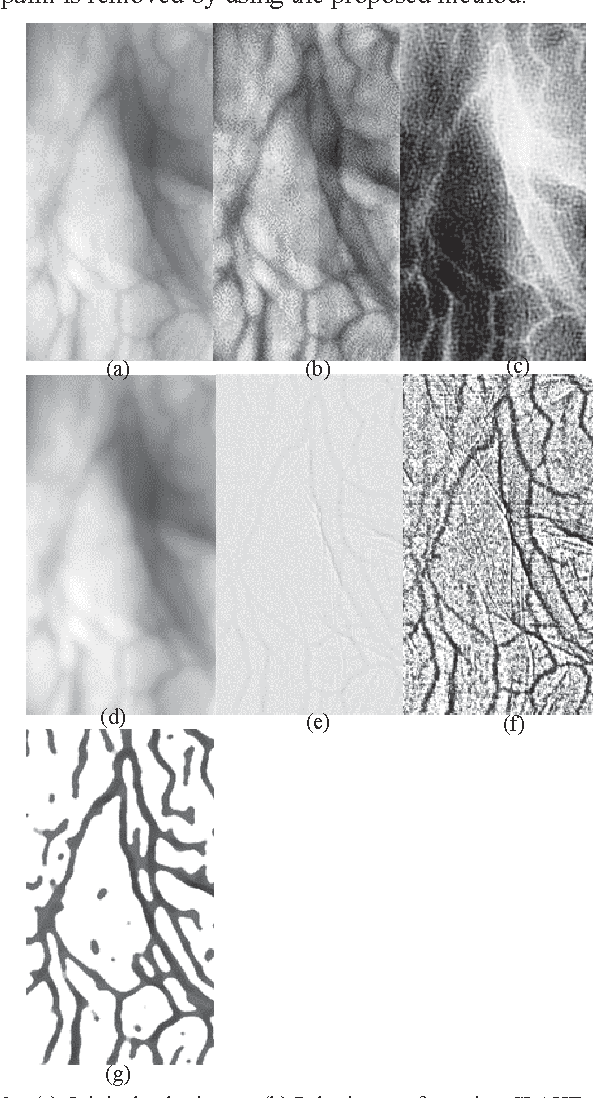

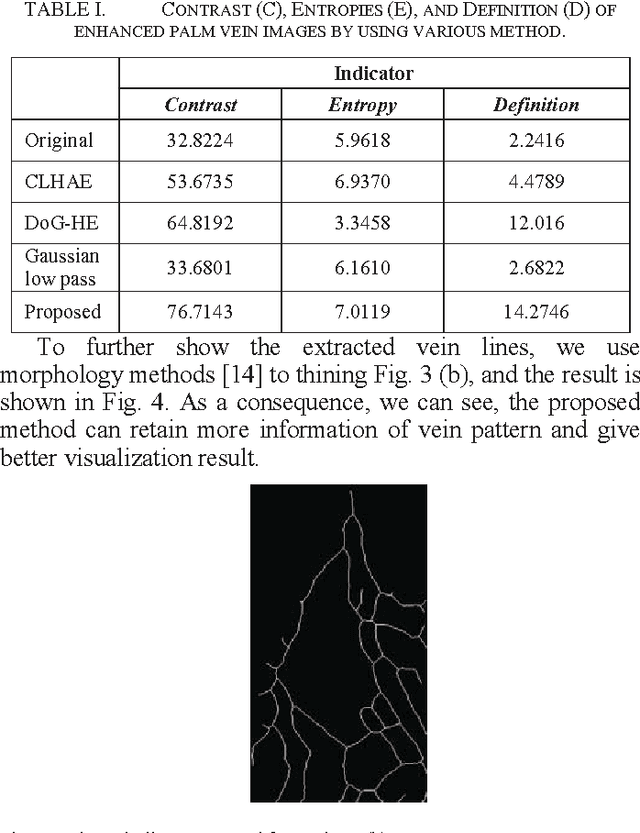

A single scale retinex based method for palm vein extraction

May 26, 2016

Abstract: Palm vein recognition is a novel biometric identification technology. However, obtaining a good vein extraction result from the raw palm image remains a challenging problem, especially when the raw data were collected under asymmetric illumination. This paper proposes a method based on the single scale Retinex algorithm to extract the palm vein image when strong shadows are present due to asymmetric illumination and the uneven geometry of the palm. We test our method on a multispectral palm image. The experimental results show that the proposed method is robust to the influence of illumination angle and shadow. Compared to traditional extraction methods, the proposed method obtains palm vein lines with better visualization performance (the contrast ratio increases by 18.4%, entropy increases by 1.07%, and definition increases by 18.8%).
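The abstract does not give the formulation used in the paper. As a rough illustration, the standard single scale Retinex computes, per pixel, the log of the image minus the log of a Gaussian-blurred copy of it. The sketch below uses only the Python standard library; the function names, the `sigma` and `eps` defaults, and the border clamping are assumptions made for illustration and are not taken from the paper.

```python
import math


def gaussian_kernel(sigma):
    """Normalized 1-D Gaussian kernel with radius about 3 * sigma."""
    radius = max(1, int(3 * sigma))
    weights = [math.exp(-(k * k) / (2.0 * sigma * sigma))
               for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve a 1-D sequence with the kernel, clamping indices at the borders."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(image, sigma):
    """Separable Gaussian blur of a 2-D list of floats: rows first, then columns."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def single_scale_retinex(image, sigma=2.0, eps=1.0):
    """Return log(image + eps) - log(blurred image + eps) for every pixel.

    eps is an assumed offset that avoids log(0) on black pixels.
    """
    surround = gaussian_blur(image, sigma)
    return [[math.log(p + eps) - math.log(s + eps)
             for p, s in zip(row, srow)]
            for row, srow in zip(image, surround)]
```

Subtracting the blurred surround in the log domain removes slowly varying illumination such as broad shadows, while local structure such as vein lines remains as deviations from zero. In practice the output would still need rescaling to a displayable range, which this sketch leaves out.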
