Camille Phiquepal

Optimizing Trajectory-Trees in Belief Space: An Application from Model Predictive Control to Task and Motion Planning

May 03, 2026

Abstract: This paper explores the benefits of computing arborescent trajectories (trajectory-trees) instead of commonly used sequential trajectories for partially observable robotic planning problems. In such environments, a robot infers knowledge from observations, and the optimal course of action depends on these observations. Trajectory-trees, optimized in belief space, naturally capture this dependency by branching where the belief state is expected to evolve into multiple distinct scenarios, such as upon receiving an observation. Unlike sequential trajectories, which model a single forward evolution of the system, trajectory-trees capture multiple possible contingencies. First, we focus on Model Predictive Control (MPC) and demonstrate the benefits of planning tree-like trajectories. We formulate the control problem as the optimization of a tree with a single branching (PO-MPC). This improves performance by reducing control costs through more informed planning. To satisfy the real-time constraints of MPC, we develop an optimization algorithm called Distributed Augmented Lagrangian (D-AuLa), which leverages the decomposability of the PO-MPC formulation to parallelize and accelerate the optimization. We apply the method to both linear and non-linear MPC problems using autonomous driving examples. Second, we address Task And Motion Planning (TAMP), and introduce a planner (PO-LGP) reasoning on decision trees at task level, and trajectory-trees at motion-planning level. This approach builds upon the Logic-Geometric-Programming Framework (LGP) and extends it to partially observable problems. The experiments show the method's applicability to problems with a small belief state size, and show that it scales to larger problems by optimizing explorative policies, which are used as macro-actions in an overarching task plan.

Asymptotically Optimal Belief Space Planning in Discrete Partially-Observable Domains

Sep 19, 2023

Abstract: Robots often have to operate in discrete partially observable worlds, where the states of the world are only observable at runtime. To react to different world states, robots need contingencies. However, computing contingencies is costly and often non-optimal. To address this problem, we develop the improved path tree optimization (PTO) method. PTO computes motion contingencies by constructing a tree of motion paths in belief space. This is achieved by constructing a graph of configurations, then adding observation edges to extend the graph to belief space. Afterwards, we use a dynamic programming step to extract the path tree. PTO extends prior work by adding a camera-based state sampler to improve the search for observation points. We also add support for non-Euclidean state spaces, provide an implementation in the open motion planning library (OMPL), and evaluate PTO on four realistic scenarios with a virtual camera in up to 10-dimensional state spaces. We compare PTO with a default and with the new camera-based state sampler. The results indicate that the camera-based state sampler improves success rates in 3 out of 4 scenarios while having a significantly lower memory footprint. This makes PTO an important contribution to advance the state-of-the-art for discrete belief space planning.

Control-Tree Optimization: an approach to MPC under discrete Partial Observability

Jan 31, 2023

Abstract: This paper presents a new approach to Model Predictive Control for environments where essential, discrete variables are partially observed. Under this assumption, the belief state is a probability distribution over a finite number of states. We optimize a control-tree where each branch assumes a given state-hypothesis. The control-tree optimization uses the probabilistic belief state information. This leads to policies more optimized with respect to likely states than unlikely ones, while still guaranteeing robust constraint satisfaction at all times. We apply the method to both linear and non-linear MPC with constraints. The optimization of the control-tree is decomposed into optimization subproblems that are solved in parallel, leading to good scalability for a high number of state-hypotheses. We demonstrate the real-time feasibility of the algorithm on two examples and show the benefits compared to a classical MPC scheme optimizing w.r.t. one single hypothesis.

* 6 pages, 10 figures
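
The control-tree idea in the abstract above can be written down as a small optimization problem. The following is only a sketch under assumptions: the symbols $p_i$ (belief probability of hypothesis $i$), $J$ (trajectory cost), $f$ (dynamics), $g_i$ (constraints under hypothesis $i$), and $t_b$ (branching time) are illustrative names, not notation taken from the paper, and the shared-trunk constraint before $t_b$ is an assumed way of expressing that all branches start from one common control sequence.

```latex
\begin{aligned}
\min_{u^{1},\dots,u^{N}} \quad & \sum_{i=1}^{N} p_i \, J\!\left(x^{i}, u^{i}\right) \\
\text{s.t.} \quad & x^{i}_{t+1} = f\!\left(x^{i}_{t}, u^{i}_{t}\right), \quad i = 1,\dots,N, \\
& g_i\!\left(x^{i}_{t}, u^{i}_{t}\right) \le 0, \quad i = 1,\dots,N, \\
& u^{i}_{t} = u^{j}_{t} \quad \text{for all } i, j \text{ and } t < t_b .
\end{aligned}
```

Weighting each branch cost by $p_i$ favors likely hypotheses, while enforcing $g_i$ on every branch regardless of its probability reflects the robust constraint satisfaction mentioned in the abstract. Only the last constraint couples the branches, which is what would make a decomposition into parallel subproblems plausible.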

Path-Tree Optimization in Partially Observable Environments using Rapidly-Exploring Belief-Space Graphs

Apr 09, 2022

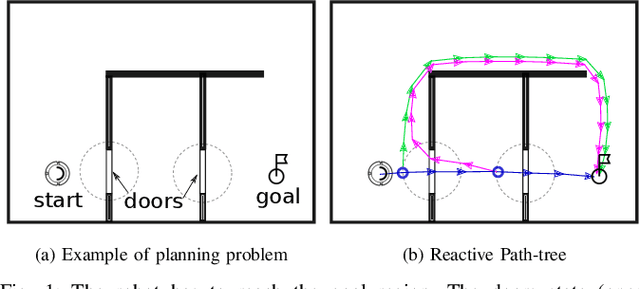

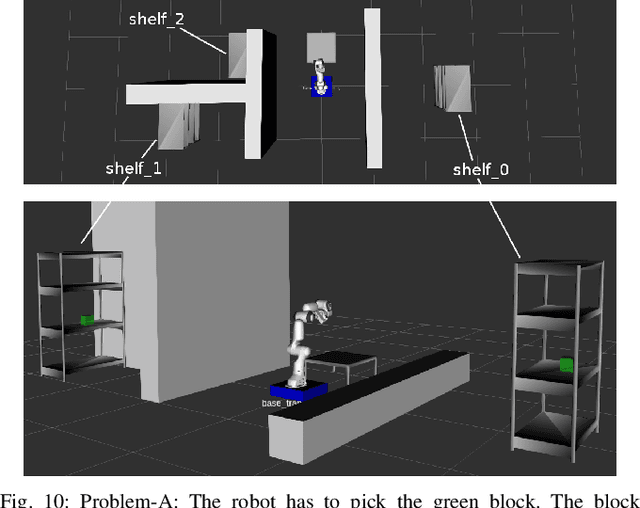

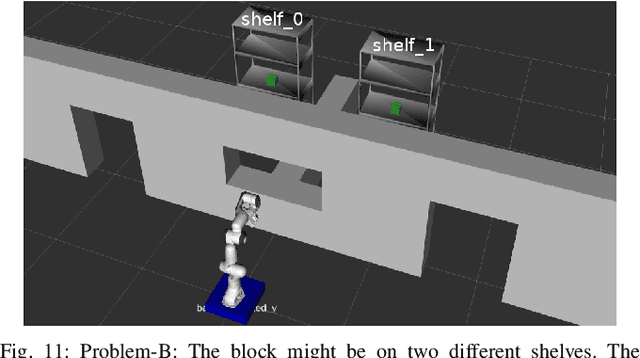

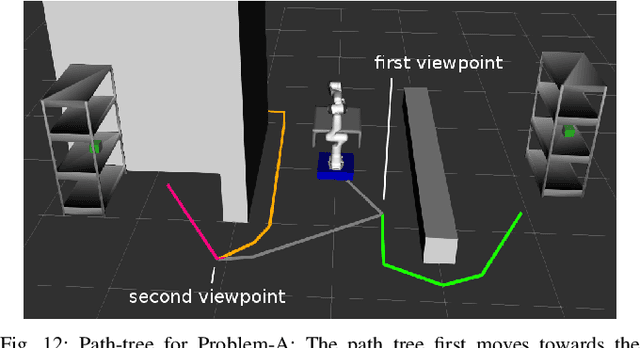

Abstract: Robots often need to solve path planning problems where essential and discrete aspects of the environment are partially observable. This introduces a multi-modality, where the robot must be able to observe and infer the state of its environment. To tackle this problem, we introduce the Path-Tree Optimization (PTO) algorithm which plans a path-tree in belief-space. A path-tree is a tree-like motion with branching points where the robot receives an observation leading to a belief-state update. The robot takes different branches depending on the observation received. The algorithm has three main steps. First, a rapidly-exploring random graph (RRG) on the state space is grown. Second, the RRG is expanded to a belief-space graph by querying the observation model. In a third step, dynamic programming is performed on the belief-space graph to extract a path-tree. The resulting path-tree combines exploration with exploitation, i.e., it balances the need for gaining knowledge about the environment with the need for reaching the goal. We demonstrate the algorithm's capabilities on navigation and mobile manipulation tasks, and show its advantage over a baseline using a task and motion planning approach (TAMP) both in terms of optimality and runtime.
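
The third step described above, dynamic programming on a belief-space graph, can be illustrated with a toy example. This is a minimal sketch under assumptions, not the authors' implementation: the function `extract_path_tree`, the split into `move_edges` and `obs_edges`, and the door scenario are all hypothetical, and the graph is written by hand rather than grown as an RRG. Motion nodes take the cheapest outgoing edge, and observation nodes take the probability-weighted average over their outcomes.

```python
import math
from typing import Dict, List, Set, Tuple


def extract_path_tree(
    move_edges: Dict[str, List[Tuple[str, float]]],  # node -> [(child, cost)]
    obs_edges: Dict[str, List[Tuple[str, float]]],   # node -> [(child, prob > 0)]
    goals: Set[str],
    n_iters: int = 100,
) -> Tuple[Dict[str, float], Dict[str, str]]:
    """Value iteration on a hand-built belief-space graph (illustrative only)."""
    # Collect every node that appears as a source, a child, or a goal.
    nodes = set(move_edges) | set(obs_edges) | set(goals)
    for edges in list(move_edges.values()) + list(obs_edges.values()):
        nodes.update(child for child, _ in edges)

    value = {n: (0.0 if n in goals else math.inf) for n in nodes}
    for _ in range(n_iters):
        changed = False
        for n in nodes:
            if n in goals:
                continue
            if n in obs_edges:
                # Observation node: expected cost over the possible outcomes.
                new = sum(p * value[c] for c, p in obs_edges[n])
            else:
                # Motion node: cheapest edge cost plus cost-to-go of the child.
                new = min(
                    (cost + value[c] for c, cost in move_edges.get(n, [])),
                    default=math.inf,
                )
            if new < value[n]:
                value[n] = new
                changed = True
        if not changed:
            break

    # The path-tree policy: best child at each motion node that can reach a goal.
    policy = {
        n: min(edges, key=lambda e: e[1] + value[e[0]])[0]
        for n, edges in move_edges.items()
        if n not in goals and value[n] < math.inf
    }
    return value, policy


# Toy scenario (assumed): a door is open with probability 0.7.
# The robot can look at the door first, or take a long detour blindly.
move_edges = {
    "start": [("look", 1.0), ("goal", 5.0)],
    "look_open": [("goal", 2.0)],
    "look_closed": [("goal", 6.0)],
}
obs_edges = {"look": [("look_open", 0.7), ("look_closed", 0.3)]}

value, policy = extract_path_tree(move_edges, obs_edges, goals={"goal"})
print(value["start"], policy["start"])
```

In this toy case, looking first has a lower expected cost than the blind detour, so the extracted policy branches at the observation node. That is the exploration versus exploitation trade-off the abstract refers to, reduced to a five-node graph.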
