C. Pino

Astronomical source finding services for the CIRASA visual analytic platform

Oct 18, 2021

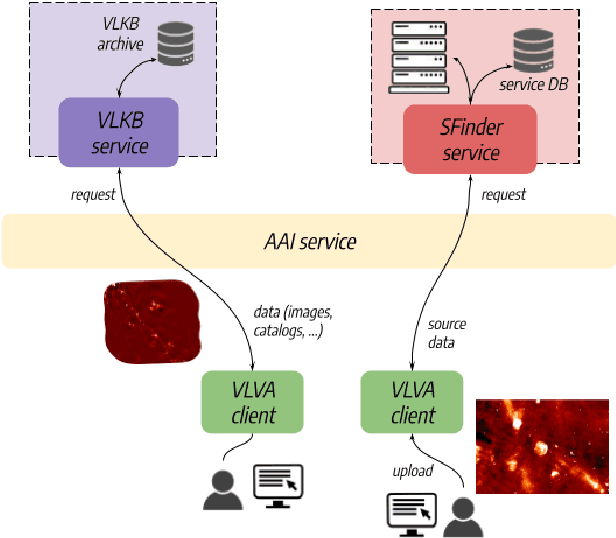

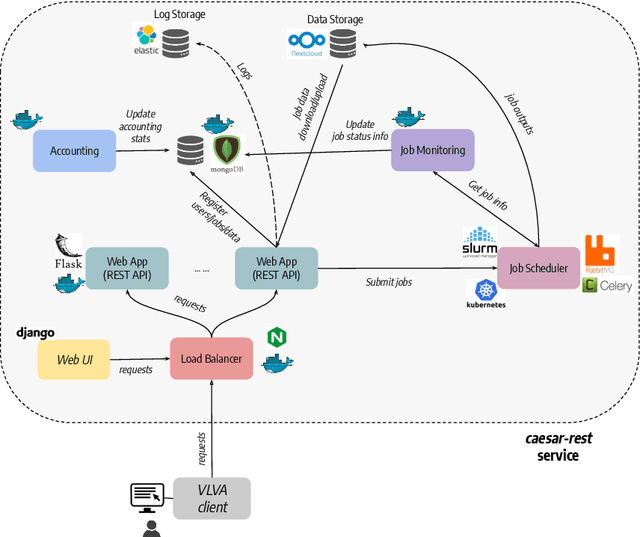

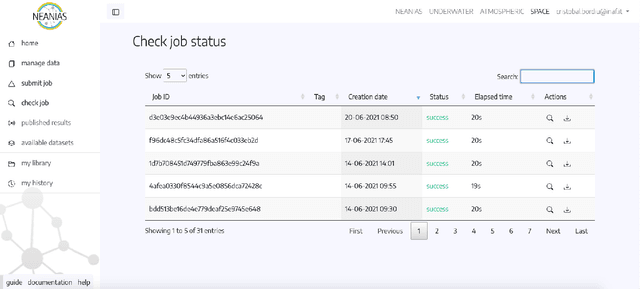

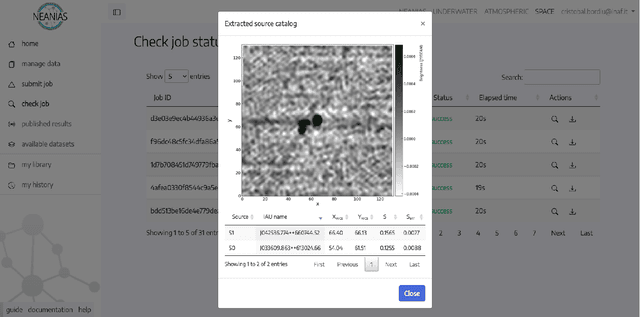

Abstract: Innovative developments in data processing, archiving, analysis, and visualization are now indispensable for dealing with the data deluge expected from next-generation radio astronomy facilities, such as the Square Kilometre Array (SKA) and its precursors. In this context, integrating source extraction and analysis algorithms into data visualization tools could significantly improve and speed up the cataloguing of large-area surveys, boosting astronomer productivity and shortening publication time. To this end, we are developing a visual analytic platform (CIRASA) for advanced source finding and classification, integrating state-of-the-art tools such as the CAESAR source finder, the ViaLactea Visual Analytic (VLVA) and Knowledge Base (VLKB). In this work, we present the project objectives and the platform architecture, focusing on the implemented source finding services.

A Saliency-based Convolutional Neural Network for Table and Chart Detection in Digitized Documents

Apr 17, 2018

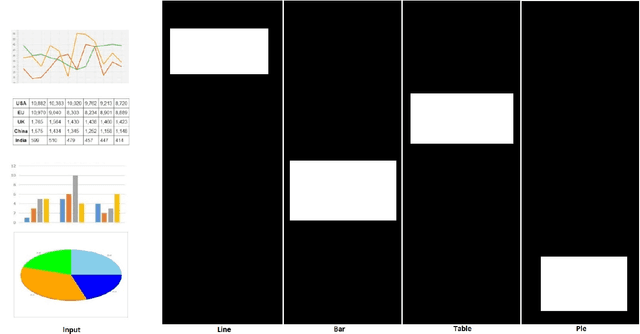

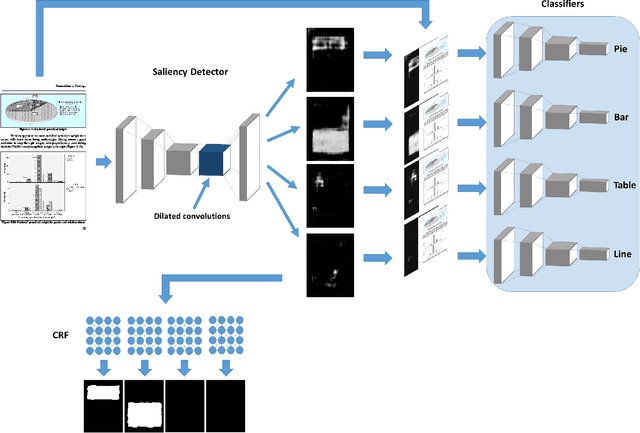

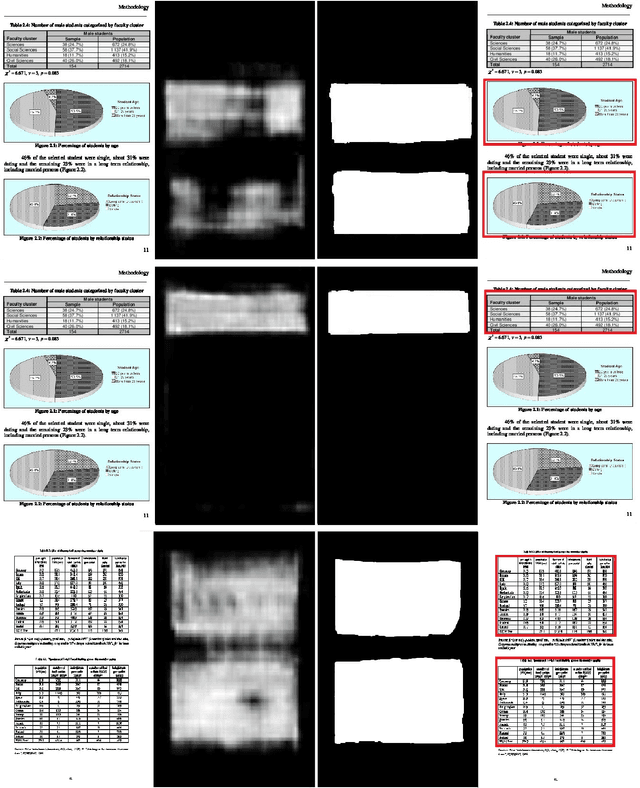

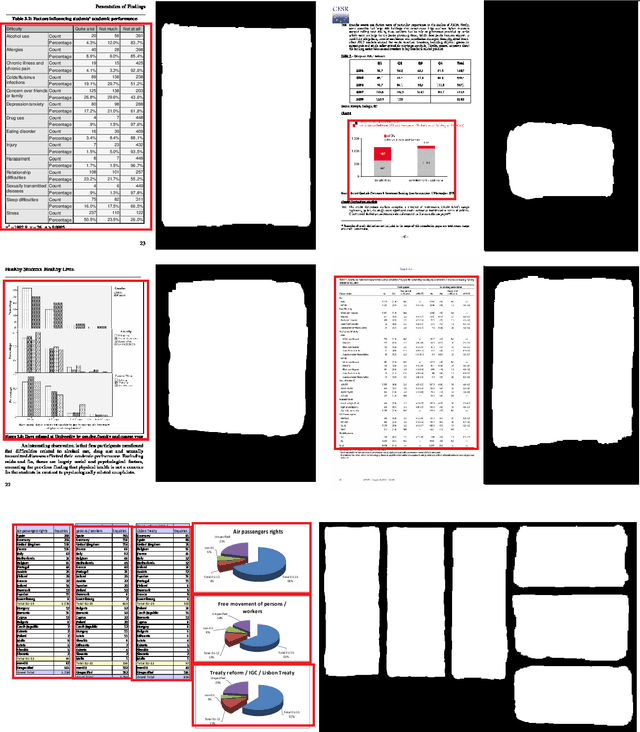

Abstract: Deep Convolutional Neural Networks (DCNNs) have recently been applied successfully to a variety of vision and multimedia tasks, driving the development of novel solutions in several application domains. Document analysis is a particularly promising area for DCNNs: the number of available digital documents has reached unprecedented levels, and humans can no longer discover and retrieve all the information contained in these documents without the help of automation. In this scenario, DCNNs offer a viable way to automate information extraction from digital documents. Within information extraction from documents, detecting tables and charts is particularly important, as they contain a visual summary of the most valuable information in a document. Fully automating visual information extraction from tables and charts requires techniques that localize them and precisely identify their boundaries. In this paper we aim to solve the table/chart detection task through an approach that combines deep convolutional neural networks, graphical models, and saliency concepts. In particular, we propose a saliency-based fully-convolutional neural network that performs multi-scale reasoning on visual cues, followed by a fully-connected conditional random field (CRF), for localizing tables and charts in digital/digitized documents. Performance analysis carried out on an extended version of ICDAR 2013 (with annotated charts as well as tables) shows that our approach yields promising results, outperforming existing models.
