C. Emre Koksal

A Queueing-Theoretic Framework for Dynamic Attack Surfaces: Data-Integrated Risk Analysis and Adaptive Defense

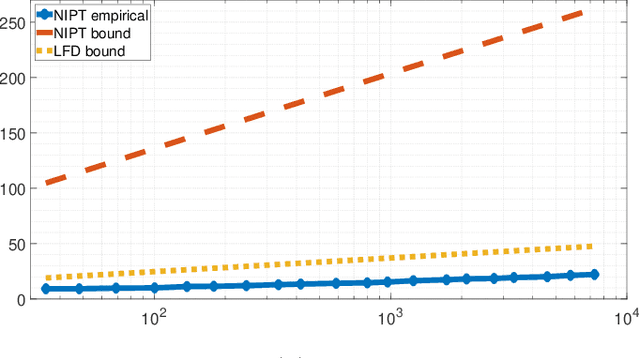

Apr 12, 2026

Abstract: We develop a queueing-theoretic framework to model the temporal evolution of cyber-attack surfaces, where the number of active vulnerabilities is represented as the backlog of a queue. Vulnerabilities arrive as they are discovered or created, and leave the system when they are patched or successfully exploited. Building on this model, we study how automation affects attack and defense dynamics by introducing an AI amplification factor that scales arrival, exploit, and patching rates. Our analysis shows that even symmetric automation can increase the rate of successful exploits. We validate the model using vulnerability data collected from an open source software supply chain and show that it closely matches real-world attack surface dynamics. Empirical results reveal heavy-tailed patching times, which we prove induce long-range dependence in vulnerability backlog and help explain persistent cyber risk. Utilizing our queueing abstraction for the attack surface, we develop a systematic approach for cyber risk mitigation. We formulate the dynamic defense problem as a constrained Markov decision process with resource-budget and switching-cost constraints, and develop a reinforcement learning (RL) algorithm that achieves provably near-optimal regret. Numerical experiments validate the approach and demonstrate that our adaptive RL-based defense policies significantly reduce successful exploits and mitigate heavy-tail queue events. Using trace-driven experiments on the ARVO dataset, we show that the proposed RL-based defense policy reduces the average number of active vulnerabilities in a software supply chain by over 90% compared to existing defense practices, without increasing the overall maintenance budget. Our results allow defenders to quantify cumulative exposure risk under long-range dependent attack dynamics and to design adaptive defense strategies with provable efficiency.
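The queueing abstraction in this abstract can be illustrated with a small simulation. The sketch below is not the paper's model. It assumes, purely for illustration, Poisson discoveries at rate `lam` and independent exponential patch and exploit clocks per vulnerability (rates `mu` and `eps`), with a hypothetical factor `alpha` that scales all three rates symmetrically. The abstract reports heavy-tailed patching times, so the exponential assumption is a deliberate simplification.

```python
import random


def simulate_attack_surface(lam, mu, eps, alpha=1.0, horizon=1000.0, seed=0):
    """Event-driven simulation of a vulnerability backlog (illustrative only).

    lam   : discovery (arrival) rate of vulnerabilities
    mu    : per-vulnerability patch rate (assumed exponential)
    eps   : per-vulnerability exploit rate (assumed exponential)
    alpha : hypothetical amplification factor applied to all three rates
    """
    rng = random.Random(seed)
    lam, mu, eps = alpha * lam, alpha * mu, alpha * eps
    t, backlog = 0.0, 0
    arrivals = patched = exploited = 0
    while True:
        total = lam + backlog * (mu + eps)   # total event rate in this state
        t += rng.expovariate(total)          # time until the next event
        if t > horizon:
            break
        u = rng.random() * total             # choose which event fired
        if u < lam:
            backlog += 1
            arrivals += 1
        elif u < lam + backlog * mu:
            backlog -= 1
            patched += 1
        else:
            backlog -= 1
            exploited += 1
    return {"arrivals": arrivals, "patched": patched,
            "exploited": exploited, "backlog": backlog}


def steady_state_exploit_rate(lam, mu, eps, alpha=1.0):
    """Long-run exploits per unit time under the same assumptions.

    Each vulnerability ends in an exploit with probability eps / (mu + eps),
    which alpha leaves unchanged, while the arrival rate becomes alpha * lam.
    """
    return alpha * lam * eps / (mu + eps)
```

Under these assumptions, symmetric scaling leaves the fraction of vulnerabilities that end in an exploit unchanged, but the number of exploits per unit time grows in proportion to `alpha`, which is one simple way to read the abstract's claim about symmetric automation.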

Organizational Security Resource Estimation via Vulnerability Queueing

Apr 11, 2026

Abstract: We provide an approach that closely estimates an organization's cyber resources directly from vulnerability timestamps, using a non-stationary queueing framework. Traditional attack-surface metrics operate on static snapshots, ignoring the core attack-defense dynamics within information systems, which exhibit bursty, heavy-tailed, and capacity-constrained behavior. Our approach to modeling such dynamics is based on a queueing abstraction of attack surfaces. We utilize a segmentation method to identify piecewise-stationary regimes via Gaussian mixture modeling (GMM) of queue length distributions. We fit segment-specific arrival, service, and resource parameters by minimizing the Kullback-Leibler (KL) divergence between the empirical and estimated distributions. Applied to both large-scale software supply chain data and multi-year private logistics enterprise cyber-ticket workflows, the model estimates organizational resources, measured as the time-varying number of active personnel and the output rate per person, solely from bug report and fix timings for software supply chains, and from discovery and patch timestamps in the enterprise setting. Our results provide 91-96% accuracy in resource estimation, making the dynamic queueing framework a compelling approach for understanding attack surface dynamics. Further, our framework exposes resource bottlenecks, establishing a foundation for predictive workforce planning, patch-race modeling, and proactive cyber-risk management.
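The KL-based fitting step can be sketched in a few lines. This is a toy instance under stated assumptions, not the paper's estimator: it assumes a single stationary segment whose queue length is Poisson with mean `rho` (the stationary law of an infinite-server queue), and it searches a hypothetical grid of candidate values. The function names are illustrative.

```python
import math
from collections import Counter


def poisson_pmf(k, rho):
    """Poisson probability mass at k, computed in log space for stability."""
    return math.exp(-rho + k * math.log(rho) - math.lgamma(k + 1))


def kl_divergence(empirical, rho):
    """KL(empirical || Poisson(rho)) over the observed support."""
    return sum(p * math.log(p / poisson_pmf(k, rho))
               for k, p in empirical.items() if p > 0)


def fit_offered_load(queue_lengths, grid):
    """Return the grid value of rho that minimizes the KL divergence
    between the empirical queue-length distribution and Poisson(rho)."""
    n = len(queue_lengths)
    empirical = {k: c / n for k, c in Counter(queue_lengths).items()}
    return min(grid, key=lambda rho: kl_divergence(empirical, rho))
```

For a Poisson family, minimizing this KL divergence is equivalent to maximum likelihood, so the best grid point is the one closest to the sample mean of the queue lengths. A richer model with separate arrival, service, and staffing parameters would need a multi-dimensional search in place of the one-dimensional grid.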

Bi-Level Online Provisioning and Scheduling with Switching Costs and Cross-Level Constraints

Jan 26, 2026

Abstract: We study a bi-level online provisioning and scheduling problem motivated by network resource allocation, where provisioning decisions are made at a slow time scale while queue-/state-dependent scheduling is performed at a fast time scale. We model this two-time-scale interaction using an upper-level online convex optimization (OCO) problem and a lower-level constrained Markov decision process (CMDP). Existing OCO typically assumes stateless decisions and thus cannot capture MDP network dynamics such as queue evolution. Meanwhile, CMDP algorithms typically assume a fixed constraint threshold, whereas in provisioning-and-scheduling systems, the threshold varies with online budget decisions. To address these gaps, we study bi-level OCO-CMDP learning under switching costs (budget reprovisioning/system reconfiguration) and cross-level constraints that couple budgets to scheduling decisions. Our new algorithm solves this learning problem via several non-trivial developments, including a carefully designed dual feedback that returns the budget multiplier as sensitivity information for the upper-level update and a lower level that solves a budget-adaptive safe exploration problem via an extended occupancy-measure linear program. We establish near-optimal regret and high-probability satisfaction of the cross-level constraints.

Quickest Detection over Sensor Networks with Unknown Post-Change Distribution

Dec 23, 2020

Abstract: We propose a quickest change detection problem over sensor networks where both the subset of sensors undergoing a change and the local post-change distributions are unknown. Each sensor in the network observes a local discrete-time random process over a finite alphabet. Initially, the observations are independent and identically distributed (i.i.d.) with known pre-change distributions, independent of other sensors. At a fixed but unknown change point, a fixed but unknown subset of the sensors undergoes a change and starts observing samples from an unknown distribution. We assume the change can be quantified using concave (or convex) local statistics over the space of distributions. We propose an asymptotically optimal and computationally tractable stopping time for Lorden's criterion. Under this scenario, our proposed method uses a concave global cumulative sum (CUSUM) statistic at the fusion center and suppresses the most likely false alarms using information projection. Finally, we present numerical simulation results for the proposed algorithm.
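For orientation, the classical single-sensor CUSUM rule that this abstract builds on can be written as below. This is the textbook version, which assumes the post-change distribution is known. It is not the paper's concave global statistic, and it involves no fusion center or information projection. The names `pre`, `post`, and `threshold` are illustrative.

```python
import math


def cusum_stopping_time(samples, pre, post, threshold):
    """Classical CUSUM over a finite alphabet.

    samples   : iterable of observed symbols
    pre, post : dicts mapping each symbol to its pre-/post-change probability
    threshold : alarm level for the CUSUM statistic
    Returns the 1-based index of the first alarm, or None if no alarm is raised.
    """
    stat = 0.0
    for n, x in enumerate(samples, start=1):
        # Accumulate the log-likelihood ratio and reset at zero.
        stat = max(0.0, stat + math.log(post[x] / pre[x]))
        if stat >= threshold:
            return n
    return None


pre = {0: 0.5, 1: 0.5}
post = {0: 0.1, 1: 0.9}
samples = [0, 1, 0, 1, 0, 0] + [1] * 10   # the change occurs after sample 6
alarm = cusum_stopping_time(samples, pre, post, threshold=2.0)
```

Raising `threshold` lowers the false-alarm rate at the cost of a longer detection delay, which is the trade-off that Lorden's criterion formalizes.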

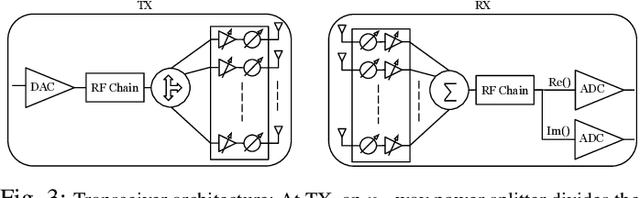

Source Coding Based mmWave Channel Estimation with Deep Learning Based Decoding

Apr 30, 2019

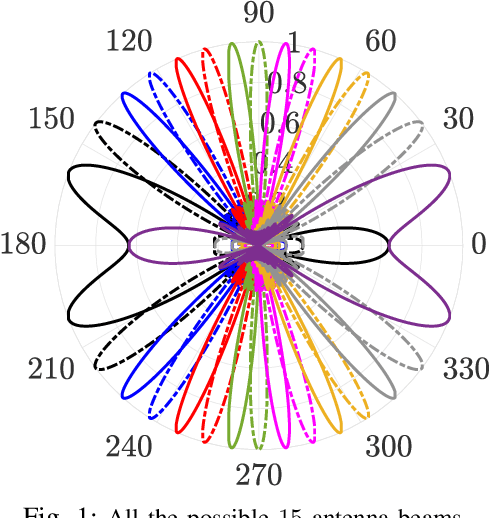

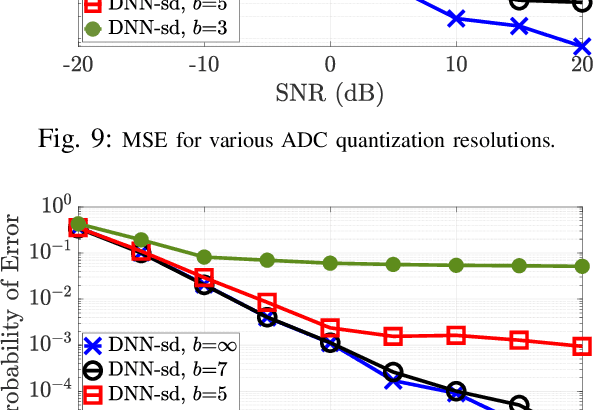

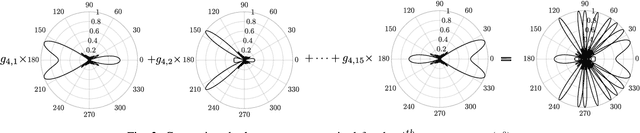

Abstract: mmWave technology is set to become a main feature of next-generation wireless networks, e.g., 5G mobile and WiFi 802.11ad/ay. Among the most fundamental challenges facing mmWave is the ability to overcome its unfavorable propagation characteristics using energy-efficient solutions. This has been addressed using innovative transceiver architectures. However, these architectures have their own limitations when it comes to channel estimation. This paper focuses on channel estimation and poses it as a source compression problem, where channel measurements are designed to mimic an encoded (compressed) version of the channel. We show that linear source codes can significantly reduce the number of channel measurements required to discover all channel paths. We also propose a deep-learning-based approach for decoding the obtained measurements, which enables high-speed and efficient channel discovery.
