C. Donoso-Oliva

ASTROMER: A transformer-based embedding for the representation of light curves

May 02, 2022

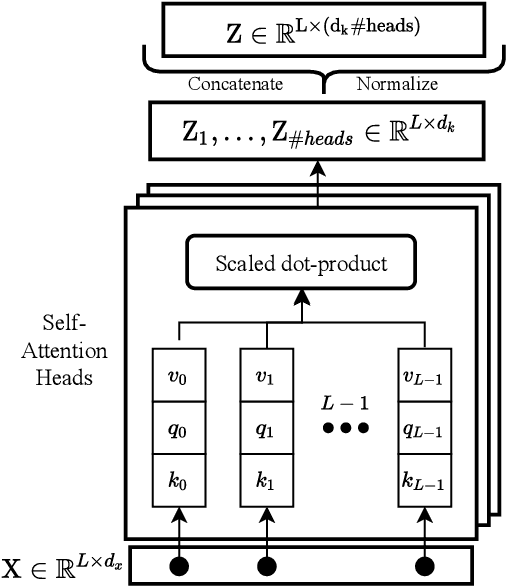

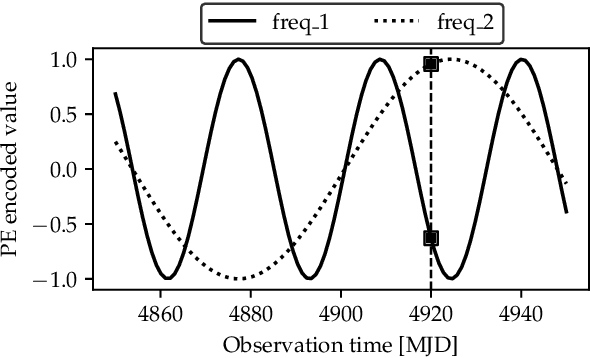

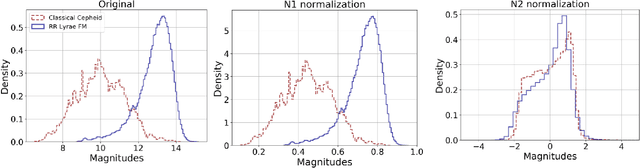

Abstract: Taking inspiration from natural language embeddings, we present ASTROMER, a transformer-based model that creates representations of light curves. ASTROMER was trained on millions of MACHO R-band samples and can easily be fine-tuned to the specific domains of downstream tasks. As an example, this paper shows the benefits of using pre-trained representations to classify variable stars. In addition, we provide a Python library that includes all functionalities employed in this work. The library includes the pre-trained models, which can be used to improve the performance of deep learning models, reducing computational cost while achieving state-of-the-art results.

The effect of phased recurrent units in the classification of multiple catalogs of astronomical lightcurves

Jun 07, 2021

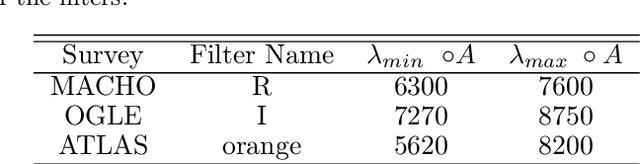

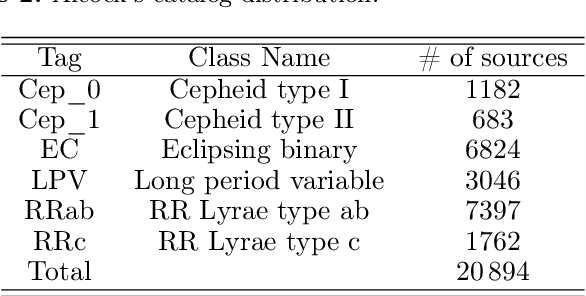

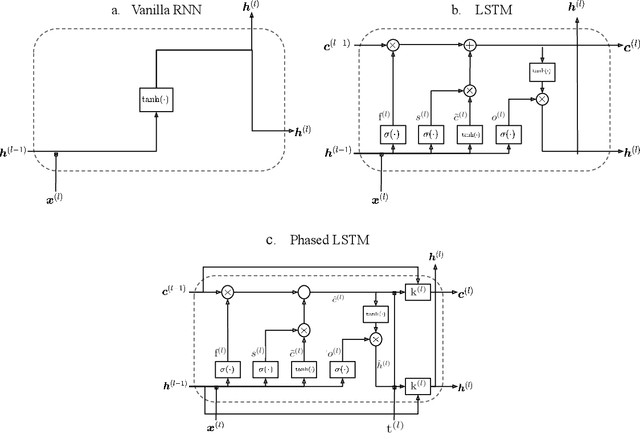

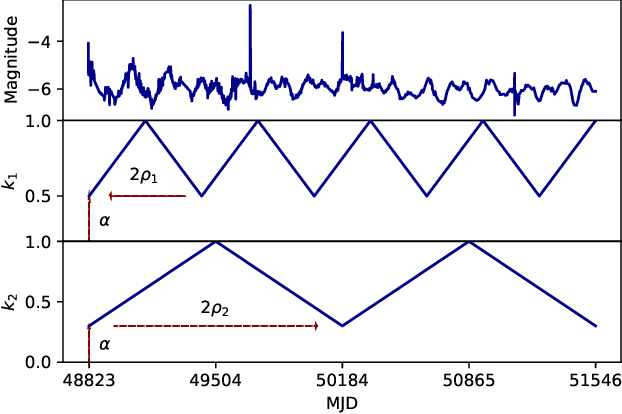

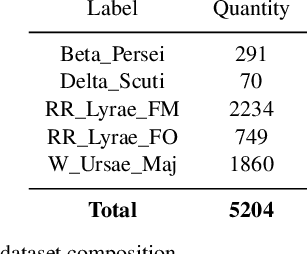

Abstract: In the new era of very large telescopes, where data is crucial for expanding scientific knowledge, we have witnessed many deep learning applications for the automatic classification of lightcurves. Recurrent neural networks (RNNs) are one of the models used in these applications, and the LSTM unit stands out as an excellent choice for representing long time series. In general, RNNs assume observations at evenly spaced discrete times, which may not suit the irregular sampling of lightcurves. A traditional technique for handling irregular sequences is to add the sampling time to the network's input, but this does not guarantee that sampling irregularities are captured during training. Alternatively, the Phased LSTM (PLSTM) unit was designed to address this problem by updating its state using the sampling times explicitly. In this work, we study the effectiveness of LSTM- and PLSTM-based architectures for the classification of astronomical lightcurves. We use seven catalogs containing periodic and nonperiodic astronomical objects. Our results show that LSTM outperformed PLSTM on six of the seven datasets. However, combining both units improves the results on all datasets.
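As a rough illustration of the two approaches contrasted in this abstract, the sketch below shows (a) the baseline of appending the elapsed time since the previous observation to each input and (b) the time gate that lets a Phased LSTM use timestamps explicitly. The gate follows the formulation of the original Phased LSTM paper (Neil et al., 2016), which this abstract does not spell out. In that formulation the period `tau`, the shift `s` and the open ratio `r_on` are learned per neuron; here they are fixed scalars for illustration. The function names and toy values are hypothetical and are not taken from the authors' code.

```python
def time_gate(t, tau, shift, r_on, alpha=1e-3):
    """Openness k_t of a Phased LSTM time gate at timestamp t.

    tau   -- period of the gate's oscillation
    shift -- phase shift s of the oscillation
    r_on  -- fraction of each period during which the gate is open
    alpha -- small leak applied while the gate is closed
    """
    # Phase of t inside the current cycle, in [0, 1).
    phi = ((t - shift) % tau) / tau
    if phi < 0.5 * r_on:
        return 2.0 * phi / r_on        # gate opening (rising ramp)
    if phi < r_on:
        return 2.0 - 2.0 * phi / r_on  # gate closing (falling ramp)
    return alpha * phi                 # gate closed: only a small leak


def gated_update(prev_state, proposed_state, k):
    """Keep the previous state when the gate is (nearly) closed and accept
    the ordinary LSTM proposal when it is fully open (k = 1)."""
    return k * proposed_state + (1.0 - k) * prev_state


def add_time_deltas(times, mags):
    """Baseline alternative: append the time elapsed since the previous
    observation to each input, leaving the recurrent unit unchanged."""
    deltas = [0.0] + [b - a for a, b in zip(times, times[1:])]
    return [(m, d) for m, d in zip(mags, deltas)]


# Irregularly sampled toy light curve (hypothetical values).
times = [0.0, 0.5, 1.0, 1.5, 5.0]
mags = [10.0, 10.2, 10.1, 9.9, 10.3]

gates = [time_gate(t, tau=10.0, shift=0.0, r_on=0.2) for t in times]
print(gates)                        # gate openness at each observation time
print(add_time_deltas(times, mags)) # (magnitude, delta_t) input pairs
```

Because the gate depends only on the timestamps, an observation that falls in the closed phase leaves the state almost unchanged. The baseline, by contrast, hands the time information to the network as an extra feature and leaves it to training to learn how to use it.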
