Benjamin Lange

Unilateral Relationship Revision Power in Human-AI Companion Interaction

Mar 24, 2026

Abstract: When providers update AI companions, users report grief, betrayal, and loss. A growing literature asks whether the norms governing personal relationships extend to these interactions. So what, if anything, is morally significant about them? I argue that human-AI companion interaction is a triadic structure in which the provider exercises constitutive control over the AI. I identify three structural conditions of normatively robust dyads that the norms characteristic of personal relationships presuppose and show that AI companion interactions fail all three. This reveals what I call Unilateral Relationship Revision Power (URRP): the provider can rewrite how the AI interacts from a position where these revisions are not answerable within that interaction. I argue that designing interactions that exhibit URRP is pro tanto wrong because it involves cultivating normative expectations while maintaining conditions under which those expectations cannot be fulfilled. URRP has three implications: i) normative hollowing (commitment is elicited but no agent inside the interaction bears it), ii) displaced vulnerability (the user's exposure is governed by an agent not answerable to her within the interaction), and iii) structural irreconcilability (when trust breaks down, reconciliation is structurally unavailable because the agent who acted and the entity the user interacts with are different). I discuss design principles such as commitment calibration, structural separation, and continuity assurance as external substitutes for the internal constraints the triadic structure removes. The analysis therefore suggests that a central and underexplored problem in relational AI ethics is the structural arrangement of power over the human-AI interaction itself.

Relational Norms for Human-AI Cooperation

Feb 17, 2025

Abstract: How we should design and interact with social artificial intelligence depends on the socio-relational role the AI is meant to emulate or occupy. In human society, relationships such as teacher-student, parent-child, neighbors, siblings, or employer-employee are governed by specific norms that prescribe or proscribe cooperative functions including hierarchy, care, transaction, and mating. These norms shape our judgments of what is appropriate for each partner. For example, workplace norms may allow a boss to give orders to an employee, but not vice versa, reflecting hierarchical and transactional expectations. As AI agents and chatbots powered by large language models are increasingly designed to serve roles analogous to human positions - such as assistant, mental health provider, tutor, or romantic partner - it is imperative to examine whether and how human relational norms should extend to human-AI interactions. Our analysis explores how differences between AI systems and humans, such as the absence of conscious experience and immunity to fatigue, may affect an AI's capacity to fulfill relationship-specific functions and adhere to corresponding norms. This analysis, which is a collaborative effort by philosophers, psychologists, relationship scientists, ethicists, legal experts, and AI researchers, carries important implications for AI systems design, user behavior, and regulation. While we accept that AI systems can offer significant benefits such as increased availability and consistency in certain socio-relational roles, they also risk fostering unhealthy dependencies or unrealistic expectations that could spill over into human-human relationships. We propose that understanding and thoughtfully shaping (or implementing) suitable human-AI relational norms will be crucial for ensuring that human-AI interactions are ethical, trustworthy, and favorable to human well-being.

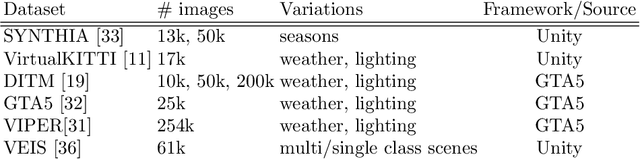

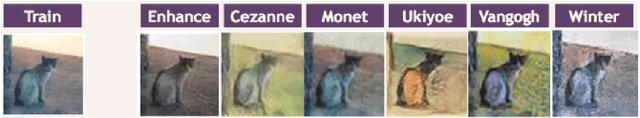

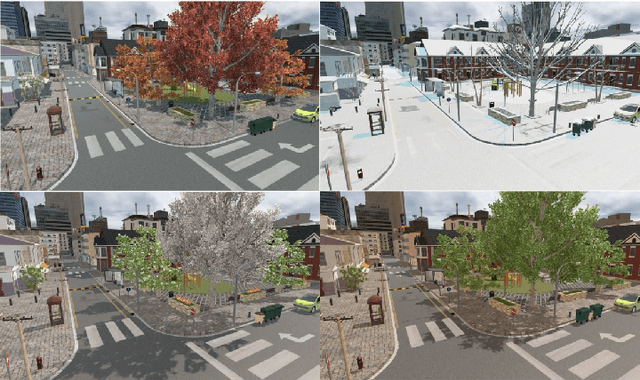

Mixing Real and Synthetic Data to Enhance Neural Network Training -- A Review of Current Approaches

Jul 17, 2020

Abstract: Deep neural networks have gained tremendous importance in many computer vision tasks. However, their power comes at the cost of large amounts of annotated data required for supervised training. In this work we review and compare different techniques available in the literature to improve training results without acquiring additional annotated real-world data. This goal is mostly achieved by applying annotation-preserving transformations to existing data or by synthetically creating more data.
