Benedikt Brückner

IoUCert: Robustness Verification for Anchor-based Object Detectors

Mar 04, 2026

Abstract: While formal robustness verification has seen significant success in image classification, scaling these guarantees to object detection remains notoriously difficult due to complex non-linear coordinate transformations and Intersection-over-Union (IoU) metrics. We introduce IoUCert, a novel formal verification framework designed specifically to overcome these bottlenecks in foundational anchor-based object detection architectures. Focusing on the object localisation component in single-object settings, we propose a coordinate transformation that enables our algorithm to circumvent precision-degrading relaxations of non-linear box prediction functions. This allows us to optimise bounds directly with respect to the anchor box offsets, which in turn enables a novel Interval Bound Propagation method that derives optimal IoU bounds. We demonstrate that our method enables, for the first time, the robustness verification of realistic, anchor-based models including SSD, YOLOv2, and YOLOv3 variants against various input perturbations.
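To make the notion of IoU bounds concrete, the following is a minimal sketch of a naive interval-arithmetic bound on IoU against a fixed ground-truth box. It is not the IoUCert algorithm: the abstract describes optimal bounds obtained through a coordinate transformation, whereas this sketch bounds the intersection and union separately and is therefore sound but generally loose. The function names and the `(x1, y1, x2, y2)` corner format are assumptions made for illustration.

```python
def intersection_area(box, gt):
    """Area of overlap between two (x1, y1, x2, y2) boxes."""
    x1, y1, x2, y2 = box
    gx1, gy1, gx2, gy2 = gt
    w = max(0.0, min(x2, gx2) - max(x1, gx1))
    h = max(0.0, min(y2, gy2) - max(y1, gy1))
    return w * h


def box_area(box):
    x1, y1, x2, y2 = box
    return max(0.0, x2 - x1) * max(0.0, y2 - y1)


def naive_iou_bounds(lower, upper, gt):
    """Sound but loose IoU bounds for a predicted box whose corners lie in
    the elementwise intervals [lower, upper]; gt must have positive area."""
    # Intersection and area both grow when (x1, y1) shrink and (x2, y2) grow,
    # so their extremes are attained at the smallest and largest boxes.
    smallest = (upper[0], upper[1], lower[2], lower[3])
    largest = (lower[0], lower[1], upper[2], upper[3])
    i_min = intersection_area(smallest, gt)
    i_max = intersection_area(largest, gt)
    a_min, a_max = box_area(smallest), box_area(largest)
    g = box_area(gt)
    # union = area + g - intersection; bound numerator and denominator separately.
    iou_lo = i_min / (a_max + g - i_min)
    denom = a_min + g - i_max
    iou_hi = min(1.0, i_max / denom) if denom > 0 else 1.0
    return iou_lo, iou_hi


gt = (0.0, 0.0, 10.0, 10.0)
lo, hi = naive_iou_bounds((0, 0, 9, 9), (1, 1, 10, 10), gt)
```

In this example the true worst-case IoU is 0.64 (the box `(1, 1, 9, 9)`), while the naive lower bound is noticeably smaller. That gap is the kind of imprecision that motivates deriving bounds directly rather than composing relaxations.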

Verification of Neural Networks against Convolutional Perturbations via Parameterised Kernels

Nov 07, 2024

Abstract: We develop a method for the efficient verification of neural networks against convolutional perturbations such as blurring or sharpening. To define input perturbations, we use well-known camera shake, box blur and sharpen kernels. We demonstrate that these kernels can be linearly parameterised in a way that allows the perturbation strength to vary while preserving desired kernel properties. To facilitate their use in neural network verification, we develop an efficient way of convolving a given input with these parameterised kernels. The result of this convolution can be used to encode the perturbation in a verification setting by prepending a linear layer to a given network. This leads to tight bounds and high effectiveness in the resulting verification step. We add further precision by employing input splitting as a branch and bound strategy. We demonstrate that we are able to verify robustness on a number of standard benchmarks where the baseline is unable to provide any safety certificates. To the best of our knowledge, this is the first solution for verifying robustness against specific convolutional perturbations such as camera shake.
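The idea of a linearly parameterised kernel can be illustrated with a small sketch. It assumes one plausible parameterisation, interpolating between the identity kernel and a box blur with a strength `theta` in [0, 1]; the paper's exact parameterisation may differ, and all names here are hypothetical. Because convolution is linear in the kernel, the perturbed image is an affine function of `theta`, which is what allows it to be expressed as a linear layer in front of the network.

```python
import numpy as np


def conv2d_same(image, kernel):
    """Zero-padded 'same' cross-correlation (equals convolution for the
    symmetric kernels used here)."""
    kh, kw = kernel.shape
    ph, pw = kh // 2, kw // 2
    padded = np.pad(image, ((ph, ph), (pw, pw)))
    out = np.zeros(image.shape, dtype=float)
    for i in range(kh):
        for j in range(kw):
            out += kernel[i, j] * padded[i:i + image.shape[0], j:j + image.shape[1]]
    return out


def box_blur_kernel(theta, size=3):
    """Assumed parameterisation: (1 - theta) * identity + theta * box blur.
    The entries sum to 1 for every theta, so brightness is preserved."""
    identity = np.zeros((size, size))
    identity[size // 2, size // 2] = 1.0
    box = np.full((size, size), 1.0 / size**2)
    return (1.0 - theta) * identity + theta * box


rng = np.random.default_rng(0)
x = rng.random((5, 5))

# Precompute once: perturbed(theta) = x + theta * direction.
direction = conv2d_same(x, box_blur_kernel(1.0)) - x
theta = 0.3
via_linear_form = x + theta * direction
via_convolution = conv2d_same(x, box_blur_kernel(theta))
```

Both routes give the same image, so a verifier only needs bounds on the scalar `theta` rather than on every pixel independently.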

Siamese Basis Function Networks for Defect Classification

Dec 09, 2020

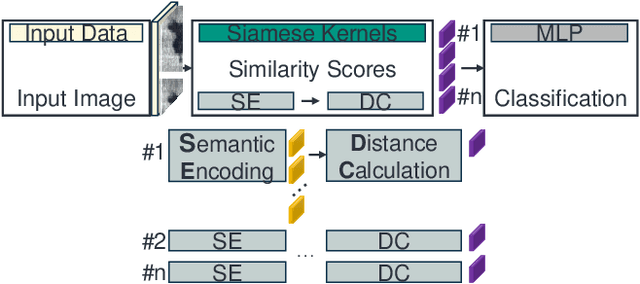

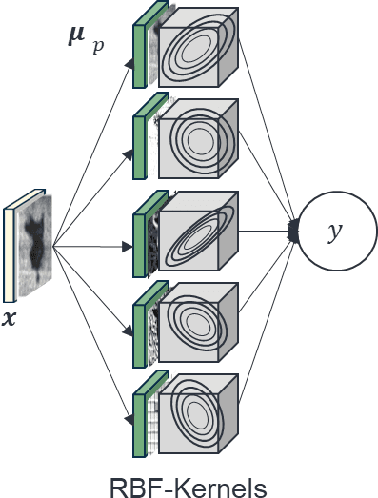

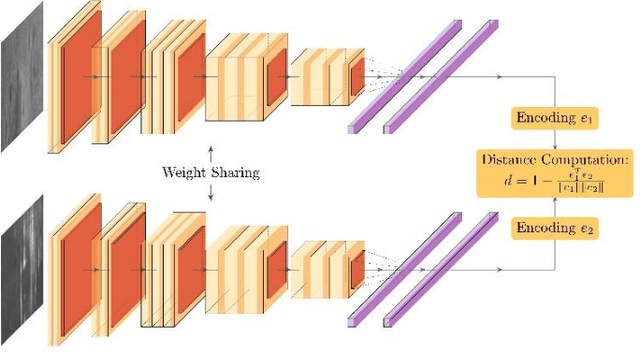

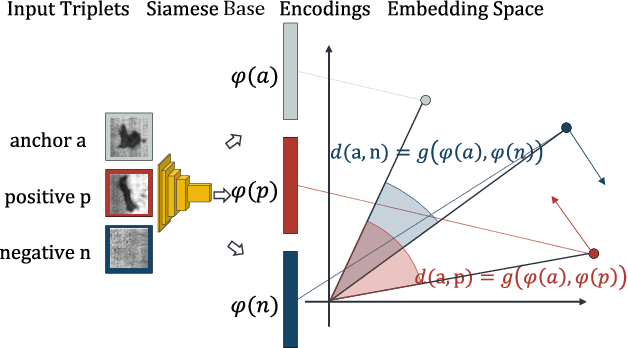

Abstract: Defect classification on metallic surfaces is considered a critical issue since substantial quantities of steel and other metals are processed by the manufacturing industry on a daily basis. The authors propose a new approach in which they introduce so-called Siamese Kernels in a Basis Function Network to create the Siamese Basis Function Network (SBF-Network). The underlying idea is to classify by comparison using similarity scores. This classification is reinforced through efficient deep-learning-based feature extraction methods. First, a center image is assigned to each Siamese Kernel. The Kernels are then trained to generate encodings in a way that enables them to distinguish their center from other images in the dataset. Using this approach, the authors created a form of class-awareness inside the Siamese Kernels. To classify a given image, each Siamese Kernel generates a feature vector for its center as well as for the given image. These vectors represent encodings of the respective images in a lower-dimensional space. The distance between each pair of encodings is then computed using the cosine distance together with radial basis functions. The distances are fed into a multilayer neural network to perform the classification. With this approach the authors achieved outstanding results on the state-of-the-art NEU surface defect dataset.
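The distance step described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes a Gaussian radial basis function with a hypothetical width `sigma`, and it takes the encodings as given instead of producing them with a trained feature extractor.

```python
import numpy as np


def cosine_distance(a, b):
    """1 - cosine similarity of two non-zero vectors."""
    return 1.0 - float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


def rbf_activation(distance, sigma=1.0):
    """Gaussian RBF (an assumption): 1 at distance 0, decaying with distance."""
    return float(np.exp(-distance**2 / (2.0 * sigma**2)))


def kernel_activations(center_encodings, image_encodings, sigma=1.0):
    """One activation per Siamese Kernel: each kernel compares its own
    encoding of its center with its own encoding of the query image."""
    return np.array([
        rbf_activation(cosine_distance(c, x), sigma)
        for c, x in zip(center_encodings, image_encodings)
    ])


centers = [np.array([1.0, 0.0]), np.array([1.0, 0.0])]
queries = [np.array([2.0, 0.0]), np.array([0.0, 3.0])]
activations = kernel_activations(centers, queries)
```

The resulting activation vector, one entry per kernel, is what would be passed to the multilayer network for the final classification.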
