Bastian Boll

A Family of LLMs Liberated from Static Vocabularies

Mar 16, 2026

Abstract:Tokenization is a central component of natural language processing in current large language models (LLMs), enabling models to convert raw text into processable units. Although learned tokenizers are widely adopted, they exhibit notable limitations, including their large, fixed vocabulary sizes and poor adaptability to new domains or languages. We present a family of models with up to 70 billion parameters based on the hierarchical autoregressive transformer (HAT) architecture. In HAT, an encoder transformer aggregates bytes into word embeddings and then feeds them to the backbone, a classical autoregressive transformer. The outputs of the backbone are then cross-attended by the decoder and converted back into bytes. We show that we can reuse available pre-trained models by converting the Llama 3.1 8B and 70B models into the HAT architecture: Llama-3.1-8B-TFree-HAT and Llama-3.1-70B-TFree-HAT are byte-level models whose encoder and decoder are trained from scratch, but where we adapt the pre-trained Llama backbone, i.e., the transformer blocks with the embedding matrix and head removed, to handle word embeddings instead of the original tokens. We also provide a 7B HAT model, Llama-TFree-HAT-Pretrained, trained entirely from scratch on nearly 4 trillion words. The HAT architecture improves text compression by reducing the number of required sequence positions and enhances robustness to intra-word variations, e.g., spelling differences. Through pre-training, as well as subsequent supervised fine-tuning and direct preference optimization in English and German, we show strong proficiency in both languages, improving on the original Llama 3.1 in most benchmarks. We release our models (including 200 pre-training checkpoints) on Hugging Face.
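As a rough illustration of the compression claim in this abstract, the sketch below compares the number of byte positions in a text with the number of word-level positions a backbone would see if bytes were grouped into word chunks. The splitting rule (a run of non-whitespace plus its trailing whitespace) and the function names are assumptions made for illustration; the abstract does not specify how HAT delimits words.

```python
import re

def split_into_word_chunks(text: str) -> list[bytes]:
    """Split text into word-level byte chunks.

    Hypothetical rule: each chunk is a run of non-whitespace characters
    together with the whitespace that follows it. Leading whitespace
    forms its own chunk.
    """
    return [m.group(0).encode("utf-8") for m in re.finditer(r"\S+\s*|\s+", text)]

def position_counts(text: str) -> tuple[int, int]:
    """Return (byte positions, word positions) for the given text."""
    chunks = split_into_word_chunks(text)
    n_bytes = sum(len(chunk) for chunk in chunks)
    return n_bytes, len(chunks)

if __name__ == "__main__":
    sample = "Tokenizer-free models read bytes."
    n_bytes, n_words = position_counts(sample)
    print(f"{n_bytes} byte positions -> {n_words} word positions")
```

Under this assumed rule, the chunks concatenate back to the original byte string, so no information is lost by the grouping; only the number of sequence positions handled by the backbone changes.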

Sigma Flows for Image and Data Labeling and Learning Structured Prediction

Aug 28, 2024

Abstract:This paper introduces the sigma flow model for the prediction of structured labelings of data observed on Riemannian manifolds, including Euclidean image domains as a special case. The approach combines the Laplace-Beltrami framework for image denoising and enhancement, introduced by Sochen, Kimmel and Malladi about 25 years ago, and the assignment flow approach introduced and studied by the authors. The sigma flow arises as the Riemannian gradient flow of generalized harmonic energies and thus is governed by a nonlinear geometric PDE which determines a harmonic map from a closed Riemannian domain manifold to a statistical manifold, equipped with the Fisher-Rao metric from information geometry. A specific ingredient of the sigma flow is the mutual dependency of the Riemannian metric of the domain manifold on the evolving state. This makes the approach amenable to machine learning in a specific way, by realizing this dependency through a mapping with compact time-variant parametrization that can be learned from data. Proof-of-concept experiments demonstrate the expressivity of the sigma flow model and prediction performance. Structural similarities to transformer network architectures and networks generated by the geometric integration of sigma flows are pointed out, which highlights the connection to deep learning and, conversely, may stimulate the use of geometric design principles for structured prediction in other areas of scientific machine learning.

Generative Assignment Flows for Representing and Learning Joint Distributions of Discrete Data

Jun 06, 2024

Abstract:We introduce a novel generative model for the representation of joint probability distributions of a possibly large number of discrete random variables. The approach uses measure transport by randomized assignment flows on the statistical submanifold of factorizing distributions, which also enables efficient sampling from the target distribution and assessment of the likelihood of unseen data points. The embedding of the flow via the Segre map in the meta-simplex of all discrete joint distributions ensures that any target distribution can be represented in principle, whose complexity in practice only depends on the parametrization of the affinity function of the dynamical assignment flow system. Our model can be trained in a simulation-free manner without integration by conditional Riemannian flow matching, using the training data encoded as geodesics in closed form with respect to the e-connection of information geometry. By projecting high-dimensional flow matching in the meta-simplex of joint distributions to the submanifold of factorizing distributions, our approach has strong motivation from first principles of modeling coupled discrete variables. Numerical experiments devoted to distributions of structured image labelings demonstrate the applicability to large-scale problems, which may include discrete distributions in other application areas. Performance measures show that our approach scales better with the increasing number of classes than recent related work.

Generative Modeling of Discrete Joint Distributions by E-Geodesic Flow Matching on Assignment Manifolds

Feb 12, 2024

Abstract:This paper introduces a novel generative model for discrete distributions based on continuous normalizing flows on the submanifold of factorizing discrete measures. Integration of the flow gradually assigns categories and avoids issues of discretizing the latent continuous model, such as rounding and sample truncation. General non-factorizing discrete distributions, capable of representing complex statistical dependencies of structured discrete data, can be approximated by embedding the submanifold into the meta-simplex of all joint discrete distributions and data-driven averaging. Efficient training of the generative model is demonstrated by matching the flow of geodesics of factorizing discrete distributions. Various experiments underline the approach's broad applicability.

Quantum State Assignment Flows

Jun 30, 2023

Abstract:This paper introduces assignment flows for density matrices as state spaces for representing and analyzing data associated with vertices of an underlying weighted graph. Determining an assignment flow by geometric integration of the defining dynamical system causes an interaction of the non-commuting states across the graph, and the assignment of a pure (rank-one) state to each vertex after convergence. Adopting the Riemannian Bogoliubov-Kubo-Mori metric from information geometry leads to closed-form local expressions which can be computed efficiently and implemented in a fine-grained parallel manner. Restriction to the submanifold of commuting density matrices recovers the assignment flows for categorial probability distributions, which merely assign labels from a finite set to each data point. As shown for these flows in our prior work, the novel class of quantum state assignment flows can also be characterized as Riemannian gradient flows with respect to a non-local non-convex potential, after proper reparametrization and under mild conditions on the underlying weight function. This weight function generates the parameters of the layers of a neural network, corresponding to and generated by each step of the geometric integration scheme. Numerical results indicate and illustrate the potential of the novel approach for data representation and analysis, including the representation of correlations of data across the graph by entanglement and tensorization.

On Certified Generalization in Structured Prediction

Jun 15, 2023

Abstract:In structured prediction, target objects have rich internal structure which does not factorize into independent components and violates common i.i.d. assumptions. This challenge becomes apparent through the exponentially large output space in applications such as image segmentation or scene graph generation. We present a novel PAC-Bayesian risk bound for structured prediction wherein the rate of generalization scales not only with the number of structured examples but also with their size. The underlying assumption, conforming to ongoing research on generative models, is that data are generated by the Knothe-Rosenblatt rearrangement of a factorizing reference measure. This allows us to explicitly distill the structure between random output variables into a Wasserstein dependency matrix. Our work makes a preliminary step towards leveraging powerful generative models to establish generalization bounds for discriminative downstream tasks in the challenging setting of structured prediction.

A Nonlocal Graph-PDE and Higher-Order Geometric Integration for Image Labeling

May 09, 2022

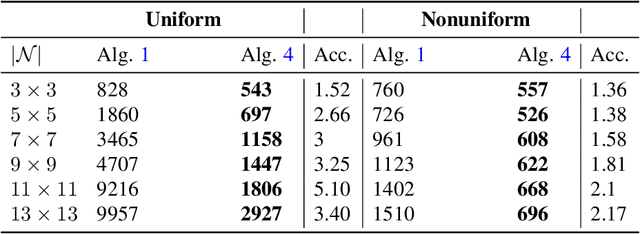

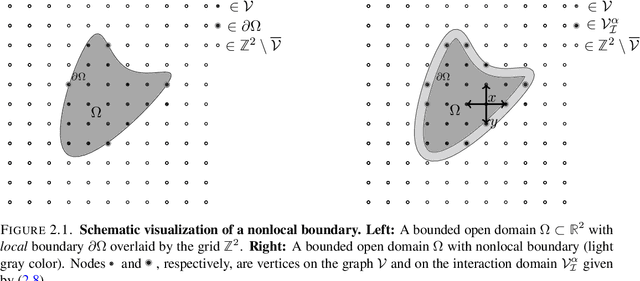

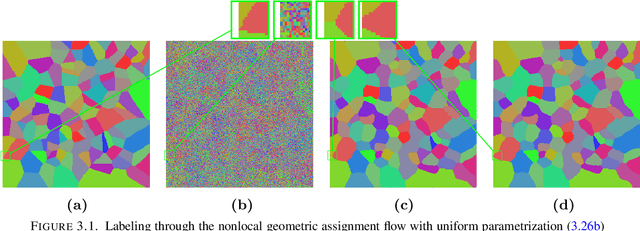

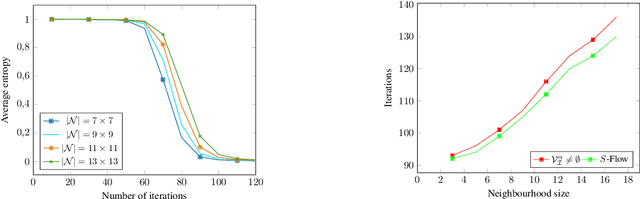

Abstract:This paper introduces a novel nonlocal partial difference equation (PDE) for labeling metric data on graphs. The PDE is derived as nonlocal reparametrization of the assignment flow approach that was introduced in J. Math. Imaging & Vision 58(2), 2017. Due to this parameterization, solving the PDE numerically is shown to be equivalent to computing the Riemannian gradient flow with respect to a nonconvex potential. We devise an entropy-regularized difference-of-convex-functions (DC) decomposition of this potential and show that the basic geometric Euler scheme for integrating the assignment flow is equivalent to solving the PDE by an established DC programming scheme. Moreover, the viewpoint of geometric integration reveals a basic way to exploit higher-order information of the vector field that drives the assignment flow, in order to devise a novel accelerated DC programming scheme. A detailed convergence analysis of both numerical schemes is provided and illustrated by numerical experiments.
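For readers unfamiliar with the "geometric Euler scheme" mentioned in this abstract, the following minimal sketch shows the general idea for a single point on the probability simplex: each step multiplies the current assignment vector componentwise by the exponential of the scaled driving field and renormalizes, so iterates stay strictly positive and sum to one. This is a generic illustration, not the paper's implementation; the function names, the step size, and the constant score vector in the example are hypothetical, and the graph coupling between vertices is omitted.

```python
import math
from typing import Callable, Sequence

def geometric_euler_step(w: Sequence[float], v: Sequence[float], h: float) -> list[float]:
    """One multiplicative step on the simplex: w_new is proportional to w * exp(h * v)."""
    # Shifting v by its maximum avoids overflow; the shift cancels on normalization.
    v_max = max(v)
    unnormalized = [wi * math.exp(h * (vi - v_max)) for wi, vi in zip(w, v)]
    total = sum(unnormalized)
    return [u / total for u in unnormalized]

def integrate(
    w0: Sequence[float],
    field: Callable[[Sequence[float]], Sequence[float]],
    h: float,
    steps: int,
) -> list[float]:
    """Apply the step repeatedly, re-evaluating the driving field at each iterate."""
    w = list(w0)
    for _ in range(steps):
        w = geometric_euler_step(w, field(w), h)
    return w

if __name__ == "__main__":
    # Hypothetical example: three labels, uniform start, constant scores favoring label 0.
    scores = [1.0, 0.2, 0.0]
    w_final = integrate([1 / 3, 1 / 3, 1 / 3], lambda w: scores, h=0.5, steps=40)
    print(w_final)
```

In this toy setting the iterate moves toward the vertex of the simplex belonging to the highest-scoring label, which mirrors how an assignment flow converges to a labeling.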

Self-Certifying Classification by Linearized Deep Assignment

Jan 26, 2022

Abstract:We propose a novel class of deep stochastic predictors for classifying metric data on graphs within the PAC-Bayes risk certification paradigm. Classifiers are realized as linearly parametrized deep assignment flows with random initial conditions. Building on the recent PAC-Bayes literature and data-dependent priors, this approach makes it possible (i) to use risk bounds as training objectives for learning posterior distributions on the hypothesis space and (ii) to compute tight out-of-sample risk certificates of randomized classifiers more efficiently than related work. Comparison with empirical test set errors illustrates the performance and practicality of this self-certifying classification method.
