Azaria Paz

On Testing Whether an Embedded Bayesian Network Represents a Probability Model

Feb 27, 2013

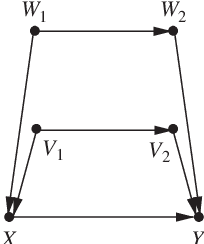

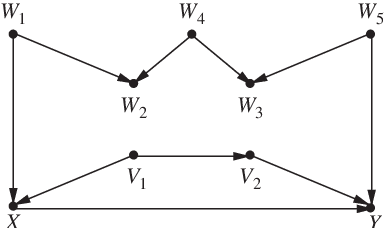

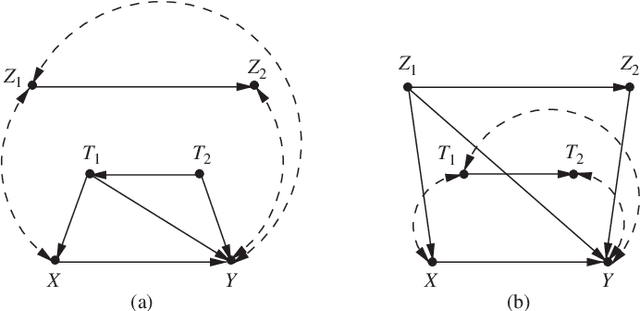

Abstract:Testing the validity of probabilistic models containing unmeasured (hidden) variables is shown to be a hard task. We show that the task of testing whether models are structurally incompatible with the data at hand, requires an exponential number of independence evaluations, each of the form: "X is conditionally independent of Y, given Z." In contrast, a linear number of such evaluations is required to test a standard Bayesian network (one per vertex). On the positive side, we show that if a network with hidden variables G has a tree skeleton, checking whether G represents a given probability model P requires the polynomial number of such independence evaluations. Moreover, we provide an algorithm that efficiently constructs a tree-structured Bayesian network (with hidden variables) that represents P if such a network exists, and further recognizes when such a network does not exist.

Confounding Equivalence in Causal Inference

Mar 15, 2012

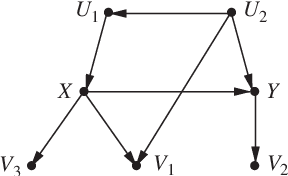

Abstract:The paper provides a simple test for deciding, from a given causal diagram, whether two sets of variables have the same bias-reducing potential under adjustment. The test requires that one of the following two conditions holds: either (1) both sets are admissible (i.e., satisfy the back-door criterion) or (2) the Markov boundaries surrounding the manipulated variable(s) are identical in both sets. Applications to covariate selection and model testing are discussed.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge