Arpit Jadon

Test-Time Modification: Inverse Domain Transformation for Robust Perception

Dec 15, 2025

Abstract:Generative foundation models contain broad visual knowledge and can produce diverse image variations, making them particularly promising for advancing domain generalization tasks. While they can be used for training data augmentation, synthesizing comprehensive target-domain variations remains slow, expensive, and incomplete. We propose an alternative: using diffusion models at test time to map target images back to the source distribution where the downstream model was trained. This approach requires only a source domain description, preserves the task model, and eliminates large-scale synthetic data generation. We demonstrate consistent improvements across segmentation, detection, and classification tasks under challenging environmental shifts in real-to-real domain generalization scenarios with unknown target distributions. Our analysis spans multiple generative and downstream models, including an ensemble variant for enhanced robustness. The method achieves substantial relative gains: 137% on BDD100K-Night, 68% on ImageNet-R, and 62% on DarkZurich.

FireNet: A Specialized Lightweight Fire & Smoke Detection Model for Real-Time IoT Applications

May 28, 2019

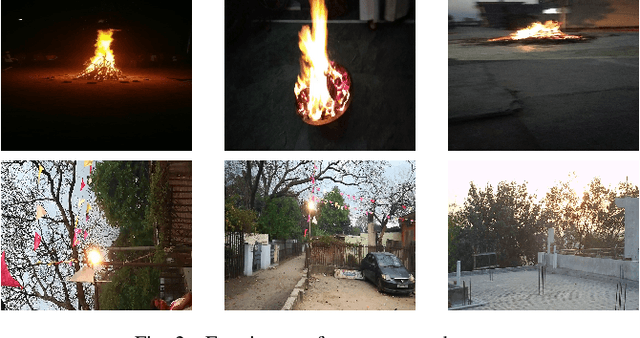

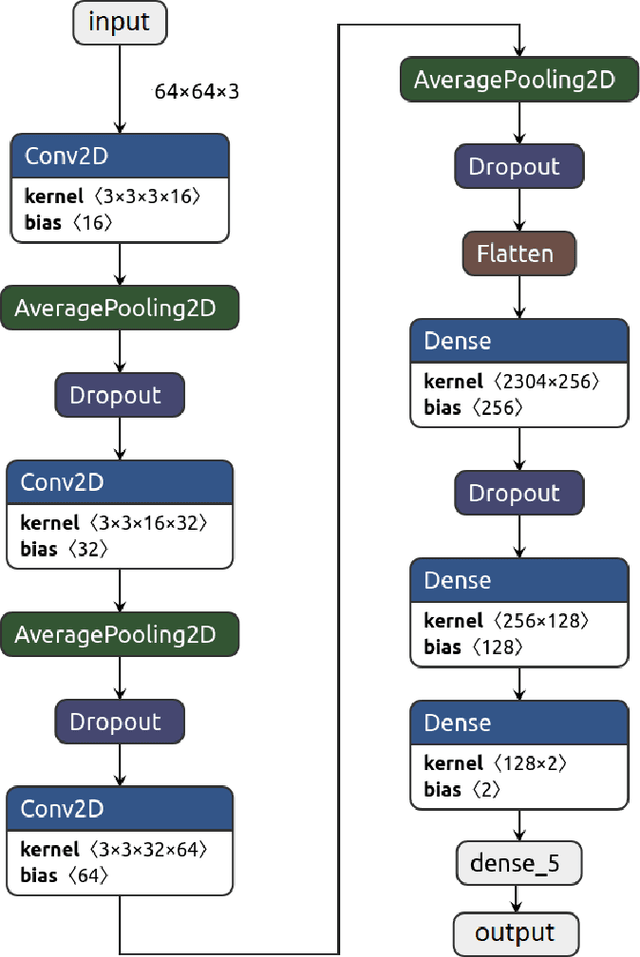

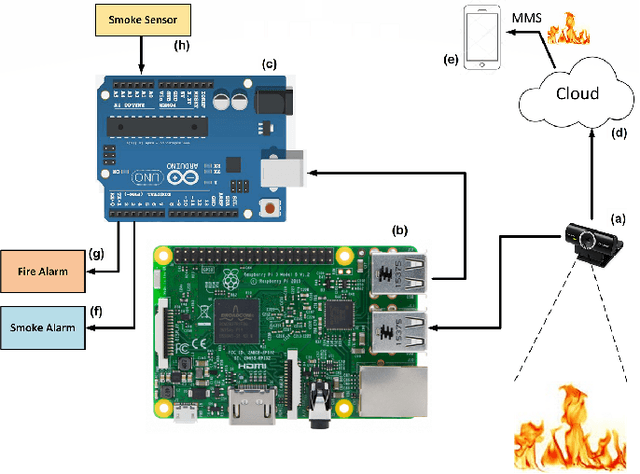

Abstract:Fire disasters typically result in lot of loss to life and property. It is therefore imperative that precise, fast, and possibly portable solutions to detect fire be made readily available to the masses at reasonable prices. There have been several research attempts to design effective and appropriately priced fire detection systems with varying degrees of success. However, most of them demonstrate a trade-off between performance and model size (which decides the model's ability to be installed on portable devices). The work presented in this paper is an attempt to deal with both the performance and model size issues in one design. Toward that end, a `designed-from-scratch' neural network, named FireNet, is proposed which is worthy on both the counts: (i) it has better performance than existing counterparts, and (ii) it is lightweight enough to be deploy-able on embedded platforms like Raspberry Pi. Performance evaluations on a standard dataset, as well as our own newly introduced custom-compiled fire dataset, are extremely encouraging.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge