Anxiao

Andrew

Functional Error Correction for Robust Neural Networks

Jan 12, 2020

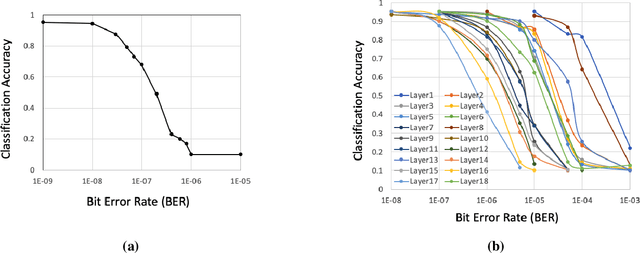

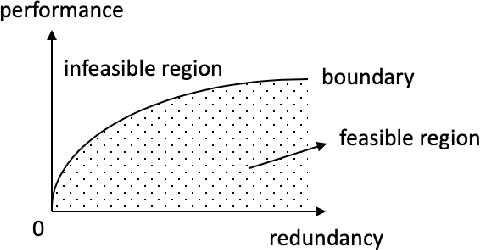

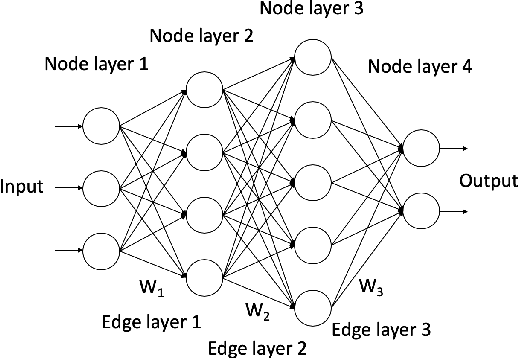

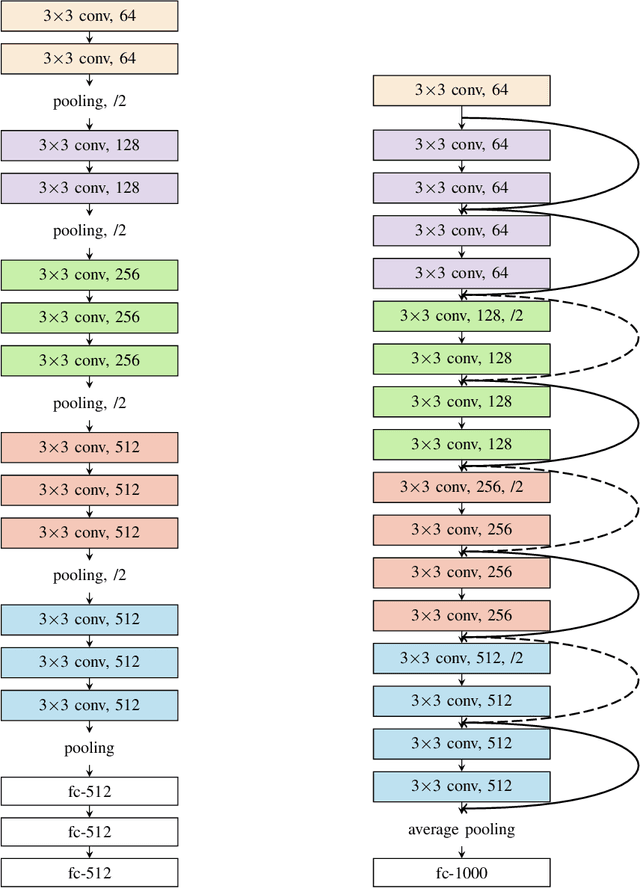

Abstract:When neural networks (NeuralNets) are implemented in hardware, their weights need to be stored in memory devices. As noise accumulates in the stored weights, the NeuralNet's performance will degrade. This paper studies how to use error correcting codes (ECCs) to protect the weights. Different from classic error correction in data storage, the optimization objective is to optimize the NeuralNet's performance after error correction, instead of minimizing the Uncorrectable Bit Error Rate in the protected bits. That is, by seeing the NeuralNet as a function of its input, the error correction scheme is function-oriented. A main challenge is that a deep NeuralNet often has millions to hundreds of millions of weights, causing a large redundancy overhead for ECCs, and the relationship between the weights and its NeuralNet's performance can be highly complex. To address the challenge, we propose a Selective Protection (SP) scheme, which chooses only a subset of important bits for ECC protection. To find such bits and achieve an optimized tradeoff between ECC's redundancy and NeuralNet's performance, we present an algorithm based on deep reinforcement learning. Experimental results verify that compared to the natural baseline scheme, the proposed algorithm achieves substantially better performance for the functional error correction task.

U-Finger: Multi-Scale Dilated Convolutional Network for Fingerprint Image Denoising and Inpainting

Aug 05, 2018

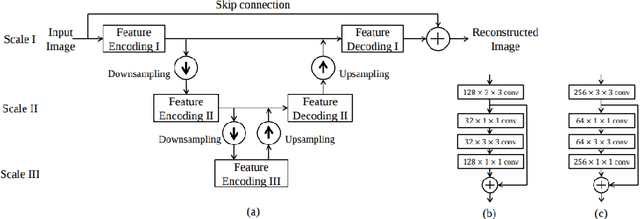

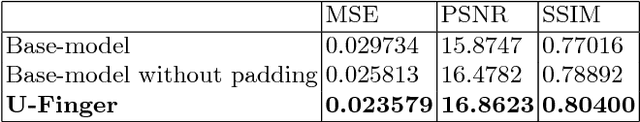

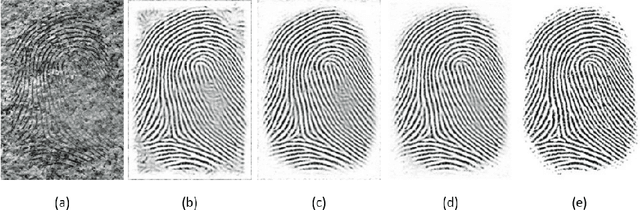

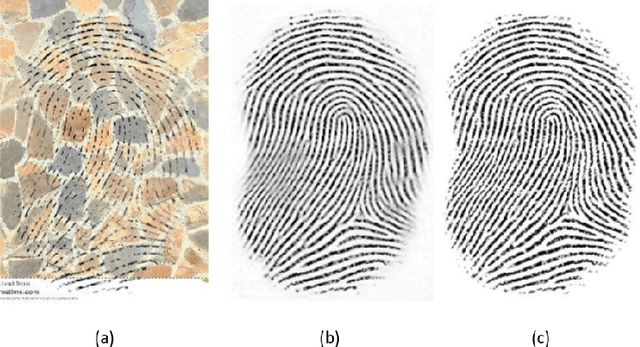

Abstract:This paper studies the challenging problem of fingerprint image denoising and inpainting. To tackle the challenge of suppressing complicated artifacts (blur, brightness, contrast, elastic transformation, occlusion, scratch, resolution, rotation, and so on) while preserving fine textures, we develop a multi-scale convolutional network, termed U- Finger. Based on the domain expertise, we show that the usage of dilated convolutions as well as the removal of padding have important positive impacts on the final restoration performance, in addition to multi-scale cascaded feature modules. Our model achieves the overall ranking of No.2 in the ECCV 2018 Chalearn LAP Inpainting Competition Track 3 (Fingerprint Denoising and Inpainting). Among all participating teams, we obtain the MSE of 0.0231 (rank 2), PSNR 16.9688 dB (rank 2), and SSIM 0.8093 (rank 3) on the hold-out testing set.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge