Anjith George

Cross Modal Focal Loss for RGBD Face Anti-Spoofing

Mar 01, 2021

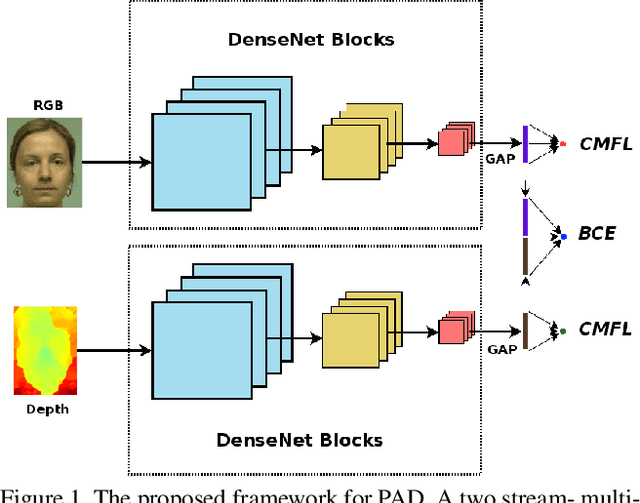

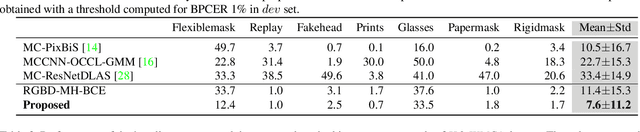

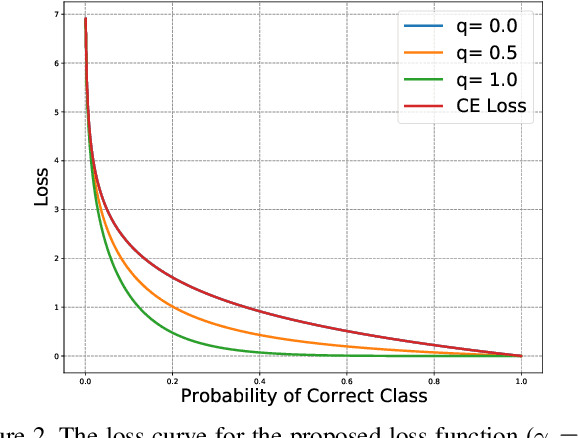

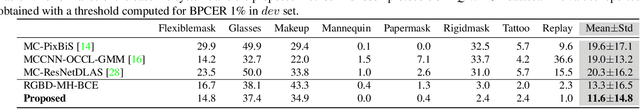

Abstract: Automatic methods for detecting presentation attacks are essential to ensure the reliable use of facial recognition technology. Most of the methods available in the literature for presentation attack detection (PAD) fail to generalize to unseen attacks. In recent years, multi-channel methods have been proposed to improve the robustness of PAD systems. Often, only a limited amount of data is available for additional channels, which limits the effectiveness of these methods. In this work, we present a new framework for PAD that uses RGB and depth channels together with a novel loss function. The new architecture uses complementary information from the two modalities while reducing the impact of overfitting. Essentially, a cross-modal focal loss function is proposed to modulate the loss contribution of each channel as a function of the confidence of individual channels. Extensive evaluations on two publicly available datasets demonstrate the effectiveness of the proposed approach.
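To make the idea of confidence-dependent modulation concrete, here is a minimal sketch in plain Python. The standard focal loss scales the log loss by `(1 - p) ** gamma`, where `p` is the probability assigned to the true class. The cross-modal variant below replaces `p` in that factor with a weight computed from both channels. The weighting function `cross_modal_weight`, the parameter names, and the default values are illustrative assumptions; the abstract does not specify the exact form used in the paper.

```python
import math

EPS = 1e-12  # guards against log(0)


def focal_loss(p, gamma=2.0, alpha=1.0):
    """Standard focal loss for true-class probability p of one channel."""
    p = min(max(p, EPS), 1.0)
    return -alpha * (1.0 - p) ** gamma * math.log(p)


def cross_modal_weight(p, q):
    """Hypothetical joint-confidence weight (an assumption for illustration).

    Harmonic mean of p and q, scaled by the other channel's probability q,
    so the weight is high only when the other channel is confident.
    """
    if p + q == 0.0:
        return 0.0
    return q * (2.0 * p * q) / (p + q)


def cross_modal_focal_loss(p, q, gamma=2.0, alpha=1.0):
    """Loss for the channel with true-class probability p.

    q is the true-class probability from the other channel. The modulating
    factor shrinks the loss when the joint weight is high.
    """
    w = cross_modal_weight(p, q)
    p = min(max(p, EPS), 1.0)
    return -alpha * (1.0 - w) ** gamma * math.log(p)


if __name__ == "__main__":
    # Same RGB confidence, different depth confidence.
    print(cross_modal_focal_loss(0.6, 0.95))
    print(cross_modal_focal_loss(0.6, 0.10))
```

Under this assumed weighting, a sample that the other channel already classifies confidently contributes less to the current channel's loss, while a sample that the other channel gets wrong keeps close to its full log loss.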

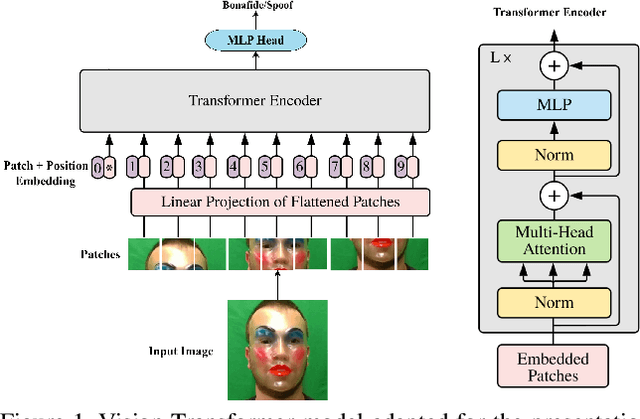

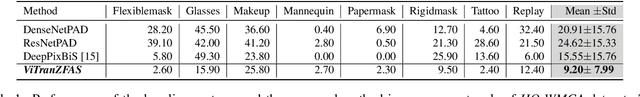

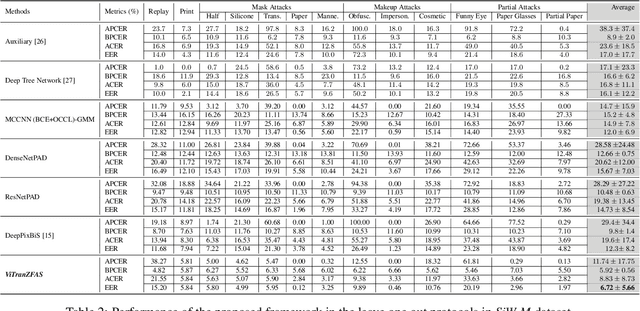

On the Effectiveness of Vision Transformers for Zero-shot Face Anti-Spoofing

Nov 16, 2020

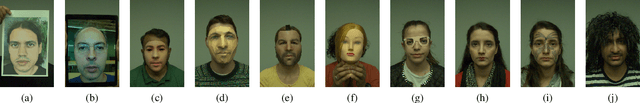

Abstract: The vulnerability of face recognition systems to presentation attacks has limited their application in security-critical scenarios. Automatic methods of detecting such malicious attempts are essential for the safe use of facial recognition technology. Although various methods have been suggested for detecting such attacks, most of them overfit the training set and fail to generalize to unseen attacks and environments. In this work, we use transfer learning from the vision transformer model for the zero-shot anti-spoofing task. The effectiveness of the proposed approach is demonstrated through experiments on publicly available datasets. The proposed approach outperforms the state-of-the-art methods in the zero-shot protocols of the HQ-WMCA and SiW-M datasets by a large margin. The model also achieves a significant boost in cross-database performance.

The High-Quality Wide Multi-Channel Attack database

Sep 21, 2020

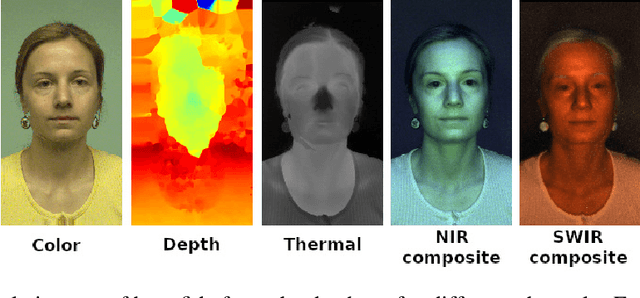

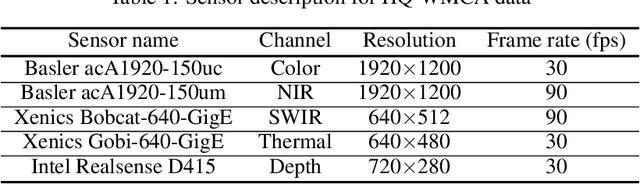

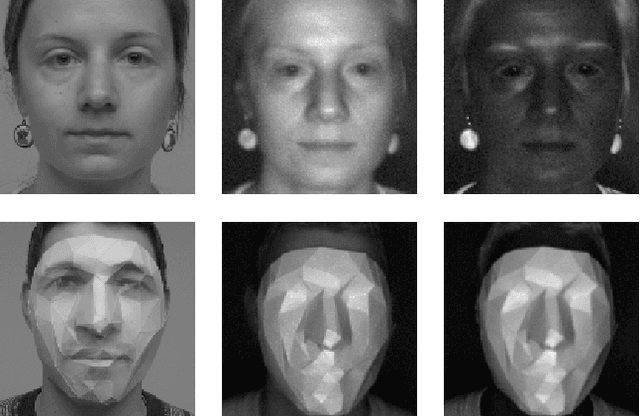

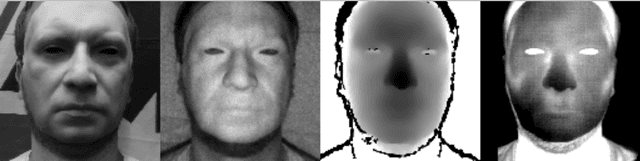

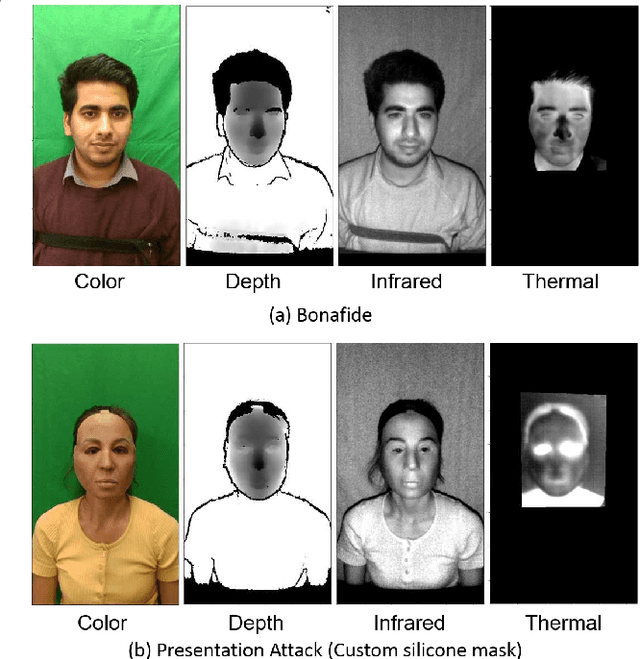

Abstract: The High-Quality Wide Multi-Channel Attack (HQ-WMCA) database extends the previous Wide Multi-Channel Attack (WMCA) database with more channels, including color, depth, thermal, infrared (spectra), and short-wave infrared (spectra), and with a wide variety of attacks.

Deep Models and Shortwave Infrared Information to Detect Face Presentation Attacks

Jul 22, 2020

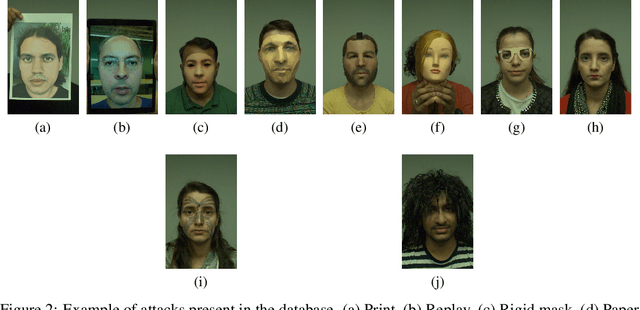

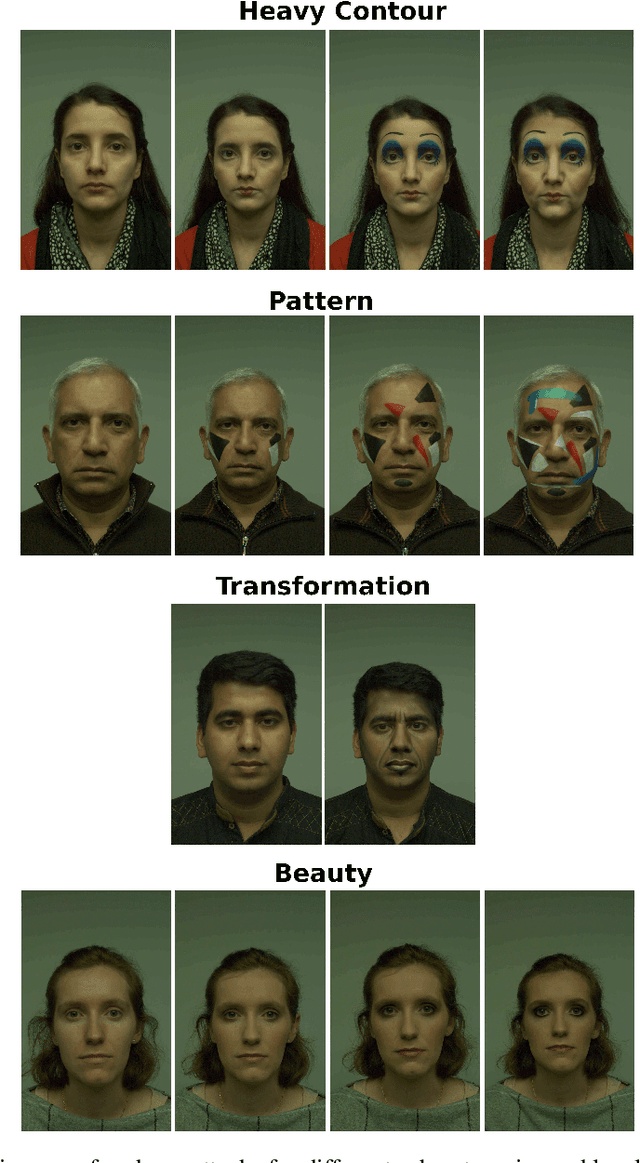

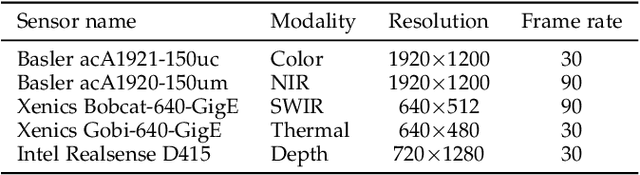

Abstract: This paper addresses the problem of face presentation attack detection using different image modalities. In particular, the use of short-wave infrared (SWIR) imaging is considered. Face presentation attack detection is performed using recent models based on Convolutional Neural Networks that take only carefully selected SWIR image differences as input. The experiments show superior performance over similar models acting on either color images or a combination of different modalities (visible, NIR, thermal and depth), as well as over an SVM-based classifier acting on SWIR image differences. Experiments have been carried out on a new public and freely available database containing a wide variety of attacks. Video sequences have been recorded with several sensors, resulting in 14 different streams in the visible, NIR, SWIR and thermal spectra, as well as depth data. The best proposed approach is able to almost perfectly detect all impersonation attacks while ensuring low bonafide classification errors. On the other hand, the results show that obfuscation attacks are more difficult to detect. We hope that the proposed database will foster research on this challenging problem. Finally, all the code and instructions to reproduce the presented experiments are made available to the research community.

Learning One Class Representations for Face Presentation Attack Detection using Multi-channel Convolutional Neural Networks

Jul 22, 2020

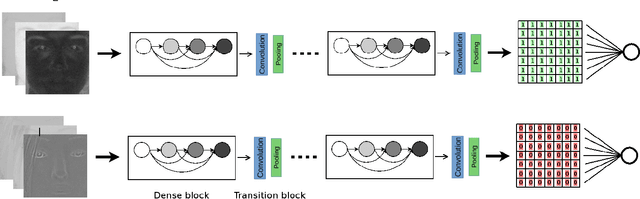

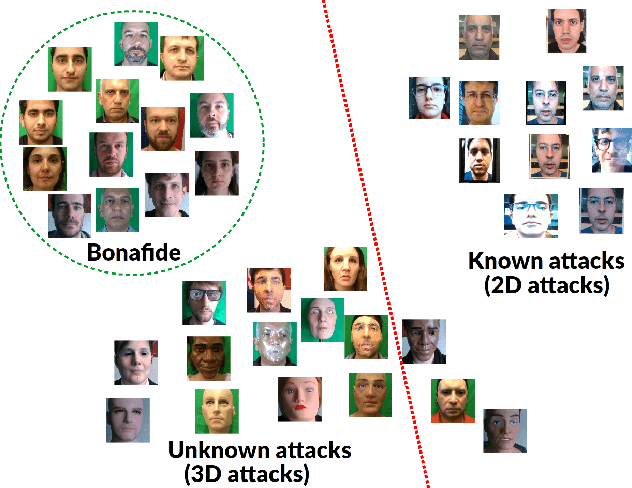

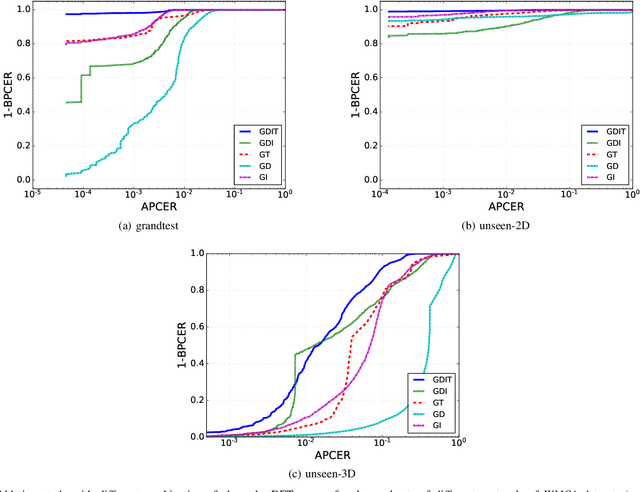

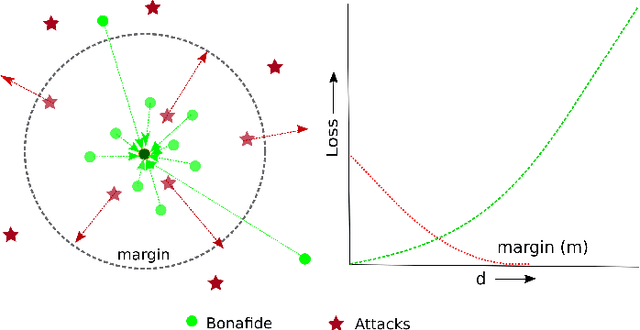

Abstract: Face recognition has evolved as a widely used biometric modality. However, its vulnerability to presentation attacks poses a significant security threat. Though presentation attack detection (PAD) methods try to address this issue, they often fail to generalize to unseen attacks. In this work, we propose a new framework for PAD using a one-class classifier, where the representation used is learned with a Multi-Channel Convolutional Neural Network (MCCNN). A novel loss function is introduced, which forces the network to learn a compact embedding for the bonafide class while keeping it far from the representation of attacks. A one-class Gaussian Mixture Model is used on top of these embeddings for the PAD task. The proposed framework introduces a novel approach to learn a robust PAD system from bonafide and available (known) attack classes. This is particularly important as collecting bonafide data and simpler attacks is much easier than collecting a wide variety of expensive attacks. The proposed system is evaluated on the publicly available WMCA multi-channel face PAD database, which contains a wide variety of 2D and 3D attacks. Further, we have performed experiments with the MLFP and SiW-M datasets using RGB channels only. Superior performance in unseen attack protocols shows the effectiveness of the proposed approach. Software, data, and protocols to reproduce the results are made publicly available.

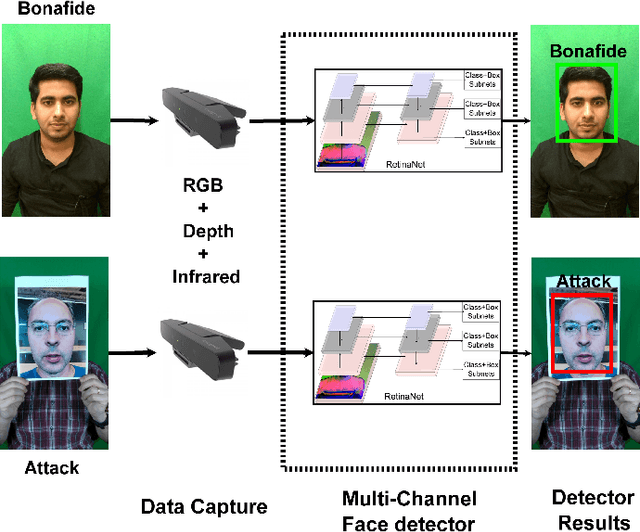

Can Your Face Detector Do Anti-spoofing? Face Presentation Attack Detection with a Multi-Channel Face Detector

Jun 30, 2020

Abstract: In a typical face recognition pipeline, the task of the face detector is to localize the face region. However, the face detector localizes regions that look like a face, irrespective of the liveness of the face, which makes the entire system susceptible to presentation attacks. In this work, we try to reformulate the task of the face detector to detect real faces, thus eliminating the threat of presentation attacks. While this task could be challenging with visible spectrum images alone, we leverage the multi-channel information available from off-the-shelf devices (such as color, depth, and infrared channels) to design a multi-channel face detector. The proposed system can be used as a live-face detector, obviating the need for a separate presentation attack detection module and making the system reliable in practice without any additional computational overhead. The main idea is to leverage a single-stage object detection framework, with a joint representation obtained from different channels for the PAD task. We have evaluated our approach on the multi-channel WMCA dataset, which contains a wide variety of attacks, to show the effectiveness of the proposed framework.

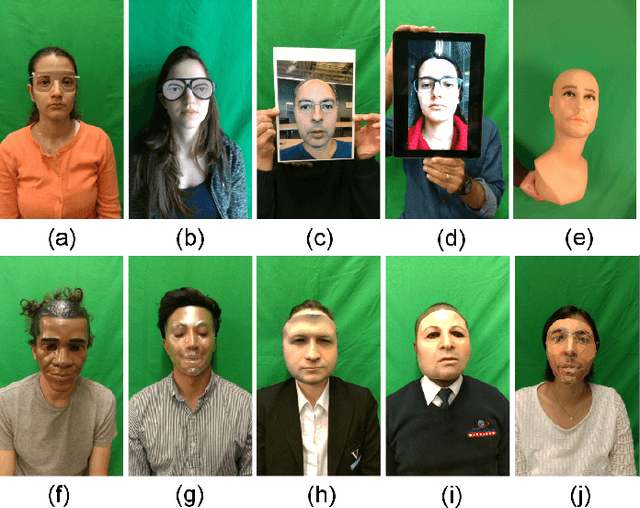

Biometric Face Presentation Attack Detection with Multi-Channel Convolutional Neural Network

Sep 19, 2019

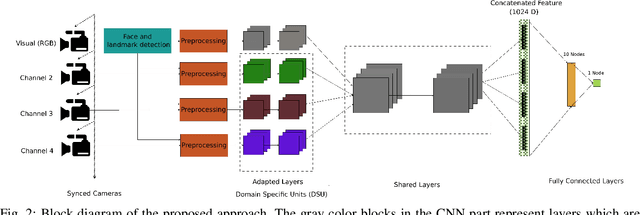

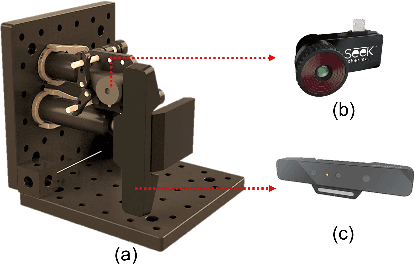

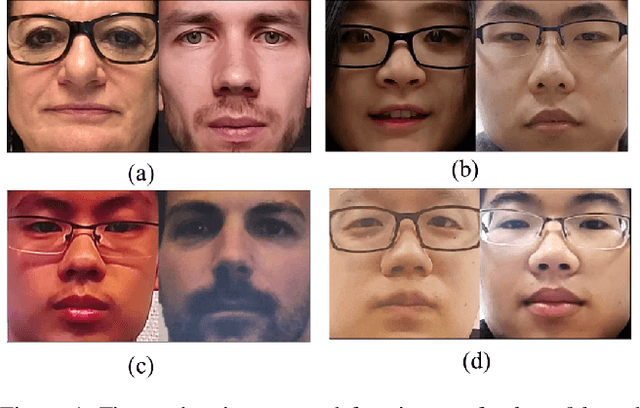

Abstract: Face recognition is a mainstream biometric authentication method. However, vulnerability to presentation attacks (a.k.a. spoofing) limits its usability in unsupervised applications. Even though there are many methods available for tackling presentation attacks (PA), most of them fail to detect sophisticated attacks such as silicone masks. As the quality of presentation attack instruments improves over time, achieving reliable PA detection with visual spectra alone remains very challenging. We argue that analysis in multiple channels might help to address this issue. In this context, we propose a multi-channel Convolutional Neural Network based approach for presentation attack detection (PAD). We also introduce the new Wide Multi-Channel presentation Attack (WMCA) database for face PAD, which contains a wide variety of 2D and 3D presentation attacks for both impersonation and obfuscation. Data from different channels such as color, depth, near-infrared and thermal are available to advance the research in face PAD. The proposed method was compared with feature-based approaches and found to outperform the baselines, achieving an ACER of 0.3% on the introduced dataset. The database and the software to reproduce the results are made publicly available.

* 16 pages

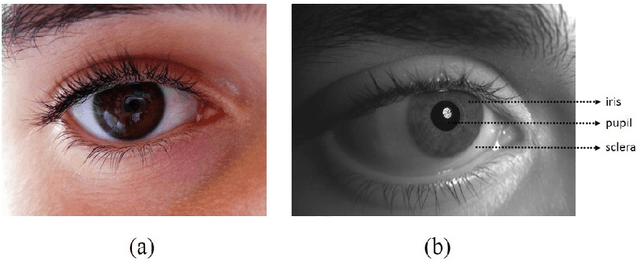

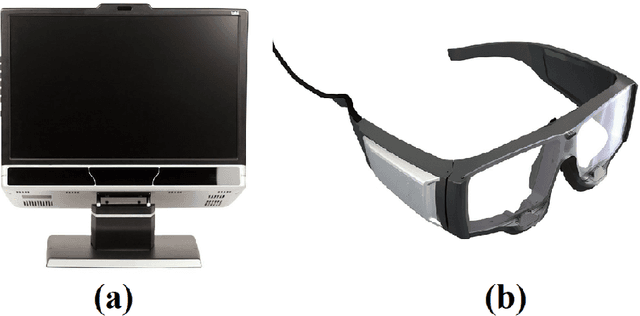

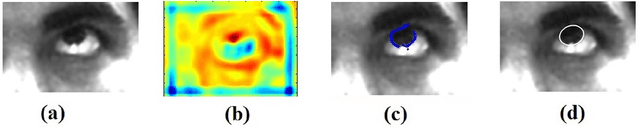

Image based Eye Gaze Tracking and its Applications

Jul 09, 2019

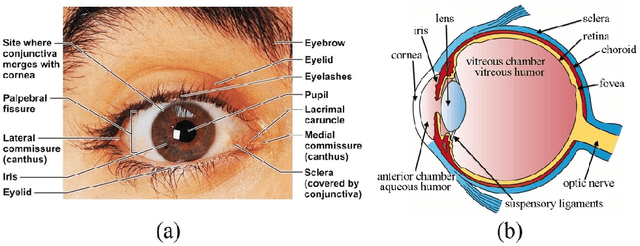

Abstract: Eye movements play a vital role in perceiving the world. Eye gaze can give a direct indication of the user's point of attention, which can be useful in improving human-computer interaction. Gaze estimation in a non-intrusive manner can make human-computer interaction more natural. Eye tracking can be used for several applications such as fatigue detection, biometric authentication, disease diagnosis, activity recognition, alertness level estimation, gaze-contingent display, human-computer interaction, etc. Even though eye-tracking technology has been around for many decades, it has not found much use in consumer applications. The main reasons are the high cost of eye-tracking hardware and the lack of consumer-level applications. In this work, we attempt to address these two issues. In the first part of this work, image-based algorithms are developed for gaze tracking, including a new two-stage iris center localization algorithm. We have developed a new algorithm which works in challenging conditions such as motion blur, glint, and varying illumination levels. A person-independent gaze direction classification framework using a convolutional neural network is also developed, which eliminates the requirement of user-specific calibration. In the second part of this work, we have developed two applications which can benefit from eye-tracking data. A new framework for biometric identification based on eye movement parameters is developed. A framework for activity recognition using gaze data from a head-mounted eye tracker is also developed. The information from gaze data, ego-motion, and visual features is integrated to classify the activities.

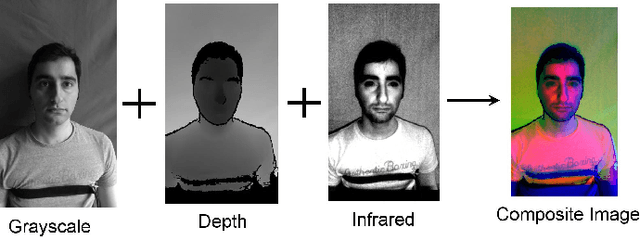

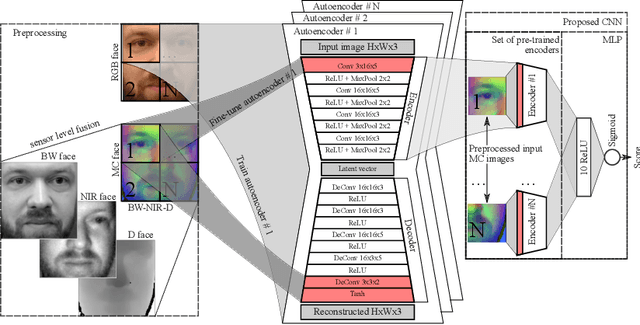

Domain Adaptation in Multi-Channel Autoencoder based Features for Robust Face Anti-Spoofing

Jul 09, 2019

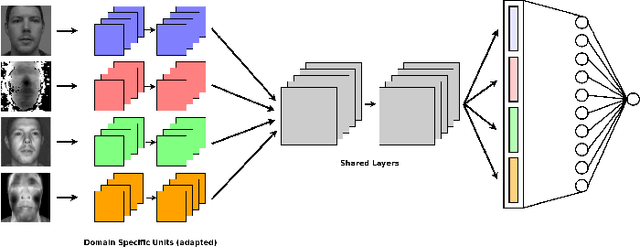

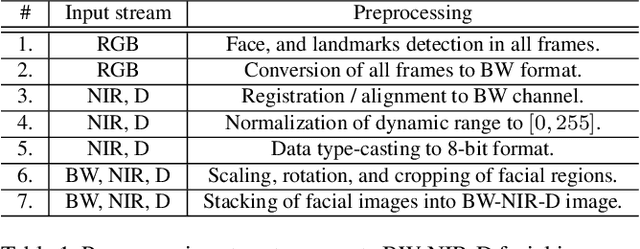

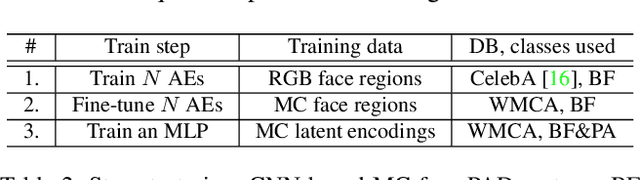

Abstract: While the performance of face recognition systems has improved significantly in the last decade, they have proved to be highly vulnerable to presentation attacks (spoofing). Most of the research in the field of face presentation attack detection (PAD) has focused on boosting the performance of the systems within a single database. Face PAD datasets are usually captured with RGB cameras and have a very limited number of both bona-fide samples and presentation attack instruments. Training face PAD systems on such data leads to poor performance, even in the closed-set scenario, especially when sophisticated attacks are involved. We explore two paths to boost the performance of the face PAD system against challenging attacks. First, we use multi-channel (RGB, Depth and NIR) data, which is still easily accessible in a number of mass production devices. Second, we develop a novel Autoencoders + MLP based face PAD algorithm. Moreover, instead of collecting more data for training the proposed deep architecture, a domain adaptation technique is proposed, transferring the knowledge of facial appearance from the RGB to the multi-channel domain. We also demonstrate that features learned from individual facial regions are more discriminative than features learned from an entire face. The proposed system is tested on a very recent publicly available multi-channel PAD database with a wide variety of presentation attacks.

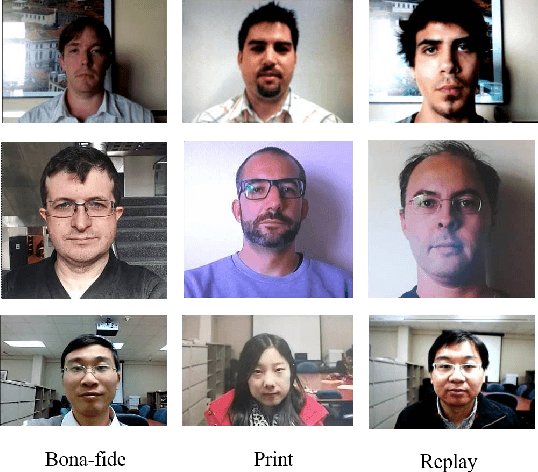

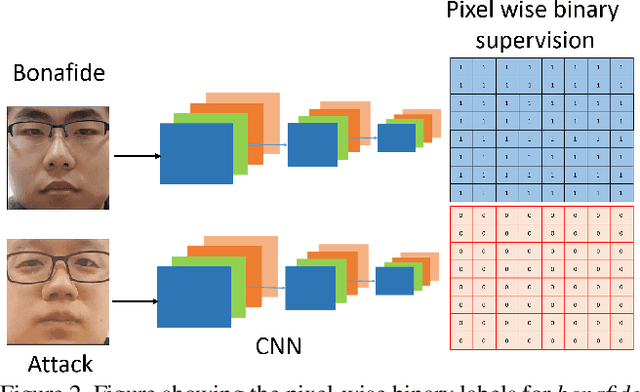

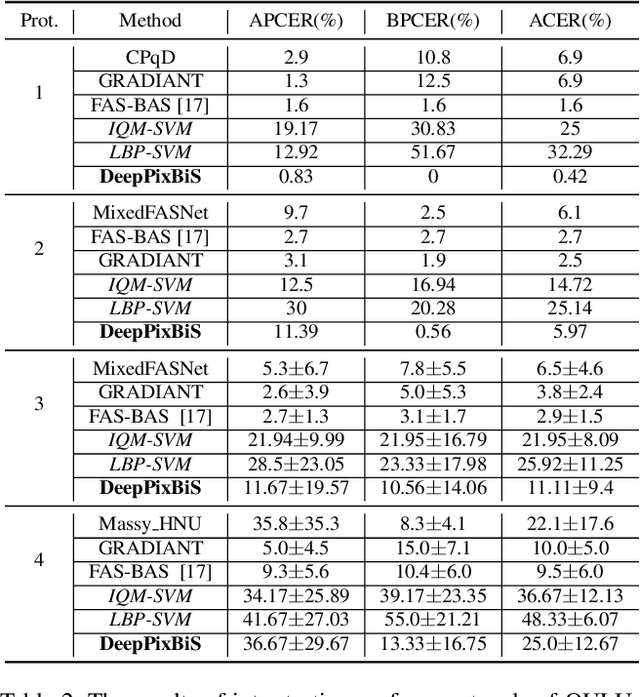

Deep Pixel-wise Binary Supervision for Face Presentation Attack Detection

Jul 09, 2019

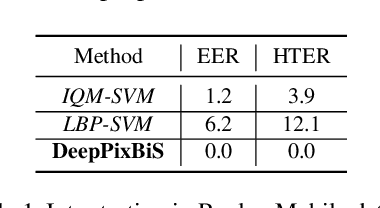

Abstract: Face recognition has evolved as a prominent biometric authentication modality. However, vulnerability to presentation attacks curtails its reliable deployment. Automatic detection of presentation attacks is essential for secure use of face recognition technology in unattended scenarios. In this work, we introduce a Convolutional Neural Network (CNN) based framework for presentation attack detection, with deep pixel-wise supervision. The framework uses only frame-level information, making it suitable for deployment in smart devices with minimal computational and time overhead. We demonstrate the effectiveness of the proposed approach on public datasets for both intra-dataset and cross-dataset experiments. The proposed approach achieves an HTER of 0% on the Replay Mobile dataset and an ACER of 0.42% in Protocol-1 of the OULU dataset, outperforming state-of-the-art methods.
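For readers unfamiliar with the metrics quoted above, the following sketch shows how ACER is typically computed: it is the average of APCER (the fraction of attacks accepted as bonafide) and BPCER (the fraction of bonafide samples rejected). HTER has the same averaging form, applied to the false acceptance and false rejection rates. The function names, the score convention (higher means more likely bonafide), and the sample data are assumptions made for illustration. The sketch also pools all attack types together, whereas the standard definition of APCER takes the worst case over attack types.

```python
def error_rates(scores, labels, threshold):
    """Return (APCER, BPCER) at a given decision threshold.

    labels: 1 for bonafide, 0 for attack.
    A sample is classified as bonafide when score >= threshold.
    Simplification: all attack types are pooled into one group.
    """
    attacks = [s for s, l in zip(scores, labels) if l == 0]
    bonafide = [s for s, l in zip(scores, labels) if l == 1]
    if not attacks or not bonafide:
        raise ValueError("need at least one attack and one bonafide sample")
    apcer = sum(s >= threshold for s in attacks) / len(attacks)
    bpcer = sum(s < threshold for s in bonafide) / len(bonafide)
    return apcer, bpcer


def acer(apcer, bpcer):
    """Average classification error rate: mean of APCER and BPCER."""
    return (apcer + bpcer) / 2.0


if __name__ == "__main__":
    # Made-up scores for illustration only.
    scores = [0.9, 0.8, 0.4, 0.6, 0.2, 0.1]
    labels = [1, 1, 1, 0, 0, 0]
    apcer, bpcer = error_rates(scores, labels, threshold=0.5)
    print(apcer, bpcer, acer(apcer, bpcer))
```

In practice the threshold is usually fixed on a development set and then applied unchanged to the test set, so the reported error reflects a decision rule chosen without access to test data.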
