Andru P. Twinanda

Single- and Multi-Task Architectures for Surgical Workflow Challenge at M2CAI 2016

Oct 28, 2016

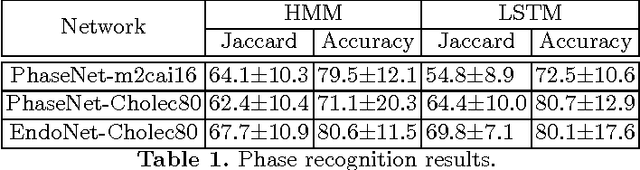

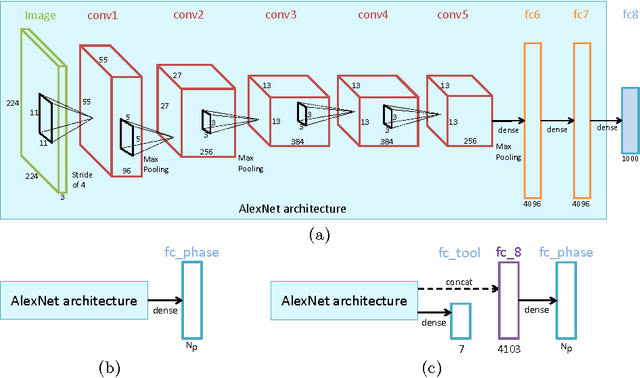

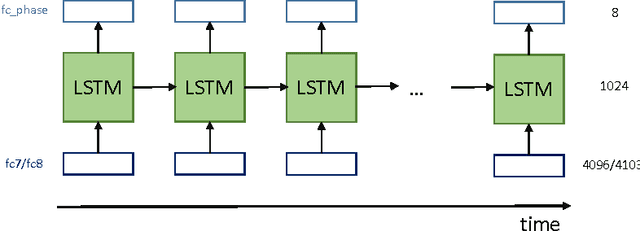

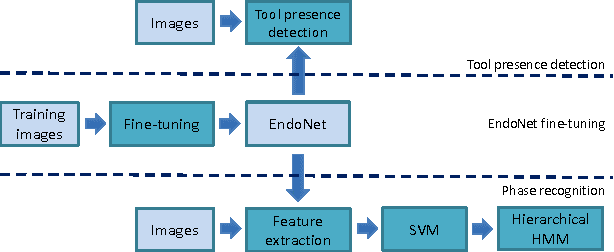

Abstract:The surgical workflow challenge at M2CAI 2016 consists of identifying 8 surgical phases in cholecystectomy procedures. Here, we propose to use deep architectures that are based on our previous work where we presented several architectures to perform multiple recognition tasks on laparoscopic videos. In this technical report, we present the phase recognition results using two architectures: (1) a single-task architecture designed to perform solely the surgical phase recognition task and (2) a multi-task architecture designed to perform jointly phase recognition and tool presence detection. On top of these architectures we propose to use two different approaches to enforce the temporal constraints of the surgical workflow: (1) HMM-based and (2) LSTM-based pipelines. The results show that the LSTM-based approach is able to outperform the HMM-based approach and also to properly enforce the temporal constraints into the recognition process.

Single- and Multi-Task Architectures for Tool Presence Detection Challenge at M2CAI 2016

Oct 27, 2016

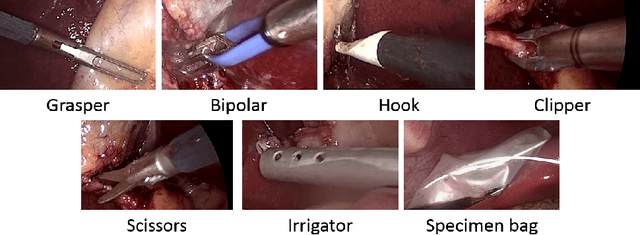

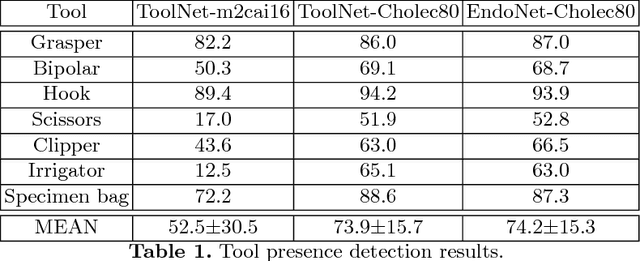

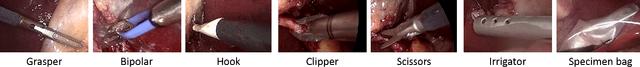

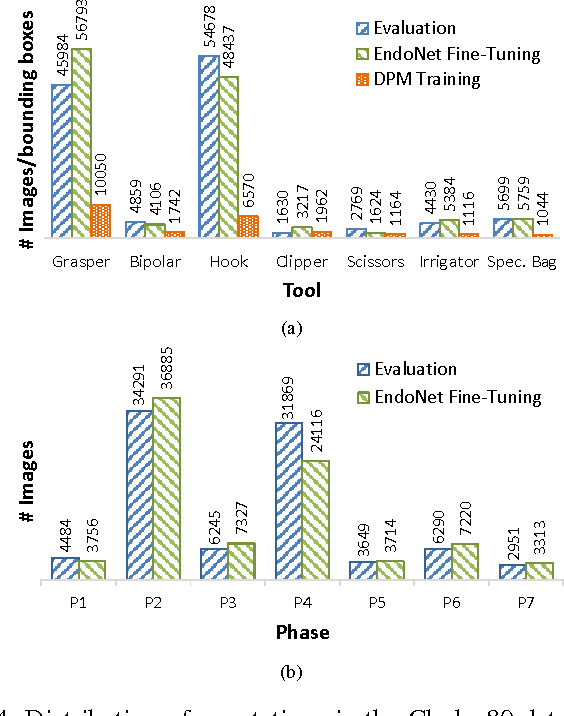

Abstract:The tool presence detection challenge at M2CAI 2016 consists of identifying the presence/absence of seven surgical tools in the images of cholecystectomy videos. Here, we propose to use deep architectures that are based on our previous work where we presented several architectures to perform multiple recognition tasks on laparoscopic videos. In this technical report, we present the tool presence detection results using two architectures: (1) a single-task architecture designed to perform solely the tool presence detection task and (2) a multi-task architecture designed to perform jointly phase recognition and tool presence detection. The results show that the multi-task network only slightly improves the tool presence detection results. In constrast, a significant improvement is obtained when there are more data available to train the networks. This significant improvement can be regarded as a call for action for other institutions to start working toward publishing more datasets into the community, so that better models could be generated to perform the task.

EndoNet: A Deep Architecture for Recognition Tasks on Laparoscopic Videos

May 23, 2016

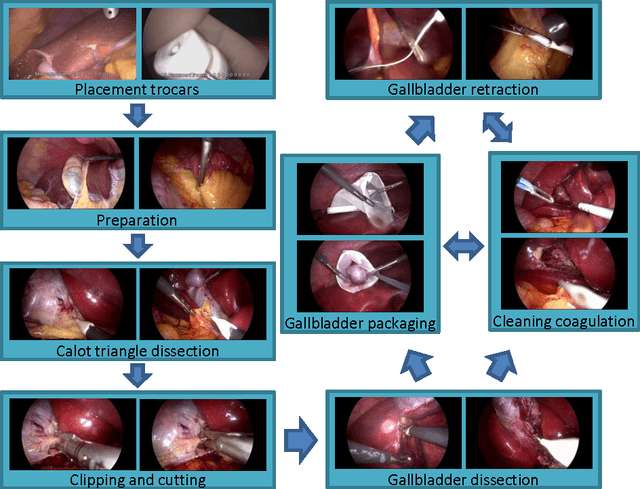

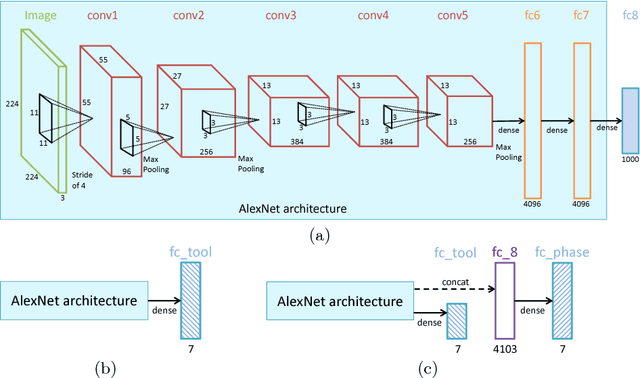

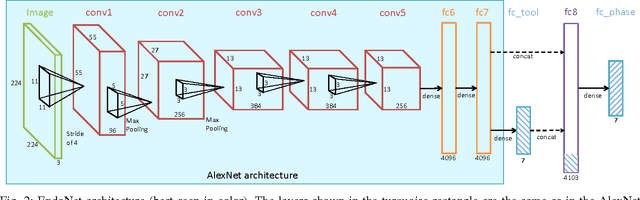

Abstract:Surgical workflow recognition has numerous potential medical applications, such as the automatic indexing of surgical video databases and the optimization of real-time operating room scheduling, among others. As a result, phase recognition has been studied in the context of several kinds of surgeries, such as cataract, neurological, and laparoscopic surgeries. In the literature, two types of features are typically used to perform this task: visual features and tool usage signals. However, the visual features used are mostly handcrafted. Furthermore, the tool usage signals are usually collected via a manual annotation process or by using additional equipment. In this paper, we propose a novel method for phase recognition that uses a convolutional neural network (CNN) to automatically learn features from cholecystectomy videos and that relies uniquely on visual information. In previous studies, it has been shown that the tool signals can provide valuable information in performing the phase recognition task. Thus, we present a novel CNN architecture, called EndoNet, that is designed to carry out the phase recognition and tool presence detection tasks in a multi-task manner. To the best of our knowledge, this is the first work proposing to use a CNN for multiple recognition tasks on laparoscopic videos. Extensive experimental comparisons to other methods show that EndoNet yields state-of-the-art results for both tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge