Andrei Aksjonov

A Safety-Critical Decision Making and Control Framework Combining Machine Learning and Rule-based Algorithms

Jan 30, 2022Abstract:While artificial-intelligence-based methods suffer from lack of transparency, rule-based methods dominate in safety-critical systems. Yet, the latter cannot compete with the first ones in robustness to multiple requirements, for instance, simultaneously addressing safety, comfort, and efficiency. Hence, to benefit from both methods they must be joined in a single system. This paper proposes a decision making and control framework, which profits from advantages of both the rule- and machine-learning-based techniques while compensating for their disadvantages. The proposed method embodies two controllers operating in parallel, called Safety and Learned. A rule-based switching logic selects one of the actions transmitted from both controllers. The Safety controller is prioritized every time, when the Learned one does not meet the safety constraint, and also directly participates in the safe Learned controller training. Decision making and control in autonomous driving is chosen as the system case study, where an autonomous vehicle learns a multi-task policy to safely cross an unprotected intersection. Multiple requirements (i.e., safety, efficiency, and comfort) are set for vehicle operation. A numerical simulation is performed for the proposed framework validation, where its ability to satisfy the requirements and robustness to changing environment is successfully demonstrated.

Planning for Safe Abortable Overtaking Maneuvers in Autonomous Driving

Apr 12, 2021

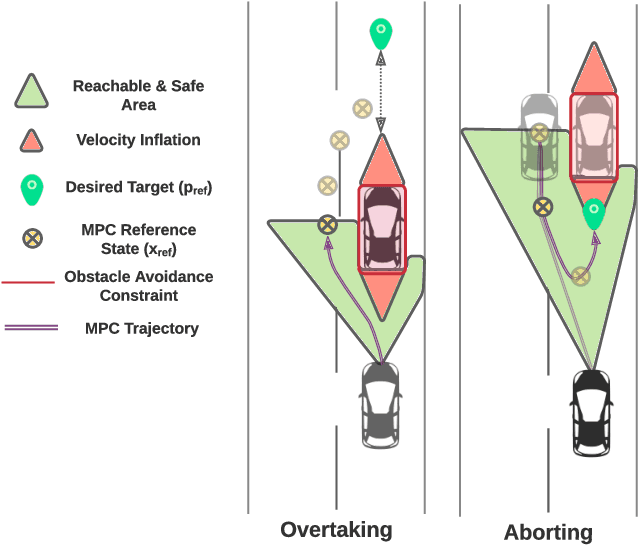

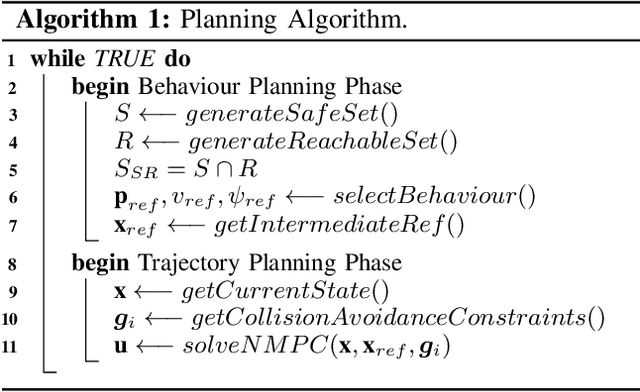

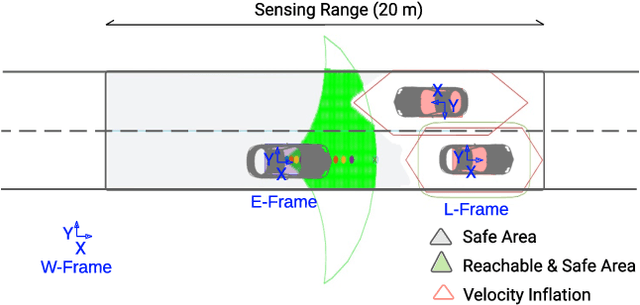

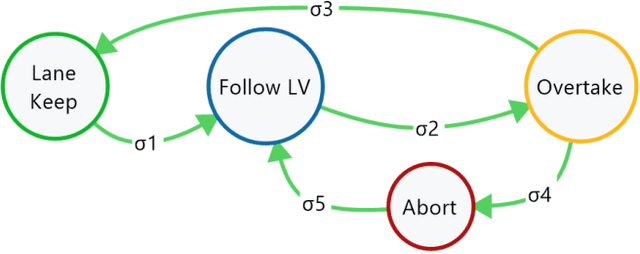

Abstract:Overtaking is one of the most challenging tasks in driving, and the current solutions to autonomous overtaking are limited to simple and static scenarios. In this paper, we present a method for behaviour and trajectory planning for safe autonomous overtaking. The proposed method optimizes the trajectory by simultaneously enforcing safety and minimizing intrusion onto the adjacent lane. Furthermore, the method allows the overtaking to be aborted, enabling the autonomous vehicle to merge back in the lane, if safety is compromised, because of e.g. traffic in opposing direction appearing during the maneuver execution. A finite state machine is used to select an appropriate maneuver at each time, and a combination of safe and reachable sets is used to iteratively generate intermediate reference targets based on the current maneuver. A nonlinear model predictive controller then plans dynamically feasible and collision-free trajectories to these intermediate reference targets. Simulation experiments demonstrate that the combination of intermediate reference generation and model predictive control is able to handle multiple behaviors, including following a lead vehicle, overtaking and aborting the overtake, within a single framework.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge