Andreas Toftegaard Kristensen

ComplexBeat: Breathing Rate Estimation from Complex CSI

Feb 18, 2025

Abstract: In this paper, we explore the use of channel state information (CSI) from a WiFi system to estimate the breathing rate of a person in a room. To extract WiFi CSI components that are sensitive to breathing, we propose to consider the delay-domain channel impulse response (CIR), whereas most state-of-the-art methods consider its frequency-domain representation. One obstacle in processing CSI data is that its amplitude and phase are highly distorted by measurement uncertainties. We thus also propose an amplitude calibration method and a phase offset calibration method for CSI measured in orthogonal frequency-division multiplexing (OFDM) multiple-input multiple-output (MIMO) systems. Finally, we implement a complete breathing rate estimation system to showcase the effectiveness of our proposed calibration and CSI extraction methods.

* This work has been accepted for publication in the 2021 IEEE Workshop on Signal Processing Systems (SiPS)
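The delay-domain idea in this abstract can be illustrated with a minimal sketch. It is not the authors' pipeline: the amplitude and phase calibration steps are omitted, and the synthetic channel, the tap index, the packet rate `fs`, and the 0.1 to 0.5 Hz breathing band are all assumptions chosen for illustration. The sketch converts per-subcarrier CSI to CIR taps with an inverse DFT, tracks one complex tap over time, and picks the strongest spectral line in the assumed band.

```python
import cmath
import math


def idft(freq_samples):
    """Inverse DFT: frequency-domain CSI (one value per subcarrier) -> CIR taps."""
    n = len(freq_samples)
    return [
        sum(freq_samples[k] * cmath.exp(2j * math.pi * k * tap / n) for k in range(n)) / n
        for tap in range(n)
    ]


def dominant_rate_bpm(series, fs, f_lo=0.1, f_hi=0.5, step=0.005):
    """Scan an assumed breathing band for the strongest spectral line (breaths/min)."""
    mean = sum(series) / len(series)
    centered = [s - mean for s in series]  # remove the static component
    best_f, best_mag = f_lo, -1.0
    for i in range(int(round((f_hi - f_lo) / step)) + 1):
        f = f_lo + i * step
        mag = abs(sum(x * cmath.exp(-2j * math.pi * f * n / fs)
                      for n, x in enumerate(centered)))
        if mag > best_mag:
            best_f, best_mag = f, mag
    return 60.0 * best_f


def synthetic_csi(t, n_sub=16, breath_hz=0.25):
    """Toy channel (all values assumed): a static path at delay 0 plus a weaker
    path at delay 2 whose phase follows a sinusoidal chest displacement."""
    phase = 0.5 * math.sin(2 * math.pi * breath_hz * t)
    return [
        1.0 + 0.3 * cmath.exp(-2j * math.pi * k * 2 / n_sub) * cmath.exp(1j * phase)
        for k in range(n_sub)
    ]


fs = 10.0  # CSI packets per second (assumed)
# Track the complex CIR tap at delay 2 over 60 seconds of packets.
tap_series = [idft(synthetic_csi(n / fs))[2] for n in range(600)]
rate_bpm = dominant_rate_bpm(tap_series, fs)
```

In this toy setup the static path lands entirely in tap 0, so tap 2 isolates the breathing-modulated path and `rate_bpm` comes out close to the simulated 15 breaths per minute. Real CSI would first need the calibration the abstract describes.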

An SDR-Based Monostatic Wi-Fi System with Analog Self-Interference Cancellation for Sensing

Dec 11, 2024

Abstract: Wireless sensing offers an alternative to wearables for contactless monitoring of human activity and vital signs. However, most existing systems use bistatic setups, which suffer from phase imperfections due to unsynchronized clocks. Monostatic systems overcome this issue, but are hindered by strong self-interference (SI) that requires effective cancellation. We present a monostatic Wi-Fi sensing system that uses an auxiliary transmit RF chain to achieve SI cancellation levels of 40 dB, comparable to existing solutions with custom cancellation hardware. We demonstrate that the cancellation filter weights, fine-tuned using least mean squares, can be directly repurposed for target sensing. Moreover, we achieve stable SI cancellation over 30 minutes in an office environment without fine-tuning, enabling traditional vital sign monitoring using channel estimates derived from baseband samples without the adaptation of the cancellation affecting the sensing channel, a significant limitation in prior work. Experimental results confirm the detection of small, slow-moving targets, representative of breathing chest movements, at distances up to 10 meters in non-line-of-sight conditions.
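As a rough illustration of least-mean-squares weight adaptation for SI cancellation, the following baseband simulation adapts a short complex FIR filter so that the filtered transmit signal matches the self-interference. It is a sketch under assumptions, not the system described above: the SI channel `h_si`, the QPSK transmit signal, the noise level, the tap count, and the step size `mu` are all hypothetical, and the real system cancels in the analog domain through an auxiliary transmit chain.

```python
import math
import random

random.seed(0)


def lms_cancel(tx, rx, n_taps=3, mu=0.05):
    """Complex LMS: adapt weights so the filtered transmit signal tracks the
    self-interference in rx. Returns (weights, residual after cancellation)."""
    w = [0j] * n_taps
    residual = []
    for n in range(len(rx)):
        # Most recent transmit samples, zero-padded before the first sample.
        x = [tx[n - k] if n - k >= 0 else 0j for k in range(n_taps)]
        y_hat = sum(wk * xk for wk, xk in zip(w, x))
        e = rx[n] - y_hat  # residual after subtracting the SI estimate
        w = [wk + mu * e * xk.conjugate() for wk, xk in zip(w, x)]
        residual.append(e)
    return w, residual


def power(sig):
    return sum(abs(s) ** 2 for s in sig) / len(sig)


# Hypothetical SI channel and unit-power QPSK transmit signal.
h_si = [1.0 + 0j, 0.5j, -0.2 + 0j]
qpsk = [(1 + 1j), (1 - 1j), (-1 + 1j), (-1 - 1j)]
tx = [random.choice(qpsk) / math.sqrt(2) for _ in range(2000)]
rx = [
    sum(h_si[k] * tx[n - k] for k in range(len(h_si)) if n - k >= 0)
    + complex(random.gauss(0, 1e-3), random.gauss(0, 1e-3))  # small receiver noise
    for n in range(len(tx))
]

weights, residual = lms_cancel(tx, rx)
# Cancellation measured over the last 500 samples, after convergence.
cancellation_db = 10 * math.log10(power(rx[-500:]) / power(residual[-500:]))
```

After convergence the weights approximate the simulated SI channel, which hints at why adapted cancellation weights can double as a channel estimate for sensing.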

On the Implementation Complexity of Digital Full-Duplex Self-Interference Cancellation

Jan 09, 2021

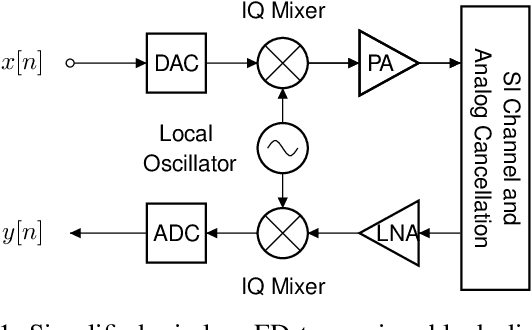

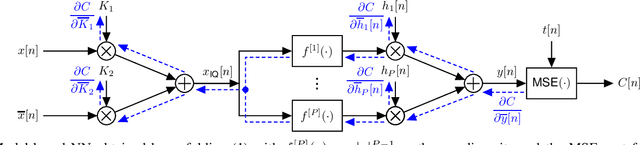

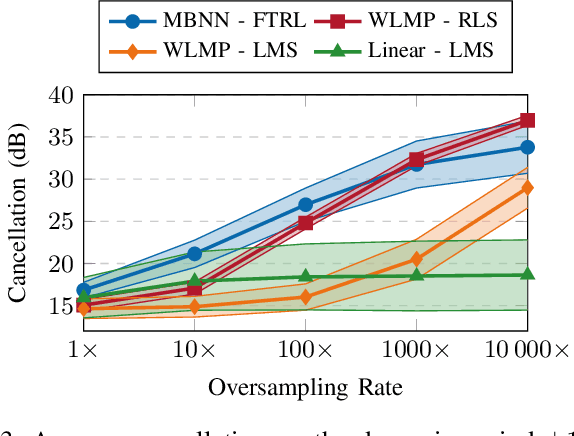

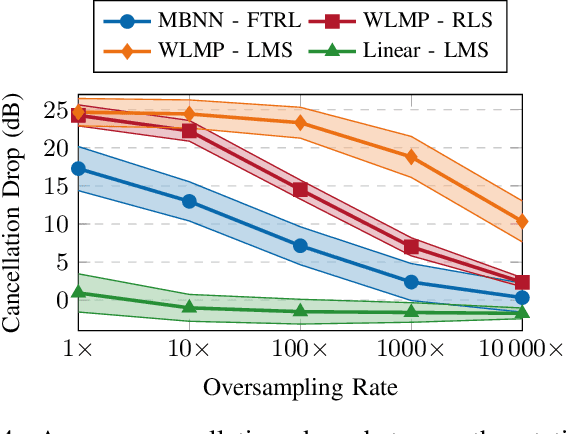

Abstract: In-band full-duplex systems promise to further increase the throughput of wireless systems by simultaneously transmitting and receiving on the same frequency band. However, concurrent transmission generates a strong self-interference signal at the receiver, which requires the use of cancellation techniques. A wide range of techniques for analog and digital self-interference cancellation have already been presented in the literature. However, their evaluation focuses on cases where the underlying physical parameters of the full-duplex system do not vary significantly. In this paper, we focus on adaptive digital cancellation, motivated by the fact that physical systems change over time. We examine some of the different cancellation methods in terms of their performance and implementation complexity, considering the cost of both cancellation and training. We then present a comparative analysis of all these methods to determine which perform better under different system performance requirements. We demonstrate that with a neural network approach, the reduction in arithmetic complexity for the same cancellation performance relative to a state-of-the-art polynomial model is several orders of magnitude.

Lupulus: A Flexible Hardware Accelerator for Neural Networks

May 03, 2020

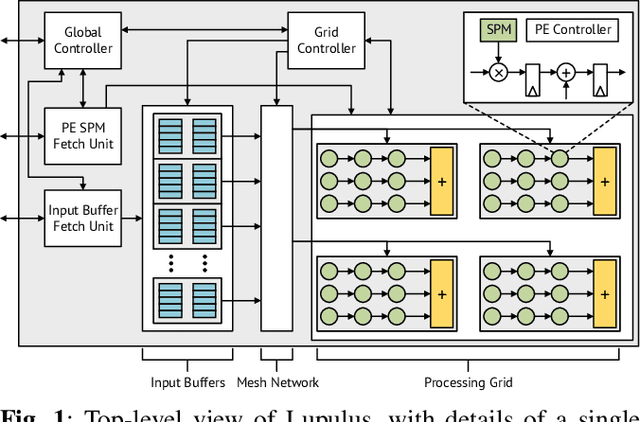

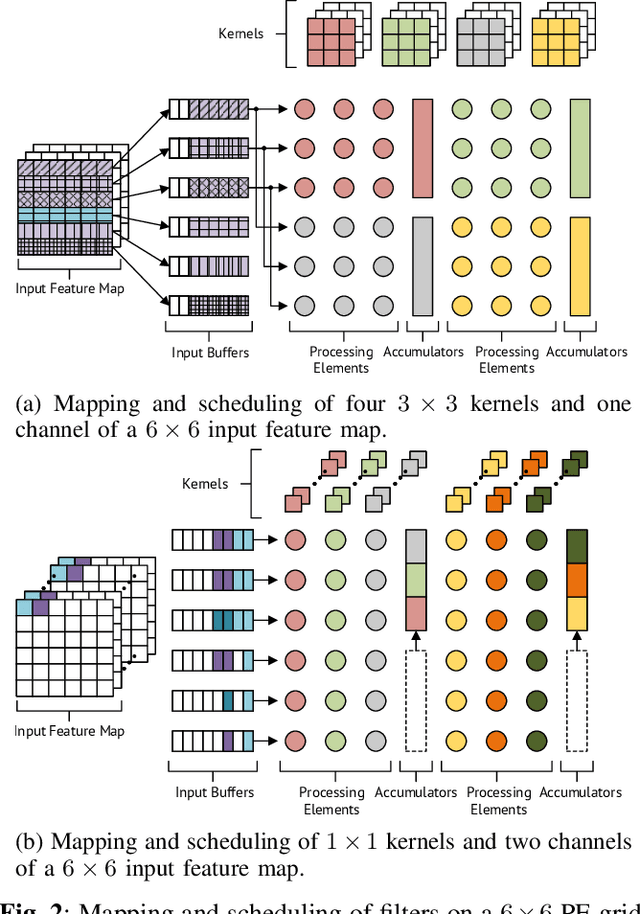

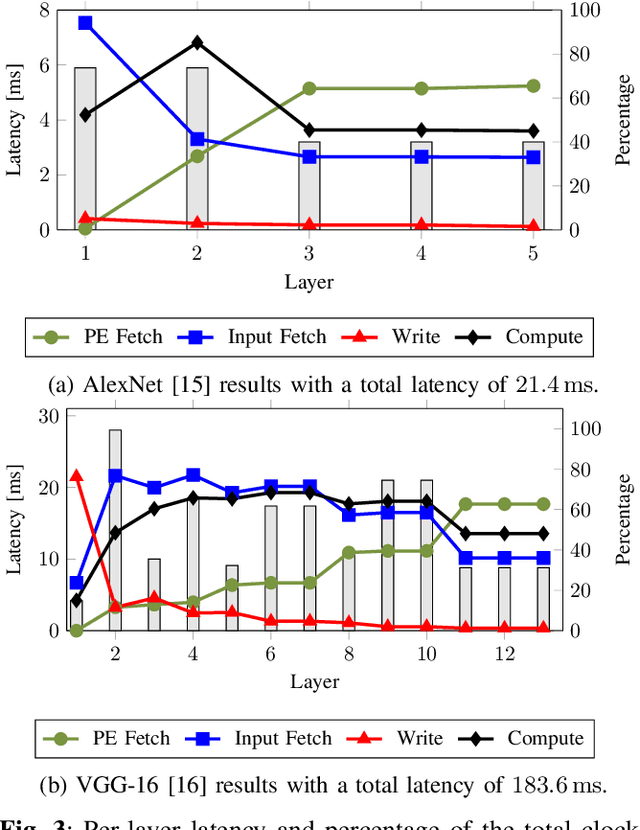

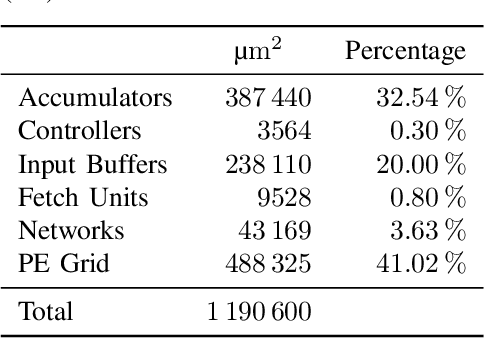

Abstract: Neural networks have become indispensable for a wide range of applications, but they suffer from high computational and memory requirements, requiring optimizations from the algorithmic description of the network to the hardware implementation. Moreover, the high rate of innovation in machine learning makes it important that hardware implementations provide a high level of programmability to support current and future requirements of neural networks. In this work, we present a flexible hardware accelerator for neural networks, called Lupulus, supporting various methods for scheduling and mapping of operations onto the accelerator. Lupulus was implemented in a 28 nm FD-SOI technology and demonstrates a peak performance of 380 GOPS/GHz with latencies of 21.4 ms and 183.6 ms for the convolutional layers of AlexNet and VGG-16, respectively.

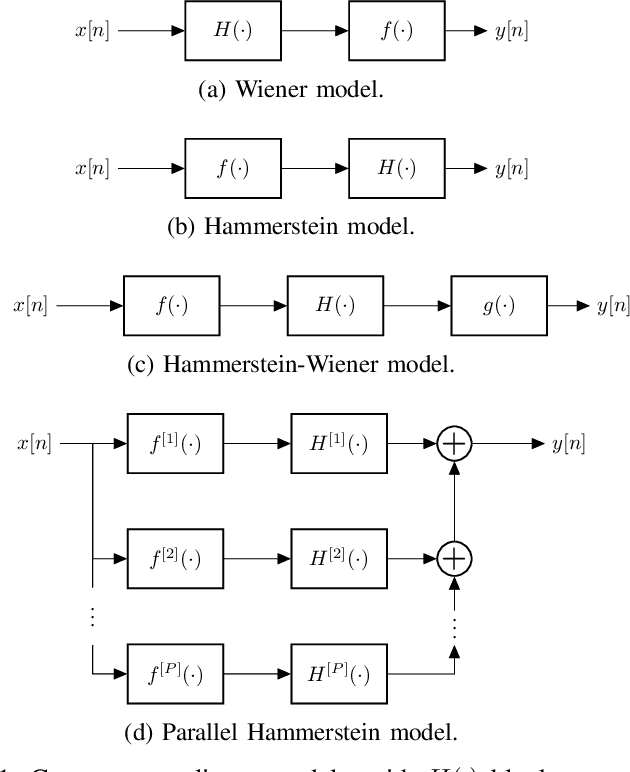

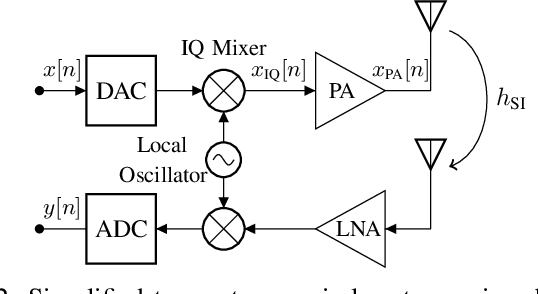

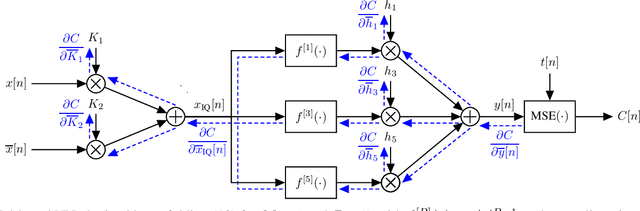

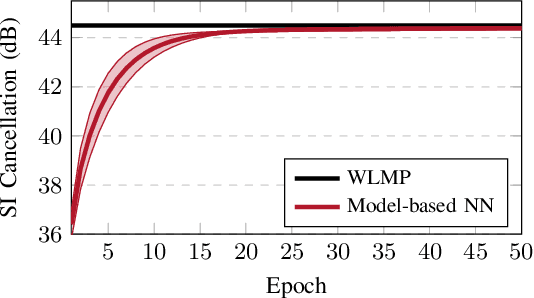

Identification of Non-Linear RF Systems Using Backpropagation

Jan 27, 2020

Abstract: In this work, we use deep unfolding to view cascaded non-linear RF systems as model-based neural networks. This view enables the direct use of a wide range of neural network tools and optimizers to efficiently identify such cascaded models. We demonstrate the effectiveness of this approach through the example of digital self-interference cancellation in full-duplex communications, where an IQ imbalance model and a non-linear PA model are cascaded. For a self-interference cancellation performance of approximately 44.5 dB, the number of model parameters can be reduced by 74% and the number of operations per sample can be reduced by 79% compared to an expanded linear-in-parameters polynomial model.
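To see why a cascade can need fewer parameters than its expanded linear-in-parameters form, consider a toy memoryless case. This is a sketch under assumptions and not the paper's model: it uses a widely linear IQ imbalance stage `z = k1*x + k2*conj(x)` followed by a third-order PA stage `a1*z + a3*z*|z|^2`, with hypothetical coefficient values and no memory taps. Expanding the cascade algebraically gives six basis terms in `x` and `conj(x)`, against four cascade parameters, and the code checks that both forms agree numerically. The 74% and 79% figures above come from the paper and are not reproduced here.

```python
def cascade(x, k1, k2, a1, a3):
    """IQ imbalance stage followed by a memoryless third-order PA stage."""
    z = k1 * x + k2 * x.conjugate()
    return a1 * z + a3 * z * abs(z) ** 2


def expanded_coefficients(k1, k2, a1, a3):
    """Coefficients of x**p * conj(x)**q after multiplying out the cascade."""
    p1, p2 = abs(k1) ** 2, abs(k2) ** 2
    return {
        (1, 0): a1 * k1,
        (0, 1): a1 * k2,
        (3, 0): a3 * k1 ** 2 * k2.conjugate(),
        (2, 1): a3 * k1 * (p1 + 2 * p2),
        (1, 2): a3 * k2 * (2 * p1 + p2),
        (0, 3): a3 * k2 ** 2 * k1.conjugate(),
    }


def expanded(x, coeffs):
    """Linear-in-parameters form: a weighted sum of fixed basis functions."""
    xc = x.conjugate()
    return sum(c * x ** p * xc ** q for (p, q), c in coeffs.items())


# Hypothetical parameter values, chosen only for illustration.
k1, k2 = 0.98 + 0.02j, 0.05 - 0.03j
a1, a3 = 1.0 + 0j, -0.1 + 0.05j
coeffs = expanded_coefficients(k1, k2, a1, a3)

samples = [0.3 + 0.4j, -0.7 + 0.1j, 0.2 - 0.9j, 1.0 + 0j]
max_diff = max(abs(cascade(x, k1, k2, a1, a3) - expanded(x, coeffs)) for x in samples)
n_cascade_params, n_expanded_params = 4, len(coeffs)
```

Here `max_diff` is at floating-point rounding level, confirming the two forms describe the same input-output map. The gap between the parameter counts widens with higher polynomial orders and memory, which is the regime the abstract targets.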
