Aluizio F. R. Araujo

A Neural Network Architecture for Learning Word-Referent Associations in Multiple Contexts

May 20, 2019

Abstract: This article proposes a biologically inspired neurocomputational architecture that learns associations between words and referents in different contexts, drawing on evidence from the psycholinguistics and neurolinguistics literature. The multi-layered architecture takes as input raw images of objects (referents) and streams of word phonemes (labels), builds an adequate representation, recognizes the current context, and incrementally associates labels with referents. It does so by employing a Self-Organizing Map that creates new association nodes (prototypes) as required, adjusts existing prototypes to better represent the input stimuli, and removes prototypes that become obsolete or unused. The model takes the current context into account to retrieve the correct meaning of words with multiple meanings. Simulations show that the model can reach up to 78% word-referent association accuracy in ambiguous situations and closely approximates the human learning rates reported by three different authors in five Cross-Situational Word Learning experiments, while also displaying similar learning patterns across the different learning conditions.
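The create/adjust/remove cycle described in the abstract can be illustrated with a minimal sketch. This is not the authors' architecture: the class name `GrowingPrototypes`, the distance threshold, the learning rate, and the age-based removal rule are all assumptions chosen only to show the general idea of an incrementally growing prototype set.

```python
import math


class GrowingPrototypes:
    """Toy prototype set (hypothetical, not the paper's model)."""

    def __init__(self, threshold=0.5, lr=0.1, max_age=50):
        self.threshold = threshold  # assumed: max distance to reuse a node
        self.lr = lr                # assumed: learning rate for adjustment
        self.max_age = max_age      # assumed: steps a node may stay unused
        self.nodes = []             # each node: {"w": weights, "age": int}

    def update(self, x):
        # Every existing node gets one step older.
        for node in self.nodes:
            node["age"] += 1

        # Find the prototype closest to the input, if any exists.
        best = min(self.nodes, key=lambda n: math.dist(n["w"], x), default=None)

        if best is None or math.dist(best["w"], x) > self.threshold:
            # No prototype is close enough: create a new one.
            self.nodes.append({"w": list(x), "age": 0})
        else:
            # Move the winner toward the input and mark it as recently used.
            best["w"] = [w + self.lr * (xi - w) for w, xi in zip(best["w"], x)]
            best["age"] = 0

        # Remove prototypes that have gone unused for too long.
        self.nodes = [n for n in self.nodes if n["age"] <= self.max_age]
```

Calling `update` repeatedly with input vectors grows the set when a novel input arrives, refines the nearest prototype otherwise, and eventually drops prototypes that never win.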

Improving NSGA-II with an Adaptive Mutation Operator

May 21, 2013

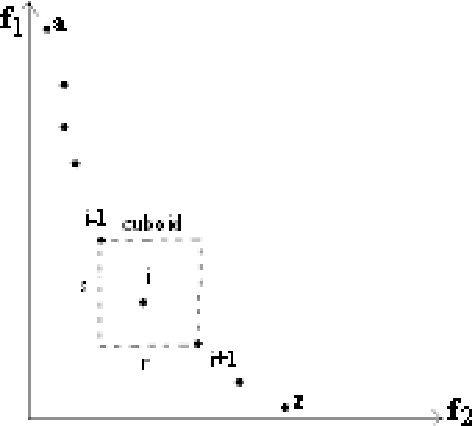

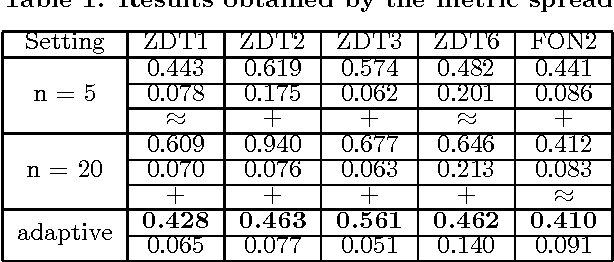

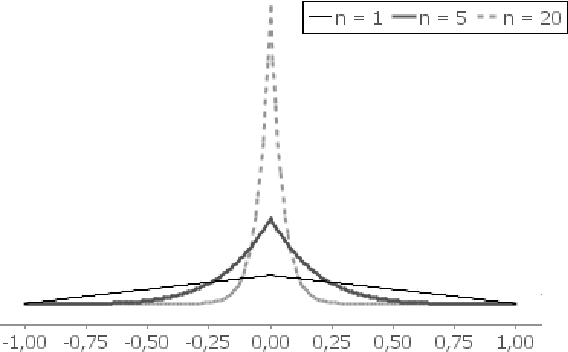

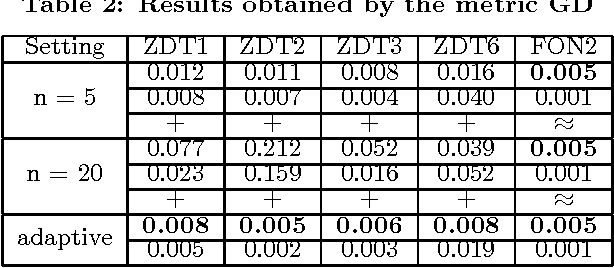

Abstract: The performance of a Multiobjective Evolutionary Algorithm (MOEA) depends crucially on the parameter settings of its operators. The most desirable form of parameter control is adaptive, i.e., it can change a parameter's value at distinct stages of the evolutionary process, using feedback from the search to determine the direction and/or magnitude of the change. Given the great popularity of NSGA-II, the objective of this research is to create adaptive controls for each parameter of this MOEA. With these controls, we expect to further improve the algorithm's performance. In this work, we propose a mutation operator whose adaptive control uses information about the diversity of candidate solutions to control the magnitude of the mutation. Experiments on a number of different problems suggest that this mutation operator improves the ability of NSGA-II to reach the Pareto-optimal front and to achieve better diversity among the final solutions.
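As a rough illustration of diversity-driven mutation control, the sketch below scales a Gaussian mutation step according to how spread out the population is. The abstract does not specify the diversity measure or the update rule, so everything here is an assumption: the function names, the mean per-gene standard deviation as the diversity measure, the inverse-proportional scaling, and the clamping bounds.

```python
import random


def population_diversity(population):
    """Mean per-gene standard deviation (an assumed diversity measure)."""
    n = len(population)
    dims = len(population[0])
    total = 0.0
    for d in range(dims):
        values = [ind[d] for ind in population]
        mean = sum(values) / n
        variance = sum((v - mean) ** 2 for v in values) / n
        total += variance ** 0.5
    return total / dims


def adaptive_sigma(diversity, target, base_sigma=0.1,
                   sigma_min=0.01, sigma_max=0.5):
    """Assumed rule: larger steps when diversity falls below the target."""
    if diversity <= 0.0:
        return sigma_max  # fully collapsed population: mutate strongly
    sigma = base_sigma * (target / diversity)
    return min(sigma_max, max(sigma_min, sigma))


def mutate(individual, sigma, lower=0.0, upper=1.0, rng=random):
    """Gaussian mutation of every gene, clipped to [lower, upper]."""
    return [min(upper, max(lower, x + rng.gauss(0.0, sigma)))
            for x in individual]
```

In a generational loop, one would recompute `population_diversity` each generation, derive `sigma` with `adaptive_sigma`, and apply `mutate` to the offspring before the usual NSGA-II non-dominated sorting and crowding-distance selection.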
