Alina Zare

Robust Semi-Supervised Classification using GANs with Self-Organizing Maps

Oct 19, 2021

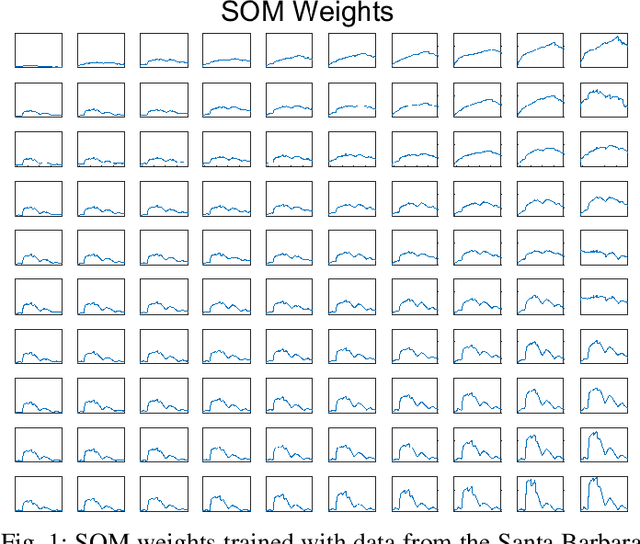

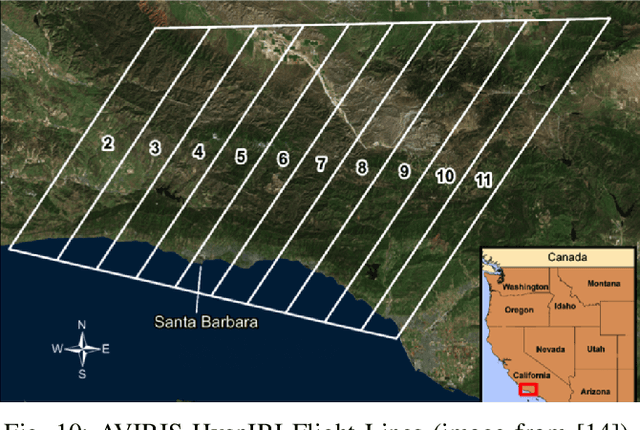

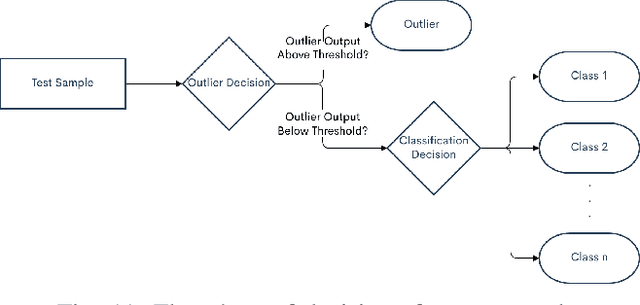

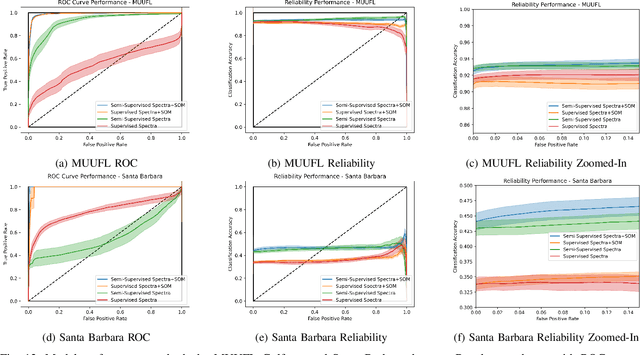

Abstract: Generative adversarial networks (GANs) have shown tremendous promise in learning to generate data and are effective at aiding semi-supervised classification. However, to this point, semi-supervised GAN (SS-GAN) methods assume that the unlabeled data set contains only samples of the joint distribution of the classes of interest, referred to as inliers. Consequently, when presented with a sample from other distributions, referred to as outliers, a GAN performs poorly at determining that it is not qualified to make a decision on the sample. The problem of discriminating outliers from inliers while maintaining classification accuracy is referred to here as the DOIC problem. In this work, we describe an architecture that combines self-organizing maps (SOMs) with SS-GANs with the goal of mitigating the DOIC problem, and we present experimental results indicating that the architecture achieves the goal. Multiple experiments were conducted on hyperspectral image data sets. The SS-GANs performed slightly better than supervised GANs on classification problems with and without the SOM. Incorporating the SOMs into the SS-GANs and the supervised GANs led to substantial mitigation of the DOIC problem when compared to SS-GANs and GANs without the SOMs. Furthermore, the SS-GANs performed much better than GANs on the DOIC problem, even without the SOMs.

Learnable Adaptive Cosine Estimator (LACE) for Image Classification

Oct 15, 2021

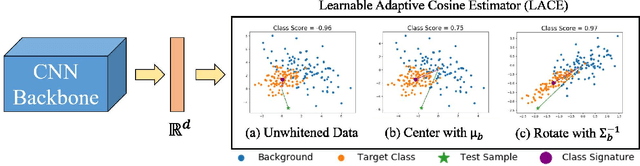

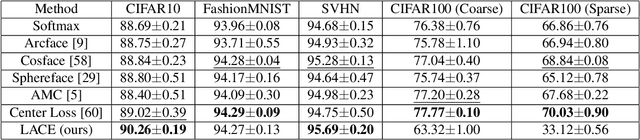

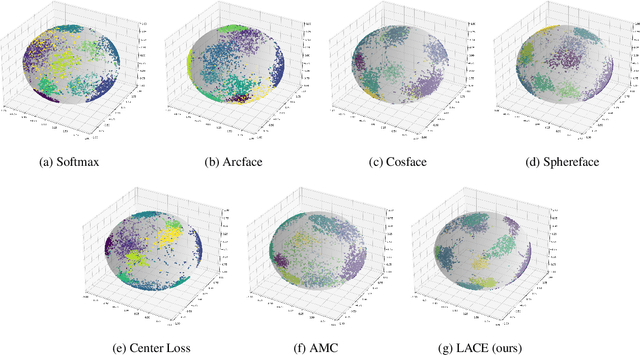

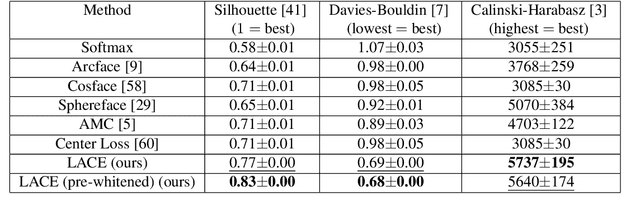

Abstract: In this work, we propose a new loss to improve feature discriminability and classification performance. Motivated by the adaptive cosine/coherence estimator (ACE), our proposed method incorporates angular information that is inherently learned by artificial neural networks. Our learnable ACE (LACE) transforms the data into a new "whitened" space that improves the inter-class separability and intra-class compactness. We compare our LACE to alternative state-of-the-art softmax-based and feature regularization approaches. Our results show that the proposed method can serve as a viable alternative to cross entropy and angular softmax approaches. Our code is publicly available: https://github.com/GatorSense/LACE.
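As background for the abstract above, the classical ACE detection statistic that motivates LACE can be sketched as below. This is a minimal illustration of the textbook squared-cosine form, not the learnable loss from the paper or the code in the linked repository. The function names, the plain-list vector representation, and the assumption that the target signature `s` is already expressed relative to the background mean are choices made for this example only.

```python
def _mat_vec(matrix, vector):
    """Multiply a square matrix (list of rows) by a vector (list)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, vector)) for row in matrix]


def _dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def ace_statistic(x, s, mean, inv_cov):
    """Squared-cosine ACE statistic between sample x and target signature s.

    mean and inv_cov are the background mean and inverse covariance.
    Assumption: s is already given relative to the background mean.
    Returns a value in [0, 1]; 1 means x is aligned with s in whitened space.
    """
    d = [xi - mi for xi, mi in zip(x, mean)]  # center the sample
    inv_cov_d = _mat_vec(inv_cov, d)
    inv_cov_s = _mat_vec(inv_cov, s)
    numerator = _dot(s, inv_cov_d) ** 2
    denominator = _dot(s, inv_cov_s) * _dot(d, inv_cov_d)
    return numerator / denominator


identity = [[1.0, 0.0], [0.0, 1.0]]
aligned = ace_statistic([2.0, 0.0], [1.0, 0.0], [0.0, 0.0], identity)
orthogonal = ace_statistic([0.0, 3.0], [1.0, 0.0], [0.0, 0.0], identity)
```

With an identity covariance the statistic reduces to the squared cosine of the angle between the centered sample and the signature, which is the angular information the abstract refers to.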

Possibilistic Fuzzy Local Information C-Means with Automated Feature Selection for Seafloor Segmentation

Oct 14, 2021

Abstract: The Possibilistic Fuzzy Local Information C-Means (PFLICM) method is presented as a technique to segment side-look synthetic aperture sonar (SAS) imagery into distinct regions of the sea-floor. In this work, we investigate and present the results of an automated feature selection approach for SAS image segmentation. The chosen features and resulting segmentation from the image will be assessed based on a select quantitative clustering validity criterion and the subset of the features that reach a desired threshold will be used for the segmentation process.

Addressing Annotation Imprecision for Tree Crown Delineation Using the RandCrowns Index

May 05, 2021

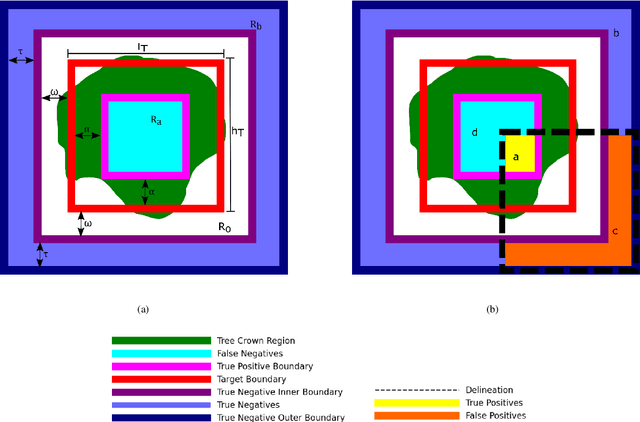

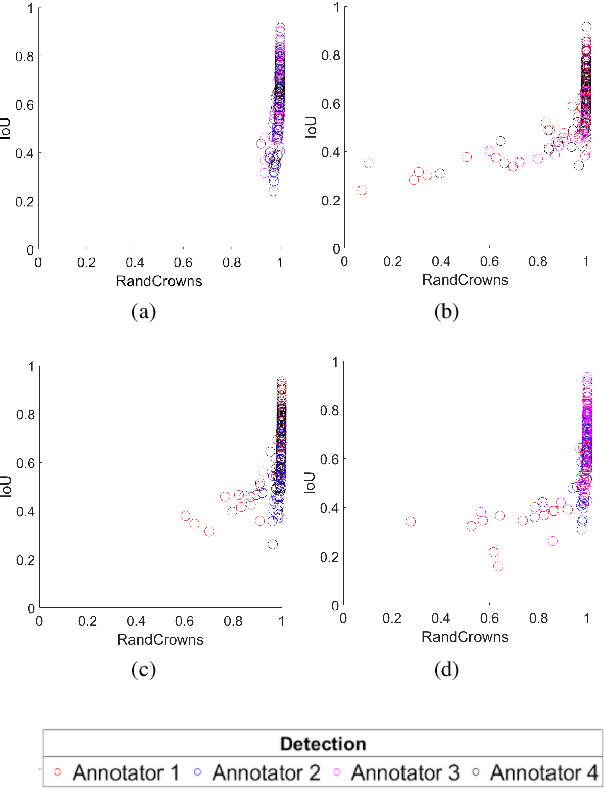

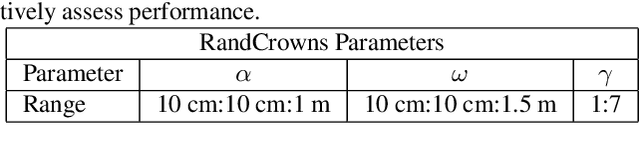

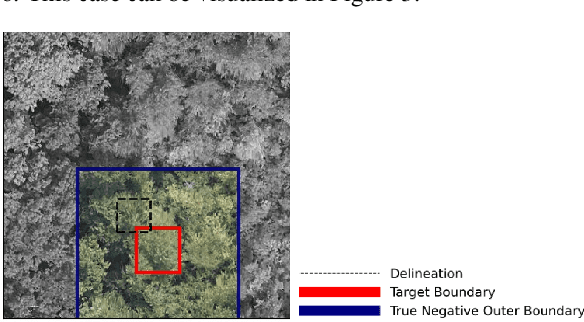

Abstract: Supervised methods for object delineation in remote sensing require labeled ground-truth data. Gathering sufficient high-quality ground-truth data is difficult, especially when the targets are of irregular shape or difficult to distinguish from the background or neighboring objects. Tree crown delineation provides key information from remote sensing images for forestry, ecology, and management. However, tree crowns in remote sensing imagery are often difficult to label and annotate due to irregular shape, overlapping canopies, shadowing, and indistinct edges. There are also multiple approaches to annotation in this field (e.g., rectangular boxes vs. convex polygons) that further contribute to annotation imprecision. However, current evaluation methods do not account for this uncertainty in annotations, and quantitative metrics for evaluation can vary across multiple annotators. We address these limitations using an adaptation of the Rand index for weakly-labeled crown delineation that we call RandCrowns. The RandCrowns metric reformulates the Rand index by adjusting the areas over which each term of the index is computed to account for uncertain and imprecise object delineation labels. Quantitative comparisons to the commonly used intersection over union (Jaccard similarity) method show a decrease in the variance generated by differences among multiple annotators. Combined with qualitative examples, our results suggest that this RandCrowns metric is more robust for scoring target delineations in the presence of uncertainty and imprecision in annotations that are inherent to tree crown delineation. Although the focus of this paper is on evaluation of tree crown delineations, annotation imprecision is a challenge that is common across remote sensing of the environment (and many computer vision problems in general).
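For reference, the intersection over union (Jaccard similarity) baseline named in the abstract can be sketched as follows. This is only the standard baseline, not the RandCrowns metric itself. The function name `iou` and the representation of a delineation as a set of `(row, col)` pixel coordinates are assumptions made for this example.

```python
def iou(predicted_pixels, annotated_pixels):
    """Intersection over union (Jaccard similarity) of two pixel sets.

    Each argument is a set of (row, col) tuples covered by a delineation.
    Returns 0.0 when both sets are empty (a convention chosen for this sketch).
    """
    union = predicted_pixels | annotated_pixels
    if not union:
        return 0.0
    intersection = predicted_pixels & annotated_pixels
    return len(intersection) / len(union)


# One predicted crown scored against two hypothetical annotators whose
# outlines differ slightly: the score changes with the annotator.
prediction = {(0, 0), (0, 1), (1, 0), (1, 1)}
annotator_a = {(0, 0), (0, 1), (1, 0), (1, 1)}
annotator_b = {(0, 1), (1, 1), (0, 2), (1, 2)}

score_a = iou(prediction, annotator_a)
score_b = iou(prediction, annotator_b)
```

The gap between `score_a` and `score_b` for the same prediction illustrates the annotator-dependent variance that the abstract says RandCrowns is designed to reduce.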

The Weakly-Labeled Rand Index

Mar 09, 2021

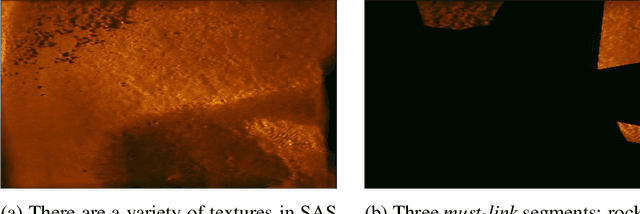

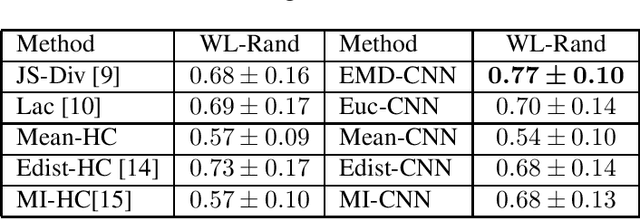

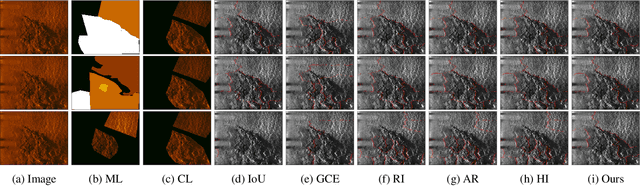

Abstract: Synthetic Aperture Sonar (SAS) surveys produce imagery with large regions of transition between seabed types. Due to these regions, it is difficult to label and segment the imagery and, furthermore, challenging to score the image segmentations appropriately. While there are many approaches to quantify performance in standard crisp segmentation schemes, drawing hard boundaries in remote sensing imagery where gradients and regions of uncertainty exist is inappropriate. These cases warrant weak labels and an associated appropriate scoring approach. In this paper, a labeling approach and associated modified version of the Rand index for weakly-labeled data is introduced to address these issues. Results are evaluated with the new index and compared to traditional segmentation evaluation methods. Experimental results on a SAS data set containing must-link and cannot-link labels show that our Weakly-Labeled Rand index scores segmentations appropriately in reference to qualitative performance and is more suitable than traditional quantitative metrics for scoring weakly-labeled data.
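The classic Rand index that the abstract's weakly-labeled variant modifies can be sketched as below. This is the standard pair-counting definition for crisp labels, not the Weakly-Labeled Rand index from the paper. The function name `rand_index` and the flat-list label format are assumptions made for this example.

```python
from itertools import combinations


def rand_index(labels_a, labels_b):
    """Standard Rand index between two crisp labelings of the same items.

    Counts the fraction of item pairs on which the two labelings agree:
    either both put the pair in the same group, or both split it.
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("labelings must cover the same items")
    agreements = 0
    total_pairs = 0
    for i, j in combinations(range(len(labels_a)), 2):
        same_in_a = labels_a[i] == labels_a[j]
        same_in_b = labels_b[i] == labels_b[j]
        if same_in_a == same_in_b:
            agreements += 1
        total_pairs += 1
    return agreements / total_pairs


# Label names differ but the grouping is identical, so every pair agrees.
identical_grouping = rand_index([0, 0, 1, 1], [1, 1, 0, 0])
# The two labelings agree on only 2 of the 6 pairs.
crossed_grouping = rand_index([0, 0, 1, 1], [0, 1, 0, 1])
```

Because the index is defined over pairs, it maps naturally onto pairwise must-link and cannot-link constraints of the kind the abstract mentions.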

Explainable Systematic Analysis for Synthetic Aperture Sonar Imagery

Jan 21, 2021

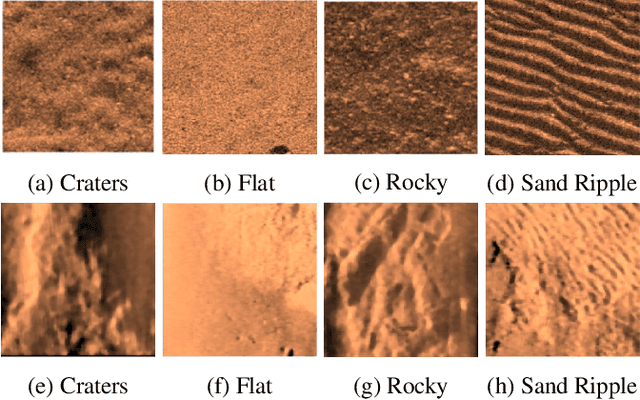

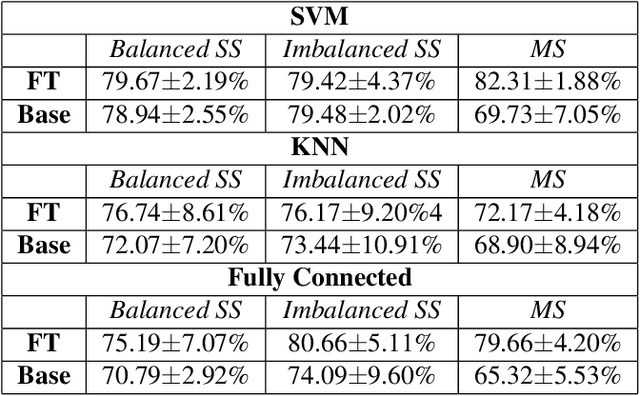

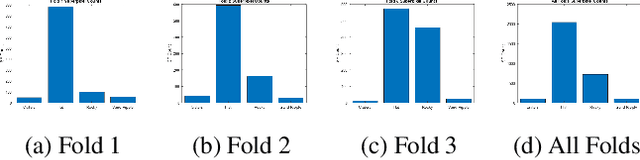

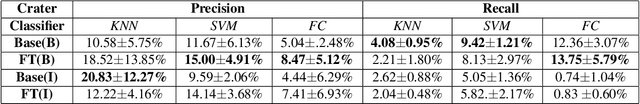

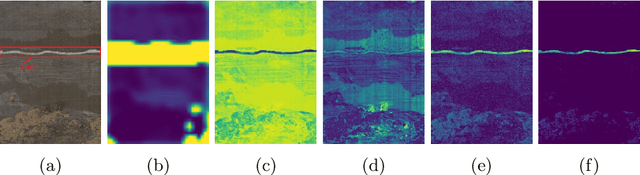

Abstract: In this work, we present an in-depth and systematic analysis using tools such as local interpretable model-agnostic explanations (LIME) (arXiv:1602.04938) and divergence measures to analyze what changes lead to improvement in performance in fine-tuned models for synthetic aperture sonar (SAS) data. We examine the sensitivity to factors in the fine-tuning process such as class imbalance. Our findings not only show an improvement in seafloor texture classification, but also provide greater insight into what features play critical roles in improving performance, as well as knowledge of the importance of balanced data for fine-tuning deep learning models for seafloor classification in SAS imagery.

Divergence Regulated Encoder Network for Joint Dimensionality Reduction and Classification

Jan 21, 2021

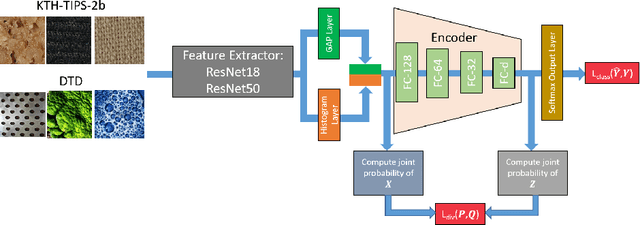

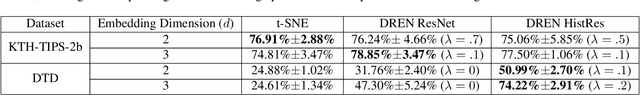

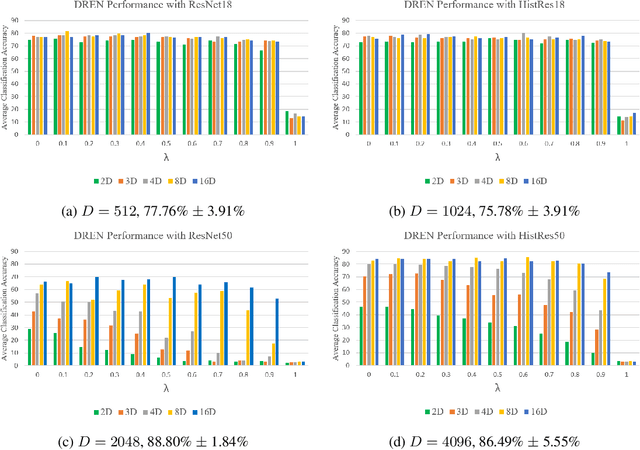

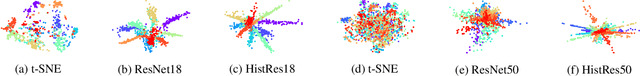

Abstract: In this paper, we investigate performing joint dimensionality reduction and classification using a novel histogram neural network. Motivated by a popular dimensionality reduction approach, t-Distributed Stochastic Neighbor Embedding (t-SNE), our proposed method incorporates a classification loss computed on samples in a low-dimensional embedding space. We compare the learned sample embeddings against coordinates found by t-SNE in terms of classification accuracy and qualitative assessment. We also explore use of various divergence measures in the t-SNE objective. The proposed method has several advantages such as readily embedding out-of-sample points and reducing feature dimensionality while retaining class discriminability. Our results show that the proposed approach maintains and/or improves classification performance and reveals characteristics of features produced by neural networks that may be helpful for other applications.

Weakly Supervised Minirhizotron Image Segmentation with MIL-CAM

Jul 30, 2020

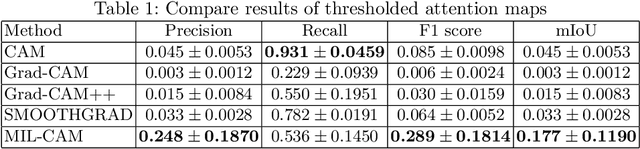

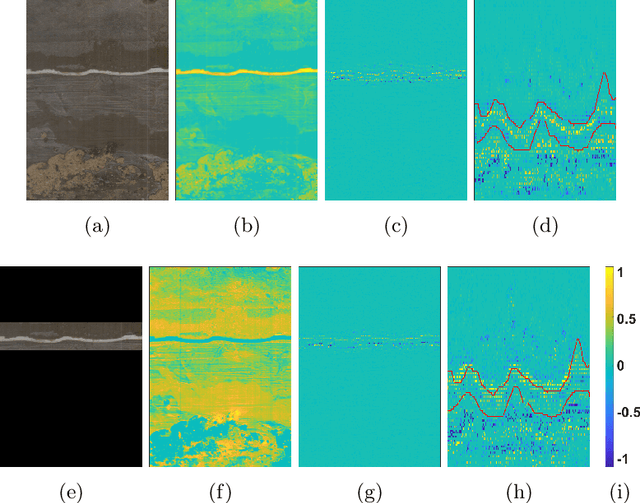

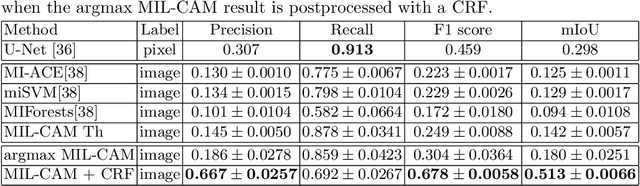

Abstract: We present a multiple instance learning class activation map (MIL-CAM) approach for pixel-level minirhizotron image segmentation given weak image-level labels. Minirhizotrons are used to image plant roots in situ. Minirhizotron imagery is often composed of soil containing a few long and thin root objects of small diameter. The roots prove to be challenging for existing semantic image segmentation methods to discriminate. In addition to learning from weak labels, our proposed MIL-CAM approach re-weights the root versus soil pixels during analysis for improved performance due to the heavy imbalance between soil and root pixels. The proposed approach outperforms other attention map and multiple instance learning methods for localization of root objects in minirhizotron imagery.

Outlier Detection through Null Space Analysis of Neural Networks

Jul 02, 2020

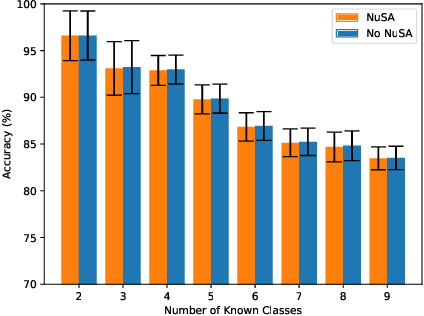

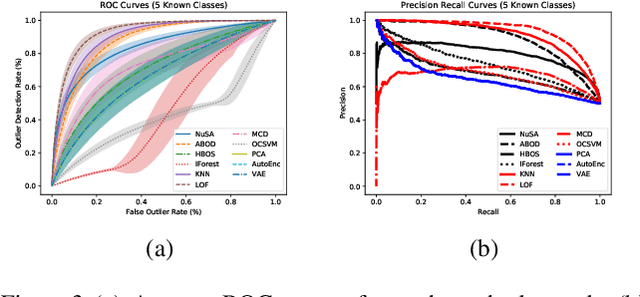

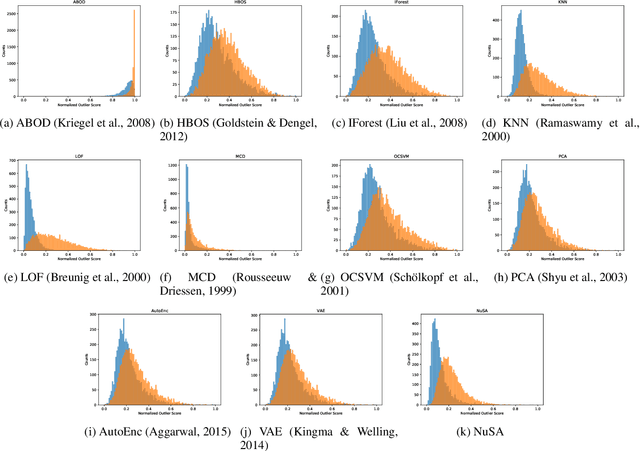

Abstract: Many machine learning classification systems lack competency awareness. Specifically, many systems lack the ability to identify when outliers (e.g., samples that are distinct from and not represented in the training data distribution) are being presented to the system. The ability to detect outliers is of practical significance since it can help the system behave in a reasonable way when encountering unexpected data. In prior work, outlier detection is commonly carried out in a processing pipeline that is distinct from the classification model. Thus, for a complete system that incorporates outlier detection and classification, two models must be trained, increasing the overall complexity of the approach. In this paper we use the concept of the null space to integrate an outlier detection method directly into a neural network used for classification. Our method, called Null Space Analysis (NuSA) of neural networks, works by computing and controlling the magnitude of the null space projection as data is passed through a network. Using these projections, we can then calculate a score that can differentiate between normal and abnormal data. Results are shown that indicate networks trained with NuSA retain their classification performance while also being able to detect outliers at rates similar to commonly used outlier detection algorithms.

Super Resolution for Root Imaging

Mar 30, 2020

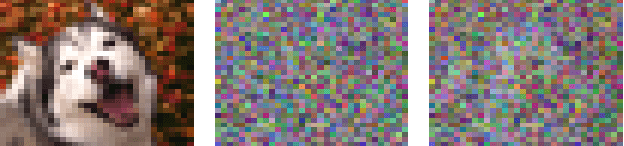

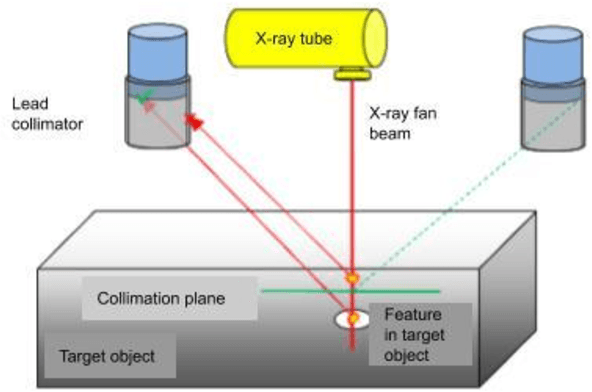

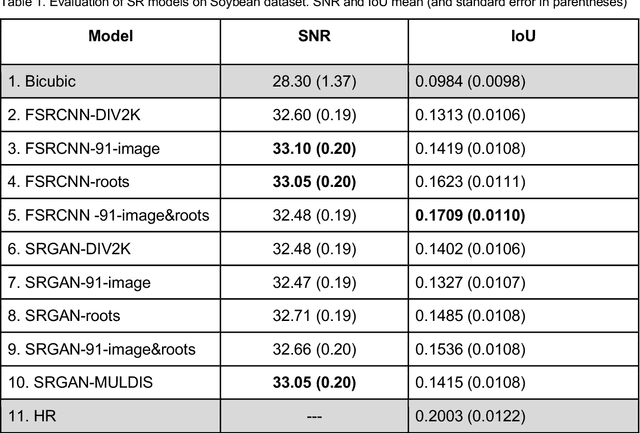

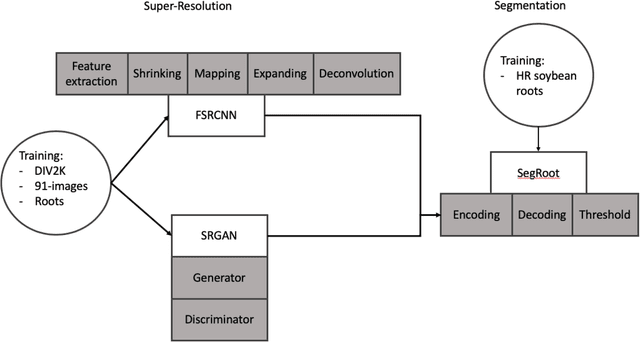

Abstract: High-resolution cameras have become very helpful for plant phenotyping by providing a mechanism for tasks such as target versus background discrimination, and the measurement and analysis of fine above-ground plant attributes, e.g., the venation network of leaves. However, the acquisition of high-resolution (HR) imagery of roots in situ remains a challenge. We apply super-resolution (SR) convolutional neural networks (CNNs) to boost the resolution capability of a backscatter X-ray system designed to image buried roots. To overcome limited available backscatter X-ray data for training, we compare three alternatives for training: i) non-plant-root images, ii) plant-root images, and iii) pretraining the model with non-plant-root images and fine-tuning with plant-root images; and two deep learning approaches: i) Fast Super Resolution Convolutional Neural Network and ii) Super Resolution Generative Adversarial Network. We evaluate SR performance using signal to noise ratio (SNR) and intersection over union (IoU) metrics when segmenting the SR images. In our experiments, we observe that the studied SR models improve the quality of the low-resolution (LR) images of plant roots of an unseen dataset in terms of SNR. Likewise, we demonstrate that SR pre-processing boosts the performance of a machine learning system trained to separate plant roots from their background. In addition, we show examples of backscatter X-ray images upscaled by using the SR model. The current technology for non-intrusive root imaging acquires noisy and LR images. In this study, we show that this issue can be tackled by the incorporation of a deep-learning based SR model in the image formation process.
