Ali Soltan Mohammadi

RESQ: A Unified Framework for REliability- and Security Enhancement of Quantized Deep Neural Networks

Mar 16, 2026

Abstract: This work proposes a unified three-stage framework that produces a quantized DNN with balanced fault and attack robustness. The first stage improves attack resilience via fine-tuning that desensitizes feature representations to small input perturbations. The second stage reinforces fault resilience through fault-aware fine-tuning under simulated bit-flip faults. Finally, a lightweight post-training adjustment integrates quantization to enhance efficiency and further mitigate fault sensitivity without degrading attack resilience. Experiments on ResNet18, VGG16, EfficientNet, and Swin-Tiny in CIFAR-10, CIFAR-100, and GTSRB show consistent gains of up to 10.35% in attack resilience and 12.47% in fault resilience, while maintaining competitive accuracy in quantized networks. The results also highlight an asymmetric interaction in which improvements in fault resilience generally increase resilience to adversarial attacks, whereas enhanced adversarial resilience does not necessarily lead to higher fault resilience.
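The abstract does not specify the fault model behind its "simulated bit-flip faults", so the following is only a minimal sketch of one common way to simulate them: weights are quantized to signed 8-bit integers and each stored bit is flipped independently with a fixed probability. The function names, the symmetric quantization scheme, the scale, and the bit error rate are all illustrative assumptions, not details taken from the paper.

```python
import random


def quantize_int8(weights, scale):
    """Symmetric uniform quantization of floats to signed 8-bit integers (assumed scheme)."""
    return [max(-128, min(127, round(w / scale))) for w in weights]


def flip_bit(q, bit):
    """Flip one bit (0 = least significant) of a signed 8-bit integer in two's complement."""
    raw = (q & 0xFF) ^ (1 << bit)             # view as an unsigned byte and flip the bit
    return raw - 256 if raw >= 128 else raw   # map back to the signed range


def inject_bit_flips(qweights, bit_error_rate, rng):
    """Flip every stored bit independently with probability bit_error_rate."""
    faulty = []
    for q in qweights:
        for bit in range(8):
            if rng.random() < bit_error_rate:
                q = flip_bit(q, bit)
        faulty.append(q)
    return faulty


# Hypothetical weights, scale, and error rate, chosen only for illustration.
weights = [0.42, -0.17, 0.05, -0.88]
scale = 1.0 / 127
q = quantize_int8(weights, scale)
faulty = inject_bit_flips(q, bit_error_rate=0.05, rng=random.Random(0))
print(q, faulty)
```

In a fault-aware fine-tuning loop, a routine like `inject_bit_flips` would typically be applied to the quantized weights before each forward pass so the network learns to tolerate the corrupted values; how RESQ actually does this is not stated in the abstract.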

Learning Fuzzy β-Certain and β-Possible rules from incomplete quantitative data by rough sets

Apr 06, 2012

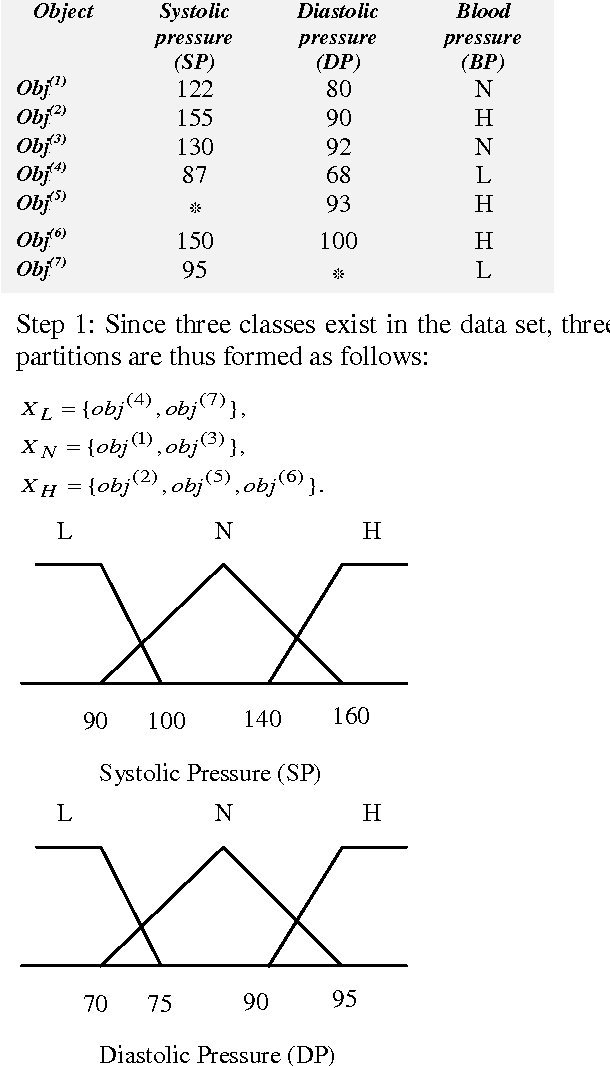

Abstract: The rough-set theory proposed by Pawlak has been widely used in data classification problems. The original rough-set model is, however, quite sensitive to noisy data. Tzung therefore addressed the problem of producing a set of fuzzy certain and fuzzy possible rules from quantitative data with a predefined tolerance degree of uncertainty and misclassification, proposing a model that combines the variable precision rough-set model and fuzzy set theory. This paper deals with the problem of producing a set of fuzzy certain and fuzzy possible rules from incomplete quantitative data with a predefined tolerance degree of uncertainty and misclassification. A new method, which applies the rough-set model and fuzzy set theory to incomplete quantitative data, is proposed to solve this problem. It first transforms each quantitative value into a fuzzy set of linguistic terms using membership functions, taking the incomplete quantitative data into account. It then calculates the fuzzy β-lower and fuzzy β-upper approximations. The certain and possible rules are generated from these fuzzy approximations and can then be used to classify unknown objects.
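The first step described in the abstract, turning a quantitative value into a fuzzy set of linguistic terms, can be sketched as follows. This is an illustration only: the triangular membership functions, the terms `Low`, `Middle`, and `High`, the 0 to 100 scale, and the choice to pass a missing value through as `None` are assumptions, since the abstract does not give the paper's membership functions or its treatment of missing values.

```python
def triangular(x, a, b, c):
    """Membership of x in a triangular fuzzy set with feet at a and c and peak at b."""
    if x <= a or x >= c:
        return 0.0
    if x <= b:
        return (x - a) / (b - a)
    return (c - x) / (c - b)


# Hypothetical linguistic terms for an attribute measured on a 0..100 scale.
TERMS = {
    "Low": (-50, 0, 50),
    "Middle": (0, 50, 100),
    "High": (50, 100, 150),
}


def fuzzify(value):
    """Map a quantitative value to its membership degree in each linguistic term.

    A missing value (None) is passed through unchanged; this is a placeholder,
    not the paper's handling of incomplete data.
    """
    if value is None:
        return None
    return {term: triangular(value, *params) for term, params in TERMS.items()}


print(fuzzify(75))
print(fuzzify(None))
```

With these assumed terms, the membership degrees of any value between 0 and 100 sum to 1, which keeps the fuzzy sets easy to interpret; the fuzzy β-lower and β-upper approximations would then be computed over these degrees.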
