Ali Kashefi

Flow Matching and Diffusion Models via PointNet for Generating Fluid Fields on Irregular Geometries

Jan 06, 2026Abstract:We present two novel generative geometric deep learning frameworks, termed Flow Matching PointNet and Diffusion PointNet, for predicting fluid flow variables on irregular geometries by incorporating PointNet into flow matching and diffusion models, respectively. In these frameworks, a reverse generative process reconstructs physical fields from standard Gaussian noise conditioned on unseen geometries. The proposed approaches operate directly on point-cloud representations of computational domains (e.g., grid vertices of finite-volume meshes) and therefore avoid the limitations of pixelation used to project geometries onto uniform lattices. In contrast to graph neural network-based diffusion models, Flow Matching PointNet and Diffusion PointNet do not exhibit high-frequency noise artifacts in the predicted fields. Moreover, unlike such approaches, which require auxiliary intermediate networks to condition geometry, the proposed frameworks rely solely on PointNet, resulting in a simple and unified architecture. The performance of the proposed frameworks is evaluated on steady incompressible flow past a cylinder, using a geometric dataset constructed by varying the cylinder's cross-sectional shape and orientation across samples. The results demonstrate that Flow Matching PointNet and Diffusion PointNet achieve more accurate predictions of velocity and pressure fields, as well as lift and drag forces, and exhibit greater robustness to incomplete geometries compared to a vanilla PointNet with the same number of trainable parameters.

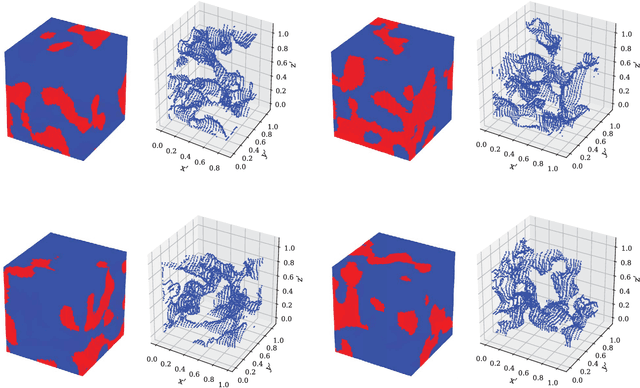

Vision Mamba for Permeability Prediction of Porous Media

Oct 16, 2025

Abstract:Vision Mamba has recently received attention as an alternative to Vision Transformers (ViTs) for image classification. The network size of Vision Mamba scales linearly with input image resolution, whereas ViTs scale quadratically, a feature that improves computational and memory efficiency. Moreover, Vision Mamba requires a significantly smaller number of trainable parameters than traditional convolutional neural networks (CNNs), and thus, they can be more memory efficient. Because of these features, we introduce, for the first time, a neural network that uses Vision Mamba as its backbone for predicting the permeability of three-dimensional porous media. We compare the performance of Vision Mamba with ViT and CNN models across multiple aspects of permeability prediction and perform an ablation study to assess the effects of its components on accuracy. We demonstrate in practice the aforementioned advantages of Vision Mamba over ViTs and CNNs in the permeability prediction of three-dimensional porous media. We make the source code publicly available to facilitate reproducibility and to enable other researchers to build on and extend this work. We believe the proposed framework has the potential to be integrated into large vision models in which Vision Mamba is used instead of ViTs.

Physics-informed KAN PointNet: Deep learning for simultaneous solutions to inverse problems in incompressible flow on numerous irregular geometries

Apr 08, 2025

Abstract:Kolmogorov-Arnold Networks (KANs) have gained attention as a promising alternative to traditional Multilayer Perceptrons (MLPs) for deep learning applications in computational physics, especially within the framework of physics-informed neural networks (PINNs). Physics-informed Kolmogorov-Arnold Networks (PIKANs) and their variants have been introduced and evaluated to solve inverse problems. However, similar to PINNs, current versions of PIKANs are limited to obtaining solutions for a single computational domain per training run; consequently, a new geometry requires retraining the model from scratch. Physics-informed PointNet (PIPN) was introduced to address this limitation for PINNs. In this work, we introduce physics-informed Kolmogorov-Arnold PointNet (PI-KAN-PointNet) to extend this capability to PIKANs. PI-KAN-PointNet enables the simultaneous solution of an inverse problem over multiple irregular geometries within a single training run, reducing computational costs. We construct KANs using Jacobi polynomials and investigate their performance by considering Jacobi polynomials of different degrees and types in terms of both computational cost and prediction accuracy. As a benchmark test case, we consider natural convection in a square enclosure with a cylinder, where the cylinder's shape varies across a dataset of 135 geometries. We compare the performance of PI-KAN-PointNet with that of PIPN (i.e., physics-informed PointNet with MLPs) and observe that, with approximately an equal number of trainable parameters and similar computational cost, PI-KAN-PointNet provides more accurate predictions. Finally, we explore the combination of KAN and MLP in constructing a physics-informed PointNet. Our findings indicate that a physics-informed PointNet model employing MLP layers as the encoder and KAN layers as the decoder represents the optimal configuration among all models investigated.

PointNet with KAN versus PointNet with MLP for 3D Classification and Segmentation of Point Sets

Oct 14, 2024Abstract:We introduce PointNet-KAN, a neural network for 3D point cloud classification and segmentation tasks, built upon two key components. First, it employs Kolmogorov-Arnold Networks (KANs) instead of traditional Multilayer Perceptrons (MLPs). Second, it retains the core principle of PointNet by using shared KAN layers and applying symmetric functions for global feature extraction, ensuring permutation invariance with respect to the input features. In traditional MLPs, the goal is to train the weights and biases with fixed activation functions; however, in KANs, the goal is to train the activation functions themselves. We use Jacobi polynomials to construct the KAN layers. We extensively evaluate PointNet-KAN across various polynomial degrees and special types such as the Lagrange, Chebyshev, and Gegenbauer polynomials. Our results show that PointNet-KAN achieves competitive performance compared to PointNet with MLPs on benchmark datasets for 3D object classification and segmentation, despite employing a shallower and simpler network architecture. We hope this work serves as a foundation and provides guidance for integrating KANs, as an alternative to MLPs, into more advanced point cloud processing architectures.

Kolmogorov-Arnold PointNet: Deep learning for prediction of fluid fields on irregular geometries

Aug 06, 2024Abstract:We present Kolmogorov-Arnold PointNet (KA-PointNet) as a novel supervised deep learning framework for the prediction of incompressible steady-state fluid flow fields in irregular domains, where the predicted fields are a function of the geometry of the domains. In KA-PointNet, we implement shared Kolmogorov-Arnold Networks (KANs) in the segmentation branch of the PointNet architecture. We utilize Jacobi polynomials to construct shared KANs. As a benchmark test case, we consider incompressible laminar steady-state flow over a cylinder, where the geometry of its cross-section varies over the data set. We investigate the performance of Jacobi polynomials with different degrees as well as special cases of Jacobi polynomials such as Legendre polynomials, Chebyshev polynomials of the first and second kinds, and Gegenbauer polynomials, in terms of the computational cost of training and accuracy of prediction of the test set. Additionally, we compare the performance of PointNet with shared KANs (i.e., KA-PointNet) and PointNet with shared Multilayer Perceptrons (MLPs). It is observed that when the number of trainable parameters is approximately equal, PointNet with shared KANs (i.e., KA-PointNet) outperforms PointNet with shared MLPs.

A Misleading Gallery of Fluid Motion by Generative Artificial Intelligence

May 24, 2024

Abstract:In this technical report, we extensively investigate the accuracy of outputs from well-known generative artificial intelligence (AI) applications in response to prompts describing common fluid motion phenomena familiar to the fluid mechanics community. We examine a range of applications, including Midjourney, Dall-E, Runway ML, Microsoft Designer, Gemini, Meta AI, and Leonardo AI, introduced by prominent companies such as Google, OpenAI, Meta, and Microsoft. Our text prompts for generating images or videos include examples such as "Von Karman vortex street", "flow past an airfoil", "Kelvin-Helmholtz instability", "shock waves on a sharp-nosed supersonic body", etc. We compare the images generated by these applications with real images from laboratory experiments and numerical software. Our findings indicate that these generative AI models are not adequately trained in fluid dynamics imagery, leading to potentially misleading outputs. Beyond text-to-image/video generation, we further explore the transition from image/video to text generation using these AI tools, aiming to investigate the accuracy of their descriptions of fluid motion phenomena. This report serves as a cautionary note for educators in academic institutions, highlighting the potential for these tools to mislead students. It also aims to inform researchers at these renowned companies, encouraging them to address this issue. We conjecture that a primary reason for this shortcoming is the limited access to copyright-protected fluid motion images from scientific journals.

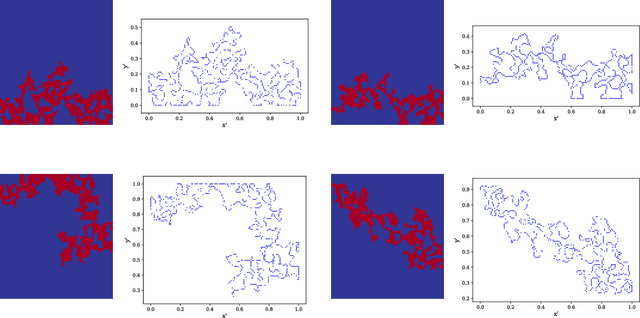

A novel Fourier neural operator framework for classification of multi-sized images: Application to 3D digital porous media

Feb 18, 2024

Abstract:Fourier neural operators (FNOs) are invariant with respect to the size of input images, and thus images with any size can be fed into FNO-based frameworks without any modification of network architectures, in contrast to traditional convolutional neural networks (CNNs). Leveraging the advantage of FNOs, we propose a novel deep-learning framework for classifying images with varying sizes. Particularly, we simultaneously train the proposed network on multi-sized images. As a practical application, we consider the problem of predicting the label (e.g., permeability) of three-dimensional digital porous media. To construct the framework, an intuitive approach is to connect FNO layers to a classifier using adaptive max pooling. First, we show that this approach is only effective for porous media with fixed sizes, whereas it fails for porous media of varying sizes. To overcome this limitation, we introduce our approach: instead of using adaptive max pooling, we use static max pooling with the size of channel width of FNO layers. Since the channel width of the FNO layers is independent of input image size, the introduced framework can handle multi-sized images during training. We show the effectiveness of the introduced framework and compare its performance with the intuitive approach through the example of the classification of three-dimensional digital porous media of varying sizes.

Physics-informed PointNet: On how many irregular geometries can it solve an inverse problem simultaneously? Application to linear elasticity

Apr 03, 2023Abstract:Regular physics-informed neural networks (PINNs) predict the solution of partial differential equations using sparse labeled data but only over a single domain. On the other hand, fully supervised learning models are first trained usually over a few thousand domains with known solutions (i.e., labeled data) and then predict the solution over a few hundred unseen domains. Physics-informed PointNet (PIPN) is primarily designed to fill this gap between PINNs (as weakly supervised learning models) and fully supervised learning models. In this article, we demonstrate that PIPN predicts the solution of desired partial differential equations over a few hundred domains simultaneously, while it only uses sparse labeled data. This framework benefits fast geometric designs in the industry when only sparse labeled data are available. Particularly, we show that PIPN predicts the solution of a plane stress problem over more than 500 domains with different geometries, simultaneously. Moreover, we pioneer implementing the concept of remarkable batch size (i.e., the number of geometries fed into PIPN at each sub-epoch) into PIPN. Specifically, we try batch sizes of 7, 14, 19, 38, 76, and 133. Additionally, the effect of the PIPN size, symmetric function in the PIPN architecture, and static and dynamic weights for the component of the sparse labeled data in the loss function are investigated.

ChatGPT for Programming Numerical Methods

Mar 25, 2023

Abstract:ChatGPT is a large language model recently released by the OpenAI company. In this technical report, we explore for the first time the capability of ChatGPT for programming numerical algorithms. Specifically, we examine the capability of GhatGPT for generating codes for numerical algorithms in different programming languages, for debugging and improving written codes by users, for completing missed parts of numerical codes, rewriting available codes in other programming languages, and for parallelizing serial codes. Additionally, we assess if ChatGPT can recognize if given codes are written by humans or machines. To reach this goal, we consider a variety of mathematical problems such as the Poisson equation, the diffusion equation, the incompressible Navier-Stokes equations, compressible inviscid flow, eigenvalue problems, solving linear systems of equations, storing sparse matrices, etc. Furthermore, we exemplify scientific machine learning such as physics-informed neural networks and convolutional neural networks with applications to computational physics. Through these examples, we investigate the successes, failures, and challenges of ChatGPT. Examples of failures are producing singular matrices, operations on arrays with incompatible sizes, programming interruption for relatively long codes, etc. Our outcomes suggest that ChatGPT can successfully program numerical algorithms in different programming languages, but certain limitations and challenges exist that require further improvement of this machine learning model.

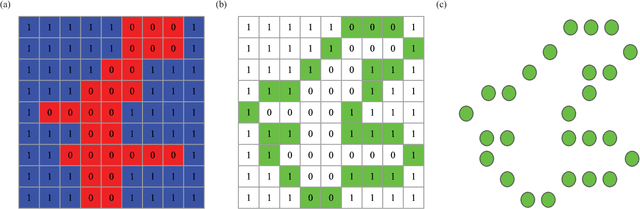

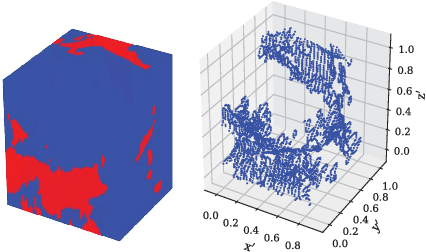

Point-Cloud Deep Learning of Porous Media for Permeability Prediction

Jul 18, 2021

Abstract:We propose a novel deep learning framework for predicting permeability of porous media from their digital images. Unlike convolutional neural networks, instead of feeding the whole image volume as inputs to the network, we model the boundary between solid matrix and pore spaces as point clouds and feed them as inputs to a neural network based on the PointNet architecture. This approach overcomes the challenge of memory restriction of graphics processing units and its consequences on the choice of batch size, and convergence. Compared to convolutional neural networks, the proposed deep learning methodology provides freedom to select larger batch sizes, due to reducing significantly the size of network inputs. Specifically, we use the classification branch of PointNet and adjust it for a regression task. As a test case, two and three dimensional synthetic digital rock images are considered. We investigate the effect of different components of our neural network on its performance. We compare our deep learning strategy with a convolutional neural network from various perspectives, specifically for maximum possible batch size. We inspect the generalizability of our network by predicting the permeability of real-world rock samples as well as synthetic digital rocks that are statistically different from the samples used during training. The network predicts the permeability of digital rocks a few thousand times faster than a Lattice Boltzmann solver with a high level of prediction accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge