Alan Medlar

Unexplored Frontiers: A Review of Empirical Studies of Exploratory Search

Dec 22, 2023

Abstract: This article reviews how empirical research of exploratory search is conducted. We investigated aspects of interdisciplinarity, study settings and evaluation methodologies from a systematically selected sample of 231 publications from 2010-2021, including a total of 172 articles with empirical studies. Our results show that exploratory search is highly interdisciplinary, with the most frequently occurring publication venues including high impact venues in information science, information systems and human-computer interaction. However, taken in aggregate, the breadth of study settings investigated was limited. We found that a majority of studies (77%) focused on evaluating novel retrieval systems as opposed to investigating users' search processes. Furthermore, a disproportionate number of studies were based on scientific literature search (20.7%), a majority of which only considered searching for Computer Science articles. Study participants were generally from convenience samples, with 75% of studies composed exclusively of students and other academics. The methodologies used for evaluation were mostly quantitative, but lacked consistency between studies and validated questionnaires were rarely used. In discussion, we offer a critical analysis of our findings and suggest potential improvements for future exploratory search studies.

Statistically significant detection of semantic shifts using contextual word embeddings

Apr 08, 2021

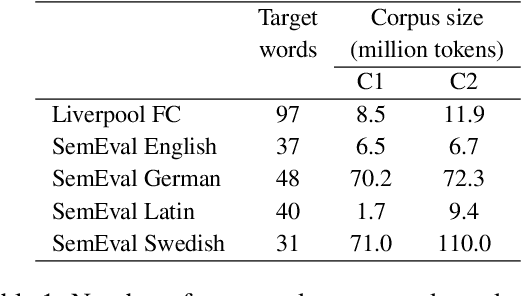

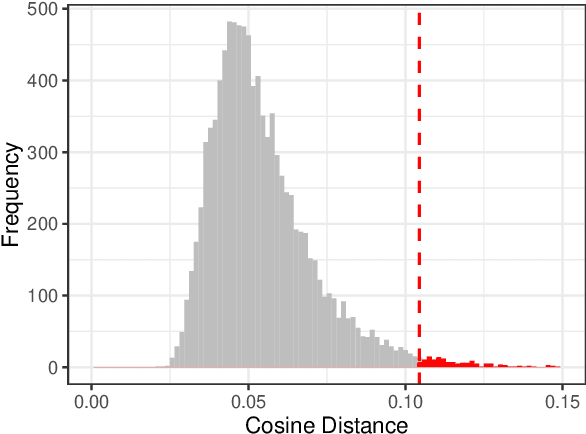

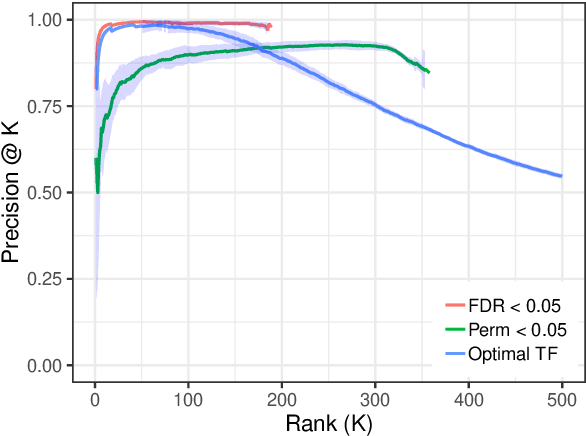

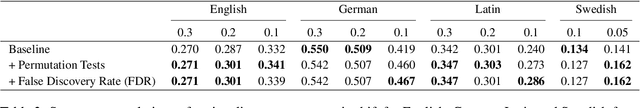

Abstract: Detecting lexical semantic shifts in smaller data sets, e.g. in historical linguistics and digital humanities, is challenging due to a lack of statistical power. This issue is exacerbated by non-contextual word embeddings that produce one embedding per token and therefore mask the variability present in the data. In this article, we propose an approach to estimate semantic shifts by combining contextual word embeddings with permutation-based statistical tests. Multiple comparisons are addressed using a false discovery rate procedure. We demonstrate the performance of this approach in simulation, achieving consistently high precision by suppressing false positives. We additionally analyzed real-world data from SemEval-2020 Task 1 and the Liverpool FC subreddit corpus. We show that by taking sample variation into account, we can improve the robustness of individual semantic shift estimates without degrading overall performance.
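As a rough illustration of the idea in this abstract, the sketch below combines a permutation test on per-usage embeddings with a false discovery rate correction. It is not the authors' implementation. The abstract does not name a test statistic or a specific FDR procedure, so the cosine distance between mean embeddings and the Benjamini-Hochberg procedure are assumptions, as are all function names. The inputs stand in for contextual embeddings (one vector per occurrence of a target word in each corpus), and only the Python standard library is used to keep the example self-contained.

```python
import math
import random


def mean_vector(rows):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def cosine_distance(u, v):
    """Cosine distance between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def permutation_test(emb_a, emb_b, n_permutations=1000, seed=0):
    """Return (observed shift, permutation p-value) for one target word.

    emb_a, emb_b: lists of usage embeddings, one vector per occurrence of
    the word in each corpus (hypothetical inputs for this sketch).
    Assumed statistic: cosine distance between the two mean embeddings.
    """
    rng = random.Random(seed)
    observed = cosine_distance(mean_vector(emb_a), mean_vector(emb_b))
    pooled = list(emb_a) + list(emb_b)
    n_a = len(emb_a)
    exceed = 0
    for _ in range(n_permutations):
        # Shuffle corpus labels by shuffling the pooled usages.
        rng.shuffle(pooled)
        stat = cosine_distance(mean_vector(pooled[:n_a]),
                               mean_vector(pooled[n_a:]))
        if stat >= observed:
            exceed += 1
    # Add-one correction keeps the p-value strictly positive.
    return observed, (exceed + 1) / (n_permutations + 1)


def benjamini_hochberg(p_values, alpha=0.05):
    """Boolean list marking hypotheses rejected at FDR level alpha."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff = 0
    for rank, idx in enumerate(order, start=1):
        # Remember the largest rank whose p-value meets its threshold.
        if p_values[idx] <= alpha * rank / m:
            cutoff = rank
    rejected = [False] * m
    for idx in order[:cutoff]:
        rejected[idx] = True
    return rejected
```

In use, one would call `permutation_test` once per target word and pass the resulting p-values to `benjamini_hochberg`, reporting only the words it marks as rejected. Because the p-value reflects how variable the individual usages are, a word with few or noisy occurrences is less likely to be flagged than it would be from a single point estimate of the shift.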
