Adrian Bulat

BATS: Binary ArchitecTure Search

Mar 03, 2020

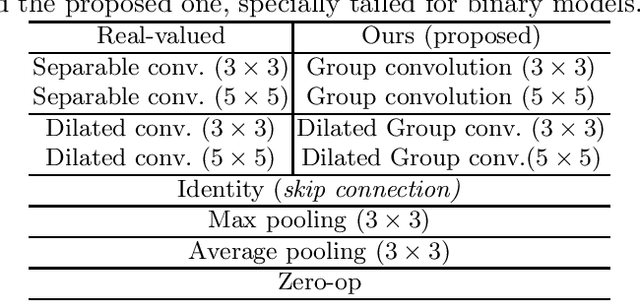

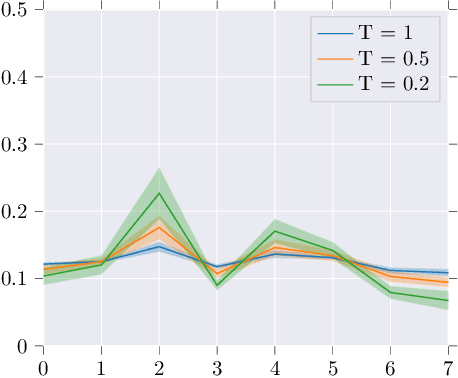

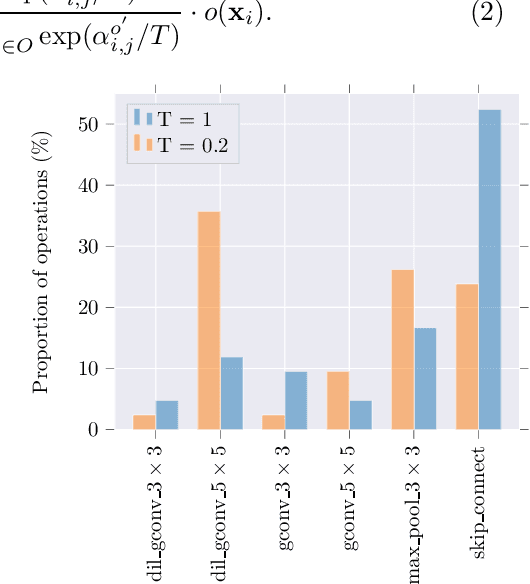

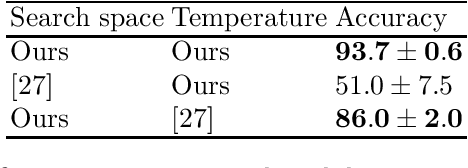

Abstract: This paper proposes Binary ArchitecTure Search (BATS), a framework that drastically reduces the accuracy gap between binary neural networks and their real-valued counterparts by means of Neural Architecture Search (NAS). We show that directly applying NAS to the binary domain provides very poor results. To alleviate this, we describe, to our knowledge for the first time, the 3 key ingredients for successfully applying NAS to the binary domain. Specifically, we (1) introduce and design a novel binary-oriented search space, (2) propose a new mechanism for controlling and stabilising the resulting searched topologies, and (3) propose and validate a series of new search strategies for binary networks that lead to faster convergence and lower search times. Experimental results demonstrate the effectiveness of the proposed approach and the necessity of searching in the binary space directly. Moreover, (4) we set a new state-of-the-art for binary neural networks on the CIFAR10, CIFAR100 and ImageNet datasets. Code will be made available at https://github.com/1adrianb/binary-nas

Toward fast and accurate human pose estimation via soft-gated skip connections

Feb 25, 2020

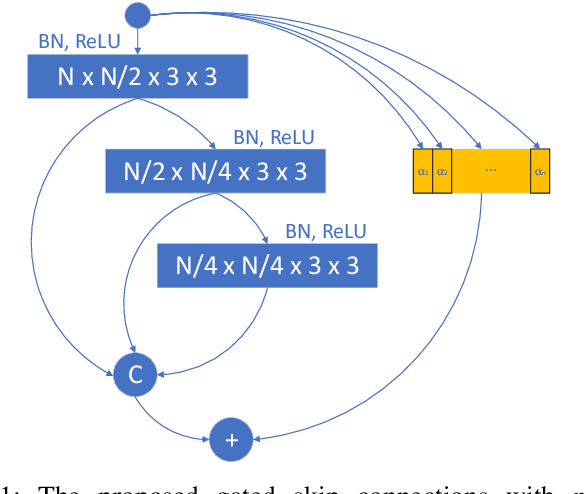

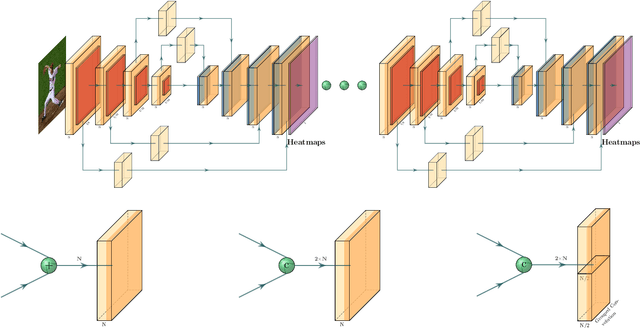

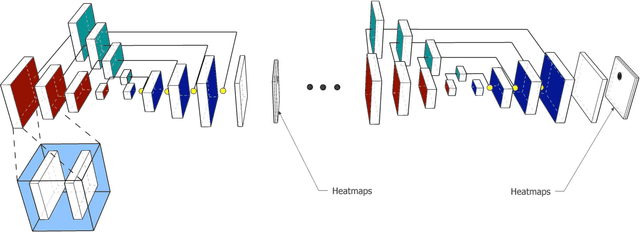

Abstract: This paper is on highly accurate and highly efficient human pose estimation. Recent works based on Fully Convolutional Networks (FCNs) have demonstrated excellent results for this difficult problem. While residual connections within FCNs have proved to be quintessential for achieving high accuracy, we re-analyze this design choice in the context of improving both the accuracy and the efficiency over the state-of-the-art. In particular, we make the following contributions: (a) We propose gated skip connections with per-channel learnable parameters to control the data flow for each channel within the module within the macro-module. (b) We introduce a hybrid network that combines the HourGlass and U-Net architectures, which minimizes the number of identity connections within the network and increases the performance for the same parameter budget. Our model achieves state-of-the-art results on the MPII and LSP datasets. In addition, with a reduction of 3x in model size and complexity, we show no decrease in performance when compared to the original HourGlass network.
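The gated skip connection in contribution (a) can be pictured with a minimal sketch. It assumes the gate is a single scalar per channel that multiplies the identity path before it is added to the block's output. That form, the names `soft_gated_residual`, `alpha` and `toy_transform`, and the `[channel][position]` layout are illustrative assumptions, not the authors' code.

```python
from typing import Callable, List

Feature = List[List[float]]  # feature map laid out as [channel][position]


def soft_gated_residual(
    x: Feature,
    alpha: List[float],
    transform: Callable[[Feature], Feature],
) -> Feature:
    """Return alpha[c] * x[c] + transform(x)[c] for every channel c."""
    if len(alpha) != len(x):
        raise ValueError("need exactly one gate value per channel")
    fx = transform(x)
    return [
        [a * xi + fi for xi, fi in zip(x_c, f_c)]
        for a, x_c, f_c in zip(alpha, x, fx)
    ]


def toy_transform(x: Feature) -> Feature:
    """Hypothetical stand-in for the block's convolutional branch."""
    return [[2.0 * v for v in channel] for channel in x]


x = [[1.0, 2.0], [3.0, 4.0]]
# alpha = 1 behaves like a plain residual connection for that channel,
# alpha = 0 closes the skip path for that channel entirely.
out = soft_gated_residual(x, alpha=[1.0, 0.0], transform=toy_transform)
```

In training, `alpha` would be a learnable parameter updated by backpropagation; here it is fixed so that the effect of each gate value is easy to see.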

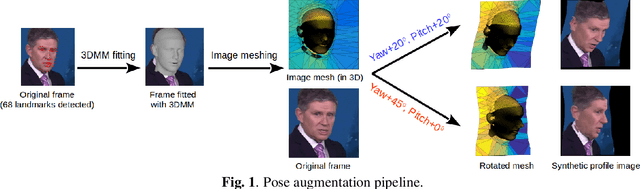

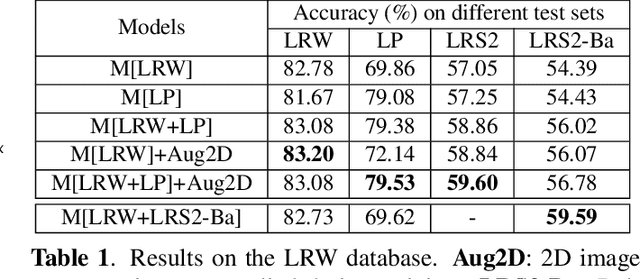

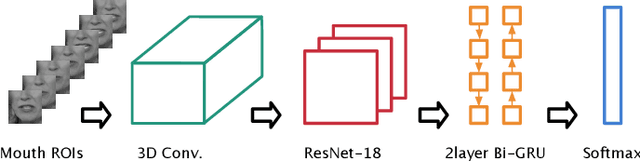

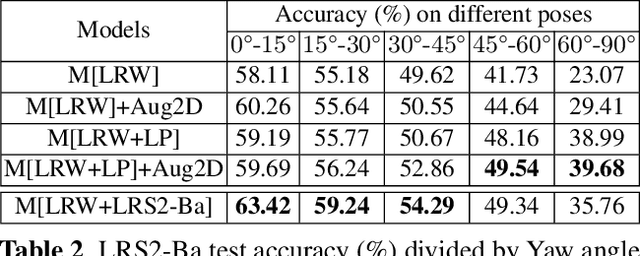

Towards Pose-invariant Lip-Reading

Nov 14, 2019

Abstract: Lip-reading models have been significantly improved recently thanks to powerful deep learning architectures. However, most works have focused on frontal or near-frontal views of the mouth. As a consequence, lip-reading performance seriously deteriorates in non-frontal mouth views. In this work, we present a framework for training pose-invariant lip-reading models on synthetic data instead of collecting and annotating non-frontal data, which is costly and tedious. The proposed model significantly outperforms previous approaches on non-frontal views while retaining the superior performance on frontal and near-frontal mouth views. Specifically, we propose to use a 3D Morphable Model (3DMM) to augment LRW, an existing large-scale but mostly frontal dataset, by generating synthetic facial data in arbitrary poses. The newly derived dataset is used to train a state-of-the-art neural network for lip-reading. We conducted a cross-database experiment for isolated word recognition on the LRS2 dataset, and reported an absolute improvement of 2.55%. The benefit of the proposed approach becomes clearer in extreme poses, where an absolute improvement of up to 20.64% over the baseline is achieved.

XNOR-Net++: Improved Binary Neural Networks

Sep 30, 2019

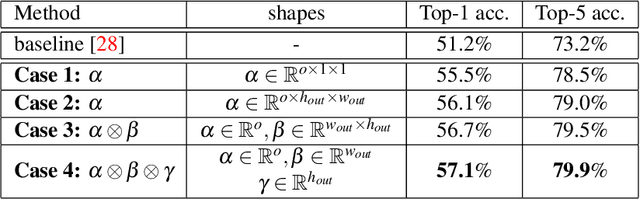

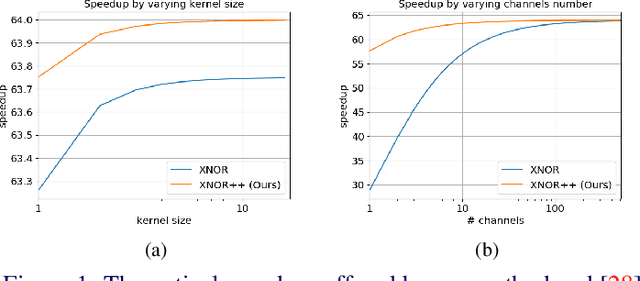

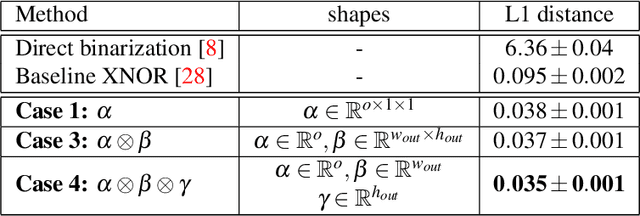

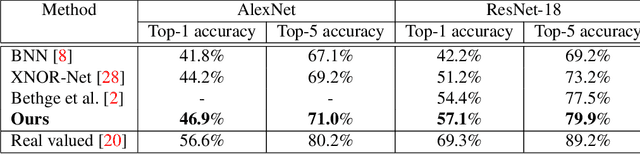

Abstract: This paper proposes an improved training algorithm for binary neural networks in which both weights and activations are binary numbers. A key but fairly overlooked feature of the current state-of-the-art method of XNOR-Net is the use of analytically calculated real-valued scaling factors for re-weighting the output of binary convolutions. We argue that analytic calculation of these factors is sub-optimal. Instead, in this work, we make the following contributions: (a) We propose to fuse the activation and weight scaling factors into a single one that is learned discriminatively via backpropagation. (b) More importantly, we explore several ways of constructing the shape of the scale factors while keeping the computational budget fixed. (c) We empirically measure the accuracy of our approximations and show that they are significantly more accurate than the analytically calculated one. (d) We show that our approach significantly outperforms XNOR-Net within the same computational budget when tested on the challenging task of ImageNet classification, offering up to 6% accuracy gain.
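To make the role of the scaling factors concrete, here is a small sketch. `analytic_scale` computes the XNOR-Net-style weight factor (the mean absolute value of the real weights). `rank1_scale` illustrates one way a single fused scale tensor could be shaped from a few small vectors, so that it can be learned with few extra parameters. The outer-product construction and all function names are assumptions made for illustration, not a statement of the paper's exact formulation.

```python
from typing import List


def sign(v: float) -> float:
    """Binarize to +1 / -1 (zero maps to +1, a convention of this sketch)."""
    return 1.0 if v >= 0 else -1.0


def analytic_scale(weights: List[float]) -> float:
    """Analytic factor: mean absolute value of the real-valued weights."""
    return sum(abs(w) for w in weights) / len(weights)


def rank1_scale(
    alpha: List[float], beta: List[float], gamma: List[float]
) -> List[List[List[float]]]:
    """Build a C x H x W scale tensor as the outer product of three vectors."""
    return [[[a * b * g for g in gamma] for b in beta] for a in alpha]


def scaled_binary_dot(
    weights: List[float], activations: List[float], scale: float
) -> float:
    """Dot product of binarized inputs, then real-valued rescaling."""
    acc = sum(sign(w) * sign(a) for w, a in zip(weights, activations))
    return scale * acc


w = [0.5, -1.5, 1.0]
a = [2.0, 3.0, -1.0]
y = scaled_binary_dot(w, a, analytic_scale(w))

# A rank-1 scale for 4 channels on an 8 x 8 output needs 4 + 8 + 8 = 20
# values, whereas a dense scale tensor of that shape would need 256.
scale = rank1_scale([1.0] * 4, [1.0] * 8, [1.0] * 8)
```

In a learned variant, the vectors passed to `rank1_scale` would be trainable parameters rather than constants.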

Efficient N-Dimensional Convolutions via Higher-Order Factorization

Jun 14, 2019

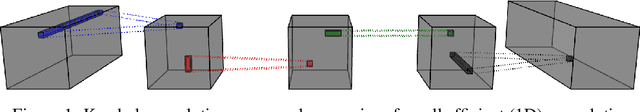

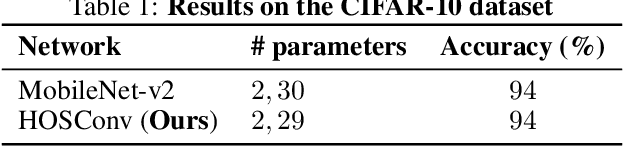

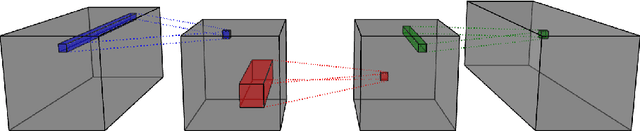

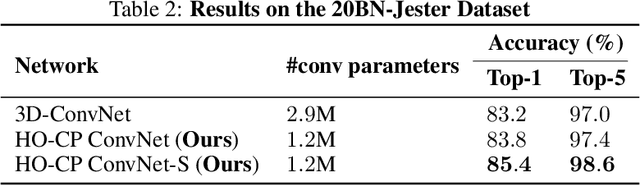

Abstract: With the unprecedented success of deep convolutional neural networks came the quest for training ever deeper networks. However, while deeper neural networks give better performance when trained appropriately, that depth also translates into memory- and computation-heavy models, typically with tens of millions of parameters. Several methods have been proposed to leverage redundancies in the network to alleviate this complexity. Either a pretrained network is compressed, e.g. using a low-rank tensor decomposition, or the architecture of the network is directly modified to be more efficient. In this paper, we study both approaches in a unified framework, under the lens of tensor decompositions. We show how tensor decomposition applied to the convolutional kernel relates to efficient architectures such as MobileNet. Moreover, we propose a tensor-based method for efficient higher-order convolutions, which can be used as a plug-in replacement for N-dimensional convolutions. We demonstrate their advantageous properties both theoretically and empirically for image classification, for both 2D and 3D convolutional networks.
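The saving behind MobileNet-style factorized kernels is easy to check with plain arithmetic. The sketch below counts the weights of a dense 2D convolution kernel and of a depthwise-separable replacement (a per-channel spatial filter followed by a 1x1 pointwise convolution). Bias terms are ignored, and the layer sizes are arbitrary example values rather than figures from the paper.

```python
def dense_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a full k x k convolution kernel (bias ignored)."""
    return k * k * c_in * c_out


def separable_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights in a depthwise k x k filter plus a 1 x 1 pointwise convolution."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


dense = dense_conv_params(3, 64, 128)          # 3 * 3 * 64 * 128
separable = separable_conv_params(3, 64, 128)  # 3 * 3 * 64 + 64 * 128
ratio = dense / separable
print(f"dense={dense}, separable={separable}, ratio={ratio:.2f}x")
```

For these example sizes the factorized layer uses roughly an eighth of the parameters of the dense kernel.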

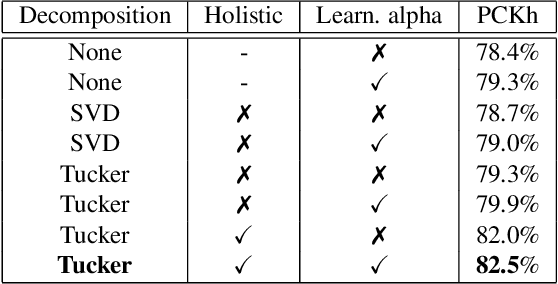

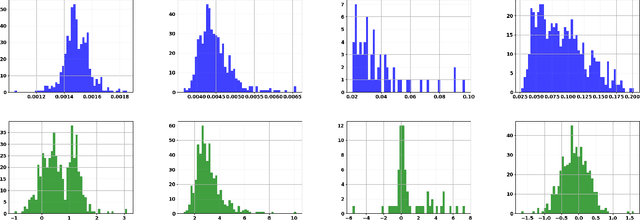

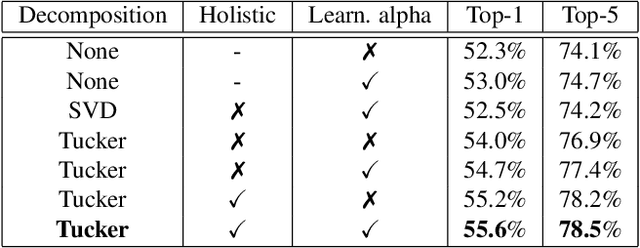

Matrix and tensor decompositions for training binary neural networks

Apr 16, 2019

Abstract: This paper is on improving the training of binary neural networks in which both activations and weights are binary. While prior methods for neural network binarization binarize each filter independently, we propose to instead parametrize the weight tensor of each layer using matrix or tensor decomposition. The binarization process is then performed using this latent parametrization, via a quantization function (e.g. sign function) applied to the reconstructed weights. A key feature of our method is that while the reconstruction is binarized, the computation in the latent factorized space is done in the real domain. This has several advantages: (i) The latent factorization enforces a coupling of the filters before binarization, which significantly improves the accuracy of the trained models. (ii) While at training time the binary weights of each convolutional layer are parametrized using real-valued matrix or tensor decomposition, during inference we simply use the reconstructed (binary) weights. As a result, our method does not sacrifice any advantage of binary networks in terms of model compression and inference speed-up. As a further contribution, instead of computing the binary weight scaling factors analytically, as in prior work, we propose to learn them discriminatively via back-propagation. Finally, we show that our approach significantly outperforms existing methods when tested on the challenging tasks of (a) human pose estimation (more than 4% improvement) and (b) ImageNet classification (up to 5% performance gains).
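The reconstruct-then-binarize idea can be sketched for the matrix case. The example below assumes a two-factor decomposition W = U Vᵀ with real-valued factors and a sign quantizer. The factor shapes, the values, and the function names are illustrative assumptions, and the training loop is left out entirely.

```python
from typing import List

Matrix = List[List[float]]


def reconstruct(u: Matrix, v: Matrix) -> Matrix:
    """Real-valued reconstruction W = U V^T, with U of size m x r and V of size n x r."""
    return [
        [sum(ui * vi for ui, vi in zip(u_row, v_row)) for v_row in v]
        for u_row in u
    ]


def binarize(w: Matrix) -> List[List[int]]:
    """Apply the sign quantizer elementwise (zero maps to +1 in this sketch)."""
    return [[1 if value >= 0 else -1 for value in row] for row in w]


# Latent real-valued factors (rank r = 2). In training, these would be the
# parameters updated by gradient descent.
u = [[1.0, -2.0], [0.5, 1.0]]
v = [[1.0, 1.0], [2.0, 0.5], [-1.0, 0.0]]

w_real = reconstruct(u, v)  # used only while training
w_bin = binarize(w_real)    # the only weights needed at inference time
```

Because every row of `w_real` is built from the same shared factor `v`, the filters are coupled before the sign function is applied, and only `w_bin` has to be stored for deployment.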

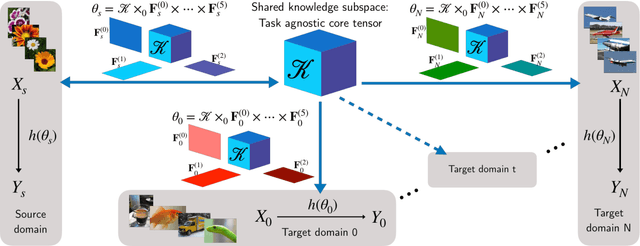

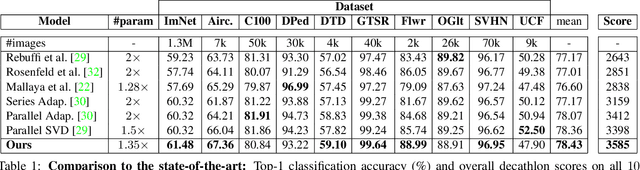

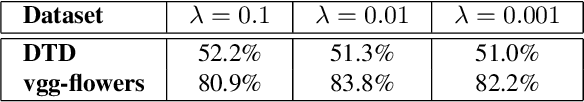

Incremental multi-domain learning with network latent tensor factorization

Apr 12, 2019

Abstract: The prominence of deep learning, large amounts of annotated data and increasingly powerful hardware have made it possible to reach remarkable performance for supervised classification tasks, in many cases saturating the training sets. However, adapting the learned classification to new domains remains a hard problem for at least three reasons: (1) the domains and the tasks might be drastically different; (2) there might be a very limited amount of annotated data on the new domain; and (3) full training of a new model for each new task is prohibitive in terms of memory, due to the sheer number of parameters of deep networks. Instead, new tasks should be learned incrementally, building on prior knowledge from already learned tasks, and without catastrophic forgetting, i.e. without hurting performance on prior tasks. To our knowledge, this paper presents the first method for multi-domain/task learning without catastrophic forgetting using a fully tensorized architecture. Our main contribution is a method for multi-domain learning which models groups of identically structured blocks within a CNN as a high-order tensor. We show that this joint modelling naturally leverages correlations across different layers and results in more compact representations for each new task/domain than previous methods, which have focused on adapting each layer separately. We apply the proposed method to the 10 datasets of the Visual Decathlon Challenge and show that our method offers on average about 7.5x reduction in number of parameters and superior performance in terms of both classification accuracy and Decathlon score. In particular, our method outperforms all prior work on the Visual Decathlon Challenge.

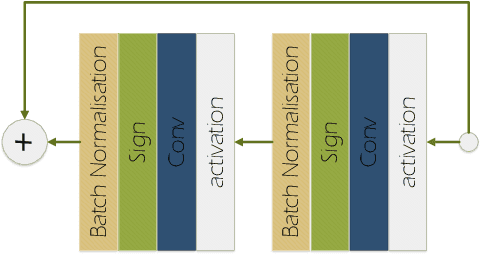

Improved training of binary networks for human pose estimation and image recognition

Apr 11, 2019

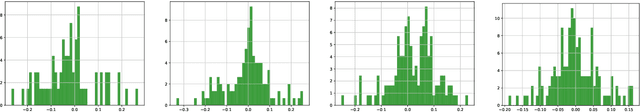

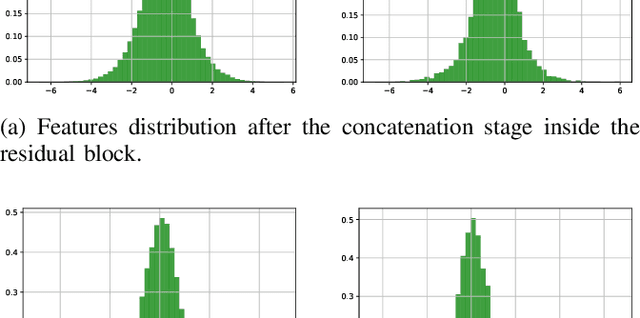

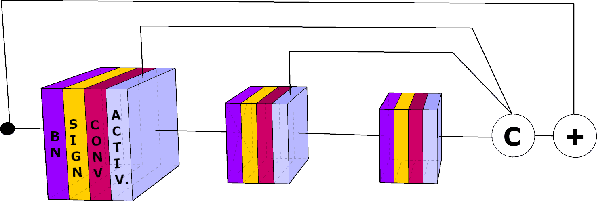

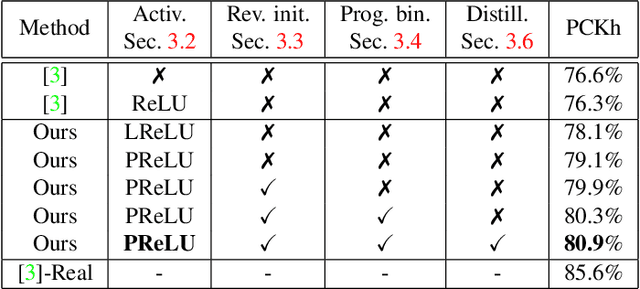

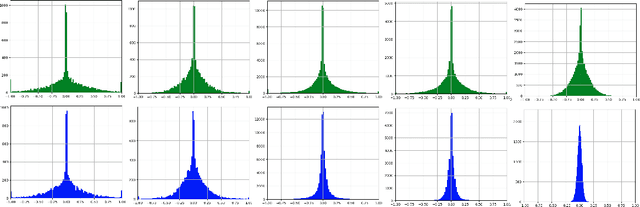

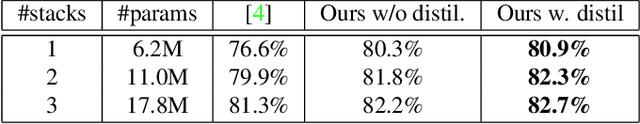

Abstract: Big neural networks trained on large datasets have advanced the state-of-the-art for a large variety of challenging problems, improving performance by a large margin. However, under low memory and limited computational power constraints, the accuracy on the same problems drops considerably. In this paper, we propose a series of techniques that significantly improve the accuracy of binarized neural networks (i.e. networks where both the features and the weights are binary). We evaluate the proposed improvements on two diverse tasks: fine-grained recognition (human pose estimation) and large-scale image recognition (ImageNet classification). Specifically, we introduce a series of novel methodological changes including: (a) more appropriate activation functions, (b) reverse-order initialization, (c) progressive quantization, and (d) network stacking, and show that these additions significantly improve existing state-of-the-art network binarization techniques. Additionally, for the first time, we also investigate the extent to which network binarization and knowledge distillation can be combined. When tested on the challenging MPII dataset, our method shows a performance improvement of more than 4% in absolute terms. Finally, we further validate our findings by applying the proposed techniques for large-scale object recognition on the ImageNet dataset, on which we report a reduction of error rate by 4%.

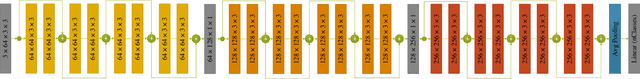

T-Net: Parametrizing Fully Convolutional Nets with a Single High-Order Tensor

Apr 04, 2019

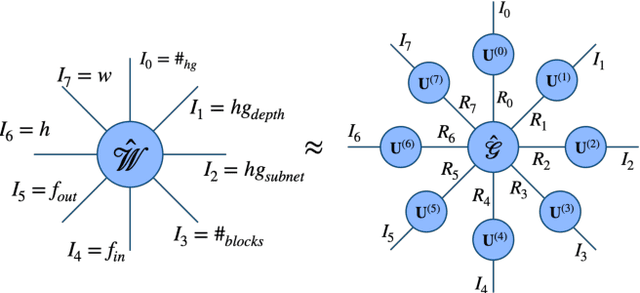

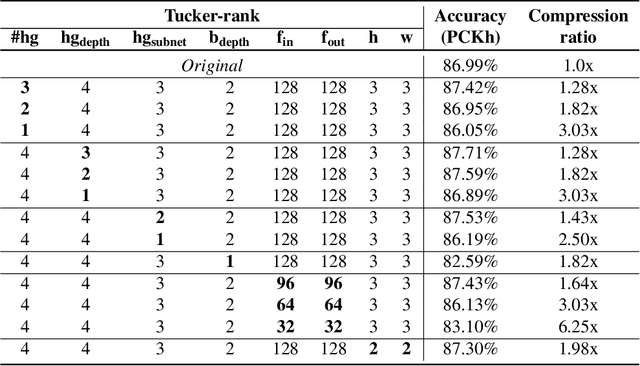

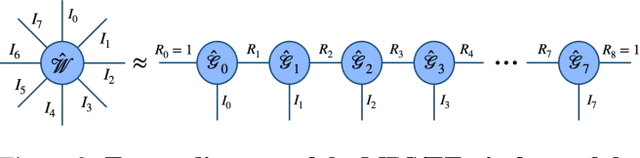

Abstract: Recent findings indicate that over-parametrization, while crucial for successfully training deep neural networks, also introduces large amounts of redundancy. Tensor methods have the potential to efficiently parametrize over-complete representations by leveraging this redundancy. In this paper, we propose to fully parametrize Convolutional Neural Networks (CNNs) with a single high-order, low-rank tensor. Previous works on network tensorization have focused on parametrizing individual layers (convolutional or fully connected) only, and perform the tensorization layer-by-layer separately. In contrast, we propose to jointly capture the full structure of a neural network by parametrizing it with a single high-order tensor, the modes of which represent each of the architectural design parameters of the network (e.g. number of convolutional blocks, depth, number of stacks, input features, etc.). This parametrization makes it possible to regularize the whole network and drastically reduce the number of parameters. Our model is end-to-end trainable and the low-rank structure imposed on the weight tensor acts as an implicit regularization. We study the case of networks with rich structure, namely Fully Convolutional Networks (FCNs), which we propose to parametrize with a single 8th-order tensor. We show that our approach can achieve superior performance with small compression rates, and attain high compression rates with negligible drop in accuracy for the challenging task of human pose estimation.

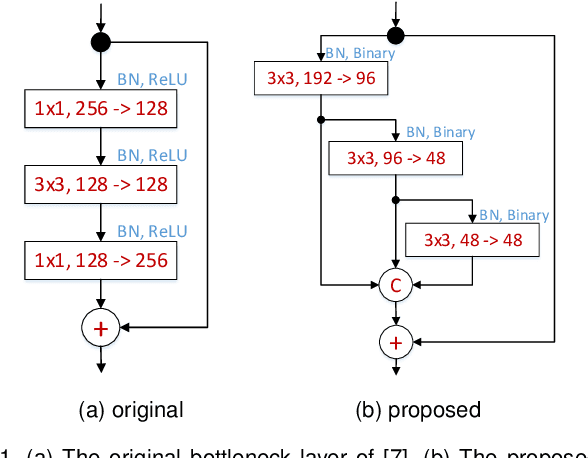

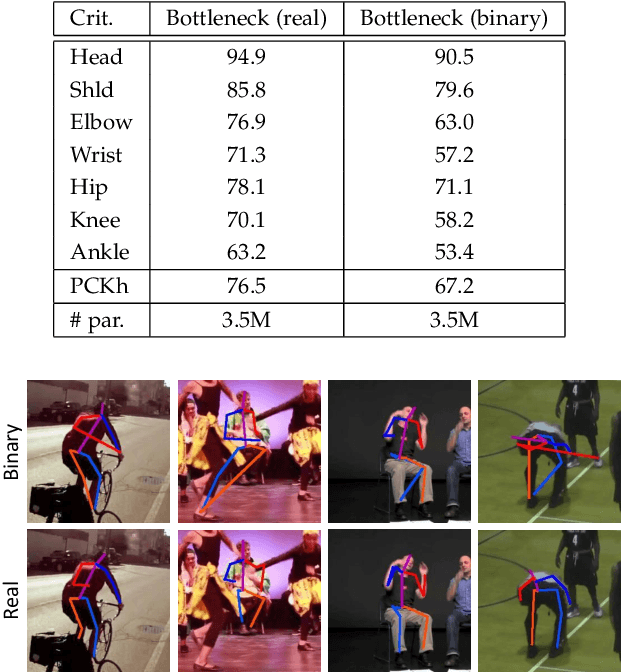

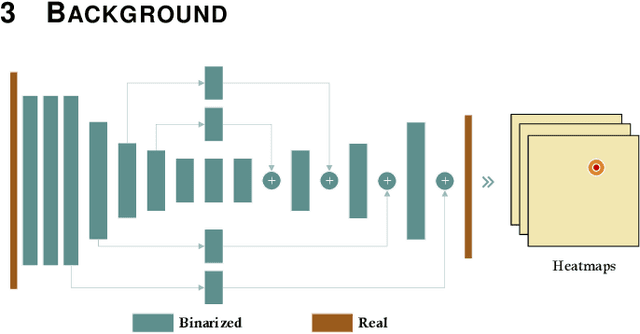

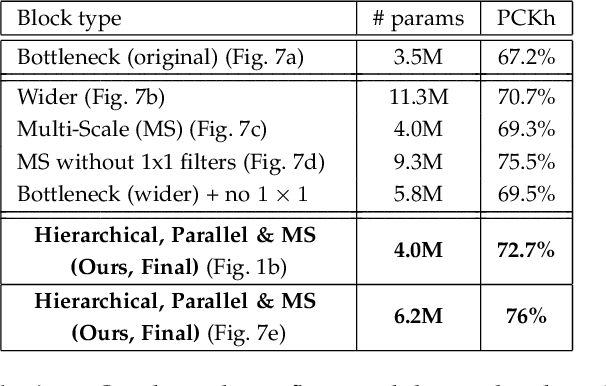

Hierarchical binary CNNs for landmark localization with limited resources

Aug 14, 2018

Abstract: Our goal is to design architectures that retain the groundbreaking performance of Convolutional Neural Networks (CNNs) for landmark localization and at the same time are lightweight, compact and suitable for applications with limited computational resources. To this end, we make the following contributions: (a) We are the first to study the effect of neural network binarization on localization tasks, namely human pose estimation and face alignment. We exhaustively evaluate various design choices, identify performance bottlenecks, and more importantly propose multiple orthogonal ways to boost performance. (b) Based on our analysis, we propose a novel hierarchical, parallel and multi-scale residual architecture that yields a large performance improvement over the standard bottleneck block while having the same number of parameters, thus bridging the gap between the original network and its binarized counterpart. (c) We perform a large number of ablation studies that shed light on the properties and the performance of the proposed block. (d) We present results for experiments on the most challenging datasets for human pose estimation and face alignment, reporting in many cases state-of-the-art performance. (e) We further provide additional results for the problem of facial part segmentation. Code can be downloaded from https://www.adrianbulat.com/binary-cnn-landmark
