Adriaan M. J. Schakel

Controlled Experiments for Word Embeddings

Dec 14, 2015

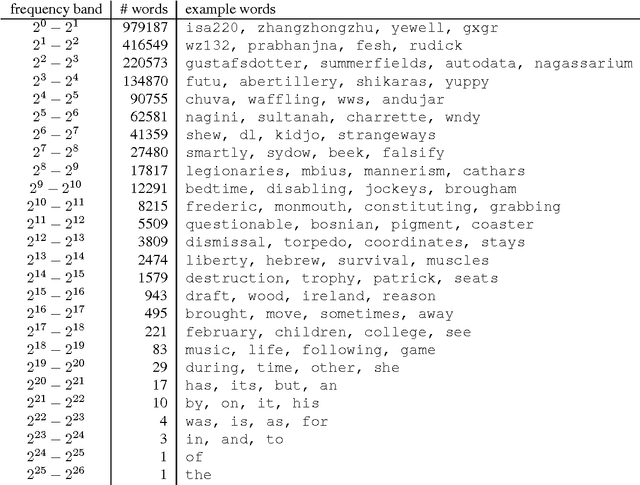

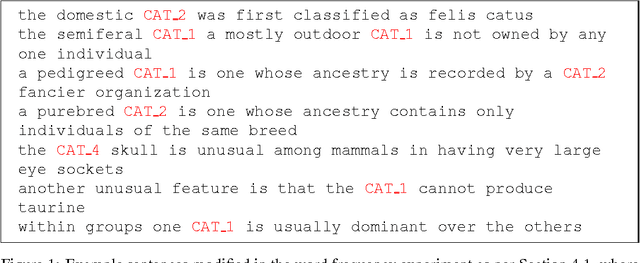

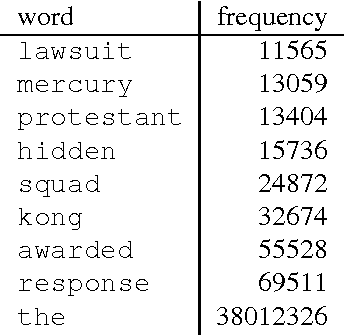

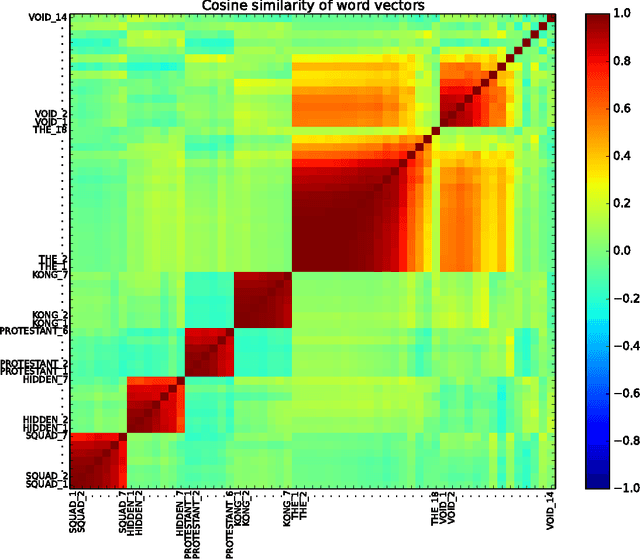

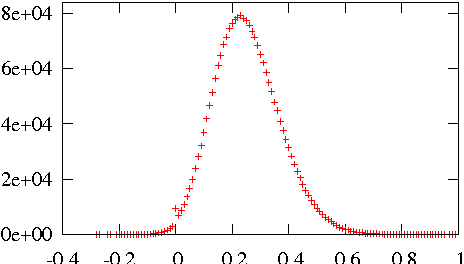

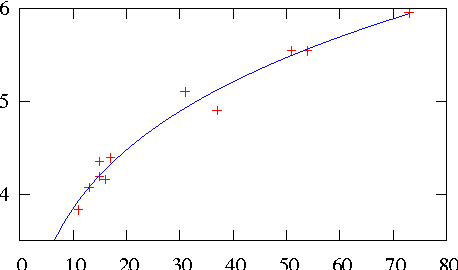

Abstract: An experimental approach to studying the properties of word embeddings is proposed. Controlled experiments, achieved through modifications of the training corpus, permit the demonstration of direct relations between word properties and word vector direction and length. The approach is demonstrated using the word2vec CBOW model with experiments that independently vary word frequency and word co-occurrence noise. The experiments reveal that word vector length depends more or less linearly on both word frequency and the level of noise in the co-occurrence distribution of the word. The coefficients of linearity depend upon the word. The special point in feature space, defined by the (artificial) word with pure noise in its co-occurrence distribution, is found to be small but non-zero.
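
The abstract does not spell out how the training corpus is modified, so the following is only a minimal sketch of one possible frequency-controlling modification, under stated assumptions. The helper `split_word_frequency`, the pseudoword name `cat_copy`, and the parameter `move_prob` are all hypothetical. The idea illustrated is that moving a random fraction of a word's occurrences onto a new token gives that token a lower frequency while leaving its co-occurrence contexts drawn from the same distribution.

```python
import random


def split_word_frequency(tokens, target, pseudo, move_prob, seed=0):
    """Return a copy of `tokens` in which each occurrence of `target` is
    replaced by `pseudo` with probability `move_prob`.

    Hypothetical helper: an assumed way to vary word frequency alone,
    not the procedure confirmed by the abstract.
    """
    rng = random.Random(seed)  # seeded so the modified corpus is reproducible
    return [
        pseudo if tok == target and rng.random() < move_prob else tok
        for tok in tokens
    ]


# Toy corpus; a real experiment would use a large tokenized text.
corpus = "a cat saw a cat and a cat".split()
modified = split_word_frequency(corpus, "cat", "cat_copy", move_prob=0.5)
```

Training the same embedding model on several such modified corpora, each with a different `move_prob`, would then allow vector length to be compared across frequencies for one fixed set of contexts.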

Measuring Word Significance using Distributed Representations of Words

Aug 10, 2015

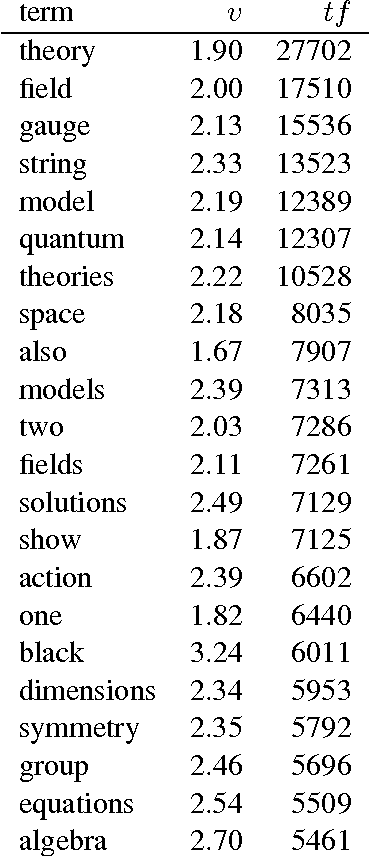

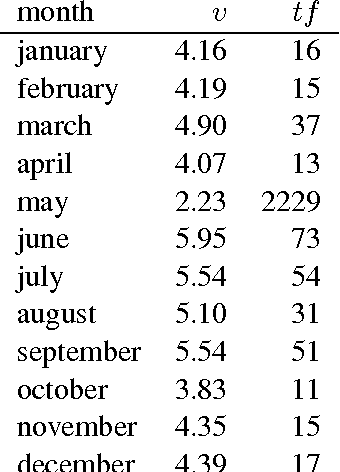

Abstract: Distributed representations of words as real-valued vectors in a relatively low-dimensional space aim at extracting syntactic and semantic features from large text corpora. A recently introduced neural network, named word2vec (Mikolov et al., 2013a; Mikolov et al., 2013b), was shown to encode semantic information in the direction of the word vectors. In this brief report, it is proposed to use the length of the vectors, together with the term frequency, as a measure of word significance in a corpus. Experimental evidence using a domain-specific corpus of abstracts is presented to support this proposal. A useful visualization technique for text corpora emerges, where words are mapped onto a two-dimensional plane and automatically ranked by significance.
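
As a rough illustration of the two quantities the abstract combines, the sketch below pairs each word's term frequency with the Euclidean length of its vector. The function name `word_significance`, the toy tokens, and the toy vectors are assumptions made for this example. Using the (term frequency, vector length) pair as the coordinates of the two-dimensional plane is also an assumption, since the abstract does not name the axes.

```python
import math
from collections import Counter


def word_significance(tokens, vectors):
    """Map each word to (term frequency, L2 length of its vector).

    `vectors` is assumed to be a dict from word to a list of floats,
    e.g. exported from a trained embedding model.
    """
    term_freq = Counter(tokens)
    table = {}
    for word, vec in vectors.items():
        length = math.sqrt(sum(x * x for x in vec))
        table[word] = (term_freq[word], length)
    return table


# Toy data standing in for a corpus and its trained word vectors.
tokens = "the cat sat on the mat the end".split()
vectors = {"the": [0.3, 0.4], "cat": [3.0, 4.0]}
table = word_significance(tokens, vectors)

# Within a band of similar term frequency, rank words by vector length.
ranked = sorted(table.items(), key=lambda item: item[1][1], reverse=True)
```

Each `(frequency, length)` pair can be plotted directly as a point, which gives a scatter plot of the kind the abstract describes.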
