Abdallah Shami

Sound Event Classification in an Industrial Environment: Pipe Leakage Detection Use Case

May 05, 2022

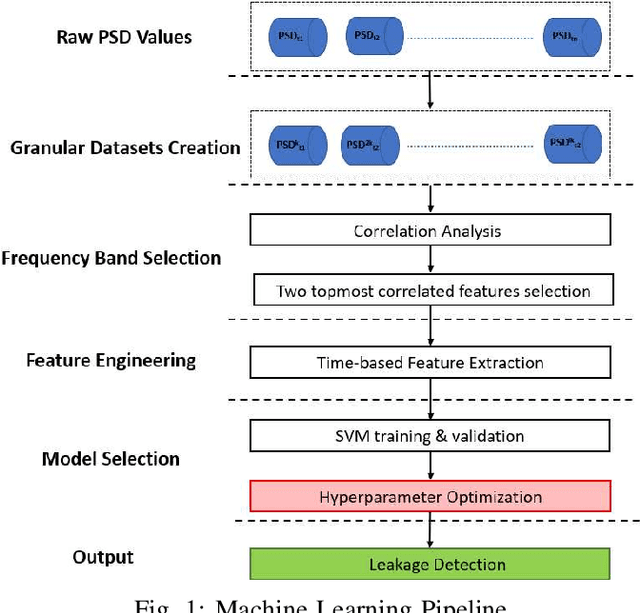

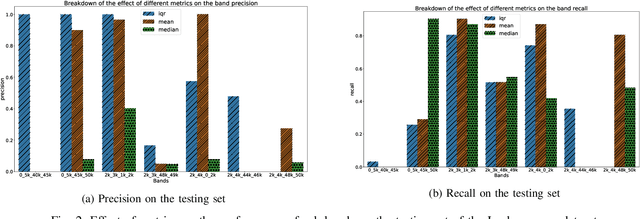

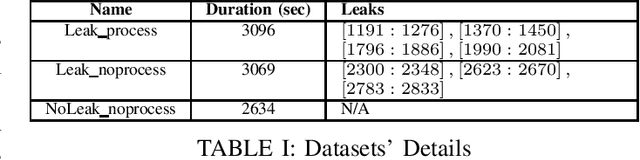

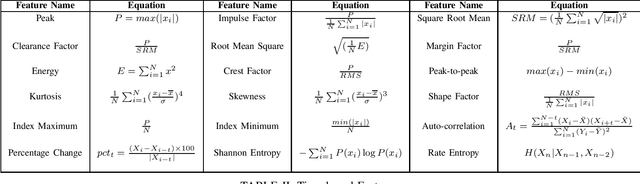

Abstract: In this work, a multi-stage Machine Learning (ML) pipeline is proposed for pipe leakage detection in an industrial environment. Unlike other industrial and urban environments, the environment under study includes many interfering background noises, which complicate the identification of leaks. Furthermore, the harsh environmental conditions limit the amount of data that can be collected and require the use of low-complexity algorithms. To address these constraints, the developed ML pipeline applies multiple steps, each addressing one of the environment's challenges. The proposed ML pipeline first reduces the data dimensionality using feature selection techniques and then incorporates time correlations by extracting time-based features. The resulting features are fed to a low-complexity Support Vector Machine (SVM) that generalizes well from a small amount of data. An extensive experimental procedure was carried out on two datasets, one with background industrial noise and one without, to evaluate the validity of the proposed pipeline. The SVM hyper-parameters and parameters specific to the pipeline steps were tuned as part of the experimental procedure. The best models obtained from the dataset with industrial noise and leaks were applied to datasets without noise, with and without leaks, to test their generalizability. The results show that the model achieves 99% accuracy, with F1-scores of 0.93 and 0.9 on the respective datasets.
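
The abstract does not say which time-based features the pipeline extracts. As a minimal sketch, assuming each audio frame has already been reduced to a small feature vector by feature selection, the function below appends two hypothetical time features per frame: a frame-to-frame difference and a short rolling mean. The rows it returns are the kind of input an SVM would then consume; the name `add_time_features` and the `window` parameter are illustrative, not taken from the paper.

```python
from typing import List


def add_time_features(frames: List[List[float]], window: int = 3) -> List[List[float]]:
    """Append simple (assumed) time-based features to each per-frame vector.

    For frame t the output is: the original features, then the first-order
    difference x_t - x_{t-1}, then the mean over the last `window` frames
    (fewer frames are available at the start of the recording).
    """
    out = []
    for t, x in enumerate(frames):
        prev = frames[t - 1] if t > 0 else x  # first frame: delta is all zeros
        delta = [a - b for a, b in zip(x, prev)]
        history = frames[max(0, t - window + 1): t + 1]
        mean = [sum(col) / len(history) for col in zip(*history)]
        out.append(list(x) + delta + mean)
    return out


# Hypothetical input: three frames of two already-selected acoustic features.
frames = [[1.0, 0.0], [3.0, 1.0], [5.0, 2.0]]
features = add_time_features(frames, window=2)
print(features[2])  # [5.0, 2.0, 2.0, 1.0, 4.0, 1.5]
```

Keeping the extra features to simple differences and averages preserves the low-complexity constraint the abstract emphasizes.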

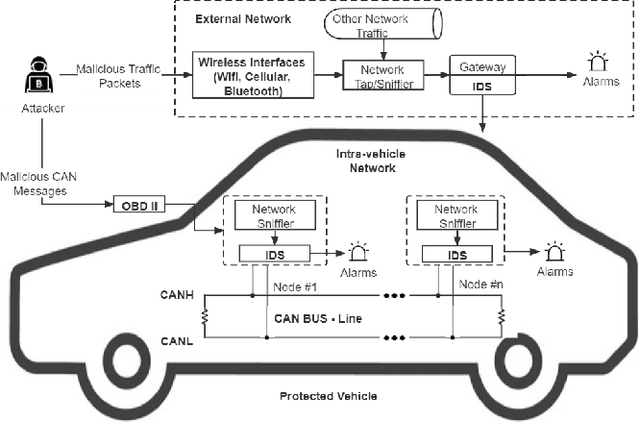

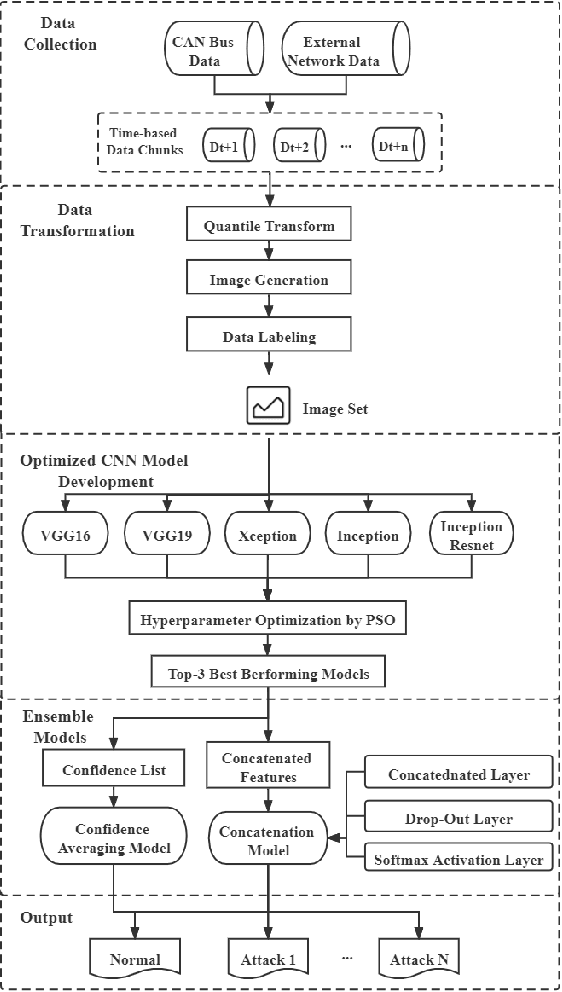

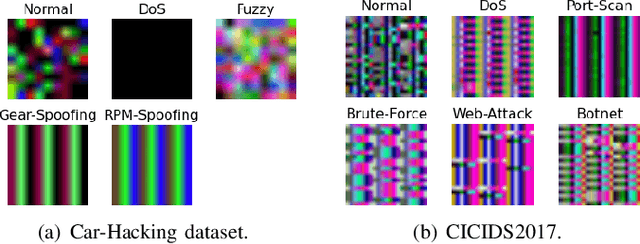

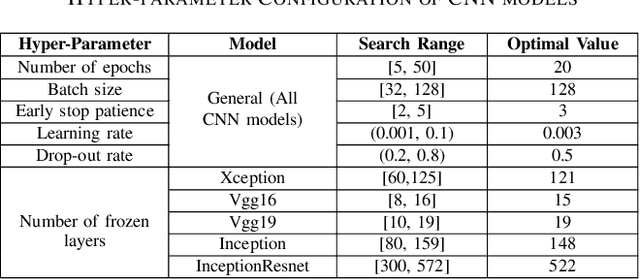

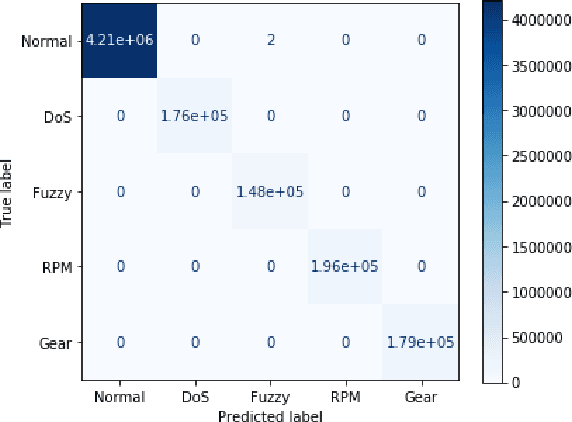

A Transfer Learning and Optimized CNN Based Intrusion Detection System for Internet of Vehicles

Jan 27, 2022

Abstract: Modern vehicles, including autonomous vehicles and connected vehicles, are increasingly connected to the external world, which enables various functionalities and services. However, this increased connectivity also enlarges the attack surface of the Internet of Vehicles (IoV), making it more vulnerable to cyber-threats. Due to the lack of authentication and encryption procedures in vehicular networks, Intrusion Detection Systems (IDSs) are essential for protecting modern vehicle systems from network attacks. In this paper, a transfer learning and ensemble learning-based IDS is proposed for IoV systems using convolutional neural networks (CNNs) and hyper-parameter optimization techniques. In the experiments, the proposed IDS achieved detection rates and F1-scores above 99.25% on two well-known public benchmark IoV security datasets: the Car-Hacking dataset and the CICIDS2017 dataset. This shows the effectiveness of the proposed IDS for cyber-attack detection in both intra-vehicle and external vehicular networks.

An Attention-based ConvLSTM Autoencoder with Dynamic Thresholding for Unsupervised Anomaly Detection in Multivariate Time Series

Jan 23, 2022

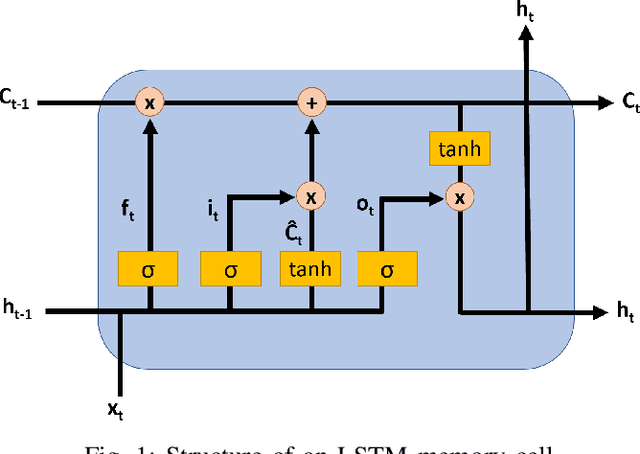

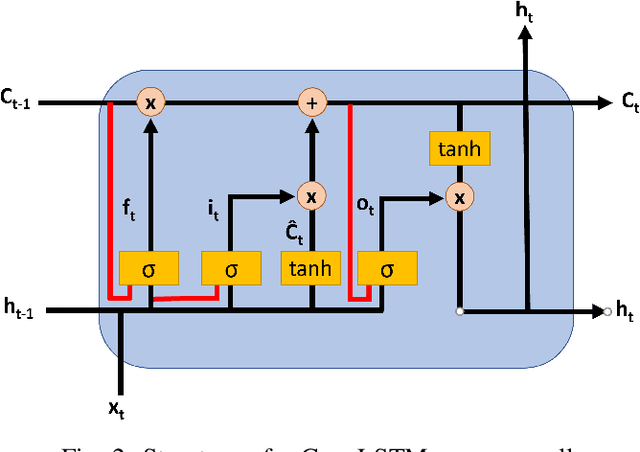

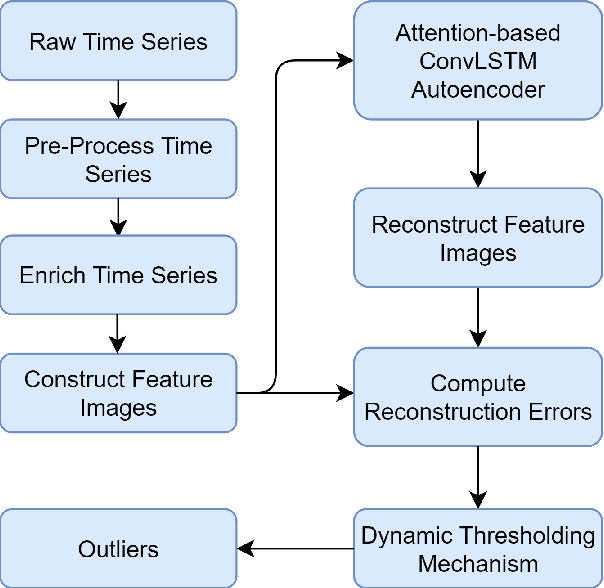

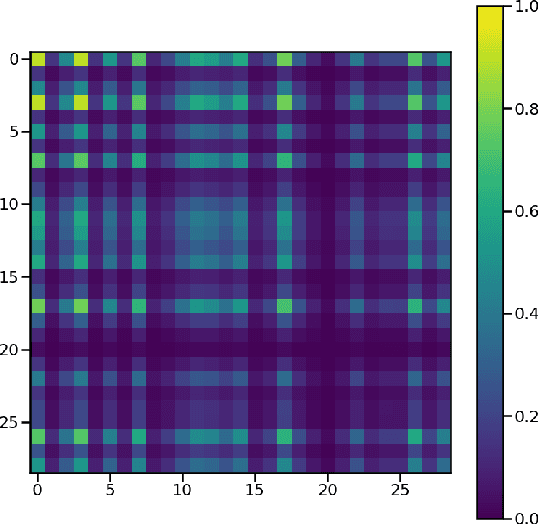

Abstract: As a substantial amount of multivariate time series data is being produced by the complex systems in Smart Manufacturing, improved anomaly detection frameworks are needed to reduce operational risks and the monitoring burden placed on system operators. However, building such frameworks is challenging, as a sufficiently large amount of defective training data is often unavailable, and frameworks must capture both the temporal and contextual dependencies across different time steps while being robust to noise. In this paper, we propose an unsupervised Attention-based Convolutional Long Short-Term Memory (ConvLSTM) Autoencoder with Dynamic Thresholding (ACLAE-DT) framework for anomaly detection and diagnosis in multivariate time series. The framework starts by pre-processing and enriching the data, and then constructs feature images that characterize the system statuses across different time steps by capturing the inter-correlations between pairs of time series. Afterwards, the constructed feature images are fed into an attention-based ConvLSTM autoencoder, which encodes them to capture their temporal behavior and decodes the compressed knowledge representation to reconstruct the input feature images. Finally, the reconstruction errors are computed and subjected to a statistics-based dynamic thresholding mechanism to detect and diagnose the anomalies. Evaluations on real-life manufacturing data demonstrate the performance advantages of the proposed approach over state-of-the-art methods under different experimental settings.
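
The abstract describes a statistics-based dynamic threshold on reconstruction errors without giving its formula. A common choice, assumed here for illustration, is a rolling threshold of mean plus k standard deviations over the preceding window of errors; the `window` and `k` values and the error values themselves are hypothetical, since in the framework the errors would come from the ConvLSTM autoencoder.

```python
import statistics
from typing import List


def dynamic_threshold_flags(errors: List[float], window: int = 5, k: float = 3.0) -> List[bool]:
    """Flag errors above mean + k * stdev of the preceding `window` errors (assumed rule)."""
    flags = []
    for t, e in enumerate(errors):
        history = errors[max(0, t - window): t]  # errors strictly before step t
        if len(history) < 2:
            flags.append(False)  # too little history to estimate a threshold
            continue
        threshold = statistics.mean(history) + k * statistics.stdev(history)
        flags.append(e > threshold)
    return flags


# Hypothetical per-time-step reconstruction errors with one spike.
errors = [0.10, 0.12, 0.11, 0.09, 0.10, 0.95, 0.11]
print(dynamic_threshold_flags(errors))
# [False, False, False, False, False, True, False]
```

Because the threshold is recomputed at every step, it follows slow changes in the error level instead of relying on a single fixed cut-off.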

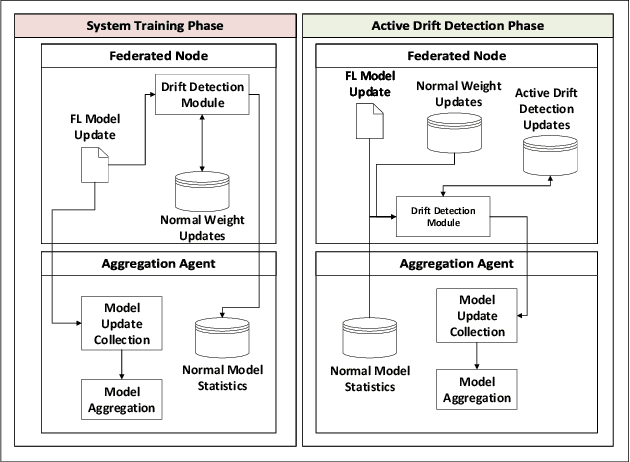

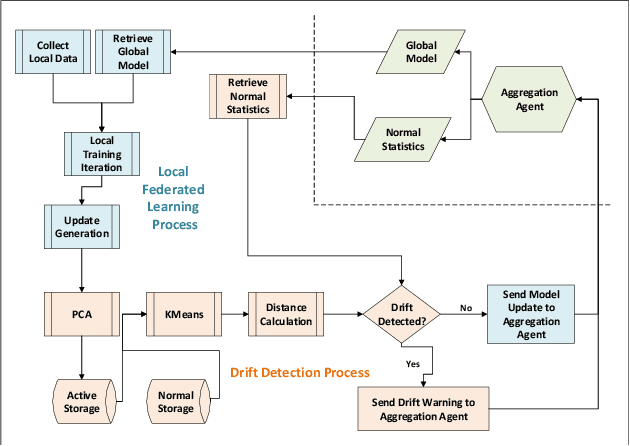

Concept Drift Detection in Federated Networked Systems

Sep 13, 2021

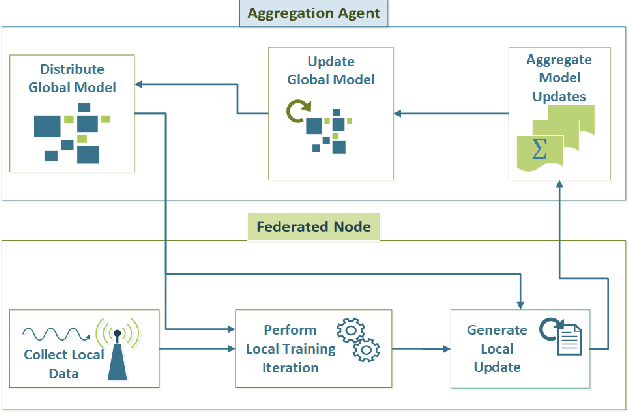

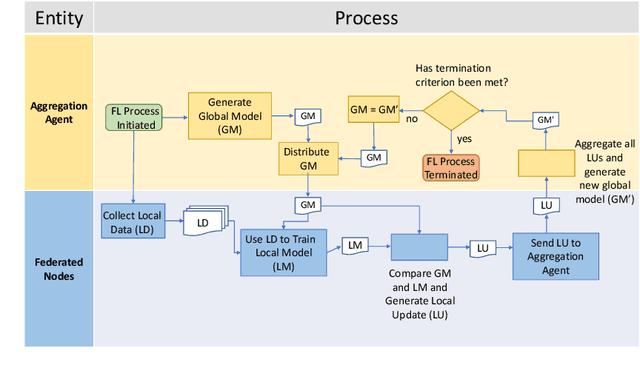

Abstract: As next-generation networks materialize, increasing levels of intelligence are required. Federated Learning has been identified as a key enabling technology of intelligent and distributed networks; however, like any machine learning application, it is prone to concept drift. Concept drift directly affects the model's performance and can have severe consequences, given the critical and emergency services provided by modern networks. To mitigate the adverse effects of drift, this paper proposes a concept drift detection system that leverages the federated learning updates provided at each iteration of the federated training process. Using dimensionality reduction and clustering techniques, a framework that isolates the system's drifted nodes is presented and evaluated through experiments, with an Intelligent Transportation System as a use case. The presented work demonstrates that the proposed framework is able to detect drifted nodes in a variety of non-IID scenarios, at different stages of drift and different levels of system exposure.
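
The abstract names dimensionality reduction and clustering of the federated updates but not the specific algorithms. As an assumption, the sketch below reduces each node's flattened update to one dimension with PCA (first principal component only, via power iteration), clusters the result with k-means for k = 2, and treats the smaller cluster as the drifted nodes. These choices, the function names, and the update values are all illustrative.

```python
import random
from typing import List, Sequence


def pca_1d(X: Sequence[Sequence[float]], iters: int = 200, seed: int = 0) -> List[float]:
    """Project rows of X onto their first principal component (power iteration)."""
    n, d = len(X), len(X[0])
    means = [sum(col) / n for col in zip(*X)]
    C = [[x - m for x, m in zip(row, means)] for row in X]  # centred data
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in range(d)]
    for _ in range(iters):
        cv = [sum(a * b for a, b in zip(row, v)) for row in C]          # C v
        w = [sum(C[i][j] * cv[i] for i in range(n)) for j in range(d)]  # C^T C v
        norm = sum(x * x for x in w) ** 0.5
        if norm == 0.0:
            break  # no variance: every update is identical
        v = [x / norm for x in w]
    return [sum(a * b for a, b in zip(row, v)) for row in C]


def two_means_1d(z: Sequence[float], iters: int = 50) -> List[int]:
    """Cluster 1-D values into two groups (k-means with k = 2)."""
    c0, c1 = min(z), max(z)
    labels = [0] * len(z)
    for _ in range(iters):
        labels = [0 if abs(x - c0) <= abs(x - c1) else 1 for x in z]
        g0 = [x for x, lab in zip(z, labels) if lab == 0]
        g1 = [x for x, lab in zip(z, labels) if lab == 1]
        if not g0 or not g1:
            break
        c0, c1 = sum(g0) / len(g0), sum(g1) / len(g1)
    return labels


def drifted_nodes(updates: Sequence[Sequence[float]]) -> List[int]:
    """Assumed rule: nodes in the smaller cluster of reduced updates have drifted."""
    labels = two_means_1d(pca_1d(updates))
    minority = 0 if labels.count(0) < labels.count(1) else 1
    return [i for i, lab in enumerate(labels) if lab == minority]


# Hypothetical flattened model updates from five nodes; node 4 has drifted.
updates = [
    [0.10, 0.20, 0.10],
    [0.12, 0.19, 0.11],
    [0.09, 0.21, 0.10],
    [0.11, 0.20, 0.09],
    [0.90, -0.50, 0.70],
]
print(drifted_nodes(updates))  # [4]
```

Working on the updates rather than raw data fits the federated setting, since the server already receives them at every training round.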

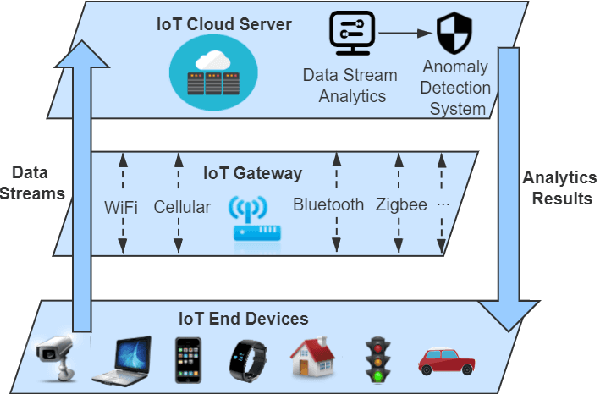

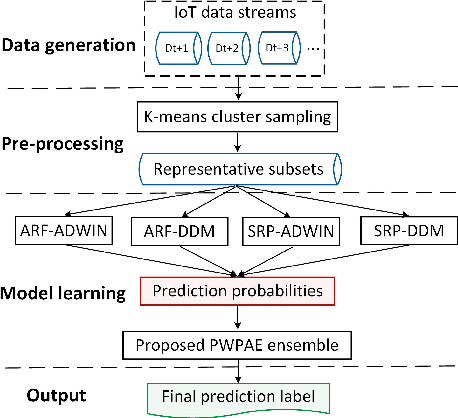

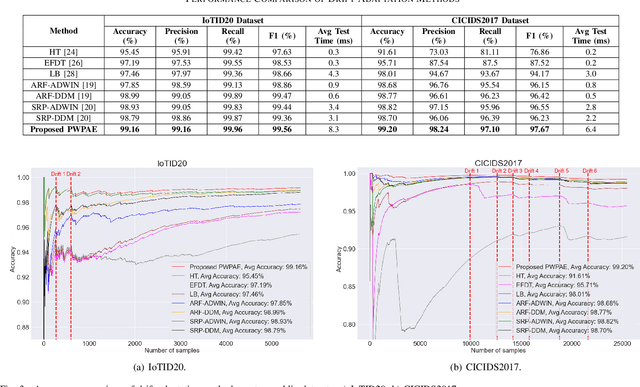

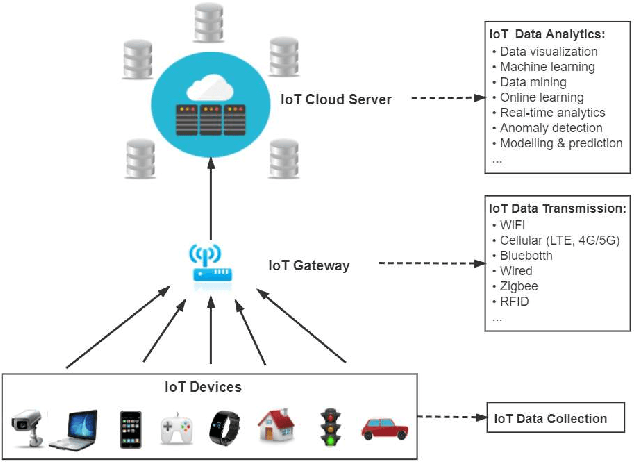

PWPAE: An Ensemble Framework for Concept Drift Adaptation in IoT Data Streams

Sep 10, 2021

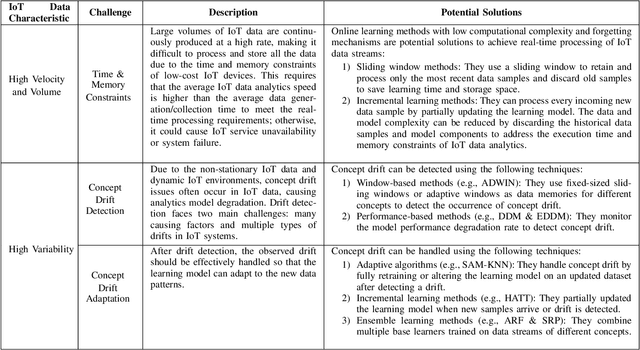

Abstract: As the number of Internet of Things (IoT) devices and systems has surged, IoT data analytics techniques have been developed to detect malicious cyber-attacks and secure IoT systems. However, concept drift often occurs in IoT data analytics, because IoT data typically arrives as dynamic streams that change over time, causing model degradation and attack detection failures. The root cause is that traditional data analytics models are static and cannot adapt to changes in the data distribution. In this paper, we propose a Performance Weighted Probability Averaging Ensemble (PWPAE) framework for drift-adaptive IoT anomaly detection through IoT data stream analytics. Experiments on two public datasets show the effectiveness of the proposed PWPAE method compared with state-of-the-art methods.
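
The core idea of performance-weighted probability averaging can be sketched in a few lines. The abstract does not give the weighting formula or the base learners, so this sketch assumes each learner's weight is inversely proportional to its recent error rate; the learner outputs and error rates are hypothetical.

```python
from typing import List, Sequence


def pwpae_combine(
    probas: Sequence[Sequence[float]],
    error_rates: Sequence[float],
    eps: float = 1e-6,
) -> List[float]:
    """Combine base-learner class probabilities with performance-based weights.

    Assumed weighting: w_i = 1 / (error_i + eps), normalised to sum to one,
    so learners with a lower recent error rate contribute more.
    """
    weights = [1.0 / (e + eps) for e in error_rates]
    total = sum(weights)
    n_classes = len(probas[0])
    return [
        sum(w * p[c] for w, p in zip(weights, probas)) / total
        for c in range(n_classes)
    ]


# Two hypothetical streaming learners scoring one sample as [normal, attack].
combined = pwpae_combine([[0.9, 0.1], [0.4, 0.6]], error_rates=[0.1, 0.3])
predicted_class = max(range(len(combined)), key=combined.__getitem__)
print(round(combined[0], 3), predicted_class)  # 0.775 0
```

In a streaming setting the error rates would be updated after each labelled sample, so the weights shift toward whichever learner adapts best after a drift.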

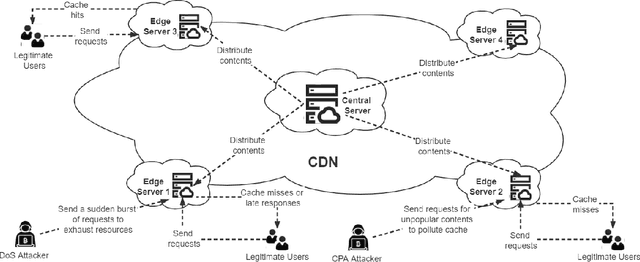

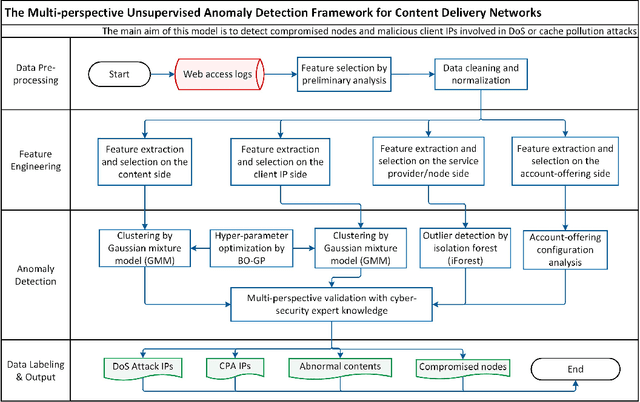

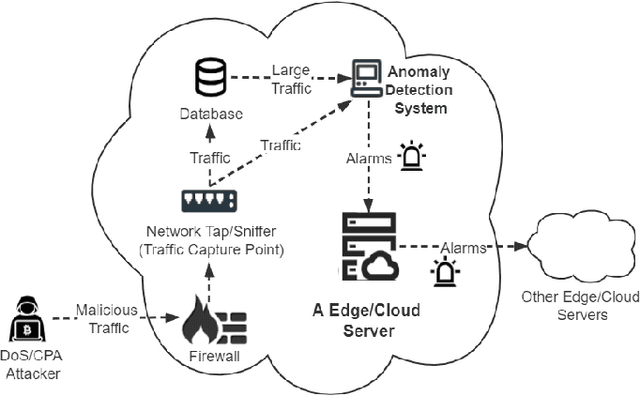

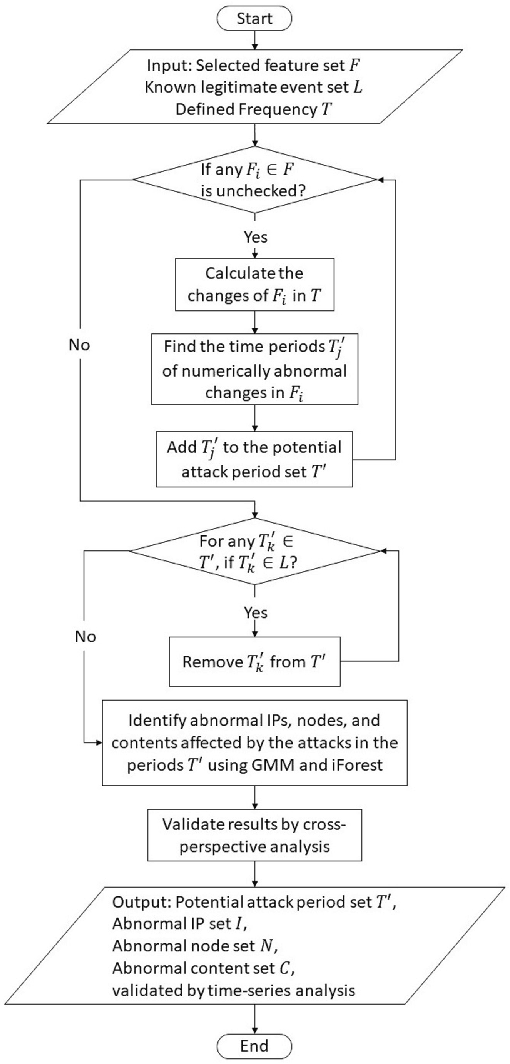

Multi-Perspective Content Delivery Networks Security Framework Using Optimized Unsupervised Anomaly Detection

Jul 24, 2021

Abstract: Content delivery networks (CDNs) provide efficient content distribution over the Internet. CDNs improve the connectivity and efficiency of global communications, but their caching mechanisms may be breached by cyber-attackers. Among the security mechanisms, effective anomaly detection forms an important part of CDN security enhancement. In this work, we propose a multi-perspective unsupervised learning framework for anomaly detection in CDNs. In the proposed framework, a multi-perspective feature engineering approach, an optimized unsupervised anomaly detection model that utilizes an isolation forest and a Gaussian mixture model, and a multi-perspective validation method are developed to detect abnormal behaviors in CDNs, mainly from the client Internet Protocol (IP) and node perspectives, and thereby to identify denial of service (DoS) and cache pollution attack (CPA) patterns. Experimental results are presented based on the analysis of eight days of real-world CDN log data provided by a major CDN operator. Through experiments, the abnormal contents, compromised nodes, and malicious IPs, as well as their corresponding attack types, are effectively identified by the proposed framework and validated by multiple cybersecurity experts. This shows the effectiveness of the proposed method when applied to real-world CDN data.

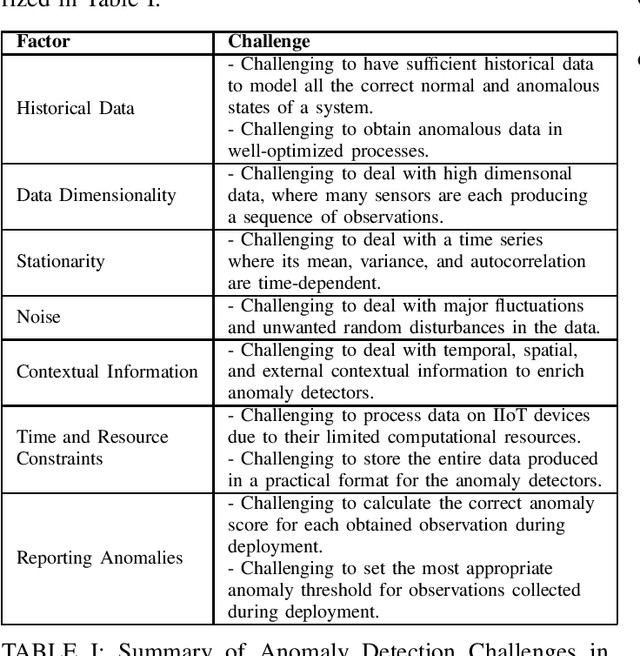

Anomaly Detection in Smart Manufacturing with an Application Focus on Robotic Finishing Systems: A Review

Jul 13, 2021

Abstract: As systems in smart manufacturing become increasingly complex and produce an abundance of data, production failures become increasingly likely. This creates a need to minimize or eliminate production failures, and anomaly detection is one means of doing so. However, many aspects must be considered when deploying anomaly detection systems. In this paper, the components, benefits, challenges, methods, and open problems of anomaly detection in smart manufacturing and robotic finishing systems are reviewed.

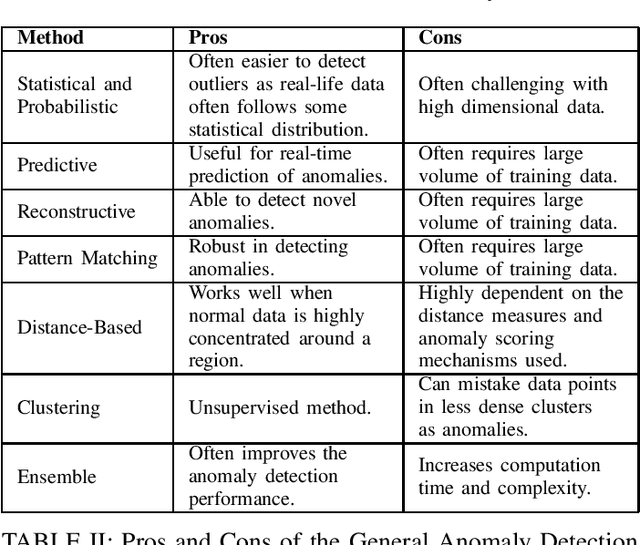

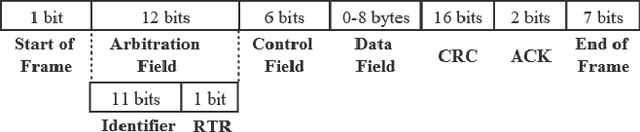

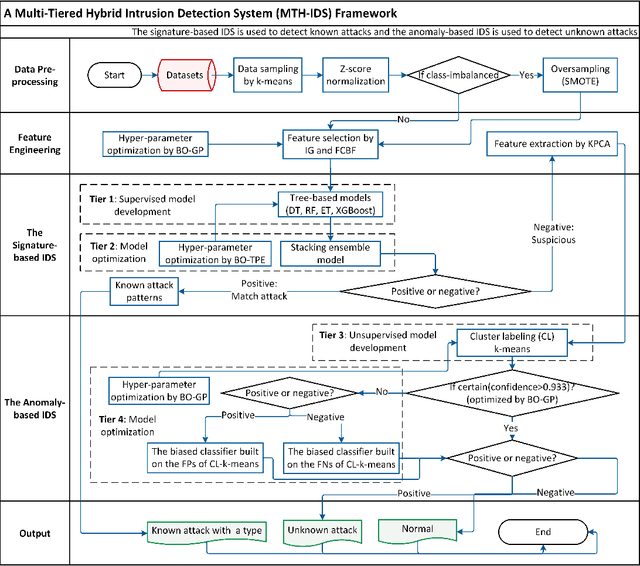

MTH-IDS: A Multi-Tiered Hybrid Intrusion Detection System for Internet of Vehicles

May 26, 2021

Abstract: Modern vehicles, including connected vehicles and autonomous vehicles, now involve many electronic control units connected through intra-vehicle networks to implement various functionalities and perform actions. Modern vehicles are also connected to external networks through vehicle-to-everything technologies, enabling their communications with other vehicles, infrastructures, and smart devices. However, the increasing functionality and connectivity of modern vehicles also increase their vulnerability to cyber-attacks targeting both intra-vehicle and external networks, due to their large attack surfaces. To secure vehicular networks, many researchers have focused on developing intrusion detection systems (IDSs) that capitalize on machine learning methods to detect malicious cyber-attacks. In this paper, the vulnerabilities of intra-vehicle and external networks are discussed, and a multi-tiered hybrid IDS that incorporates a signature-based IDS and an anomaly-based IDS is proposed to detect both known and unknown attacks on vehicular networks. Experimental results illustrate that the proposed system can detect various types of known attacks with 99.99% accuracy on the CAN-intrusion-dataset, representing intra-vehicle network data, and 99.88% accuracy on the CICIDS2017 dataset, representing external vehicular network data. For zero-day attack detection, the proposed system achieves high F1-scores of 0.963 and 0.800 on these two datasets, respectively. The average processing time of each data packet on a vehicle-level machine is less than 0.6 ms, which shows the feasibility of implementing the proposed system in real-time vehicle systems. This emphasizes the effectiveness and efficiency of the proposed IDS.
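
The abstract gives the two-tier structure (signature-based, then anomaly-based) but not the models inside each tier. The sketch keeps only that routing logic and, as a stand-in, uses a hypothetical nearest-centroid classifier for the signature tier and a distance-to-normal threshold for the anomaly tier. The class names, `anomaly_radius`, and toy data are illustrative assumptions.

```python
import math
from typing import Dict, List, Sequence

Centroids = Dict[str, List[float]]


def fit_centroids(X: Sequence[Sequence[float]], y: Sequence[str]) -> Centroids:
    """Hypothetical tier-1 'signatures': one mean vector per known class."""
    groups: Dict[str, List[Sequence[float]]] = {}
    for x, label in zip(X, y):
        groups.setdefault(label, []).append(x)
    return {lab: [sum(col) / len(rows) for col in zip(*rows)] for lab, rows in groups.items()}


def classify(x: Sequence[float], centroids: Centroids, anomaly_radius: float) -> str:
    # Tier 1 (signature-based stand-in): assign the nearest known class.
    label = min(centroids, key=lambda lab: math.dist(x, centroids[lab]))
    if label != "normal":
        return label  # known attack type
    # Tier 2 (anomaly-based stand-in): re-check only traffic tier 1 calls normal.
    if math.dist(x, centroids["normal"]) > anomaly_radius:
        return "unknown_attack"  # candidate zero-day attack
    return "normal"


X = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [10.0, 10.0], [10.0, 11.0]]
y = ["normal", "normal", "normal", "dos", "dos"]
cents = fit_centroids(X, y)
print([classify(p, cents, anomaly_radius=3.0) for p in ([0.2, 0.3], [10.0, 10.0], [-6.0, -6.0])])
# ['normal', 'dos', 'unknown_attack']
```

Running the anomaly tier only on traffic that the first tier considers normal keeps known attacks on the cheaper path, which is one way a tiered design can help meet a per-packet latency budget.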

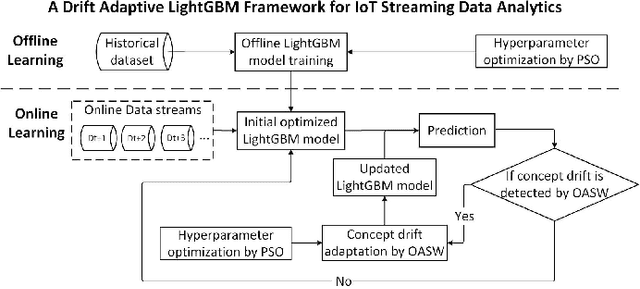

A Lightweight Concept Drift Detection and Adaptation Framework for IoT Data Streams

Apr 21, 2021

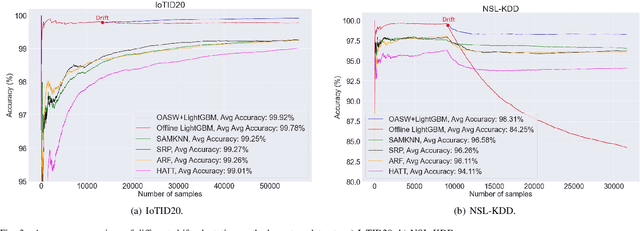

Abstract: In recent years, with the increasing popularity of "Smart Technology", the number of Internet of Things (IoT) devices and systems has surged significantly. Various IoT services and functionalities are based on the analytics of IoT streaming data. However, IoT data analytics faces concept drift challenges due to the dynamic nature of IoT systems and the ever-changing patterns of IoT data streams. In this article, we propose an adaptive IoT streaming data analytics framework for anomaly detection use cases based on optimized LightGBM and concept drift adaptation. A novel drift adaptation method named Optimized Adaptive and Sliding Windowing (OASW) is proposed to adapt to the pattern changes of online IoT data streams. Experiments on two public datasets show the high accuracy and efficiency of the proposed adaptive LightGBM model compared with other state-of-the-art approaches. The proposed adaptive LightGBM model can perform continuous learning and drift adaptation on IoT data streams without human intervention.
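
The abstract does not specify how OASW works, so the following is a generic, simplified sliding-window drift detector and not OASW itself. It assumes drift is signalled when accuracy over the most recent `window` predictions falls more than `tolerance` below a reference accuracy recorded when the window first filled. Retraining the model (for example, a LightGBM classifier) on recent samples after a signal is left out, and all names are hypothetical.

```python
from collections import deque


class WindowDriftDetector:
    """Signal drift when sliding-window accuracy drops below a reference (assumed rule)."""

    def __init__(self, window: int = 50, tolerance: float = 0.1) -> None:
        self.outcomes = deque(maxlen=window)  # 1.0 = correct, 0.0 = wrong
        self.tolerance = tolerance
        self.reference = None  # accuracy of the first full window

    def update(self, correct: bool) -> bool:
        """Record one prediction outcome; return True if drift is detected."""
        self.outcomes.append(1.0 if correct else 0.0)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if self.reference is None:
            self.reference = accuracy
            return False
        if accuracy < self.reference - self.tolerance:
            # Reset so the next full window becomes the new reference
            # (the point at which a real system would retrain its model).
            self.outcomes.clear()
            self.reference = None
            return True
        return False


# Simulated stream: the model is right 20 times, then wrong 20 times.
detector = WindowDriftDetector(window=10, tolerance=0.25)
stream = [True] * 20 + [False] * 20
drift_points = [i for i, ok in enumerate(stream) if detector.update(ok)]
print(drift_points)  # [22]
```

The window size trades detection delay against false alarms: a shorter window reacts faster but is noisier, which is the kind of parameter an optimized windowing method would tune.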

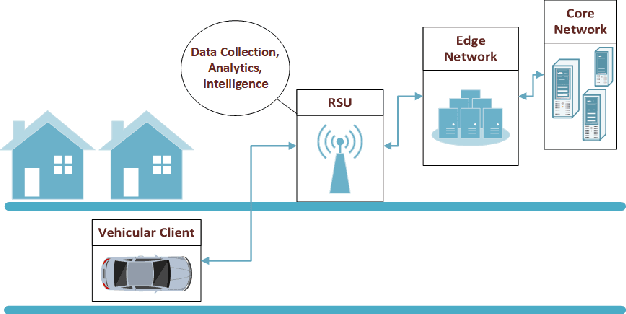

Making a Case for Federated Learning in the Internet of Vehicles and Intelligent Transportation Systems

Feb 19, 2021

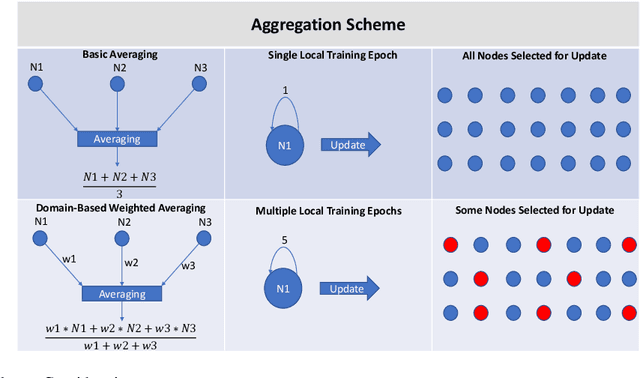

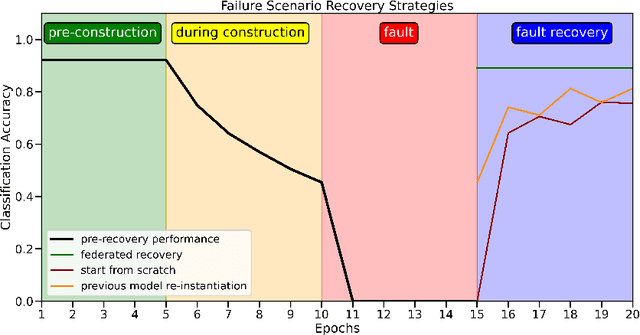

Abstract: With the upcoming introduction of 5G networks and advances in technologies such as Network Function Virtualization and Software Defined Networking, new and emerging networking technologies and use cases are taking shape. One such technology is the Internet of Vehicles (IoV), which describes an interconnected system of vehicles and infrastructure. Coupled with recent developments in artificial intelligence and machine learning, the IoV is transformed into an Intelligent Transportation System (ITS). There are, however, several operational considerations that hinder the adoption of ITS, including scalability, high availability, and data privacy. To address these challenges, Federated Learning, a collaborative and distributed intelligence technique, is suggested. An ITS case study highlights the ability of a federated model deployed on roadside infrastructure throughout the network to recover from faults by leveraging group intelligence, reducing recovery time and restoring acceptable system performance. With a multitude of use cases and benefits, Federated Learning is a key enabler for ITS and is poised to achieve widespread implementation in 5G and beyond networks and applications.
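
The abstract does not state which aggregation rule the federated model uses. As an illustration only, the sketch shows federated averaging (FedAvg), a widely used rule in which each client's parameters are weighted by its number of local samples. In a fault-recovery scenario like the one described, a restarted roadside unit could start from such an aggregated global model rather than from scratch; the function name and values are hypothetical.

```python
from typing import List, Sequence, Tuple


def federated_average(client_updates: Sequence[Tuple[Sequence[float], int]]) -> List[float]:
    """FedAvg: average client parameter vectors weighted by local sample counts."""
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]


# Hypothetical roadside units: (flattened local weights, number of local samples).
global_model = federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)])
print(global_model)  # [2.5, 3.5]
```

Only parameters leave each roadside unit, never raw vehicle data, which is the property that addresses the data-privacy concern raised in the abstract.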
