África Periáñez

From Restless to Contextual: A Thresholding Bandit Approach to Improve Finite-horizon Performance

Feb 07, 2025

Abstract:Online restless bandits extend classic contextual bandits by incorporating state transitions and budget constraints, representing each agent as a Markov Decision Process (MDP). This framework is crucial for finite-horizon strategic resource allocation, optimizing limited costly interventions for long-term benefits. However, learning the underlying MDP for each agent poses a major challenge in finite-horizon settings. To facilitate learning, we reformulate the problem as a scalable budgeted thresholding contextual bandit problem, carefully integrating the state transitions into the reward design and focusing on identifying agents with action benefits exceeding a threshold. We establish the optimality of an oracle greedy solution in a simple two-state setting, and propose an algorithm that achieves minimax optimal constant regret in the online multi-state setting with heterogeneous agents and knowledge of outcomes under no intervention. We numerically show that our algorithm outperforms existing online restless bandit methods, offering significant improvements in finite-horizon performance.

The Digital Transformation in Health: How AI Can Improve the Performance of Health Systems

Sep 24, 2024Abstract:Mobile health has the potential to revolutionize health care delivery and patient engagement. In this work, we discuss how integrating Artificial Intelligence into digital health applications-focused on supply chain, patient management, and capacity building, among other use cases-can improve the health system and public health performance. We present an Artificial Intelligence and Reinforcement Learning platform that allows the delivery of adaptive interventions whose impact can be optimized through experimentation and real-time monitoring. The system can integrate multiple data sources and digital health applications. The flexibility of this platform to connect to various mobile health applications and digital devices and send personalized recommendations based on past data and predictions can significantly improve the impact of digital tools on health system outcomes. The potential for resource-poor settings, where the impact of this approach on health outcomes could be more decisive, is discussed specifically. This framework is, however, similarly applicable to improving efficiency in health systems where scarcity is not an issue.

Adaptive User Journeys in Pharma E-Commerce with Reinforcement Learning: Insights from SwipeRx

Aug 15, 2024

Abstract:This paper introduces a reinforcement learning (RL) platform that enhances end-to-end user journeys in healthcare digital tools through personalization. We explore a case study with SwipeRx, the most popular all-in-one app for pharmacists in Southeast Asia, demonstrating how the platform can be used to personalize and adapt user experiences. Our RL framework is tested through a series of experiments with product recommendations tailored to each pharmacy based on real-time information on their purchasing history and in-app engagement, showing a significant increase in basket size. By integrating adaptive interventions into existing mobile health solutions and enriching user journeys, our platform offers a scalable solution to improve pharmaceutical supply chain management, health worker capacity building, and clinical decision and patient care, ultimately contributing to better healthcare outcomes.

Optimizing HIV Patient Engagement with Reinforcement Learning in Resource-Limited Settings

Aug 14, 2024

Abstract:By providing evidence-based clinical decision support, digital tools and electronic health records can revolutionize patient management, especially in resource-poor settings where fewer health workers are available and often need more training. When these tools are integrated with AI, they can offer personalized support and adaptive interventions, effectively connecting community health workers (CHWs) and healthcare facilities. The CHARM (Community Health Access & Resource Management) app is an AI-native mobile app for CHWs. Developed through a joint partnership of Causal Foundry (CF) and mothers2mothers (m2m), CHARM empowers CHWs, mainly local women, by streamlining case management, enhancing learning, and improving communication. This paper details CHARM's development, integration, and upcoming reinforcement learning-based adaptive interventions, all aimed at enhancing health worker engagement, efficiency, and patient outcomes, thereby enhancing CHWs' capabilities and community health.

Adaptive Behavioral AI: Reinforcement Learning to Enhance Pharmacy Services

Aug 14, 2024

Abstract:Pharmacies are critical in healthcare systems, particularly in low- and middle-income countries. Procuring pharmacists with the right behavioral interventions or nudges can enhance their skills, public health awareness, and pharmacy inventory management, ensuring access to essential medicines that ultimately benefit their patients. We introduce a reinforcement learning operational system to deliver personalized behavioral interventions through mobile health applications. We illustrate its potential by discussing a series of initial experiments run with SwipeRx, an all-in-one app for pharmacists, including B2B e-commerce, in Indonesia. The proposed method has broader applications extending beyond pharmacy operations to optimize healthcare delivery.

Adaptive Interventions for Global Health: A Case Study of Malaria

Mar 17, 2023Abstract:Malaria can be prevented, diagnosed, and treated; however, every year, there are more than 200 million cases and 200.000 preventable deaths. Malaria remains a pressing public health concern in low- and middle-income countries, especially in sub-Saharan Africa. We describe how by means of mobile health applications, machine-learning-based adaptive interventions can strengthen malaria surveillance and treatment adherence, increase testing, measure provider skills and quality of care, improve public health by supporting front-line workers and patients (e.g., by capacity building and encouraging behavioral changes, like using bed nets), reduce test stockouts in pharmacies and clinics and informing public health for policy intervention.

Synthetic Data Generator for Adaptive Interventions in Global Health

Mar 06, 2023Abstract:Artificial Intelligence and digital health have the potential to transform global health. However, having access to representative data to test and validate algorithms in realistic production environments is essential. We introduce HealthSyn, an open-source synthetic data generator of user behavior for testing reinforcement learning algorithms in the context of mobile health interventions. The generator utilizes Markov processes to generate diverse user actions, with individual user behavioral patterns that can change in reaction to personalized interventions (i.e., reminders, recommendations, and incentives). These actions are translated into actual logs using an ML-purposed data schema specific to the mobile health application functionality included with HealthKit, and open-source SDK. The logs can be fed to pipelines to obtain user metrics. The generated data, which is based on real-world behaviors and simulation techniques, can be used to develop, test, and evaluate, both ML algorithms in research and end-to-end operational RL-based intervention delivery frameworks.

User Engagement in Mobile Health Applications

Jun 23, 2022

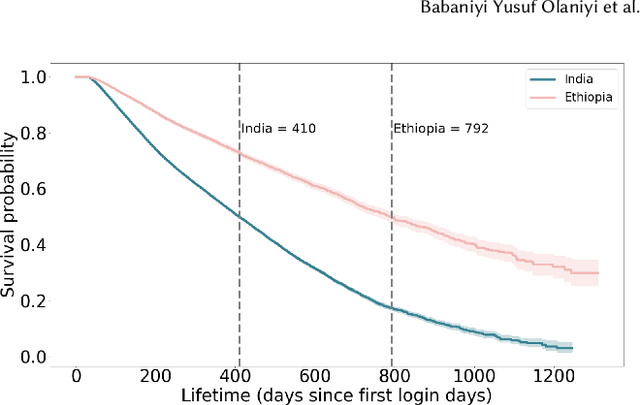

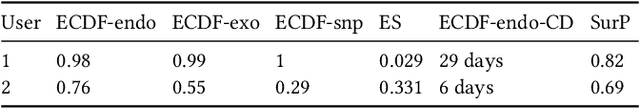

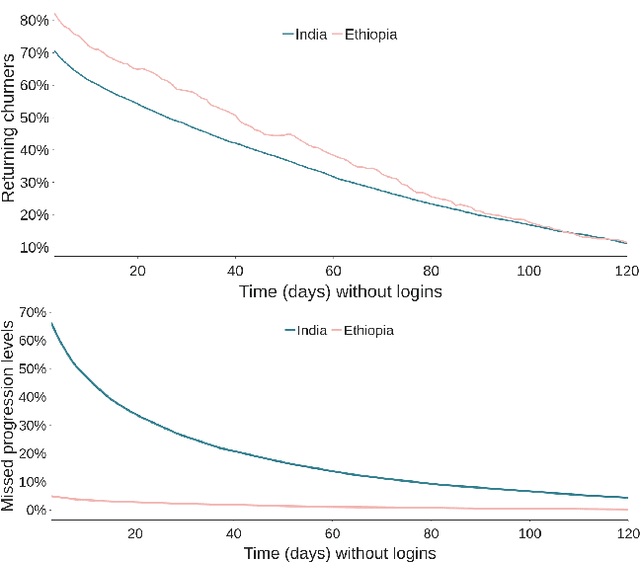

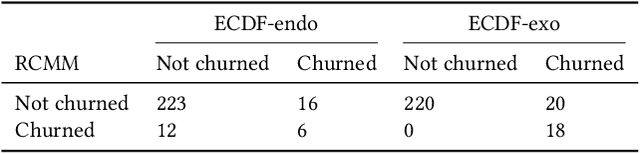

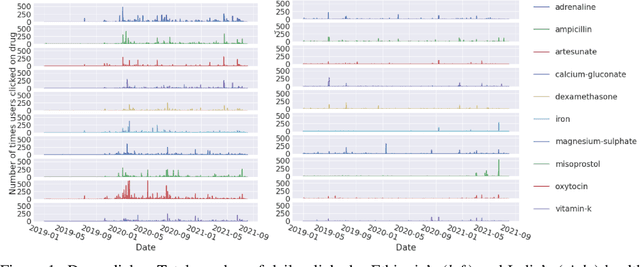

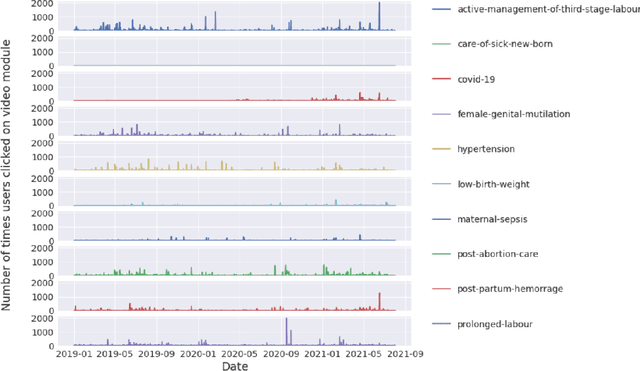

Abstract:Mobile health apps are revolutionizing the healthcare ecosystem by improving communication, efficiency, and quality of service. In low- and middle-income countries, they also play a unique role as a source of information about health outcomes and behaviors of patients and healthcare workers, while providing a suitable channel to deliver both personalized and collective policy interventions. We propose a framework to study user engagement with mobile health, focusing on healthcare workers and digital health apps designed to support them in resource-poor settings. The behavioral logs produced by these apps can be transformed into daily time series characterizing each user's activity. We use probabilistic and survival analysis to build multiple personalized measures of meaningful engagement, which could serve to tailor content and digital interventions suiting each health worker's specific needs. Special attention is given to the problem of detecting churn, understood as a marker of complete disengagement. We discuss the application of our methods to the Indian and Ethiopian users of the Safe Delivery App, a capacity-building tool for skilled birth attendants. This work represents an important step towards a full characterization of user engagement in mobile health applications, which can significantly enhance the abilities of health workers and, ultimately, save lives.

A Data-Centric Behavioral Machine Learning Platform to Reduce Health Inequalities

Nov 17, 2021

Abstract:Providing front-line health workers in low- and middle- income countries with recommendations and predictions to improve health outcomes can have a tremendous impact on reducing healthcare inequalities, for instance by helping to prevent the thousands of maternal and newborn deaths that occur every day. To that end, we are developing a data-centric machine learning platform that leverages the behavioral logs from a wide range of mobile health applications running in those countries. Here we describe the platform architecture, focusing on the details that help us to maximize the quality and organization of the data throughout the whole process, from the data ingestion with a data-science purposed software development kit to the data pipelines, feature engineering and model management.

A Recommendation System to Enhance Midwives' Capacities in Low-Income Countries

Nov 04, 2021

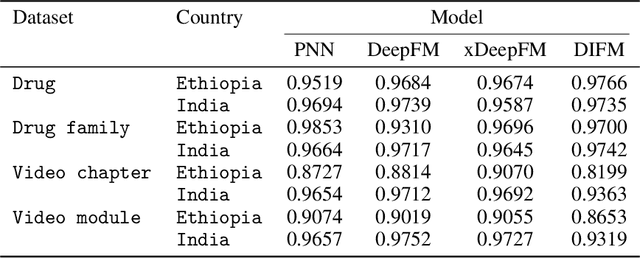

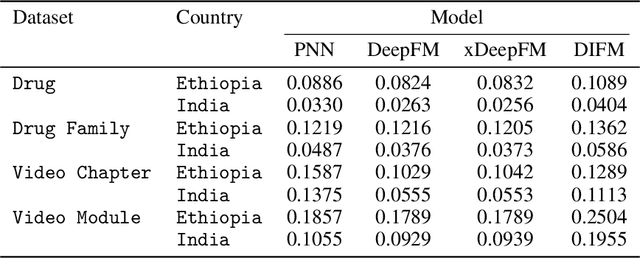

Abstract:Maternal and child mortality is a public health problem that disproportionately affects low- and middle-income countries. Every day, 800 women and 6,700 newborns die from complications related to pregnancy or childbirth. And for every maternal death, about 20 women suffer serious birth injuries. However, nearly all of these deaths and negative health outcomes are preventable. Midwives are key to revert this situation, and thus it is essential to strengthen their capacities and the quality of their education. This is the aim of the Safe Delivery App, a digital job aid and learning tool to enhance the knowledge, confidence and skills of health practitioners. Here, we use the behavioral logs of the App to implement a recommendation system that presents each midwife with suitable contents to continue gaining expertise. We focus on predicting the click-through rate, the probability that a given user will click on a recommended content. We evaluate four deep learning models and show that all of them produce highly accurate predictions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge