Training Two-Layer ReLU Networks with Gradient Descent is Inconsistent

Feb 12, 2020

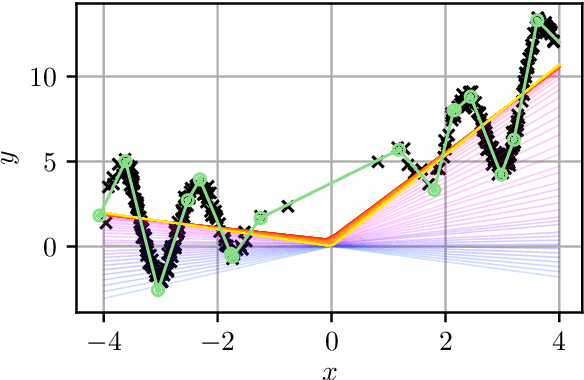

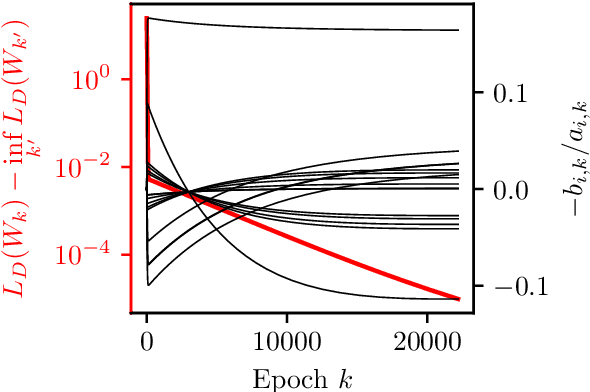

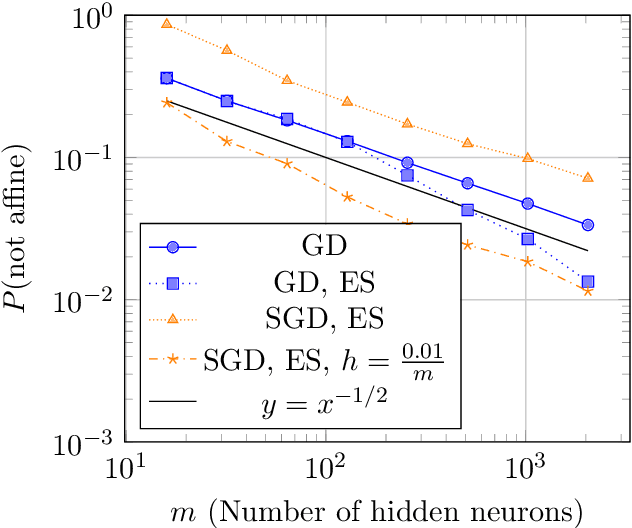

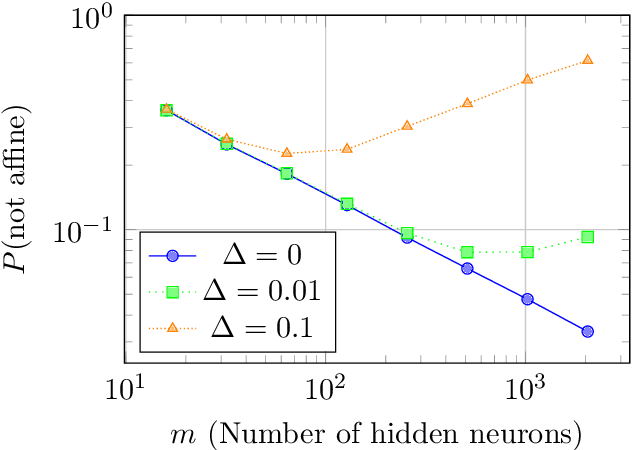

We prove that two-layer (Leaky) ReLU networks initialized by, e.g., the widely used method of He et al. (2015) and trained using gradient descent on a least-squares loss are not universally consistent. Specifically, we describe a large class of data-generating distributions for which, with high probability, gradient descent only finds a bad local minimum of the optimization landscape. In these cases, the network that gradient descent finds essentially performs linear regression, even if the target function is non-linear. We further provide numerical evidence that this happens in practical situations and that stochastic gradient descent exhibits similar behavior.
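
To make the setting in the abstract concrete, the following is a minimal sketch of a two-layer ReLU network with He-style initialization, trained by full-batch gradient descent on a least-squares loss, next to an ordinary affine least-squares fit for comparison. It is not the authors' code. The restriction to one-dimensional inputs, plain ReLU instead of Leaky ReLU, zero initial biases, and all function names and hyperparameters (`he_init`, `gd_step`, `train`, `linear_fit_mse`, `n_hidden`, `lr`, `steps`) are assumptions made for illustration.

```python
import random


def he_init(n_hidden, seed=0):
    """He-style init for a 1-D input: weights ~ N(0, 2 / fan_in), zero biases (assumed)."""
    rng = random.Random(seed)
    w = [rng.gauss(0.0, (2.0 / 1) ** 0.5) for _ in range(n_hidden)]         # fan_in = 1
    b = [0.0] * n_hidden
    v = [rng.gauss(0.0, (2.0 / n_hidden) ** 0.5) for _ in range(n_hidden)]  # fan_in = n_hidden
    c = 0.0
    return w, b, v, c


def predict(params, x):
    """f(x) = c + sum_j v_j * relu(w_j * x + b_j)."""
    w, b, v, c = params
    return c + sum(vj * max(wj * x + bj, 0.0) for wj, bj, vj in zip(w, b, v))


def mse(params, xs, ys):
    """Least-squares loss averaged over the sample."""
    return sum((predict(params, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gradients(params, xs, ys):
    """Gradient of the MSE w.r.t. (w, b, v, c); the ReLU derivative at 0 is taken as 0."""
    w, b, v, c = params
    m, n = len(w), len(xs)
    gw, gb, gv, gc = [0.0] * m, [0.0] * m, [0.0] * m, 0.0
    for x, y in zip(xs, ys):
        err = 2.0 * (predict(params, x) - y) / n
        gc += err
        for j in range(m):
            pre = w[j] * x + b[j]
            if pre > 0.0:  # only active neurons receive gradient
                gv[j] += err * pre
                gw[j] += err * v[j] * x
                gb[j] += err * v[j]
    return gw, gb, gv, gc


def gd_step(params, xs, ys, lr):
    """One full-batch gradient descent step."""
    w, b, v, c = params
    gw, gb, gv, gc = gradients(params, xs, ys)

    def step(p, g):
        return [pi - lr * gi for pi, gi in zip(p, g)]

    return step(w, gw), step(b, gb), step(v, gv), c - lr * gc


def train(xs, ys, n_hidden=16, lr=0.01, steps=1000, seed=0):
    """Initialize with he_init and run a fixed number of gradient descent steps."""
    params = he_init(n_hidden, seed)
    for _ in range(steps):
        params = gd_step(params, xs, ys, lr)
    return params


def linear_fit_mse(xs, ys):
    """MSE of the best affine fit a * x + d (closed-form 1-D linear regression)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    d = my - a * mx
    return sum((a * x + d - y) ** 2 for x, y in zip(xs, ys)) / n
```

One way to probe the claim on a toy problem is to compare `mse(train(xs, ys), xs, ys)` with `linear_fit_mse(xs, ys)` for a non-linear target. Whether the two values end up close depends on the data distribution, width, step size, and number of steps, so this sketch should not be read as reproducing the paper's experiments.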
