Zhiqiang Zhou

Optimization for Oriented Object Detection via Representation Invariance Loss

Mar 22, 2021

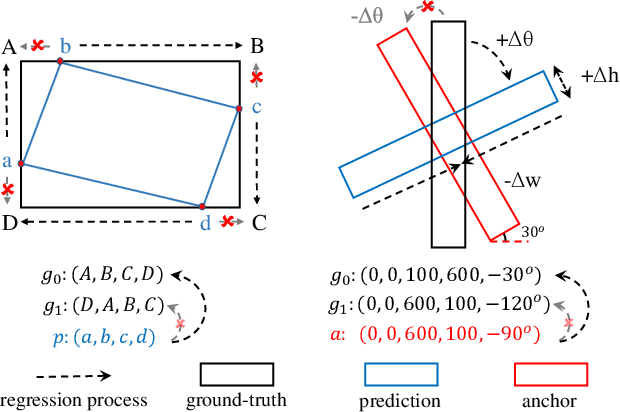

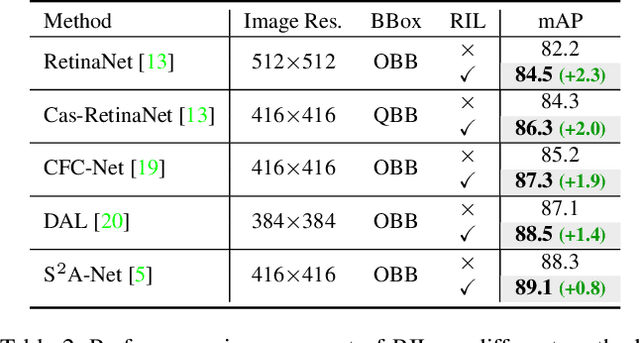

Abstract:Arbitrary-oriented objects exist widely in natural scenes, and thus the oriented object detection has received extensive attention in recent years. The mainstream rotation detectors use oriented bounding boxes (OBB) or quadrilateral bounding boxes (QBB) to represent the rotating objects. However, these methods suffer from the representation ambiguity for oriented object definition, which leads to suboptimal regression optimization and the inconsistency between the loss metric and the localization accuracy of the predictions. In this paper, we propose a Representation Invariance Loss (RIL) to optimize the bounding box regression for the rotating objects. Specifically, RIL treats multiple representations of an oriented object as multiple equivalent local minima, and hence transforms bounding box regression into an adaptive matching process with these local minima. Then, the Hungarian matching algorithm is adopted to obtain the optimal regression strategy. We also propose a normalized rotation loss to alleviate the weak correlation between different variables and their unbalanced loss contribution in OBB representation. Extensive experiments on remote sensing datasets and scene text datasets show that our method achieves consistent and substantial improvement. The source code and trained models are available at https://github.com/ming71/RIDet.

CFC-Net: A Critical Feature Capturing Network for Arbitrary-Oriented Object Detection in Remote Sensing Images

Jan 18, 2021

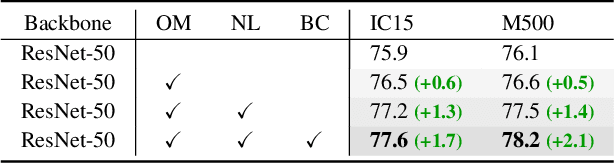

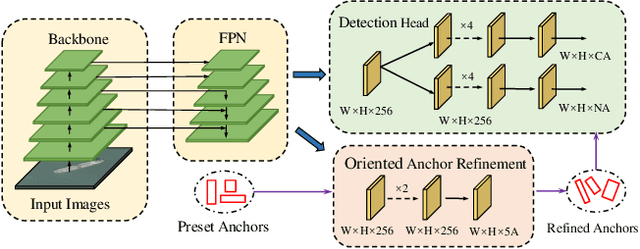

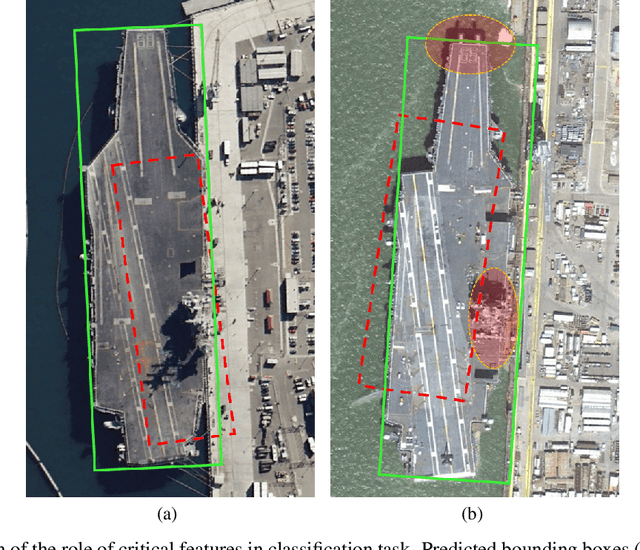

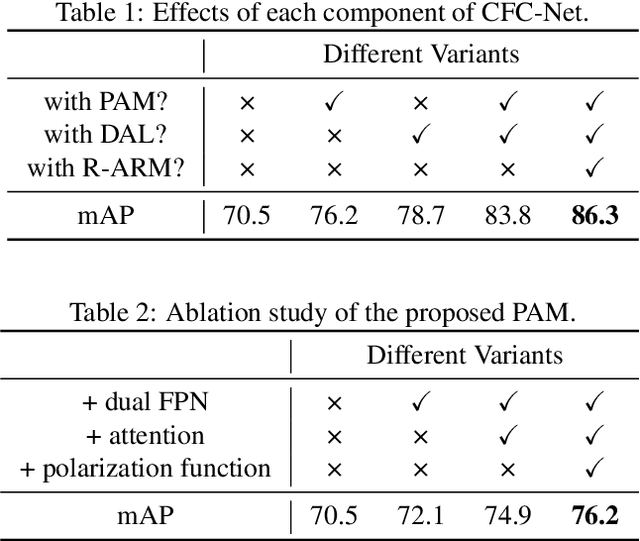

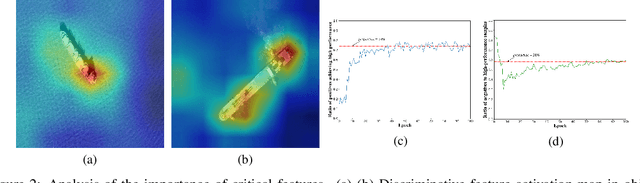

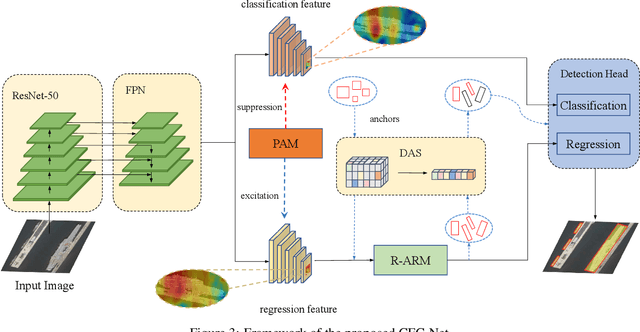

Abstract:Object detection in optical remote sensing images is an important and challenging task. In recent years, the methods based on convolutional neural networks have made good progress. However, due to the large variation in object scale, aspect ratio, and arbitrary orientation, the detection performance is difficult to be further improved. In this paper, we discuss the role of discriminative features in object detection, and then propose a Critical Feature Capturing Network (CFC-Net) to improve detection accuracy from three aspects: building powerful feature representation, refining preset anchors, and optimizing label assignment. Specifically, we first decouple the classification and regression features, and then construct robust critical features adapted to the respective tasks through the Polarization Attention Module (PAM). With the extracted discriminative regression features, the Rotation Anchor Refinement Module (R-ARM) performs localization refinement on preset horizontal anchors to obtain superior rotation anchors. Next, the Dynamic Anchor Learning (DAL) strategy is given to adaptively select high-quality anchors based on their ability to capture critical features. The proposed framework creates more powerful semantic representations for objects in remote sensing images and achieves high-performance real-time object detection. Experimental results on three remote sensing datasets including HRSC2016, DOTA, and UCAS-AOD show that our method achieves superior detection performance compared with many state-of-the-art approaches. Code and models are available at https://github.com/ming71/CFC-Net.

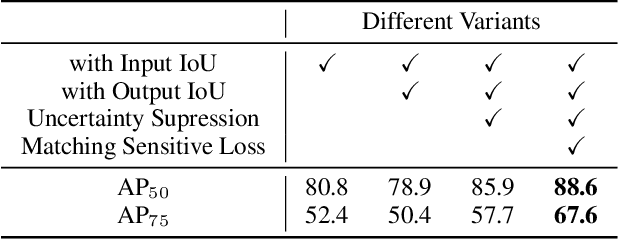

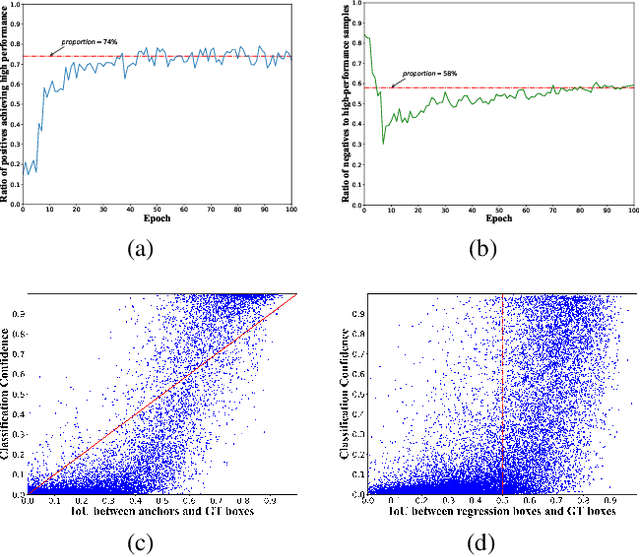

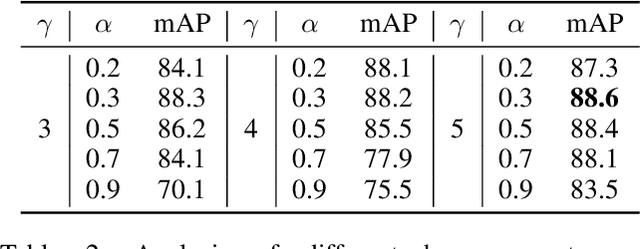

Dynamic Anchor Learning for Arbitrary-Oriented Object Detection

Dec 15, 2020

Abstract:Arbitrary-oriented objects widely appear in natural scenes, aerial photographs, remote sensing images, etc., thus arbitrary-oriented object detection has received considerable attention. Many current rotation detectors use plenty of anchors with different orientations to achieve spatial alignment with ground truth boxes, then Intersection-over-Union (IoU) is applied to sample the positive and negative candidates for training. However, we observe that the selected positive anchors cannot always ensure accurate detections after regression, while some negative samples can achieve accurate localization. It indicates that the quality assessment of anchors through IoU is not appropriate, and this further lead to inconsistency between classification confidence and localization accuracy. In this paper, we propose a dynamic anchor learning (DAL) method, which utilizes the newly defined matching degree to comprehensively evaluate the localization potential of the anchors and carry out a more efficient label assignment process. In this way, the detector can dynamically select high-quality anchors to achieve accurate object detection, and the divergence between classification and regression will be alleviated. With the newly introduced DAL, we achieve superior detection performance for arbitrary-oriented objects with only a few horizontal preset anchors. Experimental results on three remote sensing datasets HRSC2016, DOTA, UCAS-AOD as well as a scene text dataset ICDAR 2015 show that our method achieves substantial improvement compared with the baseline model. Besides, our approach is also universal for object detection using horizontal bound box. The code and models are available at https://github.com/ming71/DAL.

Conditional Gradient Methods for convex optimization with function constraints

Jun 30, 2020

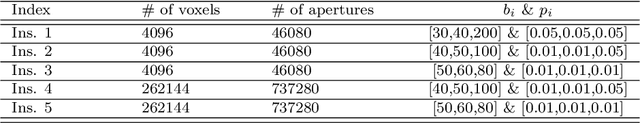

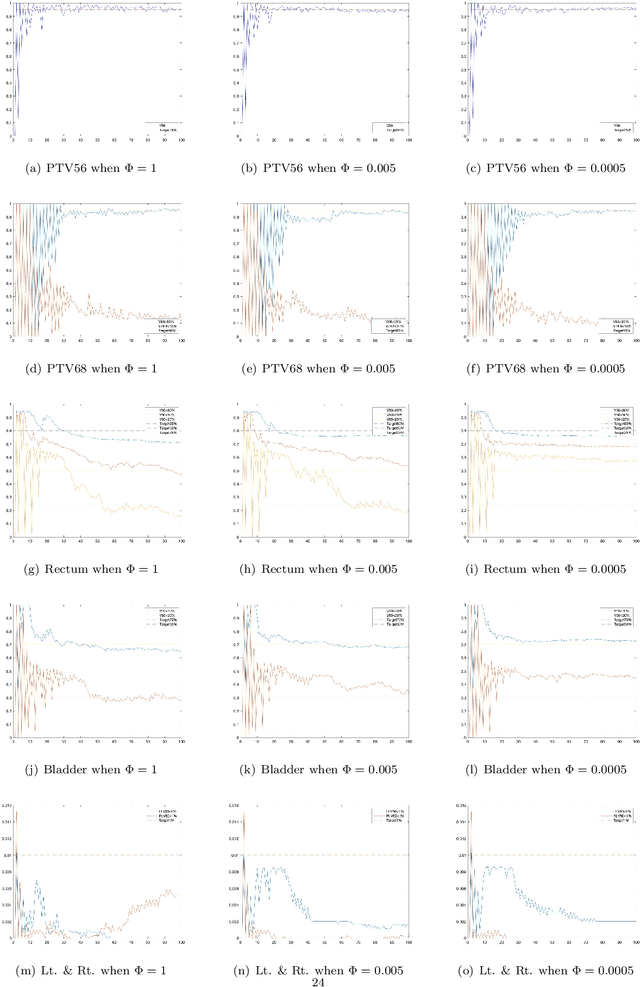

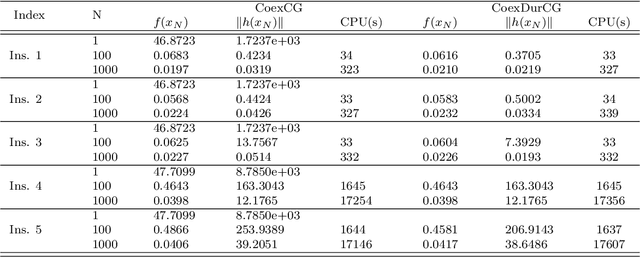

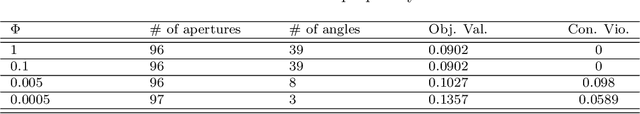

Abstract:Conditional gradient methods have attracted much attention in both machine learning and optimization communities recently. These simple methods can guarantee the generation of sparse solutions. In addition, without the computation of full gradients, they can handle huge-scale problems sometimes even with an exponentially increasing number of decision variables. This paper aims to significantly expand the application areas of these methods by presenting new conditional gradient methods for solving convex optimization problems with general affine and nonlinear constraints. More specifically, we first present a new constraint extrapolated condition gradient (CoexCG) method that can achieve an ${\cal O}(1/\epsilon^2)$ iteration complexity for both smooth and structured nonsmooth function constrained convex optimization. We further develop novel variants of CoexCG, namely constraint extrapolated and dual regularized conditional gradient (CoexDurCG) methods, that can achieve similar iteration complexity to CoexCG but allow adaptive selection for algorithmic parameters. We illustrate the effectiveness of these methods for solving an important class of radiation therapy treatment planning problems arising from healthcare industry.

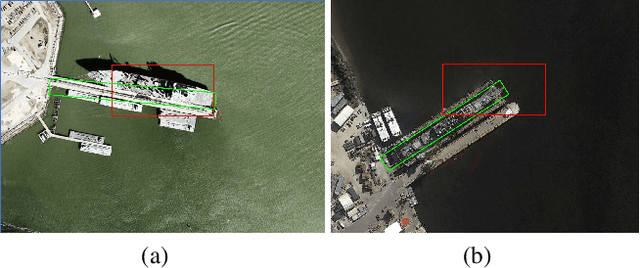

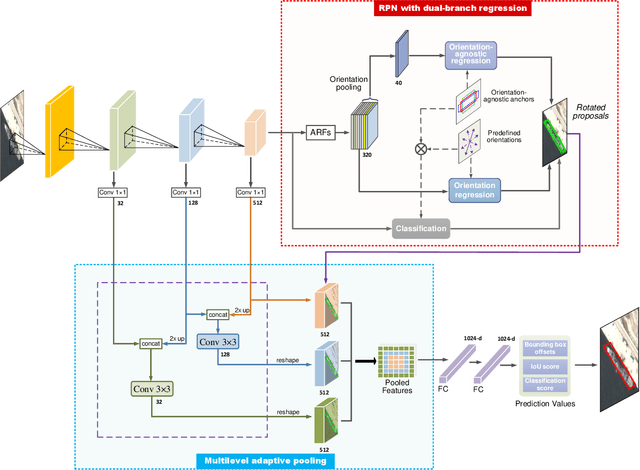

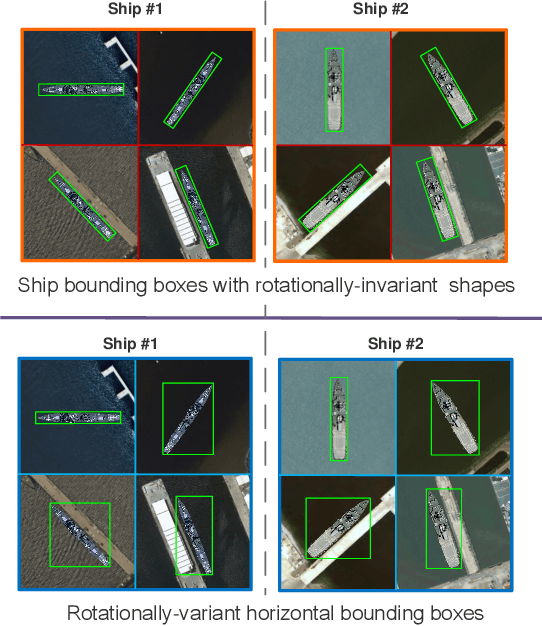

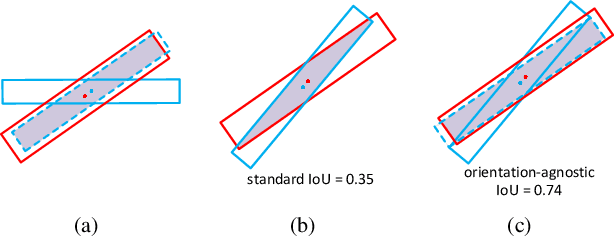

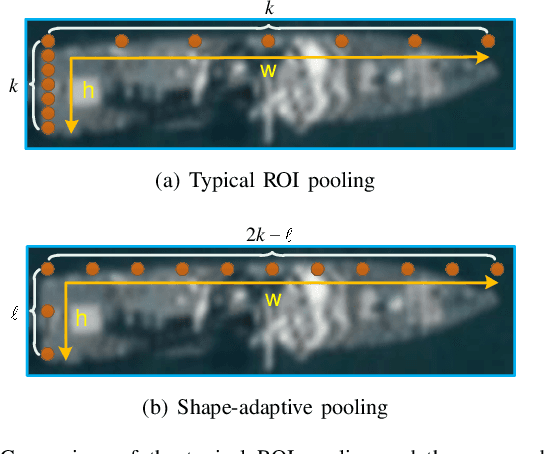

A Novel CNN-based Method for Accurate Ship Detection in HR Optical Remote Sensing Images via Rotated Bounding Box

May 08, 2020

Abstract:Currently, reliable and accurate ship detection in optical remote sensing images is still challenging. Even the state-of-the-art convolutional neural network (CNN) based methods cannot obtain very satisfactory results. To more accurately locate the ships in diverse orientations, some recent methods conduct the detection via the rotated bounding box. However, it further increases the difficulty of detection, because an additional variable of ship orientation must be accurately predicted in the algorithm. In this paper, a novel CNN-based ship detection method is proposed, by overcoming some common deficiencies of current CNN-based methods in ship detection. Specifically, to generate rotated region proposals, current methods have to predefine multi-oriented anchors, and predict all unknown variables together in one regression process, limiting the quality of overall prediction. By contrast, we are able to predict the orientation and other variables independently, and yet more effectively, with a novel dual-branch regression network, based on the observation that the ship targets are nearly rotation-invariant in remote sensing images. Next, a shape-adaptive pooling method is proposed, to overcome the limitation of typical regular ROI-pooling in extracting the features of the ships with various aspect ratios. Furthermore, we propose to incorporate multilevel features via the spatially-variant adaptive pooling. This novel approach, called multilevel adaptive pooling, leads to a compact feature representation more qualified for the simultaneous ship classification and localization. Finally, detailed ablation study performed on the proposed approaches is provided, along with some useful insights. Experimental results demonstrate the great superiority of the proposed method in ship detection.

Algorithms for stochastic optimization with expectation constraints

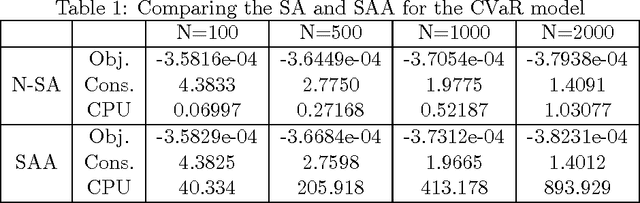

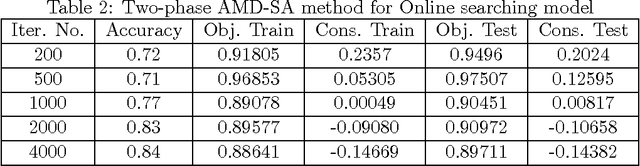

Sep 06, 2018

Abstract:This paper considers the problem of minimizing an expectation function over a closed convex set, coupled with a functional or expectation constraint on either decision variables or problem parameters. We first present a new stochastic approximation (SA) type algorithm, namely the cooperative SA (CSA), to handle problems with the constraint on devision variables. We show that this algorithm exhibits the optimal O(1/\epsilon^2 ) rate of convergence, in terms of both optimality gap and constraint violation, when the objective and constraint functions are generally convex, where ? denotes the optimality gap and infeasibility. Moreover, we show that this rate of convergence can be improved to O(1/\epsilon) if the objective and constraint functions are strongly convex. We then present a variant of CSA, namely the cooperative stochastic parameter approximation (CSPA) algorithm, to deal with the situation when the constraint is defined over problem parameters and show that it exhibits similar optimal rate of convergence to CSA. It is worth noting that CSA and CSPA are primal methods which do not require the iterations on the dual space and/or the estimation on the size of the dual variables. To the best of our knowledge, this is the first time that such optimal SA methods for solving functional and expectation constrained stochastic optimization are presented in the literature.

Dynamic Stochastic Approximation for Multi-stage Stochastic Optimization

Jul 11, 2017Abstract:In this paper, we consider multi-stage stochastic optimization problems with convex objectives and conic constraints at each stage. We present a new stochastic first-order method, namely the dynamic stochastic approximation (DSA) algorithm, for solving these types of stochastic optimization problems. We show that DSA can achieve an optimal ${\cal O}(1/\epsilon^4)$ rate of convergence in terms of the total number of required scenarios when applied to a three-stage stochastic optimization problem. We further show that this rate of convergence can be improved to ${\cal O}(1/\epsilon^2)$ when the objective function is strongly convex. We also discuss variants of DSA for solving more general multi-stage stochastic optimization problems with the number of stages $T > 3$. The developed DSA algorithms only need to go through the scenario tree once in order to compute an $\epsilon$-solution of the multi-stage stochastic optimization problem. To the best of our knowledge, this is the first time that stochastic approximation type methods are generalized for multi-stage stochastic optimization with $T \ge 3$.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge