Zhengrui Huang

Fusion of Complex Networks-based Global and Local Features for Texture Classification

Jun 20, 2021

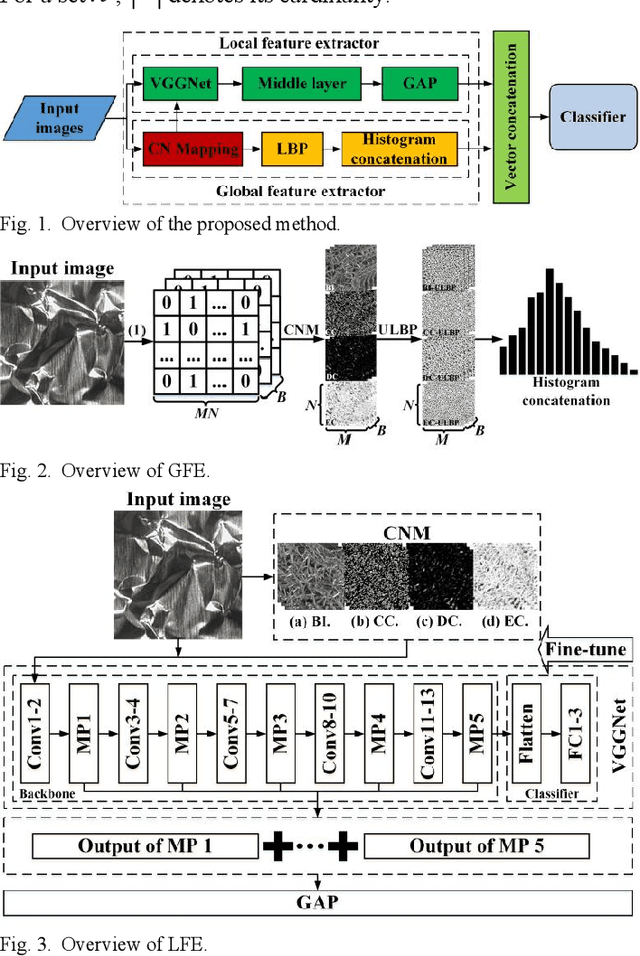

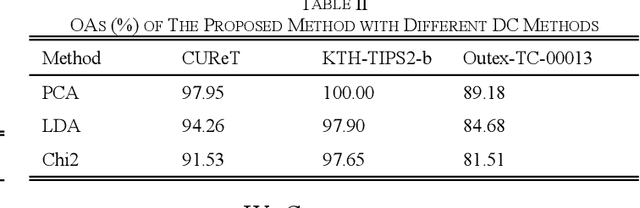

Abstract: To realize accurate texture classification, this article proposes a complex networks (CN)-based multi-feature fusion method for recognizing texture images. Specifically, we propose two feature extractors that capture the global and local features of texture images, respectively. To capture the global features, we first map a texture image to an undirected graph based on pixel location and intensity, and design three feature measurements to further characterize the image, retaining as much image information as possible. Then, given the original band images (BI) and the generated feature images, we encode them using local binary patterns (LBP) and obtain the global feature vector by concatenating four spatial histograms. To capture the local features, we jointly transfer and fine-tune the pre-trained VGGNet-16 model. Next, we fuse and concatenate the intermediate outputs of the max-pooling (MP) layers, and generate the local feature vector with a global average pooling (GAP) layer. Finally, the global and local feature vectors are concatenated to form the final feature representation of texture images. Experimental results show that the proposed method outperforms state-of-the-art statistical descriptors and deep convolutional neural network (CNN) models.
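The LBP encoding and histogram concatenation described in this abstract can be illustrated with a minimal sketch. It assumes the basic 8-neighbour LBP operator and one whole-image histogram per input, which is a simplification of the spatial histograms the abstract mentions. The function names `lbp_code`, `lbp_histogram`, and `global_feature` are hypothetical and do not come from the paper.

```python
def lbp_code(img, r, c):
    """Basic 8-neighbour LBP code of the interior pixel (r, c).

    A neighbour contributes a 1 bit when its intensity is >= the centre.
    """
    center = img[r][c]
    # Clockwise neighbour offsets, starting at the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    code = 0
    for bit, (dr, dc) in enumerate(offsets):
        if img[r + dr][c + dc] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(img):
    """Normalized 256-bin histogram of LBP codes over interior pixels."""
    hist = [0] * 256
    rows, cols = len(img), len(img[0])
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            hist[lbp_code(img, r, c)] += 1
    total = sum(hist)
    return [h / total for h in hist] if total else hist


def global_feature(images):
    """Concatenate the LBP histograms of several images into one vector.

    Assumption: `images` stands in for the band image plus the three
    CN-based feature images, giving four histograms in total.
    """
    vec = []
    for img in images:
        vec.extend(lbp_histogram(img))
    return vec
```

With four input images, `global_feature` returns a vector of 4 × 256 = 1024 values. A spatial-histogram variant would split each image into regions and concatenate one histogram per region.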

CN-LBP: Complex Networks-based Local Binary Patterns for Texture Classification

Jun 04, 2021

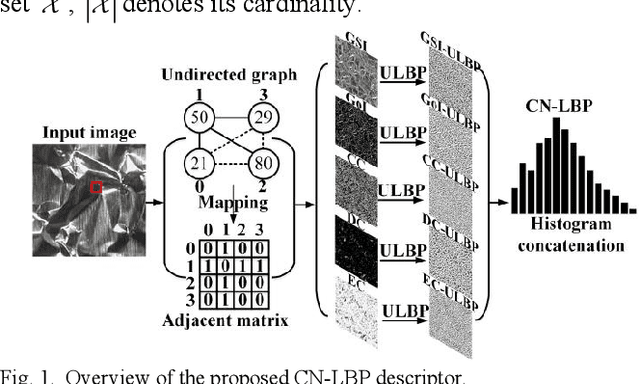

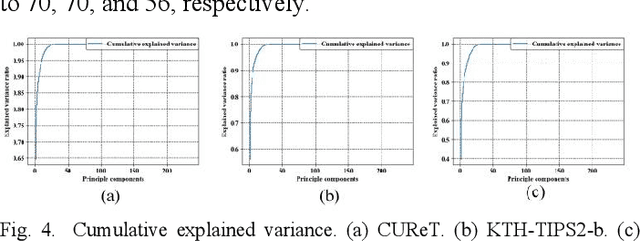

Abstract: To overcome the limitations of local binary patterns (LBP), this letter proposes a new texture descriptor, CN-LBP, built on complex networks (CN) and uniform LBP (ULBP). Specifically, we first abstract a gray-scale image (GSI) as an undirected graph using pixel distance, pixel intensity, and the gradient of image (GoI). Second, three CN-based feature measurements (clustering coefficient, degree centrality, and eigenvector centrality) are proposed to characterize the image's spatial relationships, energy, and entropy, respectively, generating three feature maps that retain as much image information as possible. Third, we apply ULBP to the feature maps, the GSI, and the GoI to obtain a discriminative representation of the texture image. Finally, CN-LBP is obtained by computing and concatenating the spatial histograms. Compared with the original LBP, the proposed texture descriptor contains more detailed image information and shows some resistance to noise. Experimental results show that the proposed approach significantly improves texture classification accuracy compared with state-of-the-art LBP-based variants and deep learning-based approaches.
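As a rough illustration of the graph-modelling step, the sketch below links two pixels when they are close in both position and intensity, then turns each pixel's degree centrality into a feature map. The connection rule, the thresholds `radius` and `max_diff`, and the function names are assumptions made for illustration. The abstract does not give the exact rule, and the gradient term it mentions is omitted here.

```python
import math


def build_pixel_graph(img, radius=1.5, max_diff=10):
    """Undirected graph over pixels, stored as an adjacency dict.

    Assumed rule: two pixels are linked when their Euclidean distance is
    <= radius and their absolute intensity difference is <= max_diff.
    """
    rows, cols = len(img), len(img[0])
    reach = int(math.floor(radius))
    adj = {(r, c): set() for r in range(rows) for c in range(cols)}
    for r in range(rows):
        for c in range(cols):
            for dr in range(-reach, reach + 1):
                for dc in range(-reach, reach + 1):
                    if (dr, dc) == (0, 0):
                        continue
                    nr, nc = r + dr, c + dc
                    if not (0 <= nr < rows and 0 <= nc < cols):
                        continue
                    close = math.hypot(dr, dc) <= radius
                    similar = abs(img[r][c] - img[nr][nc]) <= max_diff
                    if close and similar:
                        adj[(r, c)].add((nr, nc))
                        adj[(nr, nc)].add((r, c))
    return adj


def degree_centrality_map(img, radius=1.5, max_diff=10):
    """Feature map whose entries are degree / (n - 1) for each pixel."""
    adj = build_pixel_graph(img, radius, max_diff)
    n = len(adj)
    rows, cols = len(img), len(img[0])
    return [[len(adj[(r, c)]) / (n - 1) if n > 1 else 0.0
             for c in range(cols)]
            for r in range(rows)]
```

On a uniform 2 × 2 image every pixel links to the other three, so each entry of the map is 1.0. Sharp intensity edges remove links and lower the centrality of the pixels along them.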

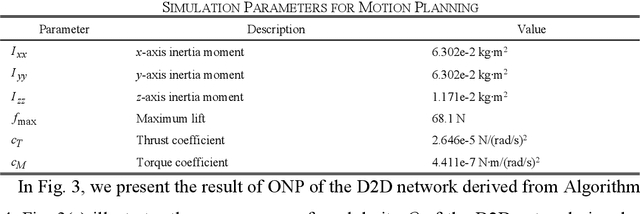

Hybrid Device-to-Device and Device-to-Vehicle Networks for Energy-Efficient Emergency Communication

May 26, 2021

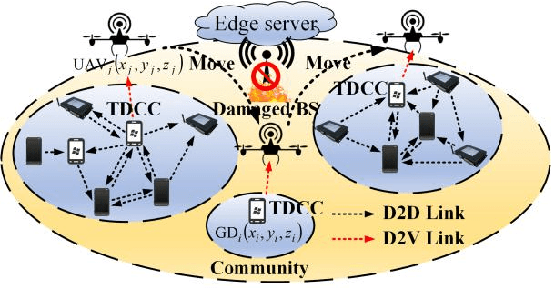

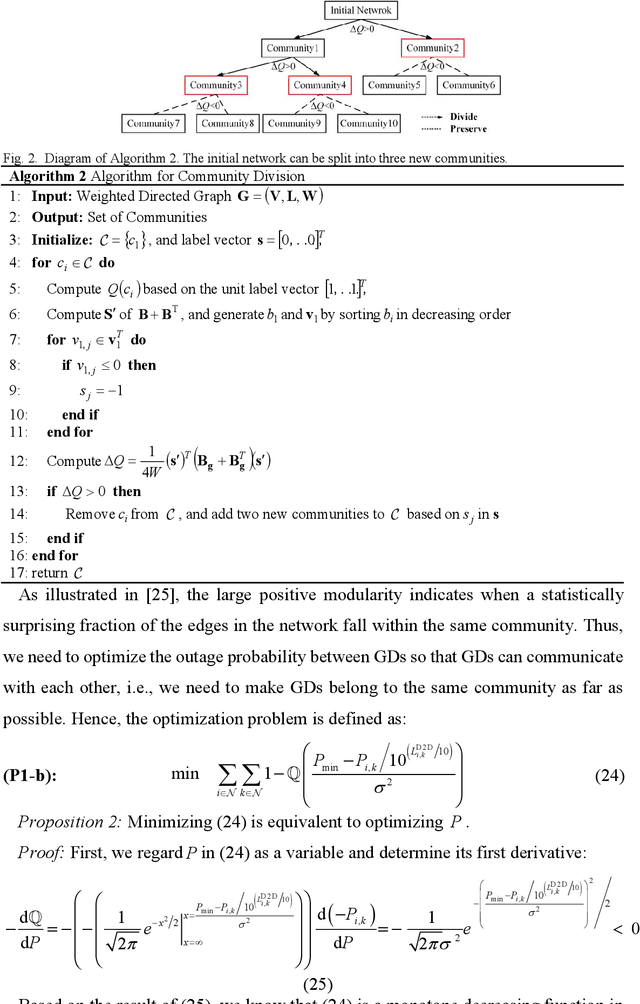

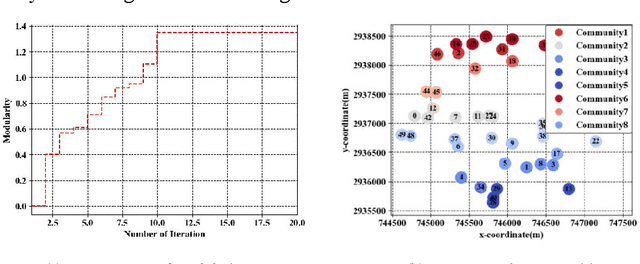

Abstract: To support energy-efficient emergency response under a given set of constraints on emergency communication networks (ECN), this article proposes a hybrid device-to-device (D2D) and device-to-vehicle (D2V) network for collecting and transmitting emergency information. First, we establish the D2D network from the perspective of complex networks by jointly determining the optimal network partition (ONP) and the temporary data caching centers (TDCC), so that emergency data can be forwarded to and cached in the TDCCs. Second, based on the distribution of TDCCs, the D2V network is established through unmanned aerial vehicle (UAV)-based waypoint and motion planning, which reduces the time spent on wireless transmission and aerial movement. Finally, the emergency response time and the total energy consumption are simultaneously minimized by a multiobjective evolutionary algorithm based on decomposition (MOEA/D), subject to constraints on the minimum signal-to-interference-plus-noise ratio (SINR), the number of UAVs, transmit power, and energy. Simulation results show that the proposed method significantly improves response efficiency while keeping energy consumption under control, thus overcoming limitations of existing ECNs. The network therefore addresses a key problem in rescue systems and supports post-disaster decision-making.
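MOEA/D splits a multiobjective problem into scalar subproblems, one per weight vector. The sketch below shows the Tchebycheff scalarization, one of the standard decomposition choices. The abstract does not say which decomposition the authors use, so this choice is an assumption, as are the function names and the example numbers for (response time, energy).

```python
def tchebycheff(objectives, weights, ideal):
    """Tchebycheff scalarization: max_i w_i * |f_i - z_i*|.

    objectives: objective values of one candidate, e.g. (time, energy)
    weights:    weight vector that defines one MOEA/D subproblem
    ideal:      best value seen so far for each objective (reference point)
    """
    return max(w * abs(f - z) for f, w, z in zip(objectives, weights, ideal))


def best_for_subproblem(candidates, weights, ideal):
    """Pick the candidate with the smallest scalarized value."""
    return min(candidates, key=lambda objs: tchebycheff(objs, weights, ideal))


# Hypothetical (response time, energy) pairs for three candidate plans.
candidates = [(4.0, 10.0), (5.0, 7.0), (3.0, 12.0)]
ideal = (2.0, 6.0)

balanced = best_for_subproblem(candidates, (0.5, 0.5), ideal)
time_first = best_for_subproblem(candidates, (0.9, 0.1), ideal)
```

With equal weights the subproblem selects `(5.0, 7.0)`. With the weight shifted toward response time it selects `(3.0, 12.0)`. Sweeping the weight vector in this way is how the algorithm spreads its solutions across the trade-off between the two objectives.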

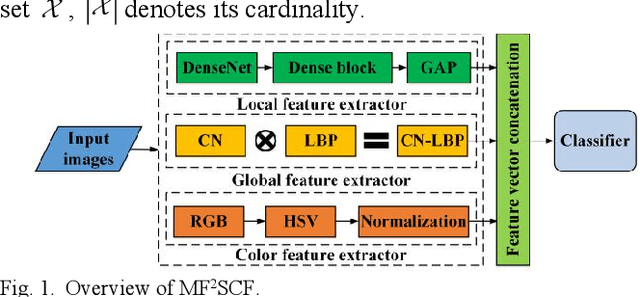

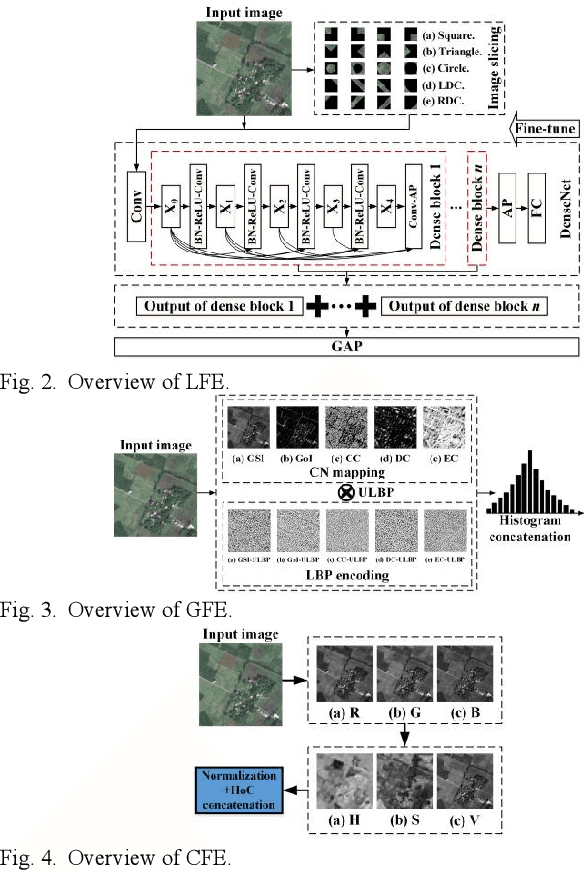

Multi-Feature Fusion-based Scene Classification Framework for HSR Images

May 22, 2021

Abstract: To realize high-accuracy classification of high spatial resolution (HSR) images, this letter proposes a new multi-feature fusion-based scene classification framework (MF2SCF) that fuses the local, global, and color features of HSR images. Specifically, we first extract the local features with the help of image slicing and densely connected convolutional networks (DenseNet), where the outputs of the dense blocks in the fine-tuned DenseNet-121 model are jointly averaged and concatenated to describe local features. Second, from the perspective of complex networks (CN), we model an HSR image as an undirected graph based on pixel distance, intensity, and gradient, and obtain a gray-scale image (GSI), a gradient of image (GoI), and three CN-based feature images to delineate global features. To make the global feature descriptor robust to rotation and illumination changes, we apply uniform local binary patterns (LBP) to the GSI, the GoI, and the feature images, and generate the final global feature representation by concatenating spatial histograms. Third, the color features are determined from the normalized HSV histogram, where HSV stands for hue, saturation, and value. Finally, the three feature vectors are concatenated for scene classification. Experimental results show that MF2SCF significantly improves classification accuracy compared with state-of-the-art LBP-based methods and deep learning-based methods.
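The color feature mentioned in this abstract can be sketched as a normalized HSV histogram. The version below assumes one histogram per channel, each normalized to sum to 1. The bin counts and the function name `hsv_histogram` are illustrative choices, not values taken from the paper.

```python
import colorsys


def hsv_histogram(pixels, bins=(8, 4, 4)):
    """Concatenated per-channel HSV histograms, each normalized to sum to 1.

    pixels: iterable of (r, g, b) tuples with values in 0..255
    bins:   number of bins for hue, saturation, and value (assumed values)
    """
    h_bins, s_bins, v_bins = bins
    hist = [0.0] * (h_bins + s_bins + v_bins)
    pixels = list(pixels)
    for r, g, b in pixels:
        h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
        # Clamp so that a channel value of exactly 1.0 lands in the last bin.
        hist[min(int(h * h_bins), h_bins - 1)] += 1
        hist[h_bins + min(int(s * s_bins), s_bins - 1)] += 1
        hist[h_bins + s_bins + min(int(v * v_bins), v_bins - 1)] += 1
    n = len(pixels)
    return [x / n for x in hist] if n else hist
```

With the default bins the vector has 16 entries. It could then be concatenated with the local and global feature vectors before classification, as the abstract describes.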
