Yuta Umezu

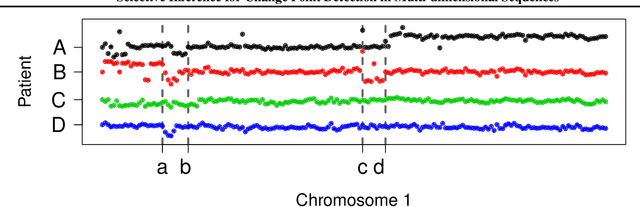

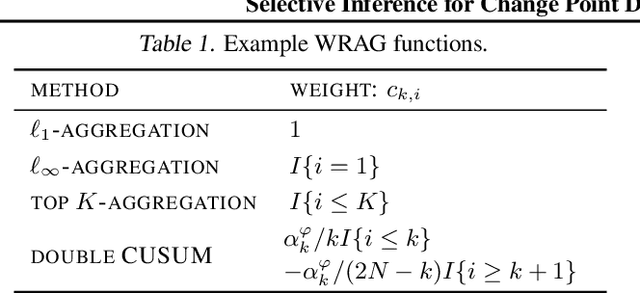

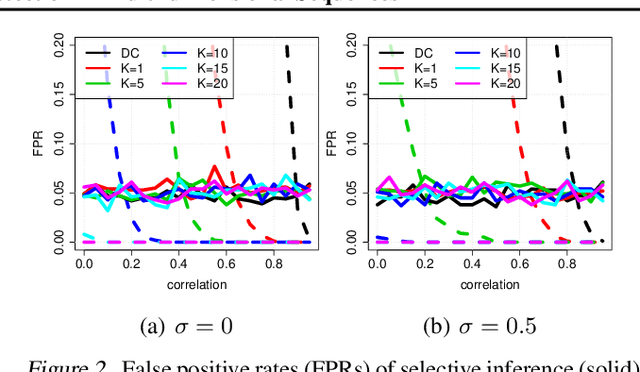

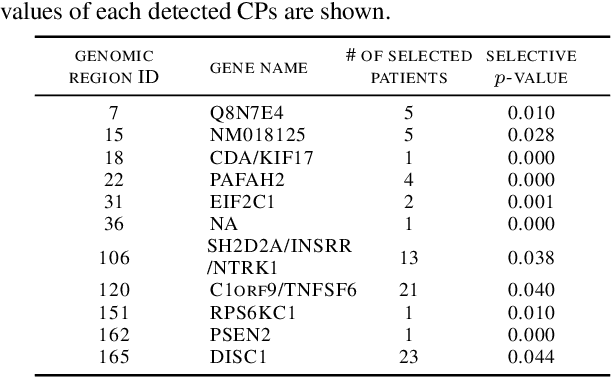

Selective Inference for Change Point Detection in Multi-dimensional Sequences

Mar 02, 2018

Abstract: We study the problem of detecting change points (CPs) that are characterized by a subset of dimensions in a multi-dimensional sequence. A method for detecting such CPs can be formulated as a two-stage method: one stage selects the relevant dimensions, and the other selects the CPs. It has been difficult to properly control the false detection probability of these CP detection methods because the selection bias in each stage must be properly corrected. Our main contribution in this paper is to formulate the CP detection problem as a selective inference problem and to show that exact (non-asymptotic) inference is possible for a class of CP detection methods. We demonstrate the performance of the proposed selective inference framework through numerical simulations and through its application to our motivating medical data analysis problem.
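To make the selection-bias problem concrete, here is a minimal Python sketch. It is not the paper's method: it assumes i.i.d. unit-variance Gaussian noise, uses a standardized CUSUM statistic as a hypothetical stand-in for the test statistic, selects one dimension and then one change point, and applies a naive z-test that ignores both selections. The function names are hypothetical. On pure-noise data the naive test reports a change point far more often than the nominal 5%, which is the error a selective inference procedure is designed to remove.

```python
import math

import numpy as np


def cusum(x):
    """Standardized CUSUM statistics for a single mean shift in a 1-D sequence.

    Entry t-1 compares the mean of x[:t] with the mean of x[t:], for t = 1..n-1.
    Assuming i.i.d. N(mu, 1) noise and no change point, each entry is N(0, 1).
    """
    n = len(x)
    t = np.arange(1, n)
    left_sum = np.cumsum(x)[:-1]
    left_mean = left_sum / t
    right_mean = (x.sum() - left_sum) / (n - t)
    # Var(left_mean - right_mean) = n / (t (n - t)), so rescale to unit variance.
    return np.sqrt(t * (n - t) / n) * (left_mean - right_mean)


def two_stage_select(X):
    """Hypothetical two-stage selection on X of shape (d, n).

    Stage 1: keep the dimension whose largest |CUSUM| is biggest.
    Stage 2: in that dimension, pick the split point with the largest |CUSUM|.
    Returns (dimension, change point t, selected statistic z).
    """
    stats = np.array([cusum(row) for row in X])  # shape (d, n - 1)
    dim = int(np.argmax(np.abs(stats).max(axis=1)))
    idx = int(np.argmax(np.abs(stats[dim])))
    return dim, idx + 1, float(stats[dim, idx])


def naive_p_value(z):
    """Two-sided N(0, 1) p-value that ignores how z was selected."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def naive_false_detection_rate(d=5, n=50, trials=500, alpha=0.05, seed=0):
    """Fraction of pure-noise data sets in which the naive test 'detects' a CP."""
    rng = np.random.default_rng(seed)
    hits = 0
    for _ in range(trials):
        X = rng.standard_normal((d, n))  # no change point in any dimension
        _, _, z = two_stage_select(X)
        hits += naive_p_value(z) < alpha
    return hits / trials
```

Calling `naive_false_detection_rate()` typically returns a value far above 0.05, because the reported statistic is the maximum over all dimensions and split points rather than a single pre-specified one.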

Post Selection Inference with Kernels

Oct 14, 2016

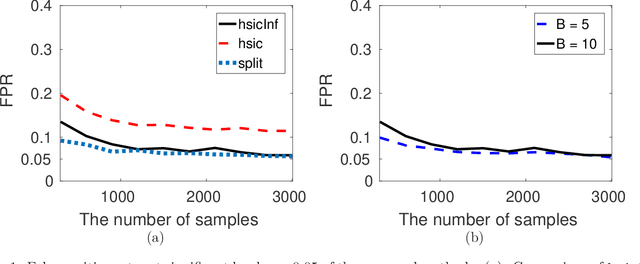

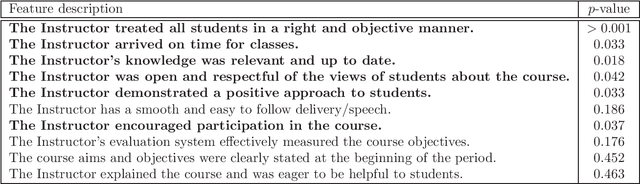

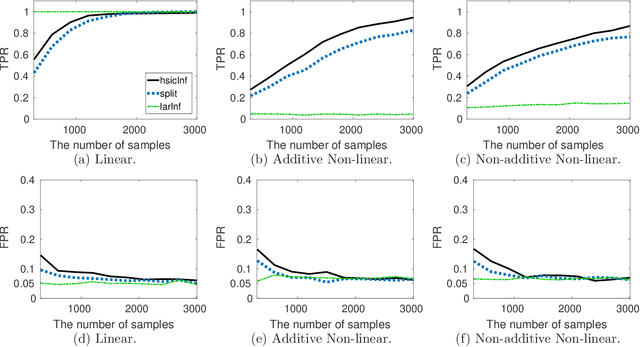

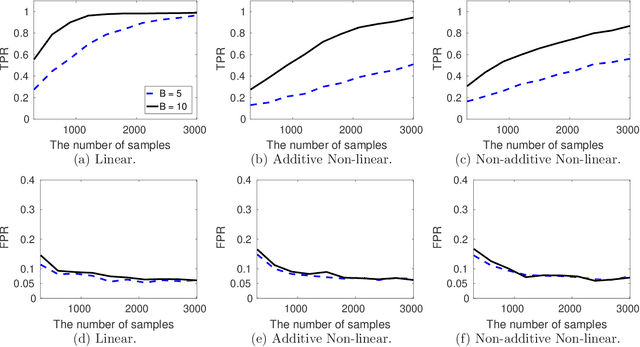

Abstract: We propose a novel kernel-based post-selection inference (PSI) algorithm that can handle not only non-linearity in data but also structured outputs such as multi-dimensional and multi-label outputs. Specifically, we develop a PSI algorithm for independence measures and propose a PSI algorithm based on the Hilbert-Schmidt Independence Criterion (HSIC), called hsicInf. The novelty of the proposed algorithm is that it handles non-linearity and/or structured data through kernels. Consequently, it can be used for a wider range of applications, including nonlinear multi-class classification and multivariate regression, which existing PSI algorithms cannot handle. Through synthetic experiments, we show that the proposed approach can find a set of statistically significant features for both regression and classification problems. Moreover, we apply hsicInf to real-world data and show that it successfully identifies important features.
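HSIC itself has a standard empirical estimator, and the selection step that PSI must correct for can be sketched on top of it. The following Python sketch assumes NumPy, Gaussian kernels with a fixed bandwidth of 1 (a data-driven choice such as the median heuristic is also common), and the biased estimator tr(KHLH)/(n-1)^2, where H is the centering matrix. The function names are hypothetical, and the sketch covers only HSIC-based feature ranking, not the post-selection p-values that hsicInf adds.

```python
import numpy as np


def gaussian_gram(x, sigma=1.0):
    """Gaussian (RBF) Gram matrix for samples in the rows of x (1-D input is treated as one column)."""
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    sq = np.sum(x**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * x @ x.T  # pairwise squared distances
    return np.exp(-np.maximum(d2, 0.0) / (2.0 * sigma**2))


def hsic(x, y, sigma_x=1.0, sigma_y=1.0):
    """Biased empirical HSIC: tr(K H L H) / (n - 1)^2 (always >= 0)."""
    n = len(x)
    K = gaussian_gram(x, sigma_x)
    L = gaussian_gram(y, sigma_y)
    H = np.eye(n) - np.ones((n, n)) / n  # centering matrix
    return float(np.trace(K @ H @ L @ H)) / (n - 1) ** 2


def rank_features_by_hsic(X, y, k):
    """Hypothetical selection step: the k columns of X with the largest HSIC with y."""
    scores = np.array([hsic(X[:, j], y) for j in range(X.shape[1])])
    selected = [int(j) for j in np.argsort(scores)[::-1][:k]]
    return selected, scores
```

For example, with `y = X[:, 0] ** 2 + noise` and symmetric `X[:, 0]`, the population Pearson correlation between feature 0 and `y` is zero, yet HSIC with Gaussian kernels still ranks feature 0 first. Testing the selected features naively would suffer from the same selection bias as in the change point setting, which is what a PSI procedure such as hsicInf is meant to correct.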