Yuejia He

Joint Association Graph Screening and Decomposition for Large-scale Linear Dynamical Systems

Nov 17, 2014

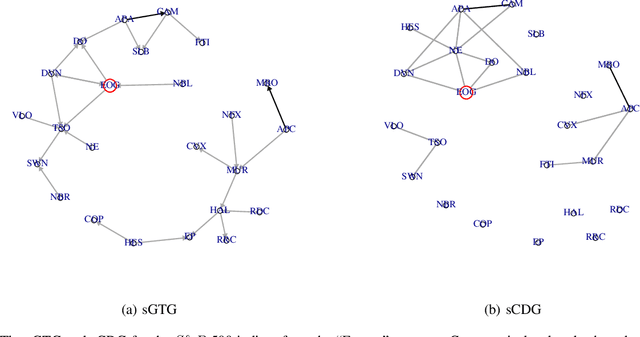

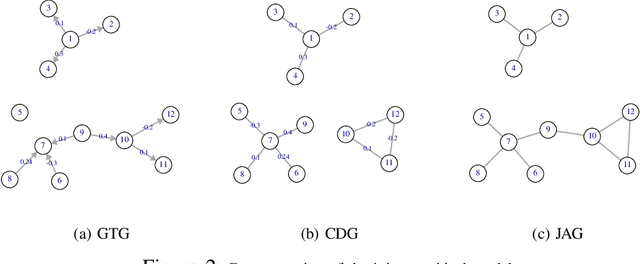

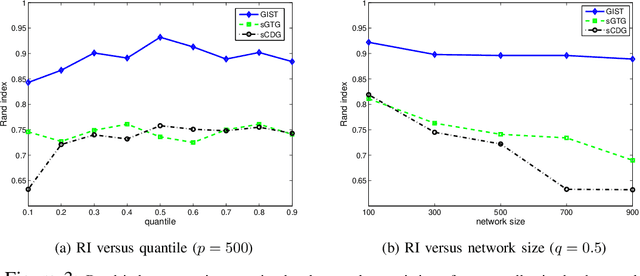

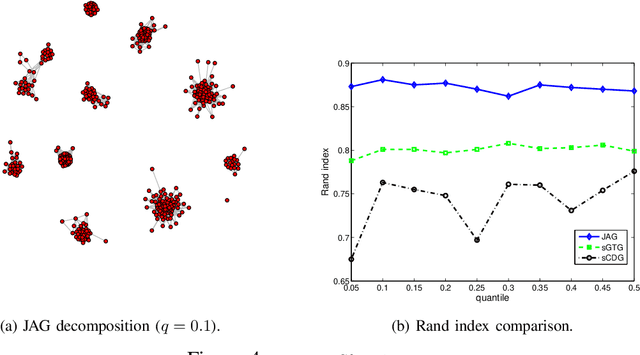

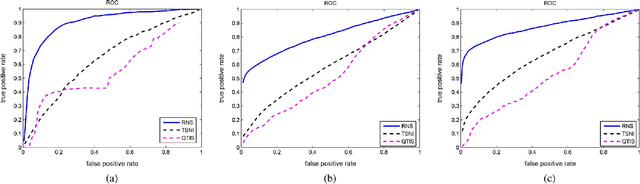

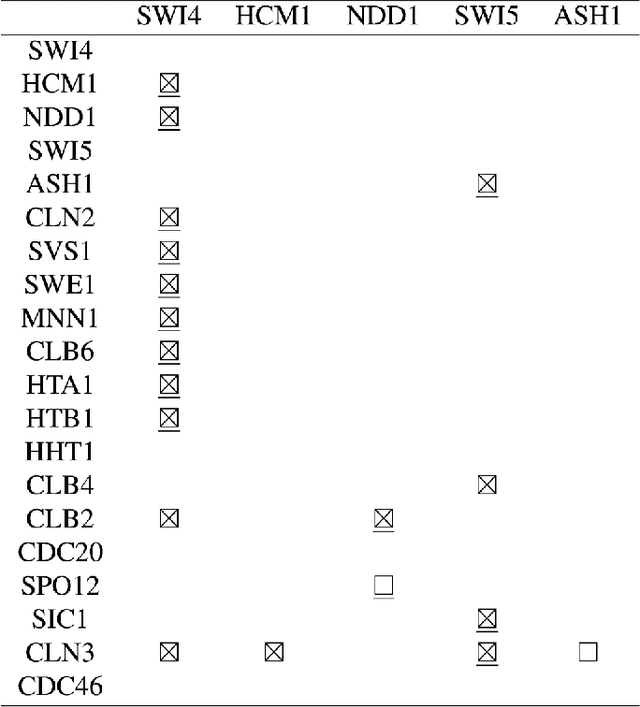

Abstract:This paper studies large-scale dynamical networks where the current state of the system is a linear transformation of the previous state, contaminated by a multivariate Gaussian noise. Examples include stock markets, human brains and gene regulatory networks. We introduce a transition matrix to describe the evolution, which can be translated to a directed Granger transition graph, and use the concentration matrix of the Gaussian noise to capture the second-order relations between nodes, which can be translated to an undirected conditional dependence graph. We propose regularizing the two graphs jointly in topology identification and dynamics estimation. Based on the notion of joint association graph (JAG), we develop a joint graphical screening and estimation (JGSE) framework for efficient network learning in big data. In particular, our method can pre-determine and remove unnecessary edges based on the joint graphical structure, referred to as JAG screening, and can decompose a large network into smaller subnetworks in a robust manner, referred to as JAG decomposition. JAG screening and decomposition can reduce the problem size and search space for fine estimation at a later stage. Experiments on both synthetic data and real-world applications show the effectiveness of the proposed framework in large-scale network topology identification and dynamics estimation.

Learning Topology and Dynamics of Large Recurrent Neural Networks

Oct 05, 2014

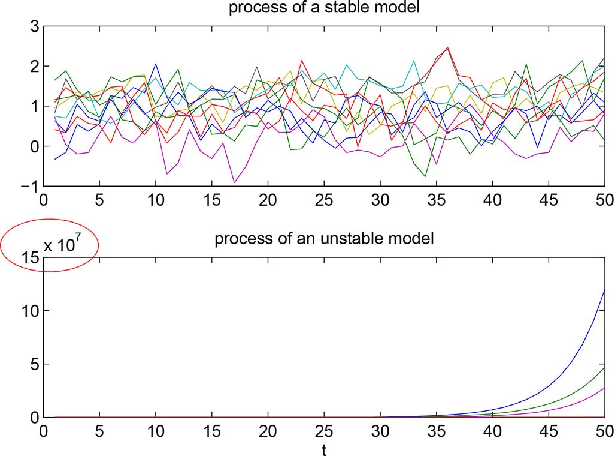

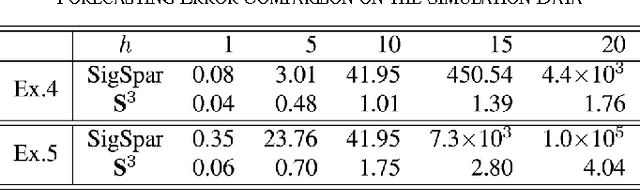

Abstract:Large-scale recurrent networks have drawn increasing attention recently because of their capabilities in modeling a large variety of real-world phenomena and physical mechanisms. This paper studies how to identify all authentic connections and estimate system parameters of a recurrent network, given a sequence of node observations. This task becomes extremely challenging in modern network applications, because the available observations are usually very noisy and limited, and the associated dynamical system is strongly nonlinear. By formulating the problem as multivariate sparse sigmoidal regression, we develop simple-to-implement network learning algorithms, with rigorous convergence guarantee in theory, for a variety of sparsity-promoting penalty forms. A quantile variant of progressive recurrent network screening is proposed for efficient computation and allows for direct cardinality control of network topology in estimation. Moreover, we investigate recurrent network stability conditions in Lyapunov's sense, and integrate such stability constraints into sparse network learning. Experiments show excellent performance of the proposed algorithms in network topology identification and forecasting.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge