Yasuno Takato

Road Surface Translation Under Snow-covered and Semantic Segmentation for Snow Hazard Index

Jan 23, 2021

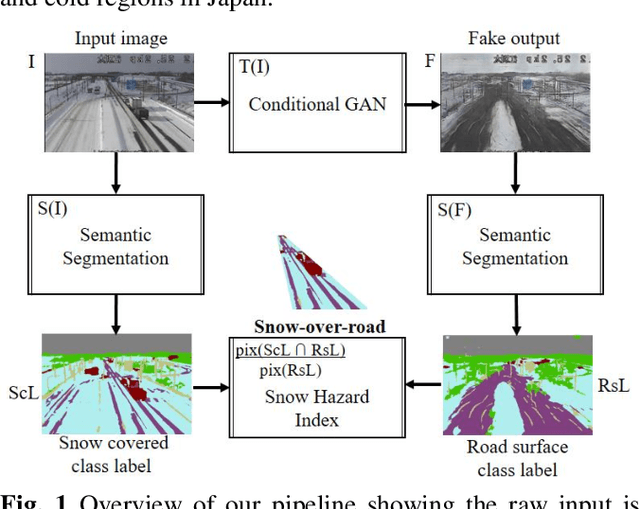

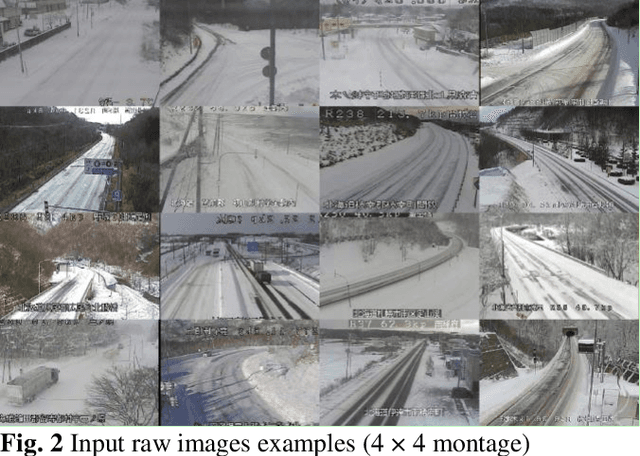

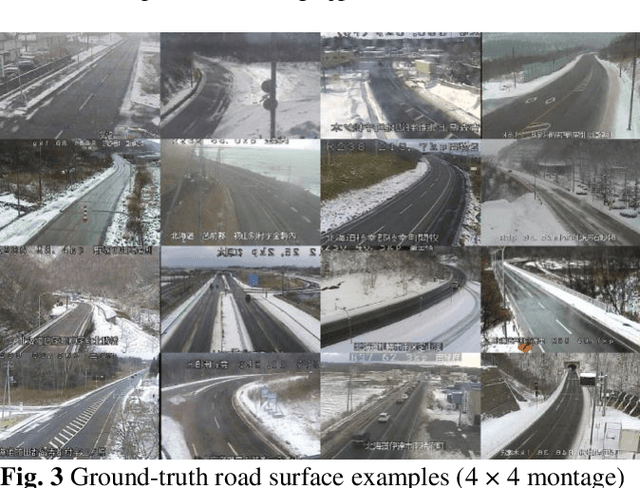

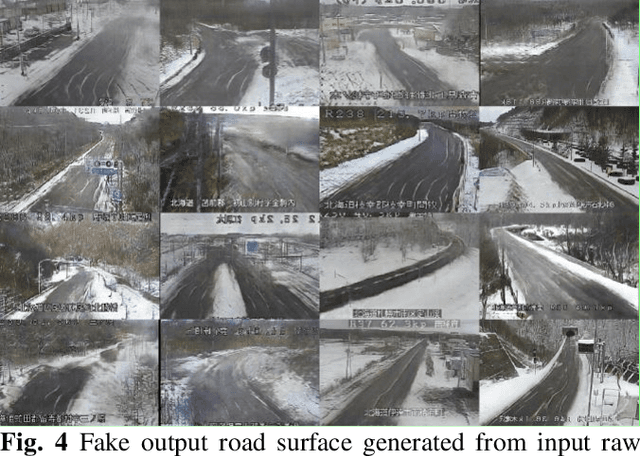

Abstract: In 2020, record heavy snowfall occurred owing to climate change. In one incident, 2,000 vehicles were stuck on a highway for three days. Because the road surface froze, 10 vehicles were involved in a chain-reaction collision. Road managers are required to provide indicators that alert drivers to snow cover at hazardous locations. This study proposes a deep learning application with live image post-processing to automatically calculate a snow hazard ratio indicator. First, the road surface hidden under snow is translated using a generative adversarial network, pix2pix. Second, the snow-covered and road surface classes are detected by semantic segmentation using DeepLabv3+ with MobileNet as a backbone. Based on these trained networks, we automatically compute the road-to-snow rate hazard index, which indicates the amount of snow covering the road surface. We demonstrate the results of applying the method to 1,155 live snow images from a cold region of Japan, and we discuss the usefulness and practical robustness of our study.
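The abstract does not state the exact formula for the hazard index. As a minimal sketch, assuming the index is the share of snow pixels within the road region (snow plus visible road) of a segmentation mask, it could be computed as follows. The class ids and the function name `snow_hazard_ratio` are hypothetical and not taken from the paper.

```python
# Hypothetical class ids for the segmentation output (not from the paper).
BACKGROUND, ROAD, SNOW = 0, 1, 2


def snow_hazard_ratio(mask):
    """Return snow / (snow + road) for a 2D mask of class ids.

    This definition is an assumption; the paper's exact index may differ.
    """
    snow = sum(1 for row in mask for value in row if value == SNOW)
    road = sum(1 for row in mask for value in row if value == ROAD)
    total = snow + road
    # If no road region was detected, report 0.0 instead of dividing by zero.
    return snow / total if total else 0.0


# Toy mask: 5 snow pixels and 3 visible-road pixels.
mask = [
    [0, 0, 0, 0],
    [2, 2, 1, 1],
    [2, 2, 2, 1],
]
print(snow_hazard_ratio(mask))
```

In a real pipeline the mask would come from the segmentation network's per-pixel class prediction rather than a hand-written list.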

Rain Code: Multi-Frame Based Forecasting Spatiotemporal Precipitation Using ConvLSTM

Oct 17, 2020

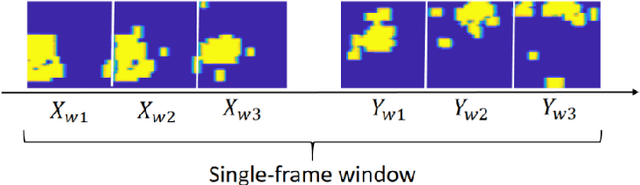

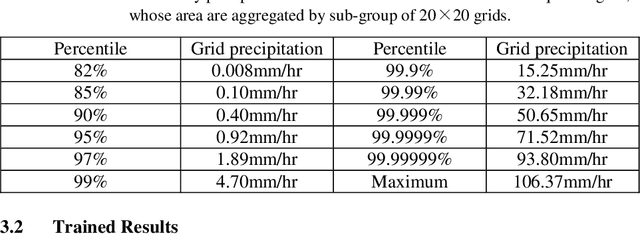

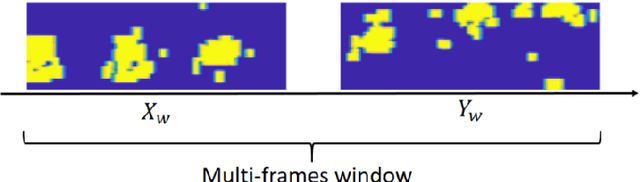

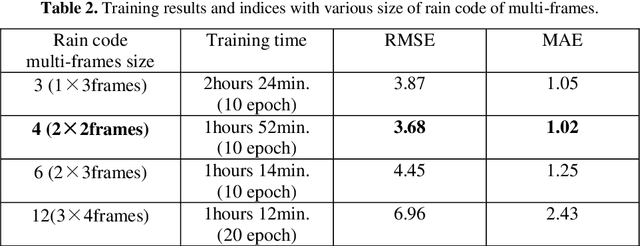

Abstract: Recently, flood damage has become a social problem owing to unprecedented weather conditions arising from climate change. An immediate response to heavy rain and high water levels is important for mitigating casualties and economic losses and for rapid recovery. Spatiotemporal precipitation forecasts may enhance the accuracy of dam inflow prediction more than 6 hours ahead, which supports flood damage mitigation. This paper proposes a rain-code approach for spatiotemporal precipitation forecasting. We propose a novel rainy feature that represents a temporal rainy process using multi-frame fusion for timestep reduction. We perform rain-code studies with various term ranges based on spatiotemporal precipitation forecasting using the standard ConvLSTM. We apply the approach to a dam region using hourly precipitation data for the Japanese rainy season, May to October of each year from 2006 to 2019, approximately 127 thousand hours. We use hourly radar analysis data for the surrounding wider region, an area of 136 × 148 km², based on the new data-fusion rain code with multi-frame sequences. Finally, we obtain some evidence of the capability to extend the forecasting range.
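The abstract does not describe how frames are fused. One way to read "multi-frame fusion for timestep reduction" is that each run of `k` consecutive hourly frames is grouped into a single multi-channel input, so a sequence of `T` hours becomes `T // k` timesteps. The sketch below illustrates only that reading; `fuse_frames` and the channel-stacking layout are assumptions, not the paper's rain code.

```python
def fuse_frames(frames, k):
    """Group every k consecutive frames into one fused timestep.

    frames: list of 2D grids (one per hour).
    Returns a list of fused steps, each a list of k grids (treated as channels).
    Trailing frames that do not fill a whole group are dropped.
    """
    if k <= 0:
        raise ValueError("k must be positive")
    usable = len(frames) - len(frames) % k
    return [frames[i:i + k] for i in range(0, usable, k)]


# Twelve hourly 1x1 frames fused three at a time give four timesteps.
hourly = [[[float(t)]] for t in range(12)]
fused = fuse_frames(hourly, 3)
print(len(hourly), "->", len(fused))
```

A shorter input sequence lets a recurrent model such as ConvLSTM cover the same number of hours with fewer recurrent steps.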

Per-pixel Classification Rebar Exposures in Bridge Eye-inspection

Apr 22, 2020

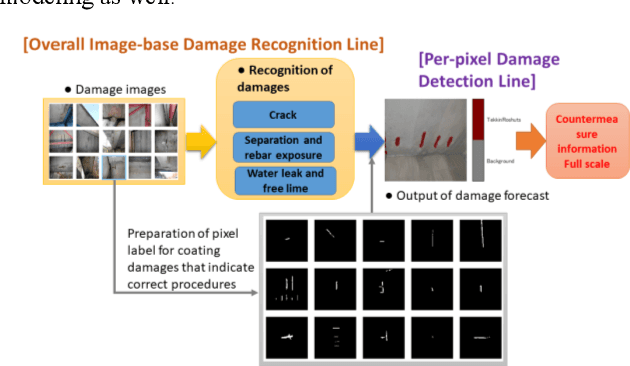

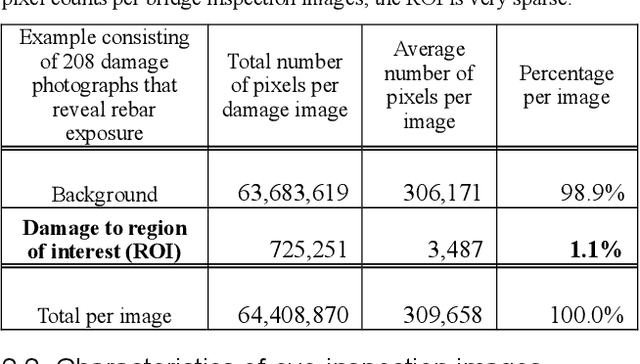

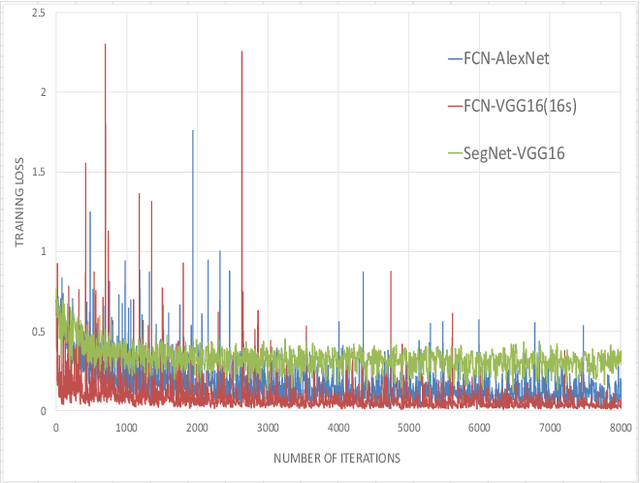

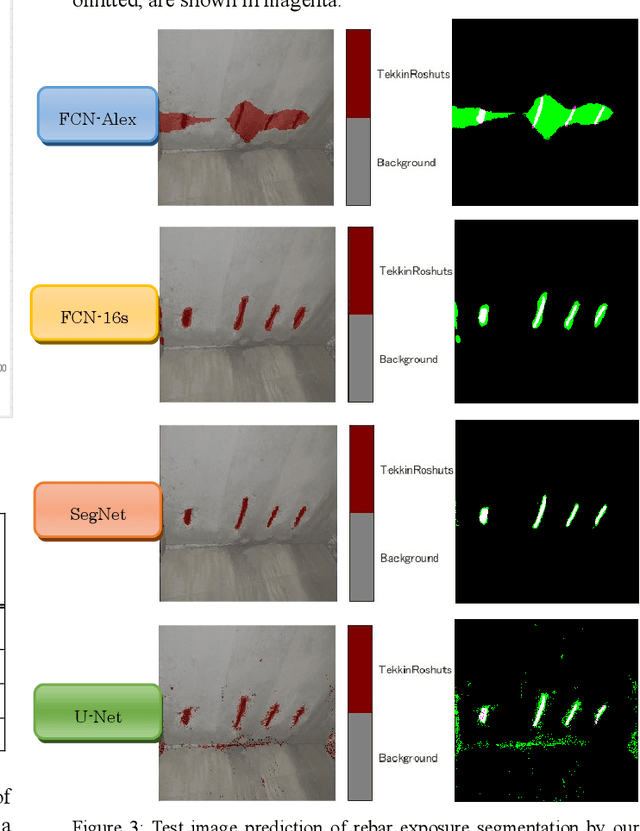

Abstract: Efficient inspection and accurate diagnosis are required for civil infrastructure that is 50 years past completion. In municipalities especially, the shortage of technical staff and budget constraints on repair expenses have become a critical problem. If damage can be detected automatically per pixel in the photos of inspection records, in addition to the 5-step judgment and countermeasure classification of visual inspection, then countermeasure information can be provided more flexibly: whether repair is needed, and how large the exposed damage of interest is. Unless a photo is zoomed in on the damage, the damage in it is often sparse; the region where the detection target is actually photographed is at most only 1%. Rebar exposure occurs frequently, and there are many opportunities to judge the repair measure. In this paper, we propose three transfer-learning damage detection methods that enable semantic segmentation in images with few pixels, using damage photos from human visual inspection. We also tried building a deep convolutional network from scratch, with preprocessing that generates random crops with rotations. We show the results of applying these methods to 208 rebar-exposure images from 106 real-world bridges. Finally, future tasks for damage detection modeling are mentioned.
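The preprocessing of random crops with rotations can be sketched as follows. The abstract gives no crop size or rotation angles, so this minimal example assumes square patches and rotations by multiples of 90 degrees; the function names are hypothetical.

```python
import random


def rotate90(patch):
    """Rotate a 2D list clockwise by 90 degrees."""
    return [list(row) for row in zip(*patch[::-1])]


def random_crop_with_rotation(image, size, rng):
    """Cut a random size x size patch and rotate it by 0, 90, 180, or 270 degrees."""
    height, width = len(image), len(image[0])
    top = rng.randrange(height - size + 1)
    left = rng.randrange(width - size + 1)
    patch = [row[left:left + size] for row in image[top:top + size]]
    for _ in range(rng.randrange(4)):
        patch = rotate90(patch)
    return patch


# Toy 6x6 "image" whose pixel values encode their own position.
image = [[r * 6 + c for c in range(6)] for r in range(6)]
patch = random_crop_with_rotation(image, 3, random.Random(0))
print(patch)
```

For segmentation training, the same crop window and rotation would have to be applied to the label mask so that pixels and labels stay aligned.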

Disaster Feature Classification on Aerial Photography to Explain Typhoon Damaged Region using Grad-CAM

Apr 21, 2020

Abstract: In recent years, typhoon damage has become a social problem owing to climate change. In particular, on 9 September 2019, Typhoon Faxai passed over southern Chiba Prefecture in Japan, causing damage that included power and water outages and broken house roofs because of strong winds recorded at a maximum of 45 meters per second. A large number of trees fell, and neighboring utility poles fell at the same time. Because of these disaster features, recovery took eighteen days, longer than for past typhoons. Initial responses are important for faster recovery. Where possible, an aerial survey for global screening of the devastated region is needed as decision support for choosing where to recover first. This paper proposes a practical method to visualize damaged areas, focused on typhoon disaster features, using aerial photography. The method classifies eight classes, comprising undamaged land covers and disaster-affected areas; each aerial photograph is partitioned into 4,096 grid cells (64 by 64), with each unit image covering a 48-meter square. Using the probabilities of the target feature class, we can visualize a disaster feature map with a color scale ranging from blue to red or yellow. Furthermore, we can map disaster features within each unit grid image by computing the convolutional activation map using Grad-CAM, based on the deep neural network layers used for classification. This paper demonstrates case studies applied to aerial photographs recorded in southern Chiba Prefecture in Japan after the typhoon disaster.
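The grid partition can be illustrated with a short sketch. A 64 by 64 grid gives 64 × 64 = 4,096 cells, and with 48-meter cells one photograph spans 64 × 48 m = 3,072 m per side. The example below assumes, purely for illustration, a 3,072-pixel square image (1 meter per pixel); the helper name `grid_tiles` is hypothetical.

```python
def grid_tiles(width, height, n=64):
    """Return (left, top, right, bottom) pixel boxes for an n x n grid, row by row."""
    tiles = []
    for row in range(n):
        for col in range(n):
            left = col * width // n
            top = row * height // n
            right = (col + 1) * width // n
            bottom = (row + 1) * height // n
            tiles.append((left, top, right, bottom))
    return tiles


# Assumed image size: 3,072 x 3,072 pixels, so each cell is 48 x 48 pixels.
tiles = grid_tiles(3072, 3072)
print(len(tiles), tiles[0], tiles[-1])
```

Each box would be cropped and passed to the classifier, and the predicted probability of the target class for that cell would then drive the color of the map at that position.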

Synthetic Augmentation pix2pix using Tri-category Label with Edge structure for Accurate Segmentation architectures

Apr 21, 2020

Abstract: In medical image diagnosis, pathology image analysis using semantic segmentation is becoming important for efficient screening in the field of digital pathology. Spatial augmentation is ordinarily used for semantic segmentation. Images of malignant tumors are rare, and annotating the labels of the nuclei regions is a time-consuming process. Effective use of the data set is required to maximize segmentation accuracy. Augmentation that generates generalized images is expected to influence segmentation performance. We propose a synthetic augmentation using label-to-image translation, mapping from a semantic label with an edge structure to a real image. This paper deals with stained slides of tumor nuclei. We demonstrate several segmentation algorithms applied to the initial data set of real images and labels, using synthetic augmentation to add generalized images. We compute accuracy indices and report that the proposed synthetic augmentation procedure improves them.
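The abstract does not define the three label categories. As a minimal sketch, assuming they are background, nucleus interior, and nucleus edge, a tri-category label could be derived from a binary nuclei mask by marking every foreground pixel that touches background as edge. The names and the 4-neighbour rule below are illustrative assumptions.

```python
# Assumed categories; the paper's actual label definition may differ.
BACKGROUND, NUCLEUS, EDGE = 0, 1, 2


def tri_category_label(binary_mask):
    """Convert a binary nuclei mask into background / nucleus / edge labels.

    A foreground pixel is an edge pixel if any of its 4-neighbours inside
    the image is background.
    """
    height, width = len(binary_mask), len(binary_mask[0])
    label = [[BACKGROUND] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            if not binary_mask[y][x]:
                continue
            neighbours = ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1))
            on_edge = any(
                0 <= ny < height and 0 <= nx < width and not binary_mask[ny][nx]
                for ny, nx in neighbours
            )
            label[y][x] = EDGE if on_edge else NUCLEUS
    return label


# Toy mask: one 3x3 nucleus in a 5x5 image.
nuclei = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
for row in tri_category_label(nuclei):
    print(row)
```

A label of this kind would serve as the input side of the label-to-image translation, with the real stained image as the target.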
