Xiliang Luo

Online optimal task offloading with one-bit feedback

Jul 02, 2018

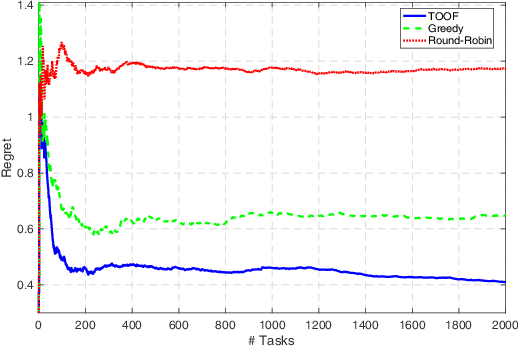

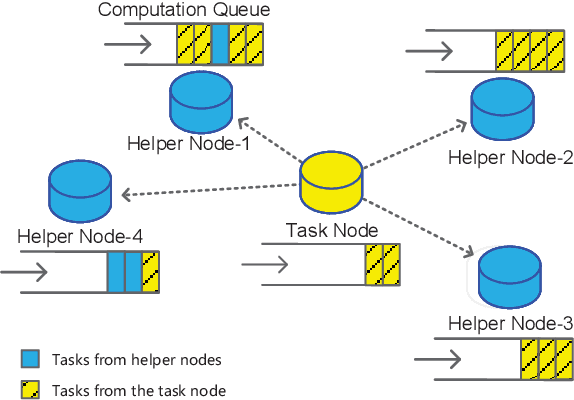

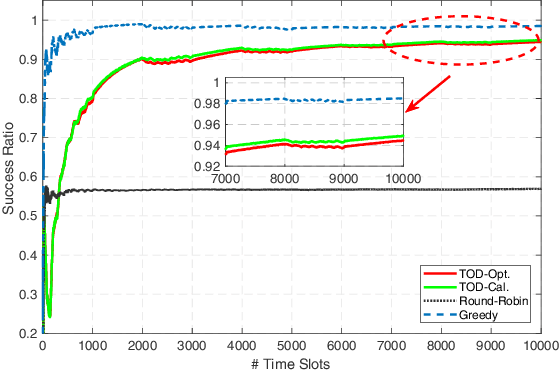

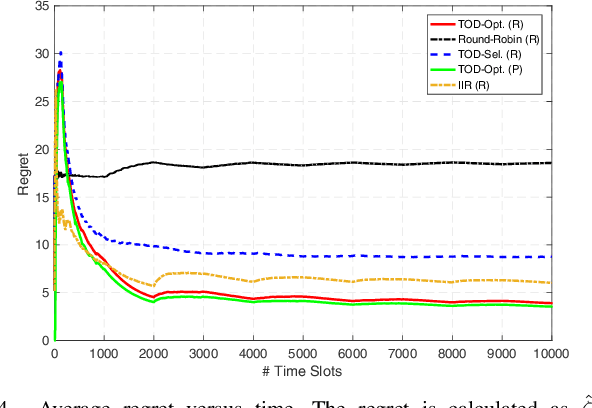

Abstract: Task offloading is an emerging technology in fog-enabled networks. It allows users to transmit tasks to neighboring fog nodes so as to utilize the computing resources of the network. In this paper, we investigate a stochastic task offloading model and formulate it within a multi-armed bandit framework. We account for the fact that different helper nodes prefer different kinds of tasks. Further, we assume each helper node feeds back just one bit of information to the task node to indicate its level of happiness. The key challenge of this problem lies in the exploration-exploitation tradeoff. We thus implement a UCB-type algorithm to maximize the long-term happiness metric. Numerical simulations are given at the end of the paper to corroborate our strategy.
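To make the exploration-exploitation tradeoff concrete, here is a minimal sketch of the textbook UCB1 rule applied to one-bit feedback. It is not the algorithm from the paper: it ignores task types, and the names `ucb1_offload` and `happy_probs` as well as the Bernoulli "happy" probabilities are assumptions made purely for illustration.

```python
import math
import random


def ucb1_offload(happy_probs, rounds, seed=0):
    """Simulate UCB1 over helper nodes that each return one bit per task.

    happy_probs[k] is the (hypothetical) probability that helper node k
    reports "happy" (bit = 1). The task node does not know these values.
    Returns (pull counts per node, total number of happy bits received).
    """
    rng = random.Random(seed)
    n = len(happy_probs)
    counts = [0] * n    # tasks sent to each node so far
    sums = [0.0] * n    # happy bits received from each node so far
    total = 0
    for t in range(1, rounds + 1):
        if t <= n:
            # Initialization: offload one task to every node once.
            k = t - 1
        else:
            # Pick the node with the largest upper confidence bound:
            # empirical mean plus an exploration bonus that shrinks
            # as the node is sampled more often.
            k = max(
                range(n),
                key=lambda i: sums[i] / counts[i]
                + math.sqrt(2 * math.log(t) / counts[i]),
            )
        bit = 1 if rng.random() < happy_probs[k] else 0  # one-bit feedback
        counts[k] += 1
        sums[k] += bit
        total += bit
    return counts, total
```

Calling `ucb1_offload([0.2, 0.5, 0.9], 5000)` sends most tasks to the third node once its confidence bound dominates, while the other two nodes are still sampled occasionally.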

Learn and Pick Right Nodes to Offload

Apr 24, 2018

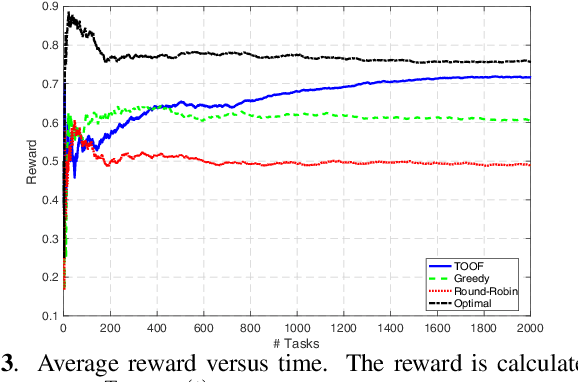

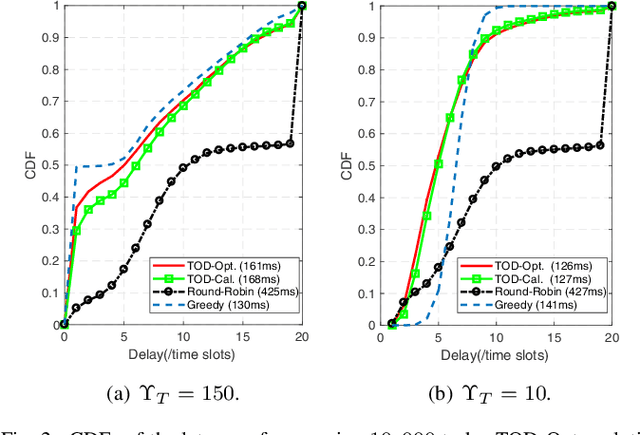

Abstract: Task offloading is a promising technology to exploit the benefits of fog computing. An effective task offloading strategy is needed to utilize the computational resources efficiently. In this paper, we seek an online task offloading strategy that minimizes the long-term latency. In particular, we formulate a stochastic programming problem in which the expectations of the system parameters change abruptly at unknown time instants. Meanwhile, we consider the fact that the queried nodes can only feed back the processing results after finishing the tasks. We then put forward an effective algorithm to solve this challenging stochastic programming problem under the non-stationary bandit model. We further prove that our proposed algorithm is asymptotically optimal in a non-stationary fog-enabled network. Numerical simulations are carried out to corroborate our designs.
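One standard way to cope with abrupt changes in a bandit setting is a sliding-window UCB rule, which forgets observations older than a fixed window. The sketch below illustrates that generic technique only; it is not the algorithm proposed in the paper, and it simplifies the setting by assuming feedback arrives immediately. The function name `sw_ucb_offload`, the callback `latency_means`, the window length, and the noise model are all hypothetical.

```python
import math
import random
from collections import deque


def sw_ucb_offload(latency_means, rounds, window=200, seed=0):
    """Sliding-window UCB over fog nodes with time-varying latency.

    latency_means(t) returns the list of mean latencies (in [0, 1]) of
    all nodes at round t; these are unknown to the learner. The reward
    of a round is 1 - observed latency. Returns the chosen node per round.
    """
    rng = random.Random(seed)
    n = len(latency_means(0))
    history = deque()  # (node, reward) pairs from the last `window` rounds
    choices = []
    for t in range(rounds):
        # Recompute statistics from the window only, so stale data
        # from before a change point eventually drops out.
        counts = [0] * n
        sums = [0.0] * n
        for node, reward in history:
            counts[node] += 1
            sums[node] += reward
        untried = [i for i in range(n) if counts[i] == 0]
        if untried:
            # A node with no sample inside the window is queried again.
            k = untried[0]
        else:
            horizon = len(history)
            k = max(
                range(n),
                key=lambda i: sums[i] / counts[i]
                + math.sqrt(2 * math.log(horizon) / counts[i]),
            )
        # Assumed noise model: mean latency plus bounded uniform noise.
        noisy = latency_means(t)[k] + rng.uniform(-0.1, 0.1)
        latency = min(1.0, max(0.0, noisy))
        history.append((k, 1.0 - latency))
        if len(history) > window:
            history.popleft()
        choices.append(k)
    return choices
```

With two nodes whose mean latencies swap halfway through the run, the selections shift from the first node to the second within roughly one window after the change; a shorter window adapts faster at the cost of more exploration in stable periods.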