Xiaozhao Zhao

A Confident Information First Principle for Parametric Reduction and Model Selection of Boltzmann Machines

Feb 05, 2015

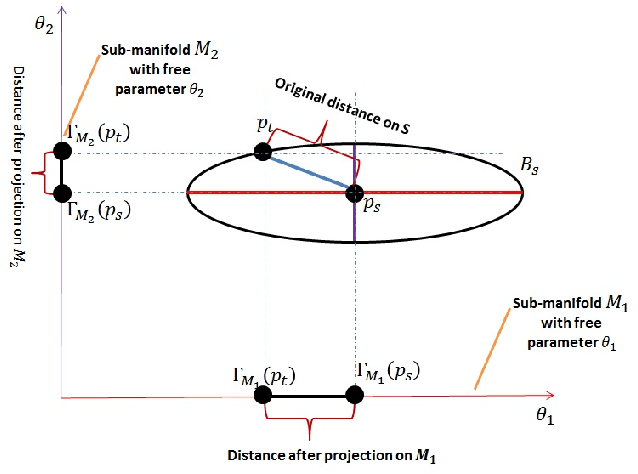

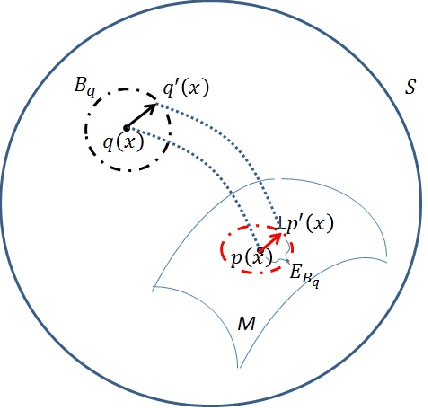

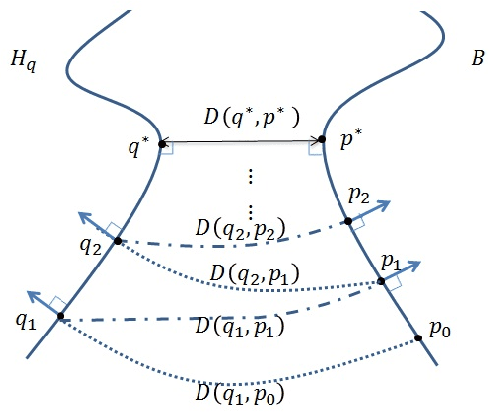

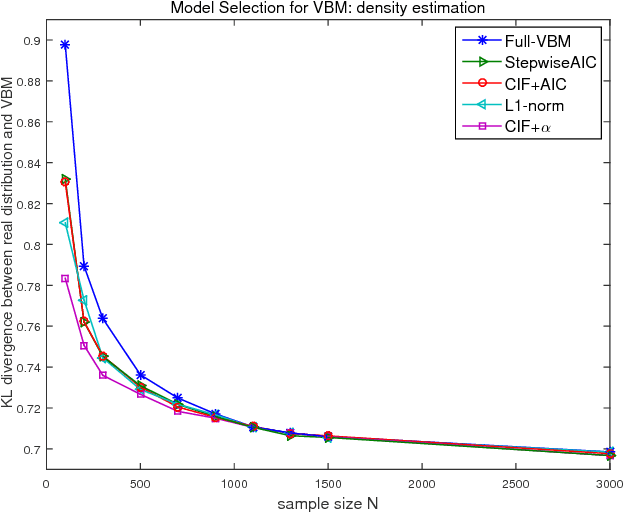

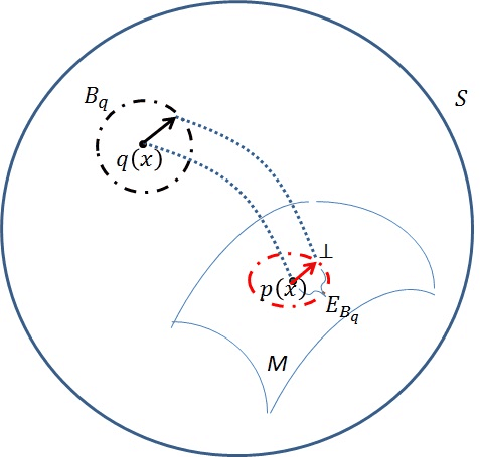

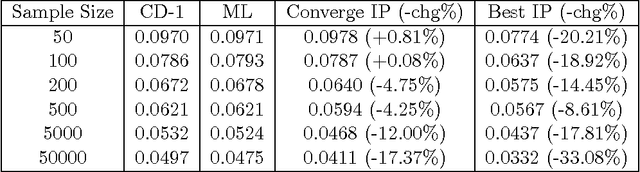

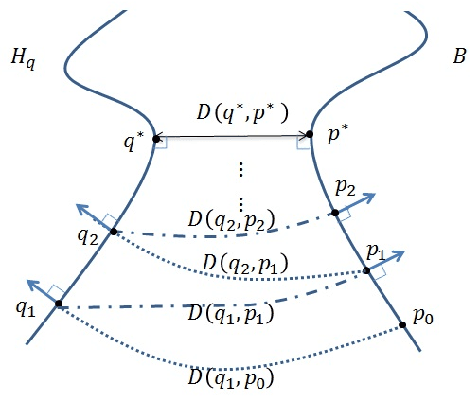

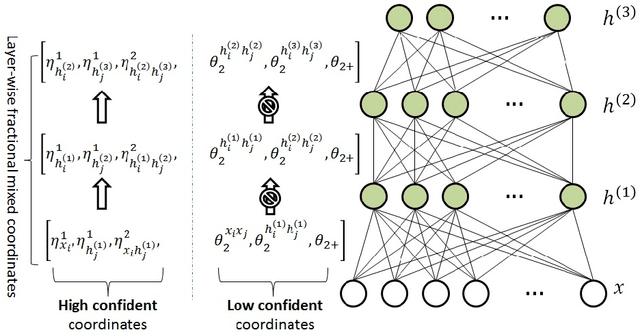

Abstract: Typical dimensionality reduction (DR) methods are often data-oriented, focusing on directly reducing the number of random variables (features) while retaining the maximal variations in the high-dimensional data. In unsupervised situations, one of the main limitations of these methods lies in their dependency on the scale of data features. This paper aims to address the problem from a new perspective and considers model-oriented dimensionality reduction in parameter spaces of binary multivariate distributions. Specifically, we propose a general parameter reduction criterion, called the Confident-Information-First (CIF) principle, to maximally preserve confident parameters and rule out less confident parameters. Formally, the confidence of each parameter can be assessed by its contribution to the expected Fisher information distance within the geometric manifold over the neighbourhood of the underlying real distribution. We then revisit Boltzmann machines (BM) from a model selection perspective and theoretically show that both the fully visible BM (VBM) and the BM with hidden units can be derived from the general binary multivariate distribution using the CIF principle. This can help us uncover and formalize the essential parts of the target density that BM aims to capture and the non-essential parts that BM should discard. Guided by the theoretical analysis, we develop a sample-specific CIF for model selection of BM that is adaptive to the observed samples. The method is studied in a series of density estimation experiments and is shown to be effective in terms of estimation accuracy.

Understanding Boltzmann Machine and Deep Learning via A Confident Information First Principle

Oct 09, 2013

Abstract: Typical dimensionality reduction methods focus on directly reducing the number of random variables while retaining maximal variations in the data. In this paper, we consider dimensionality reduction in parameter spaces of binary multivariate distributions. We propose a general Confident-Information-First (CIF) principle to maximally preserve parameters with confident estimates and rule out unreliable or noisy parameters. Formally, the confidence of a parameter can be assessed by its Fisher information, which establishes a connection with the inverse variance of any unbiased estimate for the parameter via the Cramér-Rao bound. We then revisit Boltzmann machines (BM) and theoretically show that both the single-layer BM without hidden units (SBM) and the restricted BM (RBM) can be solidly derived using the CIF principle. This not only helps us uncover and formalize the essential parts of the target density that SBM and RBM capture, but also suggests that a deep neural network consisting of several layers of RBM can be seen as the layer-wise application of CIF. Guided by the theoretical analysis, we develop a sample-specific CIF-based contrastive divergence (CD-CIF) algorithm for SBM and a CIF-based iterative projection procedure (IP) for RBM. Both CD-CIF and IP are studied in a series of density estimation experiments.
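The link between Fisher information and estimator variance mentioned in the abstract can be illustrated with a minimal sketch. This is not the paper's CIF procedure: it assumes the simplest possible case, a single Bernoulli parameter `p`, and the function names (`fisher_information`, `cramer_rao_bound`, `empirical_variance_of_mean`) are hypothetical, chosen only for this illustration.

```python
import random


def fisher_information(p: float) -> float:
    """Fisher information of one Bernoulli(p) observation: 1 / (p * (1 - p))."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    return 1.0 / (p * (1.0 - p))


def cramer_rao_bound(p: float, n: int) -> float:
    """Cramer-Rao lower bound on the variance of any unbiased
    estimator of p built from n i.i.d. Bernoulli(p) samples."""
    return 1.0 / (n * fisher_information(p))


def empirical_variance_of_mean(p: float, n: int, trials: int, seed: int = 0) -> float:
    """Sample variance of the sample-mean estimator over repeated experiments.

    Illustrative assumption: the sample mean is used as the unbiased estimator.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        # Draw n Bernoulli(p) samples and average them.
        successes = sum(rng.random() < p for _ in range(n))
        estimates.append(successes / n)
    mean = sum(estimates) / trials
    return sum((e - mean) ** 2 for e in estimates) / (trials - 1)


if __name__ == "__main__":
    for p in (0.5, 0.1):
        bound = cramer_rao_bound(p, n=100)
        observed = empirical_variance_of_mean(p, n=100, trials=2000)
        print(f"p={p}: Fisher info={fisher_information(p):.2f}, "
              f"bound={bound:.5f}, observed variance={observed:.5f}")
```

In this toy setting, a larger Fisher information gives a smaller variance bound, which is the sense in which a parameter with high Fisher information can be estimated more confidently from the same number of samples.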
