Wolfgang Maass

Pattern representation and recognition with accelerated analog neuromorphic systems

Jul 03, 2017

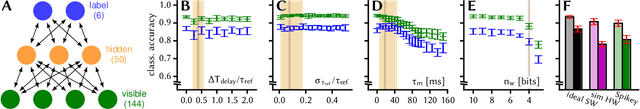

Abstract: Despite being originally inspired by the central nervous system, artificial neural networks have diverged from their biological archetypes as they have been remodeled to fit particular tasks. In this paper, we review several possibilities to reverse-map these architectures to biologically more realistic spiking networks with the aim of emulating them on fast, low-power neuromorphic hardware. Since many of these devices employ analog components, which cannot be perfectly controlled, finding ways to compensate for the resulting effects represents a key challenge. Here, we discuss three different strategies to address this problem: the addition of auxiliary network components for stabilizing activity, the utilization of inherently robust architectures, and a training method for hardware-emulated networks that functions without perfect knowledge of the system's dynamics and parameters. For all three scenarios, we corroborate our theoretical considerations with experimental results on accelerated analog neuromorphic platforms.

* accepted at ISCAS 2017

Neuromorphic Hardware In The Loop: Training a Deep Spiking Network on the BrainScaleS Wafer-Scale System

Mar 06, 2017

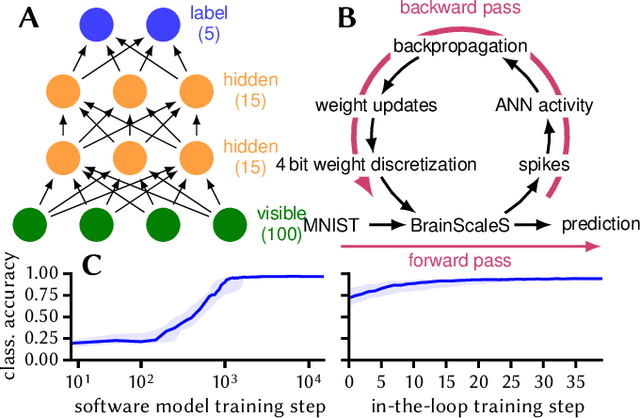

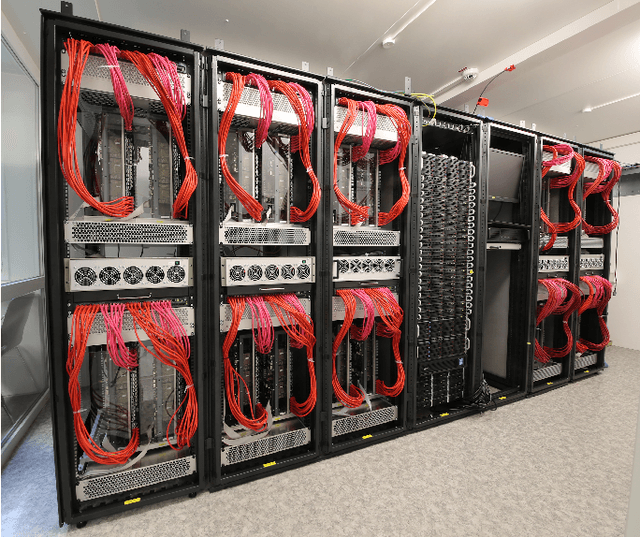

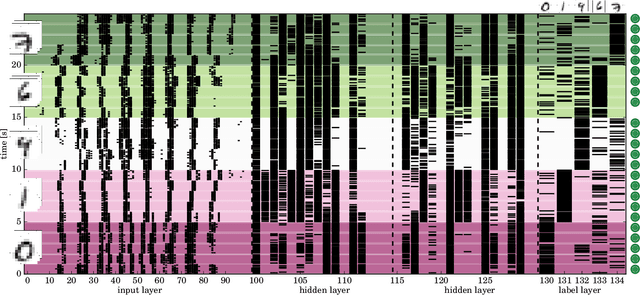

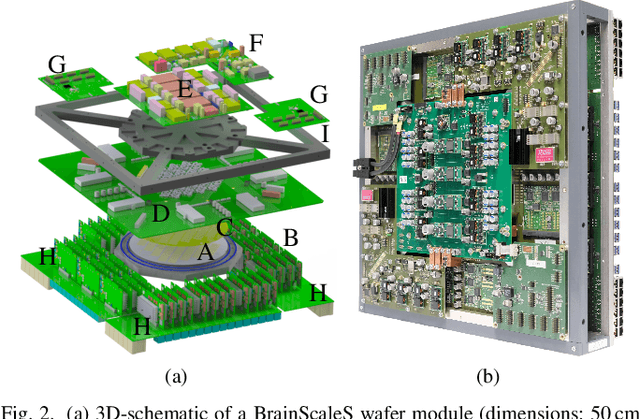

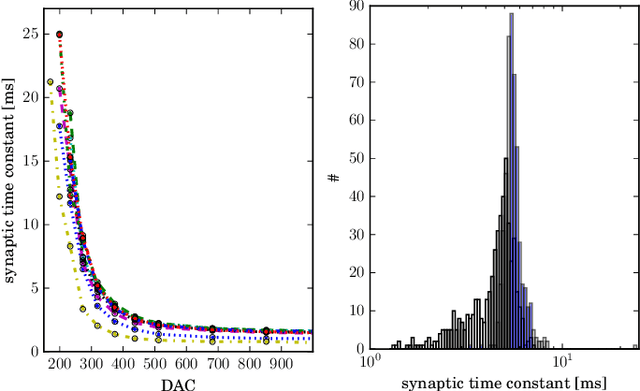

Abstract: Emulating spiking neural networks on analog neuromorphic hardware offers several advantages over simulating them on conventional computers, particularly in terms of speed and energy consumption. However, this usually comes at the cost of reduced control over the dynamics of the emulated networks. In this paper, we demonstrate how iterative training of a hardware-emulated network can compensate for anomalies induced by the analog substrate. We first convert a deep neural network trained in software to a spiking network on the BrainScaleS wafer-scale neuromorphic system, thereby enabling an acceleration factor of 10 000 compared to the biological time domain. This mapping is followed by the in-the-loop training, where in each training step, the network activity is first recorded in hardware and then used to compute the parameter updates in software via backpropagation. An essential finding is that the parameter updates do not have to be precise, but only need to approximately follow the correct gradient, which simplifies the computation of updates. Using this approach, after only several tens of iterations, the spiking network shows an accuracy close to the ideal software-emulated prototype. The presented techniques show that deep spiking networks emulated on analog neuromorphic devices can attain good computational performance despite the inherent variations of the analog substrate.
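
The in-the-loop idea described in this abstract can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: a small rate-based network stands in for the spiking network, `hardware_forward` is a hypothetical stand-in for the analog substrate (fixed per-synapse gain errors plus trial-to-trial noise), the weights start from a random initialization rather than from a software-trained network, and none of the names correspond to the BrainScaleS software interface. The point it shows is that the software-side update uses the recorded activity but knows nothing about the distortions, so it only approximately follows the true gradient.

```python
import numpy as np

def train_in_the_loop(steps=50, lr=0.3, seed=0):
    """Toy in-the-loop training; returns the loss recorded at each step."""
    rng = np.random.default_rng(seed)
    n, hidden = 200, 8

    # Toy task: two Gaussian blobs in 2-D with labels 0 and 1.
    X = np.vstack([rng.normal(-1.0, 0.5, (n // 2, 2)),
                   rng.normal(+1.0, 0.5, (n // 2, 2))])
    y = np.concatenate([np.zeros(n // 2), np.ones(n // 2)])

    # Hypothetical substrate imperfections, unknown to the trainer:
    # each configured weight is scaled by a fixed random gain.
    gain1 = rng.normal(1.0, 0.3, (2, hidden))
    gain2 = rng.normal(1.0, 0.3, (hidden, 1))

    def hardware_forward(W1, W2):
        # Forward pass "on hardware": distorted weights and noisy units.
        noise = rng.normal(0.0, 0.05, (n, hidden))
        h = np.maximum(0.0, X @ (W1 * gain1) + noise)
        p = 1.0 / (1.0 + np.exp(-(h @ (W2 * gain2))))
        return h, p[:, 0]

    W1 = rng.normal(0.0, 0.5, (2, hidden))
    W2 = rng.normal(0.0, 0.5, (hidden, 1))
    losses = []
    for _ in range(steps):
        # 1) Record the network activity from the (simulated) hardware.
        h, p = hardware_forward(W1, W2)
        p_c = np.clip(p, 1e-9, 1 - 1e-9)
        losses.append(float(-np.mean(y * np.log(p_c)
                                     + (1 - y) * np.log(1 - p_c))))

        # 2) Backpropagate in software using the recorded activity and
        #    the ideal weights; the gains are ignored, so the update is
        #    only an approximation of the true gradient.
        d_out = (p - y)[:, None] / n
        grad_W2 = h.T @ d_out
        d_h = (d_out @ W2.T) * (h > 0)
        grad_W1 = X.T @ d_h

        # 3) Write the updated parameters back for the next run.
        W1 -= lr * grad_W1
        W2 -= lr * grad_W2
    return losses

losses = train_in_the_loop()
print(f"loss at first step: {losses[0]:.3f}, at last step: {losses[-1]:.3f}")
```

In this toy setting the update still points downhill because the ignored gains are positive scale factors, which is one simple way an imprecise update can remain useful.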

Network Plasticity as Bayesian Inference

Apr 20, 2015

Abstract: General results from statistical learning theory suggest understanding not only brain computations, but also brain plasticity, as probabilistic inference. But a model for that has been missing. We propose that inherently stochastic features of synaptic plasticity and spine motility enable cortical networks of neurons to carry out probabilistic inference by sampling from a posterior distribution of network configurations. This model provides a viable alternative to existing models that propose convergence of parameters to maximum likelihood values. It explains how priors on weight distributions and connection probabilities can be merged optimally with learned experience, how cortical networks can generalize learned information so well to novel experiences, and how they can compensate continuously for unforeseen disturbances of the network. The resulting new theory of network plasticity explains from a functional perspective a number of experimental data on stochastic aspects of synaptic plasticity that previously appeared to be quite puzzling.
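
The contrast between sampling from a posterior and converging to a maximum likelihood value can be made concrete with a small sketch. It is not the model from the paper: the abstract does not state an update rule, so discretized Langevin dynamics is used here as a generic, assumed stand-in for a noisy parameter update, and the single parameter `theta`, the Gaussian prior, and the Gaussian likelihood are all hypothetical choices made so that the exact posterior is known.

```python
import numpy as np

def langevin_posterior_samples(data, steps=20000, eta=0.005, seed=0):
    """Sample theta from p(theta | data) with a noisy gradient update.

    Assumed toy model: prior theta ~ N(0, 1), likelihood x ~ N(theta, 1).
    """
    rng = np.random.default_rng(seed)
    theta = 0.0
    samples = np.empty(steps)
    for t in range(steps):
        grad_log_prior = -theta                   # d/dtheta log N(theta; 0, 1)
        grad_log_lik = np.sum(data - theta)       # d/dtheta sum log N(x; theta, 1)
        drift = eta * (grad_log_prior + grad_log_lik)
        noise = np.sqrt(2.0 * eta) * rng.normal() # noise keeps theta fluctuating
        theta += drift + noise
        samples[t] = theta
    return samples

data = np.random.default_rng(1).normal(2.0, 1.0, 20)
samples = langevin_posterior_samples(data)[1000:]  # discard burn-in

posterior_mean = data.sum() / (len(data) + 1)      # exact for this toy model
ml_estimate = data.mean()                          # ignores the prior
print(f"sample mean {samples.mean():.3f}, "
      f"exact posterior mean {posterior_mean:.3f}, "
      f"maximum likelihood {ml_estimate:.3f}")
```

The parameter never settles on a single value; its fluctuations trace out the posterior, whose mean is pulled from the maximum likelihood estimate toward the prior.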

A theoretical basis for efficient computations with noisy spiking neurons

Dec 18, 2014

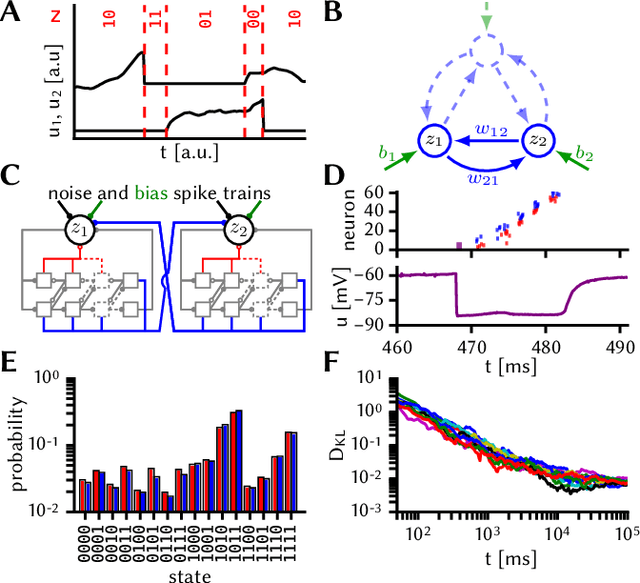

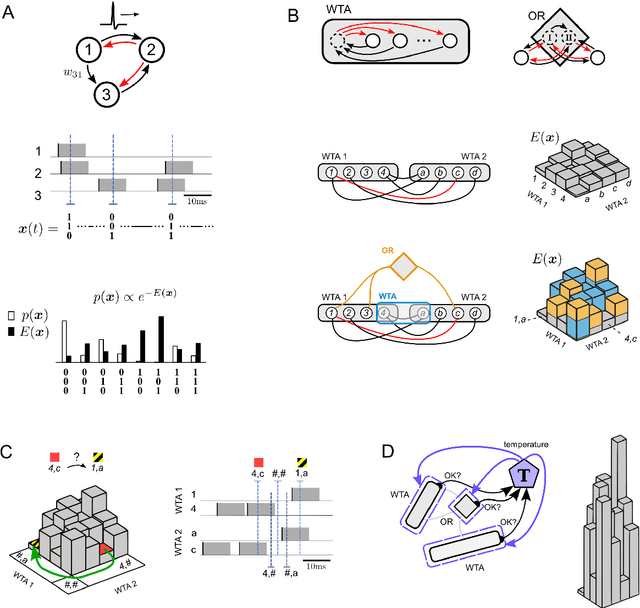

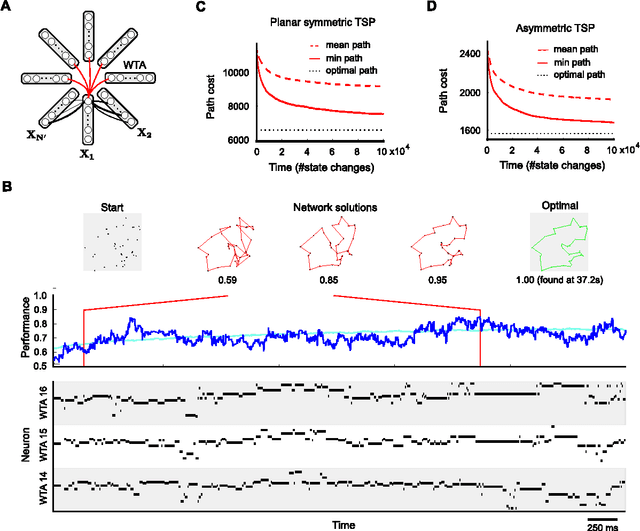

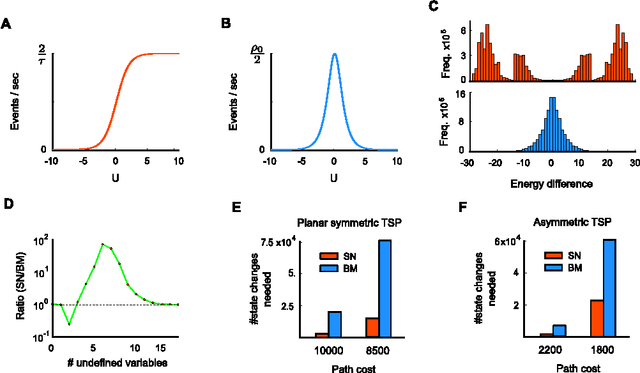

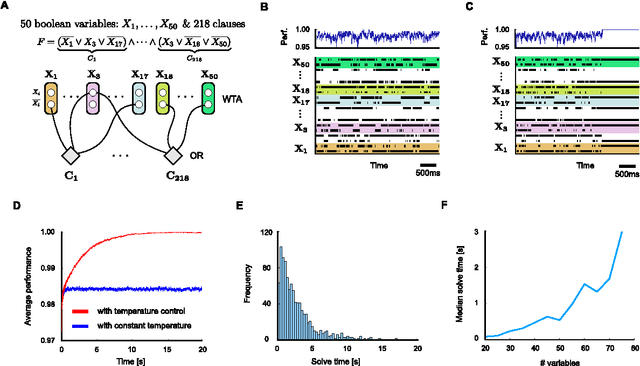

Abstract: Networks of neurons in the brain apply, unlike processors in our current generation of computer hardware, an event-based processing strategy, where short pulses (spikes) are emitted sparsely by neurons to signal the occurrence of an event at a particular point in time. Such spike-based computations promise to be substantially more power-efficient than traditional clocked processing schemes. However, it turned out to be surprisingly difficult to design networks of spiking neurons that are able to carry out demanding computations. We present here a new theoretical framework for organizing computations of networks of spiking neurons. In particular, we show that a suitable design enables them to solve hard constraint satisfaction problems from the domains of planning/optimization and verification/logical inference. The underlying design principles employ noise as a computational resource. Nevertheless, the timing of spikes (rather than just spike rates) plays an essential role in the resulting computations. Furthermore, one can demonstrate for the Traveling Salesman Problem a surprising computational advantage of networks of spiking neurons compared with traditional artificial neural networks and Gibbs sampling. The identification of such an advantage has been a well-known open problem.
