Wojciech Skaba

Binary Space Partitioning as Intrinsic Reward

Apr 10, 2018

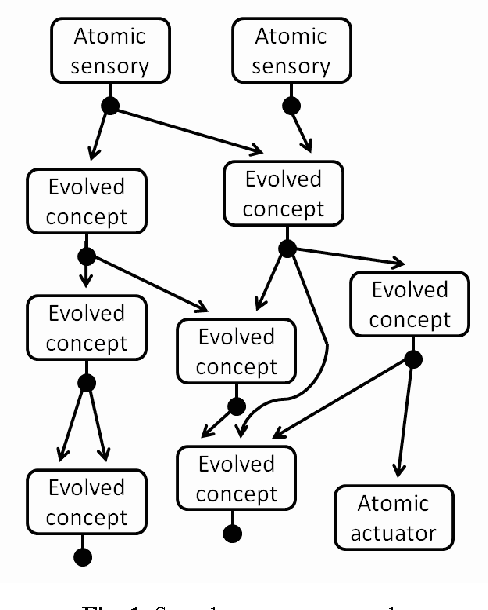

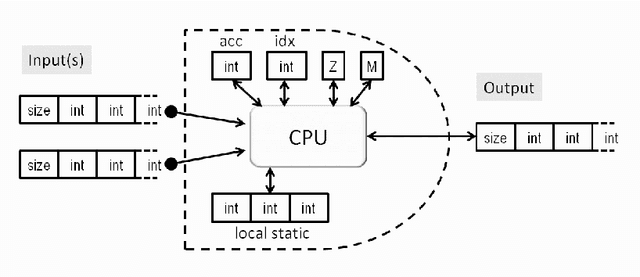

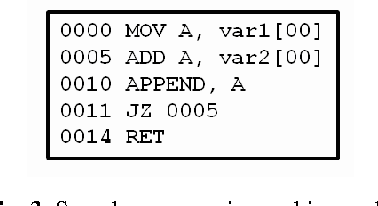

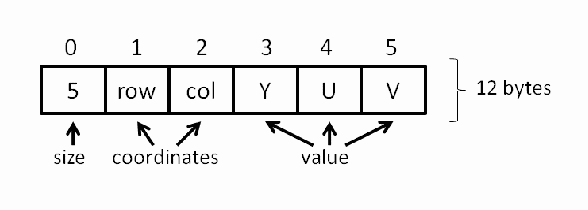

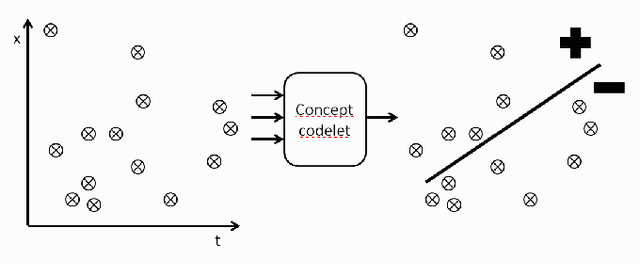

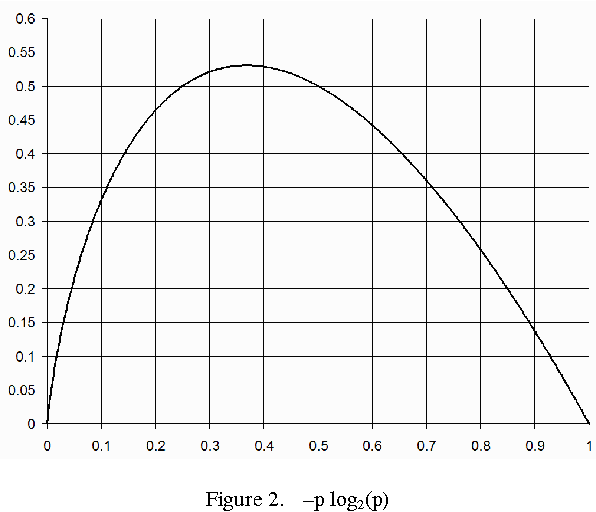

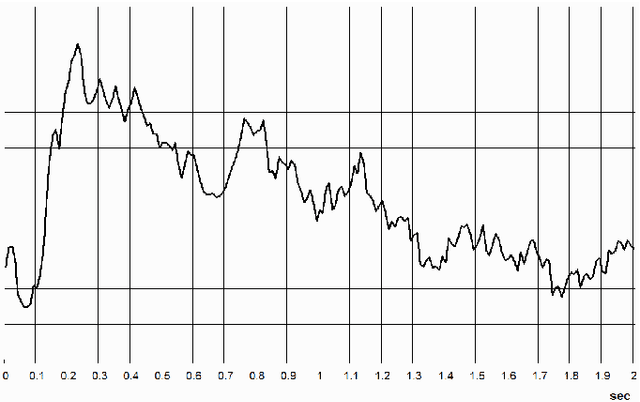

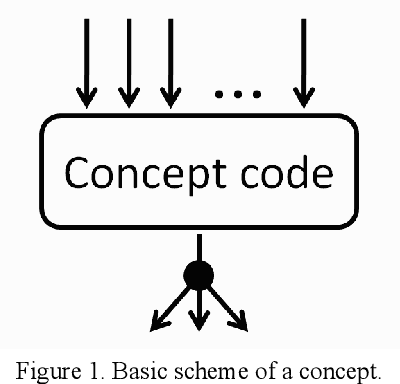

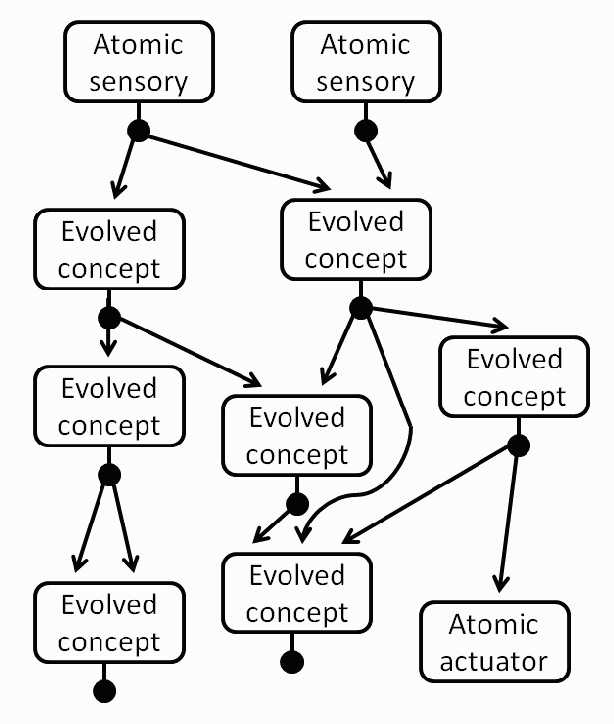

Abstract: To learn from the overwhelming flow of raw and noisy sensory data, an autonomous agent embodied in a humanoid robot has to effectively reduce the high spatio-temporal dimensionality of that data. In this paper we propose a novel method of unsupervised feature extraction and selection with binary space partitioning, followed by a computation of information gain that is interpreted as intrinsic reward and then applied as the immediate reward signal for reinforcement learning. The space partitioning is executed by tiny codelets running on a simulated Turing Machine. The features are represented by concept nodes arranged in a hierarchy, in which those of a lower level become the input vectors of a higher level.

* AGI 2012
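
The abstract above does not give the reward formula, so the following is only a minimal sketch of one way an information-gain reward over a binary partition could look. It assumes the reward is the self-information (in bits) of the cell an input falls into, with cell probabilities estimated from smoothed counts. The class name `BinaryPartition`, the predicate, and the smoothing are hypothetical and not taken from the paper.

```python
import math
from typing import Callable, Sequence


class BinaryPartition:
    """Hypothetical stand-in for a codelet: a predicate splitting inputs in two."""

    def __init__(self, predicate: Callable[[Sequence[float]], bool]) -> None:
        self.predicate = predicate
        # Assumption: start each cell at a count of 1 (Laplace smoothing)
        # so that no cell ever has probability zero.
        self.counts = [1, 1]

    def observe(self, x: Sequence[float]) -> float:
        """Return the self-information (bits) of x's cell, then update counts."""
        cell = int(bool(self.predicate(x)))
        p = self.counts[cell] / sum(self.counts)
        self.counts[cell] += 1
        return -math.log2(p)

    def entropy(self) -> float:
        """Entropy (bits) of the current cell distribution; at most 1 bit."""
        total = sum(self.counts)
        return -sum((c / total) * math.log2(c / total) for c in self.counts)


# Usage: a partition on the first input component.
partition = BinaryPartition(lambda v: v[0] > 0.5)
first_reward = partition.observe([0.1])   # both cells equally likely so far
for _ in range(7):
    common_reward = partition.observe([0.1])
rare_reward = partition.observe([0.9])    # rarely seen cell
```

Under these assumptions, an input landing in a rarely visited cell yields a larger reward than one landing in a frequently visited cell, which is the sense in which the partition's information gain can act as an intrinsic reward.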

Evaluating Actuators in a Purely Information-Theory Based Reward Model

Apr 10, 2018

Abstract: AGINAO builds its cognitive engine by applying self-programming techniques to create a hierarchy of interconnected codelets, tiny pieces of code executed on a virtual machine. These basic processing units are evaluated for their applicability and fitness with a notion of reward calculated from the self-information gain of a binary partitioning of the codelet's input state-space. This approach, however, is useless for the evaluation of actuators. Instead, a model is proposed in which actuators are evaluated by measuring the impact that an activation of an effector, and consequently the feedback from the robot sensors, has on the average reward received by the processing units.

* IEEE SSCI 2013, Singapore
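
The actuator model in the abstract above can be illustrated with a small sketch. It assumes that "impact" is the difference between the mean reward that processing units receive after an effector activation and their mean reward over a baseline period. The function name `actuator_impact` and the difference-of-means measure are hypothetical; the paper may define the impact differently.

```python
from statistics import mean
from typing import Sequence


def actuator_impact(baseline: Sequence[float], after: Sequence[float]) -> float:
    """Change in mean processing-unit reward attributed to one activation.

    baseline: rewards collected by processing units without the activation.
    after:    rewards collected once sensor feedback from the activation arrives.
    """
    if not baseline or not after:
        raise ValueError("both reward samples must be non-empty")
    return mean(after) - mean(baseline)


# Usage: a positive score suggests the activation led to more informative input.
score = actuator_impact(baseline=[0.2, 0.4], after=[0.5, 0.7])
```

A positive score would favor the actuator, while a score near zero would indicate that the activation had no measurable effect on what the processing units learned.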

The AGINAO Self-Programming Engine

Apr 10, 2018

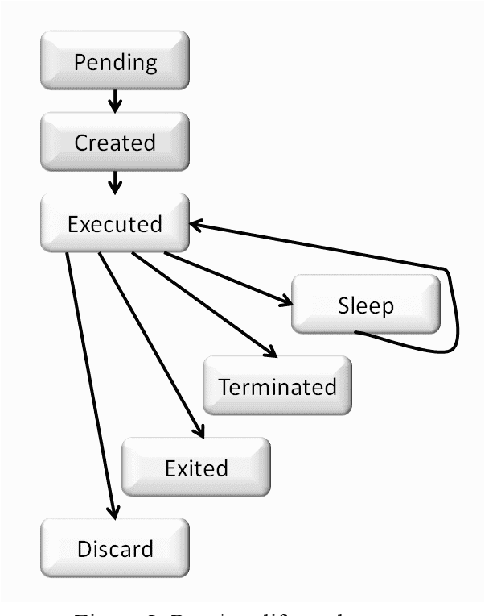

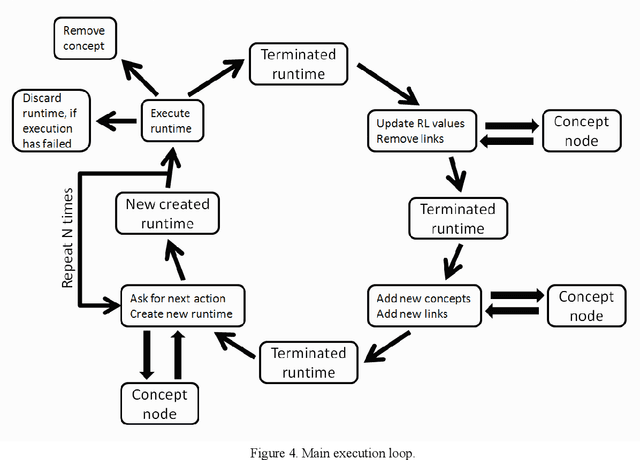

Abstract: AGINAO is a project to create a human-level artificial general intelligence system (HL AGI) embodied in the Aldebaran Robotics NAO humanoid robot. The dynamic and open-ended cognitive engine of the robot is represented by an embedded, multi-threaded control program that is self-crafted rather than hand-crafted and is executed on a simulated Universal Turing Machine (UTM). The actual structure of the cognitive engine emerges as a result of placing the robot in a natural preschool-like environment and running a core start-up system that executes self-programming of the cognitive layer on top of the core layer. The data from the robot's sensory devices supply the training samples for the machine learning methods, while the commands sent to actuators enable testing hypotheses and obtaining feedback. The individual self-created subroutines are supposed to reflect the patterns and concepts of the real world, while the overall program structure reflects the spatial and temporal hierarchy of the world's dependencies. This paper focuses on the details of the self-programming approach, limiting the discussion of the applied cognitive architecture to a necessary minimum.

* Journal of Artificial General Intelligence
