Wenshan Cai

Real-time Non-line-of-sight Imaging with Two-step Deep Remapping

Jan 26, 2021

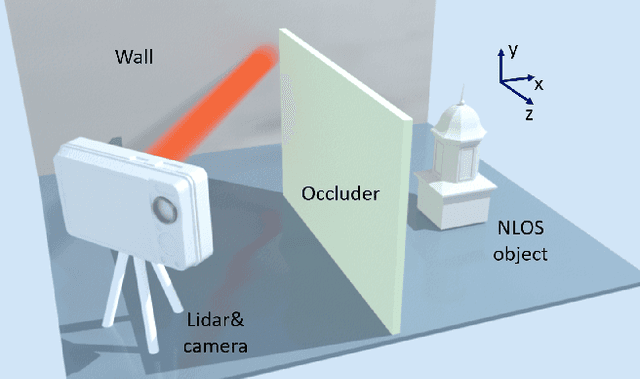

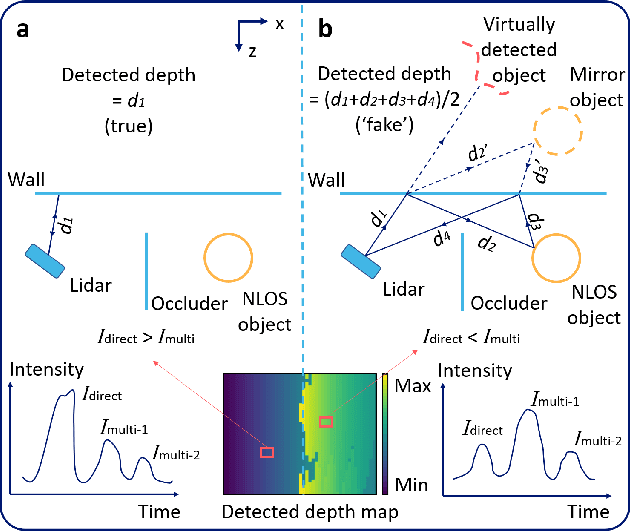

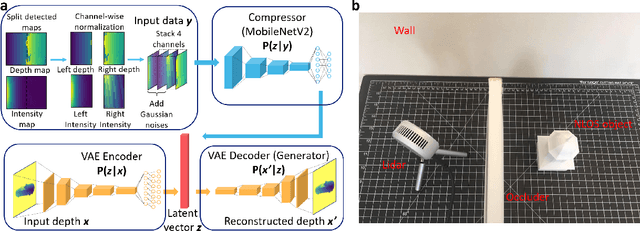

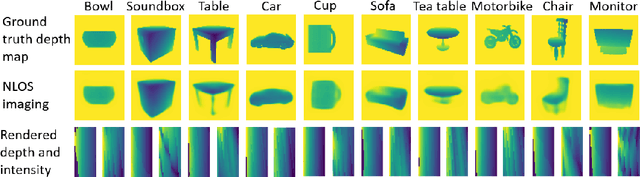

Abstract: Conventional imaging only records the photons sent directly from the object to the detector, while non-line-of-sight (NLOS) imaging takes the indirect light into account. To explore the NLOS surroundings, most NLOS solutions employ a transient scanning process, followed by a back-projection-based algorithm to reconstruct the NLOS scenes. However, transient detection requires sophisticated apparatus, with long scanning times and low robustness to ambient conditions, and the reconstruction algorithms typically take tens of minutes and place high demands on memory and computational resources. Here we propose a new NLOS solution to address these shortcomings, with innovations in both the detection equipment and the reconstruction algorithm. We apply an inexpensive commercial Lidar for detection, with much higher scanning speed and better compatibility with real-world imaging tasks. Our reconstruction framework is deep-learning-based, consisting of a variational autoencoder and a compression neural network. The generative feature and the two-step reconstruction strategy of the framework guarantee high fidelity of NLOS imaging. The overall detection and reconstruction process allows for real-time responses, with state-of-the-art reconstruction performance. We have experimentally tested the proposed solution on both a synthetic dataset and real objects, and further demonstrated that our method is applicable to full-color NLOS imaging.

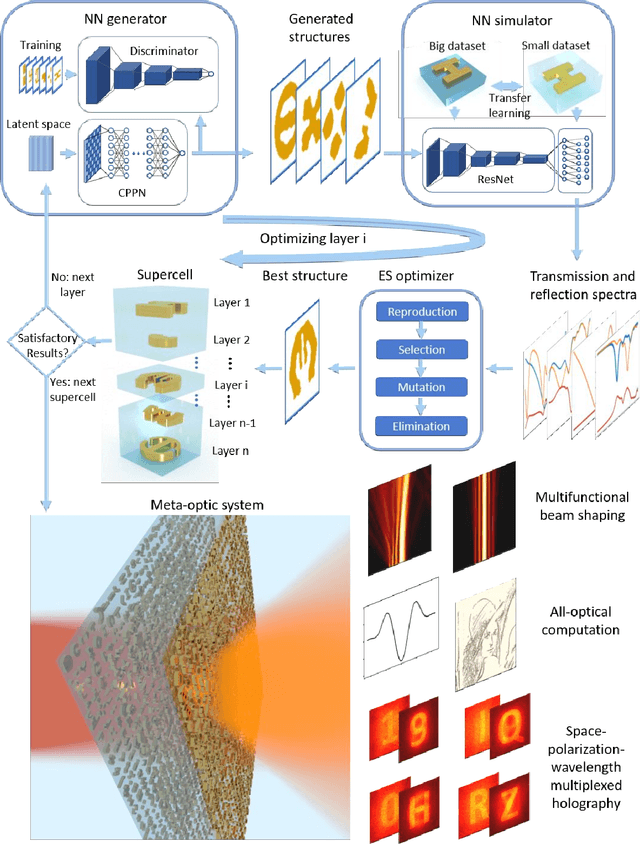

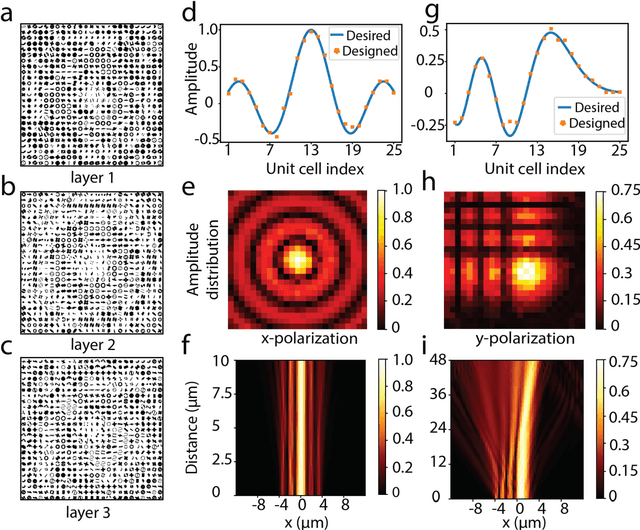

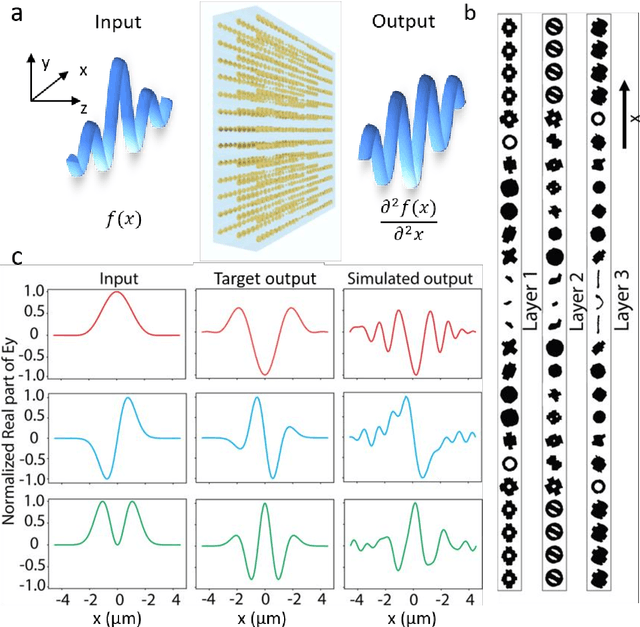

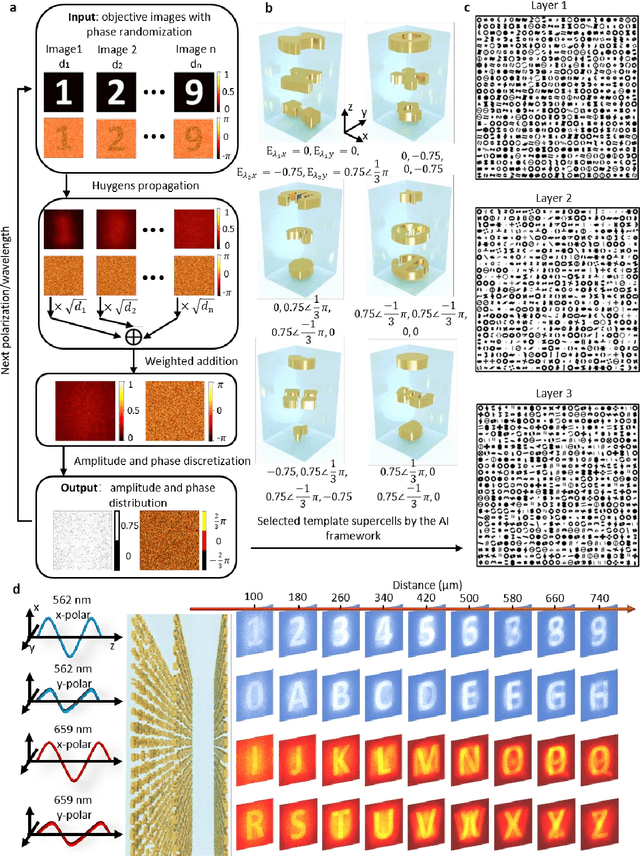

Multifunctional Meta-Optic Systems: Inversely Designed with Artificial Intelligence

Jun 30, 2020

Abstract: Flat optics foresees a new era of ultra-compact optical devices, where metasurfaces serve as the foundation. Conventional designs of metasurfaces start with a certain structure as the prototype, followed by an extensive parametric sweep to accommodate the requirements on the phase and amplitude of the emerging light. Regardless of how computationally expensive the process is, a predefined structure can hardly realize independent control over the polarization, frequency, and spatial channels, which hinders the potential of metasurfaces to be multifunctional. Moreover, achieving multiple complicated functions calls for designing a meta-optic system with multiple cascaded layers of metasurfaces, which introduces super-exponential complexity. In this work we present an artificial intelligence framework for designing multilayer meta-optic systems with multifunctional capabilities. We demonstrate examples of a polarization-multiplexed dual-functional beam generator, a second-order differentiator for all-optical computation, and a space-polarization-wavelength multiplexed hologram. These examples are barely achievable by single-layer metasurfaces and unattainable by traditional design processes.

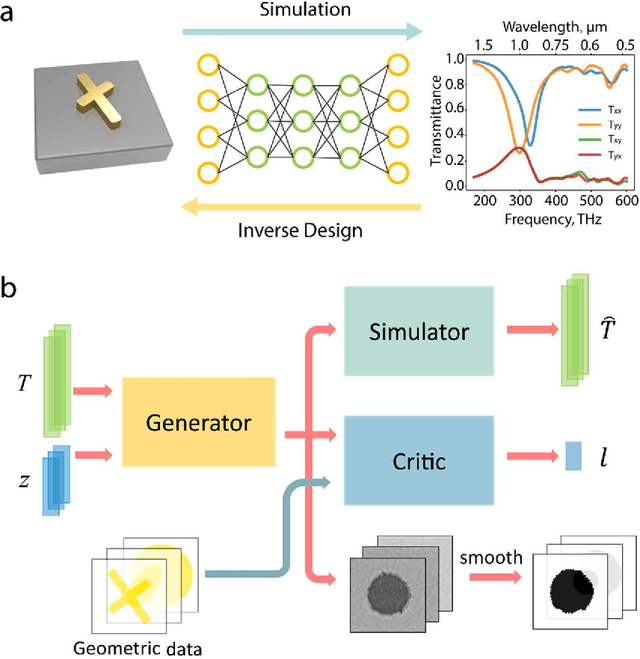

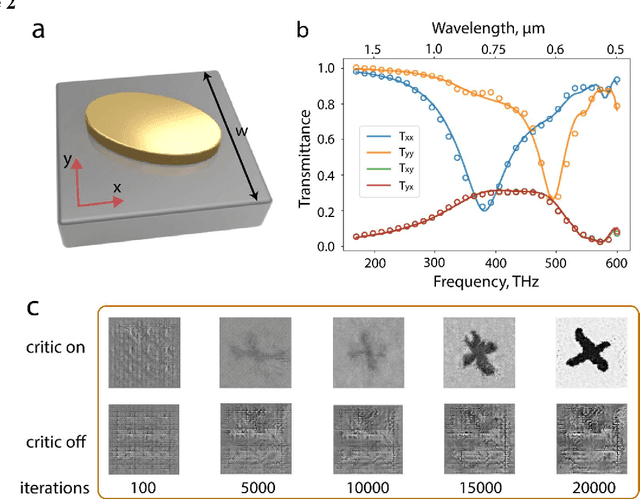

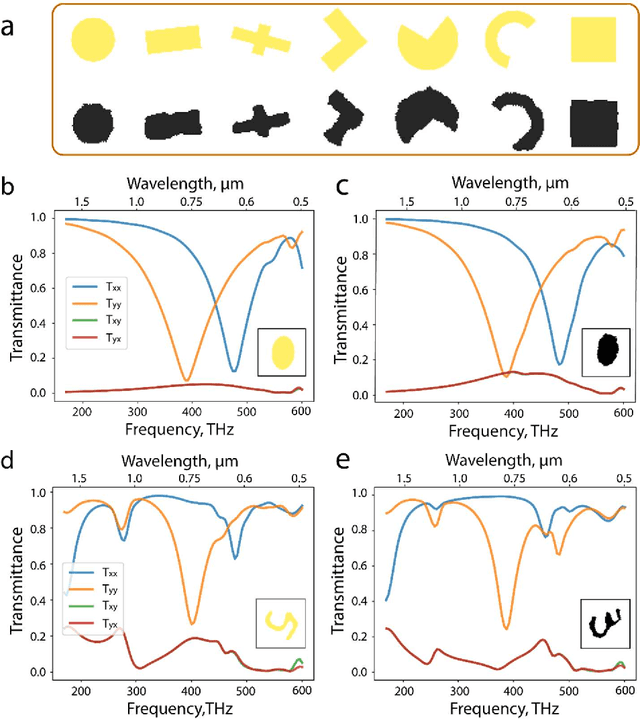

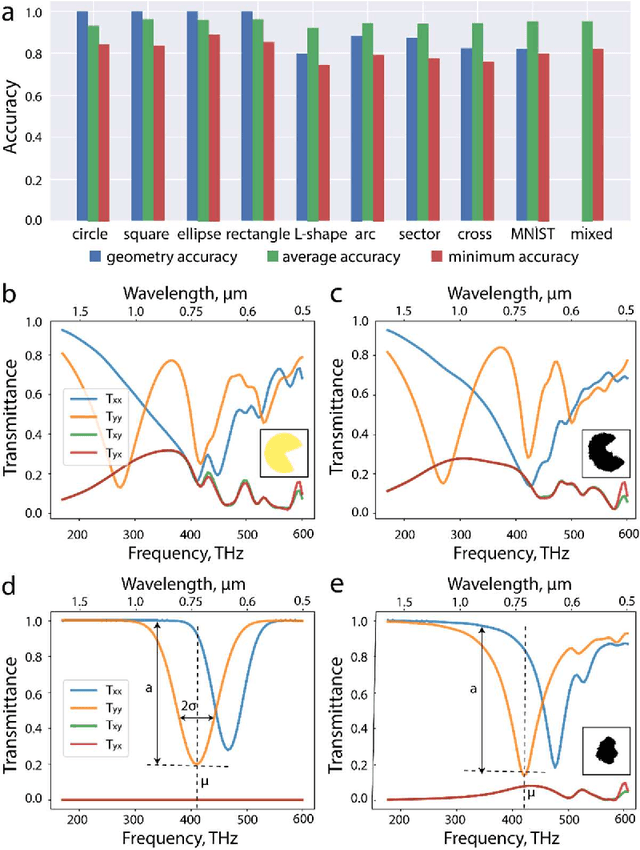

A Generative Model for Inverse Design of Metamaterials

May 25, 2018

Abstract: The advent of two-dimensional metamaterials in recent years has ushered in a revolutionary means to manipulate the behavior of light on the nanoscale. The effective parameters of these architected materials render unprecedented control over the optical properties of light, thereby eliciting previously unattainable applications in flat lenses, holographic imaging, and emission control among others. The design of such structures, to date, has relied on the expertise of an optical scientist to guide a progression of electromagnetic simulations that iteratively solve Maxwell's equations until a locally optimized solution can be attained. In this work, we identify a solution to circumvent this intuition-guided design by means of a deep learning architecture. When fed an input set of optical spectra, the constructed generative network assimilates a candidate pattern from a user-defined dataset of geometric structures in order to match the input spectra. The generated metamaterial patterns demonstrate high fidelity, yielding equivalent optical spectra at an average accuracy of about 0.9. This approach reveals an opportunity to expedite the discovery and design of metasurfaces for tailored optical responses in a systematic, inverse-design manner.
